In Wendell’s recent GPU pass-through live stream, I noticed he mentioned that there isn’t a reliable solution for the AMD GPU reinitialization problem: once a Windows guest VM on Linux is shut down, the AMD GPU refuses to reinitialize, and the only fix is to reboot the host machine.

In my experience, this actually seemed rather random: sometimes the VM would boot back up, sometimes not. I found that the trigger was not the VM shutdown itself, but the host machine entering a sleep state while the VM was shut down after having run at least once.

I suspect the root issue has less to do with how Linux treats the GPU and more to do with how Windows behaves as a guest. Mainly, the Windows guest OS does not appear to send the GPU a shut-down or start-up signal while running in a VM. I assume this is because on bare metal, when Windows shuts down, the motherboard/PSU actually cuts power to the GPU, but in a guest VM this does not happen, so the GPU is left in an initialized state. Then, if the host machine enters a low-power state and power to the GPU is significantly reduced, the GPU is non-responsive after the host wakes up.

Anyway, I found a fix/work-around. I’ve borrowed bits from around the web for it. I don’t write how-tos often, so bear with me.

(Note: all of these steps take place inside the Windows guest OS.)

Step 1

We are going to need the Windows Device Console (DevCon).

It is bundled with the Windows Driver Kit, which you can download and install like a chump, but you don’t need all the added bloat.

So instead, download and install Chocolatey, so you can install DevCon from the terminal like a bad-ass. Follow the instructions on the Chocolatey website to install it.

Once Chocolatey is installed, open a Windows command prompt and run this command to install DevCon:

```bat
choco install devcon.portable
```

DevCon is fairly straightforward to use: it enables or disables hardware devices, runs in the terminal, and can easily be called from a bat script. The syntax we need is:

```bat
devcon64.exe enable|disable "<device_id>"
```
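If you would rather drive DevCon from a script than type the command by hand, here is a minimal Python sketch of the same syntax. The helper name `devcon_command` is hypothetical; it only builds the argument list and rejects anything other than `enable`/`disable`. It assumes `devcon64.exe` is on the PATH (Chocolatey normally shims the executables it installs into its own bin folder), and it only actually runs DevCon on Windows when you pass `--run`.

```python
import subprocess
import sys

# Only these two DevCon verbs are used in this guide.
VALID_ACTIONS = {"enable", "disable"}


def devcon_command(action: str, hardware_id_pattern: str) -> list[str]:
    """Build the argument list for: devcon64.exe enable|disable "<device_id>"."""
    if action not in VALID_ACTIONS:
        raise ValueError(f"action must be one of {sorted(VALID_ACTIONS)}, got {action!r}")
    # Passing a list to subprocess (no shell) means cmd.exe never sees the '&'
    # in the ID, which is the same problem the quotes solve in a bat file.
    return ["devcon64.exe", action, hardware_id_pattern]


if __name__ == "__main__":
    cmd = devcon_command("disable", r"PCI\VEN_1002&DEV_67DF*")
    print(cmd)
    # Run DevCon only on Windows and only when asked; it needs an
    # elevated (Administrator) prompt to change device state.
    if sys.platform == "win32" and "--run" in sys.argv:
        subprocess.run(cmd, check=True)
```

Keeping the command as a list rather than a single string is the main design choice here: it sidesteps quoting rules for the `&` characters entirely.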

Step 2

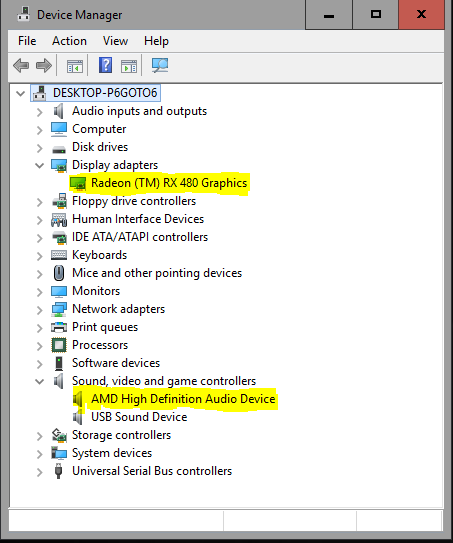

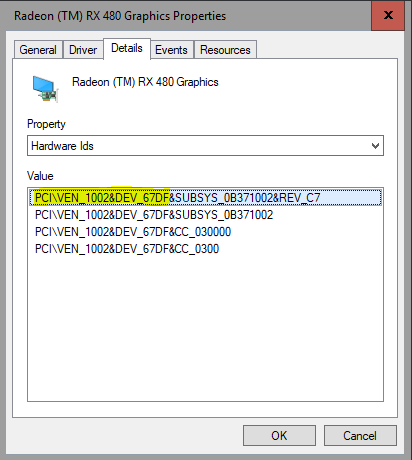

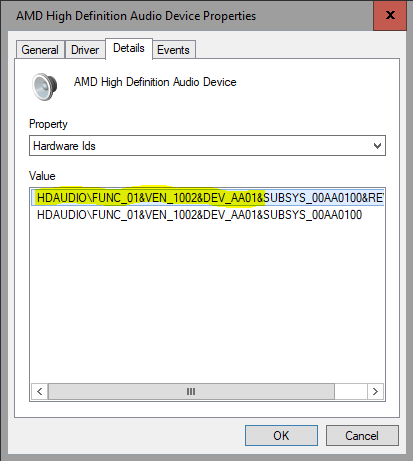

Now go to Device Manager and make a note of the Hardware IDs for the GPU and for the GPU’s sound device (right-click the device, choose Properties, open the Details tab and pick Hardware Ids from the Property list). You will notice that each device has multiple hardware IDs. We are only going to use the first part of them, up to the point where the IDs start to differ, and end it with a wildcard, which DevCon accepts. This way we will be able to de-initialize the entire device. Do not forget the sound device: if you do, the Windows guest will become unstable over repeated reboots, and games and 3D applications will start crashing at random. I’ve included some example images.
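Picking the shared part by eye is easy, but the rule "keep the leading fields that all the IDs agree on, then add a wildcard" can also be written down. This is a minimal Python sketch; the function name `devcon_pattern` is hypothetical, and the SUBSYS/REV values in the example list are made up for illustration (yours will differ). Splitting on `&` makes sure the cut always lands on a field boundary rather than in the middle of a value.

```python
def devcon_pattern(hardware_ids: list[str]) -> str:
    """Return the leading '&'-separated fields shared by all IDs, plus '*'."""
    if not hardware_ids:
        raise ValueError("need at least one hardware ID")
    split_ids = [hw_id.split("&") for hw_id in hardware_ids]
    common = []
    # Walk the fields left to right and stop at the first one that differs.
    for fields in zip(*split_ids):
        if all(field == fields[0] for field in fields):
            common.append(fields[0])
        else:
            break
    if not common:
        raise ValueError("hardware IDs share no common leading field")
    return "&".join(common) + "*"


# Example GPU IDs as Device Manager lists them (SUBSYS/REV values invented).
gpu_ids = [
    r"PCI\VEN_1002&DEV_67DF&SUBSYS_0B371002&REV_C7",
    r"PCI\VEN_1002&DEV_67DF&SUBSYS_0B371002",
    r"PCI\VEN_1002&DEV_67DF&CC_030000",
    r"PCI\VEN_1002&DEV_67DF&CC_0300",
]
print(devcon_pattern(gpu_ids))
```

With these four IDs the shared fields are `PCI\VEN_1002` and `DEV_67DF`, so the result is the same `PCI\VEN_1002&DEV_67DF*` pattern used in the bat files.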

Step 3

We are now going to create two bat files. I created them under C:\ so they will be easy to find later. Maybe not the best practice, but whatever.

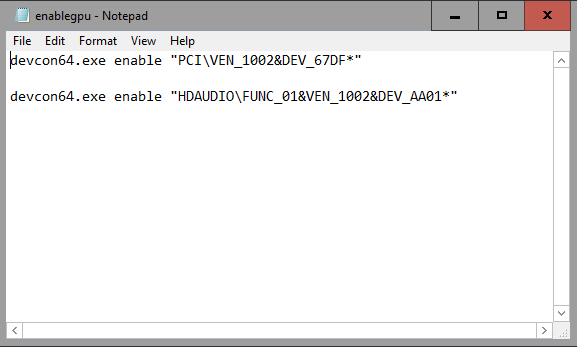

You can use Notepad to create the two bat files. I called the first one enablegpu.bat and gave it these two lines:

```bat
@echo off
rem Enable the GPU and its sound device (replace these IDs with your own)
devcon64.exe enable "PCI\VEN_1002&DEV_67DF*"
devcon64.exe enable "HDAUDIO\FUNC_01&VEN_1002&DEV_AA01*"
```

This bat file uses DevCon to enable the GPU. Use the first part of the GPU hardware ID that you noted in Device Manager; it will be different on your system, depending on your GPU model/AIB. Make sure to end it with a wildcard asterisk, so it matches every hardware ID variant of the device. Do not forget to include the GPU’s sound device, which has a separate hardware ID.

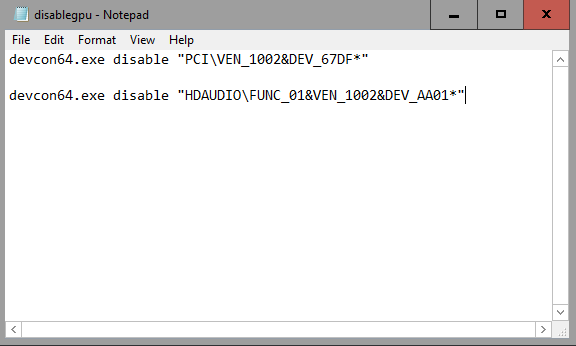

Now create the second bat file. I called this one disablegpu.bat and saved it in the same location as the first one. It is identical to the first bat file, except that it disables the GPU instead of enabling it.

```bat
@echo off
rem Disable the GPU and its sound device (replace these IDs with your own)
devcon64.exe disable "PCI\VEN_1002&DEV_67DF*"
devcon64.exe disable "HDAUDIO\FUNC_01&VEN_1002&DEV_AA01*"
```
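Since the two bat files differ only in the DevCon verb, you could also generate both from one list of patterns. This is a minimal Python sketch; the names `bat_lines`, `write_bat_files` and `PATTERNS` are hypothetical, and the patterns are the ones from my system, so swap in your own. It writes the files with CRLF line endings, which is how Windows batch files are normally saved.

```python
from pathlib import Path

# The two patterns used in the bat files; replace them with your own.
PATTERNS = [
    r"PCI\VEN_1002&DEV_67DF*",
    r"HDAUDIO\FUNC_01&VEN_1002&DEV_AA01*",
]


def bat_lines(action: str, patterns: list[str]) -> list[str]:
    """One DevCon line per pattern; the quotes stop cmd.exe from splitting on '&'."""
    if action not in ("enable", "disable"):
        raise ValueError(f"unsupported action: {action!r}")
    return [f'devcon64.exe {action} "{pattern}"' for pattern in patterns]


def write_bat_files(folder: Path, patterns: list[str]) -> None:
    """Write enablegpu.bat and disablegpu.bat into folder."""
    for action in ("enable", "disable"):
        target = folder / f"{action}gpu.bat"
        text = "\r\n".join(bat_lines(action, patterns)) + "\r\n"
        # Write bytes so no newline translation turns CRLF into CR CR LF.
        target.write_bytes(text.encode("ascii"))
```

On Windows, `write_bat_files(Path("C:/"), PATTERNS)` would recreate both files in `C:\` (writing to the drive root may need an elevated prompt).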

Step 4

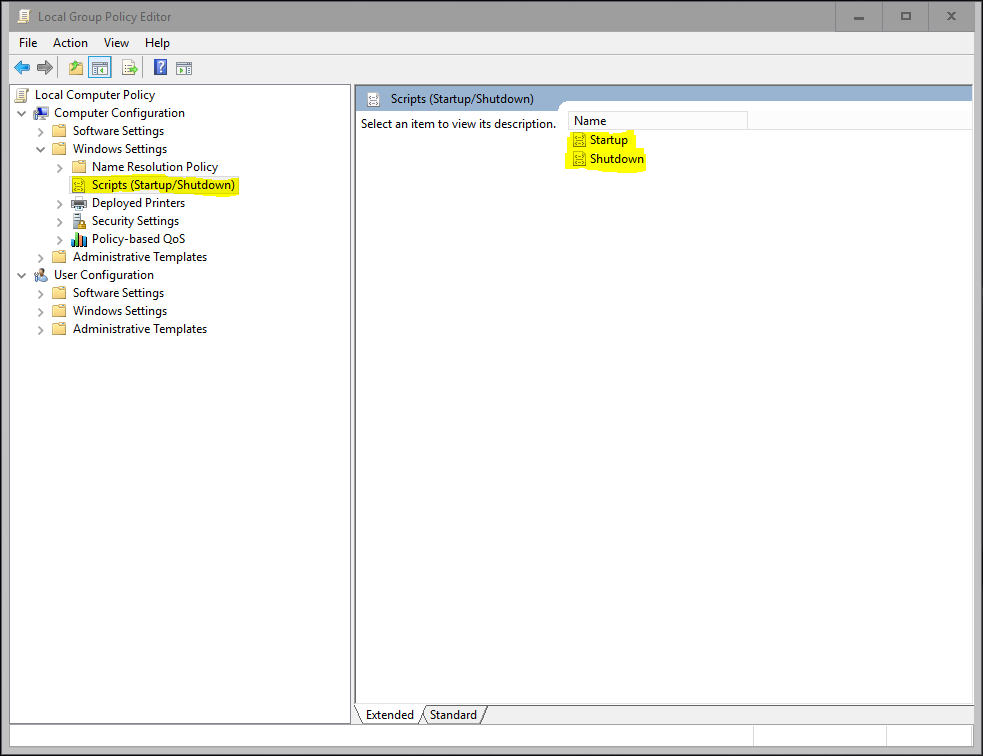

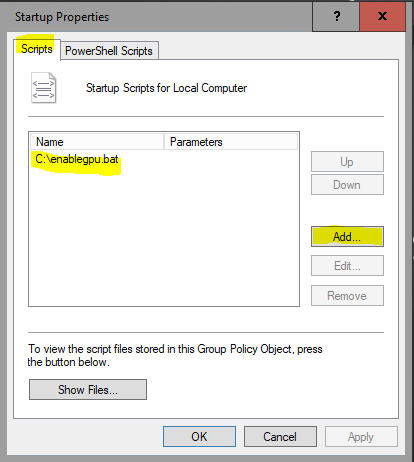

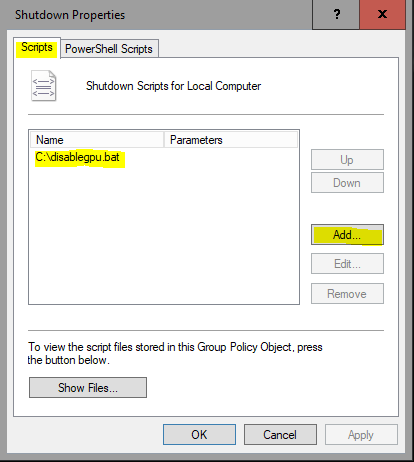

Add the two bat files to the Windows Group Policy Startup and Shutdown scripts.

You can open the Local Group Policy Editor by running gpedit.msc from the Windows Run dialog (Win+R). Note that gpedit.msc is only included in the Pro, Enterprise and Education editions of Windows.

Then navigate to Local Computer Policy > Computer Configuration > Windows Settings > Scripts (Startup/Shutdown) and open Startup. Click the Add button and select your enablegpu.bat script. Repeat the process for disablegpu.bat using the Shutdown item in the same Scripts (Startup/Shutdown) section.

Conclusion

Now you should be set as far as user-initiated resets of your VM are concerned: your VM should no longer fail to start after you explicitly reset it, and it should stay stable over subsequent resets. I have noticed, however, that restarts triggered by Windows Update still seem to cause issues, perhaps because these scripts aren’t run during them. You’ll probably want to disable automatic updates to prevent these unwanted reboots.

I didn’t mean to reset it from the host. What I mean is would you be able to deinitialise and reset the GPU in the guest and then be able to initialise it in the host. If you could then deinitialise and reset it in the host, would you be able to initialise it again in the guest?