Question: Has anyone had success getting ASUS WRX90E-SAGE SE to pass POST with more than 4 GPUs, particularly nvidia 4090?

Solution: Same as the thread “WRX90e won’t boot with 6 GPUs”. It recommends setting Discrete USB4 Support = Disabled, and this worked for me on BIOS v0404 (July 2024).

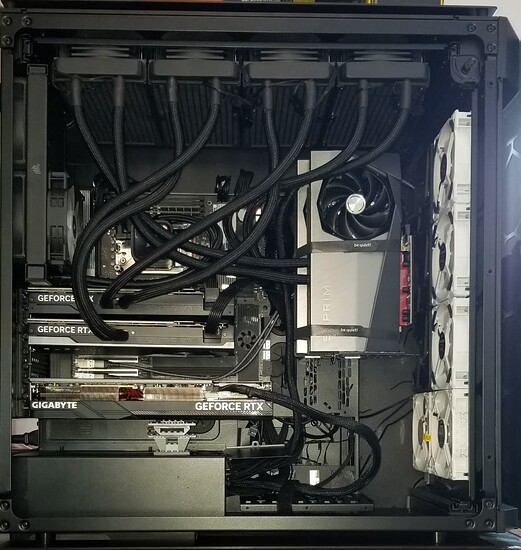

Background: I built something like this earlier this year with ASRock TRX90 and had no problem, but it only had 3 GPUs. This DIY deep learning workstation build has 2 PSUs (2800W total, on different dedicated mains circuits in the USA) and uses the method described in the ASUS manual, which uses the three cables provided by ASUS (a Y-cable and two 8-pin cables) to connect the PSUs to the mobo. We undervolt the 4090s to 375W to stay under the power budget.

4 GPUs are installed without any problems (passes POST, BIOS 0404 is happy, boots fine to Ubuntu Server 24.04, and nvidia-smi shows the expected 4 GPUs). Two GPUs are in slots 1 and 3. Two GPUs are on PCIe extenders (with the BIOS set to PCIe Gen 3) in slots 5 and 6. These GPUs work as expected, are visible in the OS, etc. The 4 GPUs are spread across the 2 PSUs, and the PSUs have enough power available to supply a 5th GPU.

All GPUs have been proven to operate properly on another PC, using Windows (Aesthetic Tip: while testing on Windows, I used Mystic Light to turn off the LEDs, and the GPUs keep that state when moved to the Ubuntu workstation). I use HDMI to the monitor (I have not tried DP).
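For anyone who wants to script the "nvidia-smi shows the expected 4 GPUs" check, here is a minimal Python sketch. It assumes the 375W cap is applied as an nvidia-smi power limit (for example with `nvidia-smi -pl 375`), which the post does not state explicitly. The helper names `parse_gpu_rows`, `check_gpus`, and `query_gpus` are made up for illustration.

```python
import subprocess

# Fields requested from nvidia-smi, in the order they appear in each CSV row.
QUERY_FIELDS = ["index", "name", "pci.bus_id", "power.limit"]


def parse_gpu_rows(csv_text):
    """Parse `nvidia-smi --format=csv,noheader,nounits` output into dicts."""
    gpus = []
    for line in csv_text.strip().splitlines():
        parts = [p.strip() for p in line.split(",")]
        if len(parts) != len(QUERY_FIELDS):
            continue  # skip anything that does not look like a GPU row
        index, name, bus_id, power_limit = parts
        try:
            limit_w = float(power_limit)
        except ValueError:
            limit_w = None  # some cards report the limit as unavailable
        gpus.append(
            {"index": int(index), "name": name,
             "bus_id": bus_id, "power_limit_w": limit_w}
        )
    return gpus


def check_gpus(gpus, expected_count, max_power_w):
    """Return a list of human-readable problems (empty if all is well)."""
    problems = []
    if len(gpus) != expected_count:
        problems.append(f"expected {expected_count} GPUs, found {len(gpus)}")
    for gpu in gpus:
        limit = gpu["power_limit_w"]
        if limit is not None and limit > max_power_w:
            problems.append(
                f"GPU {gpu['index']} ({gpu['bus_id']}) limit {limit:.0f}W "
                f"exceeds {max_power_w:.0f}W"
            )
    return problems


def query_gpus():
    """Run nvidia-smi on the local machine and parse its output."""
    result = subprocess.run(
        ["nvidia-smi",
         "--query-gpu=" + ",".join(QUERY_FIELDS),
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_rows(result.stdout)
```

On the workstation, `check_gpus(query_gpus(), expected_count=4, max_power_w=375.0)` should return an empty list when all four cards are visible and capped. This only helps once the machine boots, so it cannot diagnose the POST failure itself.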

Symptom: Adding a fifth GPU, whether in slot 7, on a riser or extender, or in any other slot combination, results in the ASUS WRX90E-SAGE SE never passing POST.

Q-Code cycles through codes without stopping. The Q-LEDs light up the various options (CPU, DRAM, VGA, BOOT) without stopping.

The impact is that neither the Q-Code nor the Q-LED provides diagnostic information. The system never passes POST (even after a few hours) and therefore does not boot. BIOS is also not available in this state.

I have tried to isolate the symptom by using different slots and different known-good GPUs, including an nvidia 1050 to rule out power as the cause. Any combination of 4 GPUs, risers, slots, and PCIe Gen settings in BIOS works fine. The symptom occurs when adding the 5th GPU, regardless of where or how.

About me: I read manuals before buying parts and write a work plan before building. I am not a pro, but I have experience building DIY systems: many gaming PCs with Intel and AMD, and a few deep learning workstations with current Threadripper CPUs and multiple nvidia 4090 GPUs.

About the hardware:

https://www.asus.com/motherboards-components/motherboards/workstation/pro-ws-wrx90e-sage-se/techspec/

-

ASUS Pro WS WRX90E-SAGE SE EEB Workstation motherboard

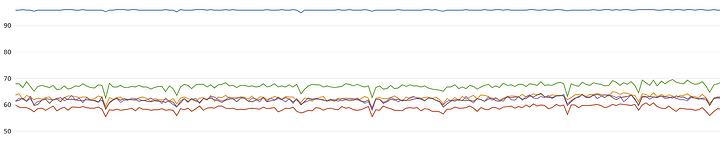

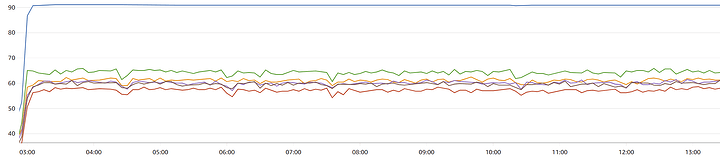

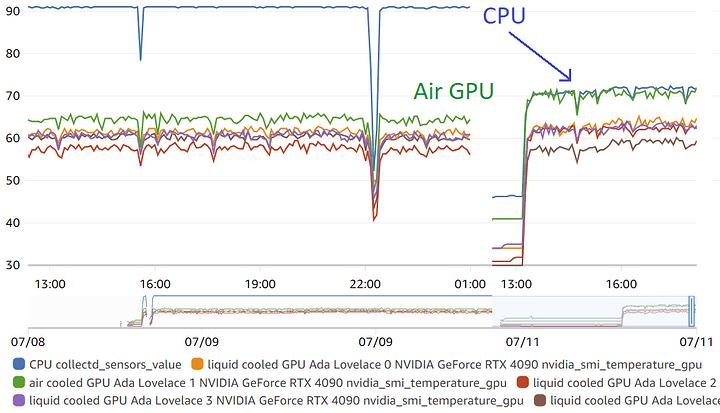

- FYI: This BIOS has overclock enabled by default and temperature capped at ~96C. This was confusing at first because, under heavy load, more cooling created higher clocks instead of lower temps. BIOS has controls for this.

-

Threadripper PRO 7975WX (32c/64t)

-

Corsair CPU liquid cooler

- Plan to add 360mm server grade AIO CPU cooler (not on TR 7000 QVL, but specs are good): Amazon.com

-

be quiet! Dark Power PRO 13 1600W ATX Titanium

- FYI: to obtain “single rail” behavior for this PSU, enable “overclock” with bridge “key” or physical switch

- plus extra: be quiet! BC072 12VHPWR Adapter Cable for Dark Power Pro

-

SilverStone Technology Extreme 1200R Platinum Cybenetics Platinum 1200W SFX-L

- FYI: This is a single rail PSU and single rail is required

- FYI: Use bridged cables that came with mobo to connect to 2nd PSU headers

- plus extra: SilverStone Technology PP14-EPS Dual EPS 8 pin (PSU) to 12+4 pin (GPU) 12VHPWR PCIe Gen5

-

NEMIX RAM 256GB (4X64GB) DDR5 5600MHZ PC5-44800

-

2 x 4TB Gen5 Team Group T-FORCE GE PRO M.2 2280 4TB PCIe Gen 5.0x4 with NVMe 2.0

-

2 x 4TB Gen5 Crucial T705 NVMe M.2 SSD

-

4 x MSI RTX 4090 SUPRIM LIQUID X 24G

-

1 x air cooled 4090 GPU

Note: the PSUs are different due to a space limitation in the case, but both are high end, single rail, devices that I have worked with before. This works fine.
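As a rough sanity check on the power question, the budget for five GPUs can be sketched in Python as below. The 375W per-GPU cap and the 1600W + 1200W PSU ratings come from this post. The 350W CPU figure is the rated TDP of the 7975WX (real draw may be higher with the BIOS overclock left enabled), and the 150W allowance for drives, RAM, fans, and pumps is purely an assumed placeholder.

```python
def power_budget(gpu_count, gpu_limit_w, cpu_w, other_w, psu_capacities_w):
    """Return (estimated load, total PSU capacity, headroom) in watts."""
    load = gpu_count * gpu_limit_w + cpu_w + other_w
    capacity = sum(psu_capacities_w)
    return load, capacity, capacity - load


load, capacity, headroom = power_budget(
    gpu_count=5,
    gpu_limit_w=375,                # per-GPU cap from the post
    cpu_w=350,                      # rated TDP; assumption for real-world draw
    other_w=150,                    # assumed allowance for everything else
    psu_capacities_w=[1600, 1200],  # the two PSUs listed above
)
print(f"load {load}W of {capacity}W, headroom {headroom}W")
```

This only looks at sustained totals. It ignores transient spikes and how the GPUs are split between the two PSUs, so each PSU still needs to be checked on its own.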

Manuals:

https://dlcdnets.asus.com/pub/ASUS/mb/SocketsTR5/Pro_WS_WRX90E-SAGE_SE/E23789_Pro_WS_WRX90E-SAGE_SE_EM_V2_WEB.pdf?model=Pro%20WS%20WRX90E-SAGE%20SE

https://dlcdnets.asus.com/pub/ASUS/mb/SocketsTR5/Pro_WS_WRX90E-SAGE_SE/E22761_AMD_TR5_Series_BIOS_manual_EM_WEB.pdf?model=Pro%20WS%20WRX90E-SAGE%20SE

BIOS is version 0404 – 2024/01/03 (nothing newer is available on the ASUS website at this time, July 2024). I note the BIOS manual does not exactly match the BIOS as seen on-screen, but this does not appear to be significant.

Edits made to bring post up to date with operational experience in case it helps someone else.