This is my personal blog page on the forum, inspired by others here and meant to make it easier for people to follow my projects or listen to my rants. Feel free to hit “watch” on this page if you want notifications. Also feel free to ask me stuff related to posts in this topic, below.

I reserved this spot for links related to tutorials / recurrent / reusable materials.

Void Linux update check (swaybar or desktop notifications)

Void Linux util to check services and programs that have been updated and need to be restarted to run the latest version.

iSCSI target on FreeBSD and iSCSI initiator on Void.

Some insight on large production ZFS configs.

RockPro64 NAS fully-decked build guide.

This message was originally the first reply on this thread, but I reserved the spot for more important stuff.

To begin, this is one of my latest rants in the lounge about ZFS with 70 disks (theorycrafting):

https://forum.level1techs.com/t/the-lounge-one-more-for-the-road-edition/166891/33065?u=biky

Edit for those who cannot view the lounge: in the future I will try to either link to topics / replies that can be publicly viewed or, like in this case, quote them word for word.

Quick rant: it is a bit late, since System76 forked Ubuntu a long time ago by now (in terms of computer years), but I just realized that Pop!_OS could have collaborated with Linux Mint team instead of rolling their own OS and maybe helped improve that. I did read that Pop!_OS is looking to do its own DE written in Rust and I understand they’d want a bit more control over their own desktop, but I see no reason why they couldn’t have just done a Linux Mint Pop! spin.

I mean sure, the distros have different goals, but I think they could have worked together. Unless I’m missing something important for why they just couldn’t and why System76 had to fork Ubuntu instead of working alongside Mint devs. I think combining their efforts and using the same repos, with maybe at most having an additional Pop! repo checkbox at first startup could have been a better option than working by themselves. I’m just guessing though. Combining the Mint and Pop!_OS resources (like servers, binary repositories, code versioning repository etc.) might have improved the main project and might have benefited both System76 and Mint teams. Maybe to the point that Linux Mint Pop! Spin could have been a better choice than Ubuntu Mate (which is no easy feat IMO, Mate is among the best Ubuntus out there, if not the best).

I usually am not the person to encourage projects to merge or combine efforts, especially because I find different distros with different goals to be genuinely good (more choice is pretty much always good). This is the only exception where I personally don’t see the reason behind Pop!_OS’s existence. Heck, I think Feren, Zorin and KDE Neon have a purpose and I support their existence, even if I use none of them. It’s just that Pop!_OS and Linux Mint are so similar in their target user base that I think we could see a merger between them and maybe 2 flavors (Cinnamon and Pop! RustyDE variants).

I especially think System76 using Mint would be a fantastic idea, because it would not be the only system manufacturer making use of Mint. There are other manufacturers who use Mint, like Compulab, who make the awesome Mintboxes (passively-cooled mini-PCs), and I think there are others too. The projects are so similar that both Linux Mint and Pop!_OS reject snaps and have added flatpak support, yet both still maintain apt as their main package manager.

Looking online for “Linux Mint vs Pop!_OS” returns quite a few results, meaning that people do see the similarities and the potentially similar target audiences of the two distros.

Looking even more online, it appears that Pop!_OS is usually based on the latest Ubuntu release, while Mint is usually based on Ubuntu LTS. This could be a good enough reason for the projects to diverge, but I think something could be worked out IMO.

Thanks for coming to my TED Talk.

I have to address some stuff on Proxmox Admin Guide:

https://pve.proxmox.com/pve-docs/pve-admin-guide.html

`pmxcfs` is probably the only reason why I had such a pain with Proxmox these past few months, because once a cluster has failed, you can't easily restore it or modify it or exit the cluster without having fixed the quorum. No quorum means pmxcfs gets locked up. I think the devs believe this to be a feature, so that the cluster never gets screwed up. But I beg to differ. I find it to be hand-holding the user. I believe software should allow the users to do dumb stuff, not try to protect the users from themselves.

Proxmox Cluster File System (pmxcfs)

Proxmox VE uses the unique Proxmox Cluster file system (pmxcfs), a database-driven file system for storing configuration files. This enables you to store the configuration of thousands of virtual machines. By using corosync, these files are replicated in real time on all cluster nodes. The file system stores all data inside a persistent database on disk, nonetheless, a copy of the data resides in RAM which provides a maximum storage size of 30MB - more than enough for thousands of VMs.

Proxmox VE is the only virtualization platform using this unique cluster file system.

Another thing related to pmxcfs and failed quorum is that when I tried adding a VM from a dead server into the remaining server, I couldn’t, exactly because pmxcfs gets locked up, so I cannot bring my VM back to life because of it. Creating a new VM in local mode doesn’t help either in my case.
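For anyone wondering why a lone surviving node ends up locked: as far as I understand it, the default quorum rule needs a strict majority of the expected votes. Here is a minimal Python sketch of that arithmetic, assuming one vote per node. The function names are made up for illustration, this is not Proxmox or corosync code.

```python
def votes_needed(expected_votes: int) -> int:
    """Strict majority: more than half of the expected votes."""
    return expected_votes // 2 + 1


def has_quorum(expected_votes: int, votes_present: int) -> bool:
    """True when the surviving nodes still hold a strict majority."""
    return votes_present >= votes_needed(expected_votes)


if __name__ == "__main__":
    # Assumption: every node carries exactly one vote.
    for total in (2, 3, 5):
        for alive in range(1, total + 1):
            state = "quorum" if has_quorum(total, alive) else "no quorum"
            print(f"{total}-node cluster, {alive} alive: {state}")
```

Under that assumption, the survivor of a 2-node cluster holds 1 vote and needs 2, so it can never reach quorum on its own.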

To be honest, I really don’t mind having a separate control plane for the hosts, as long as the software is well documented. For example, it would be easier to back up an sqlite or a postgresql DB and some config files from a location, modify things to your heart’s content and when you want to revert, just restore the stuff. Proxmox pmxcfs is pretty good though, because modifications on one node will get reflected on all nodes, it is nifty, but it has some drawbacks.

I also think the idea of running your control plane on the worker nodes is not ideal. It can work, like you can install most of the stuff on one machine and not feel any downsides for a long time, but I think scaling can become an issue. But I don’t know how much you can push proxmox, as I never had more than 10 virtualization hosts and about 20 NAS attached to them. For what I needed it, it worked fine. And Proxmox does reduce the costs by trying to run as many things on the hosts themselves and have an easy way to enable replication and other features, so I’m not bashing Proxmox as a whole.

Again, my beef with proxmox is especially due to pmxcfs. My home lab had its quorum fail due to reasons outside of the scope of this talk and I cannot easily recover. One easy fix would have been being allowed to remove my last host from my cluster by hitting an exit button and running it permanently in standalone mode with whatever VMs I had remaining on it, but for now, the server is still stuck in a half-working state in a broken cluster. This has been a long standing issue, with some posts on their forums, but Proxmox team didn’t want to address it. I understand that the point should be to recover your cluster, not to start afresh, but I really think having the option open for the user would strike a good balance.

Proxmox VE is a virtualization platform that tightly integrates compute, storage and networking resources, manages highly available clusters, backup/restore as well as disaster recovery

It’s funny that they promote disaster recovery, but in my case, where it was a disaster indeed (thankfully no data loss, because I back up), I can’t get my remaining host up and running again, it’s half working in local mode.

I didn’t read the whole manual, but the rest of it is pretty good, I recommend you take a look, it’s a good read. Also didn’t know Proxmox contributed to KVM in its infancy, I see Proxmox in an even better light. But I will still try to get away from it. It doesn’t fit my needs anymore. And that’s fine. As long as others still have a use for it and people are happy with Proxmox, I am happy, even if I am not going to use it anymore.

tl;dr Don’t be a Linux evangelist. Backup utilities in Linux do a good enough job, but they aren’t promoted enough and they could be much better, to the point that you could back up your files, export your list of installed programs, then run a “restore” using the same backup utility on another instance of the same OS and have an identical replica of your backed-up system on another machine. No disk cloning tricks like Clonezilla, just copying files, installing the software from the repo and keeping your desktop settings if you have customized them:

You found the wrong person to play Devil’s advocate with. Because I am the Devil in this conversation, lmao. They shouldn’t. That’s what I kept saying in the past 3 or 4 threads related to switching to Linux, including the LTT Linux gaming challenge. I believe they don’t care because they are uninformed. And people who believe they know and th…

I especially think System76 using Mint would be a fantastic idea

Use your autistic powers to elaborate even further and send that to system76. Basing their distro on Ubuntu is taking the long way and likely to give much worse results than a Mint based version.

I don’t know, the rant was just my reasoning behind a Mint-Pop merger, with the elephant in the room being my lack of knowledge / understanding why Pop!_OS exists as a distro, as in, what it brings to the table different than Mint does. Again, the only reason I see for Pop being developed is that it uses a more recent version of Ubuntu, while Mint uses LTS, which is pretty important when it comes to using new hardware and to gaming.

Maybe there is more stuff I am unaware of, like, idk, maybe Linux Mint also lacks or tends to remove support for multilib, which Steam requires in order to function? Who knows. I was hoping someone could answer this, but making a whole thread for each and every one of my rants is kinda pointless, because they usually don’t attract more than 1 or 2 replies.

Mint’s Nvidia stuff is already in the distro, whereas Pop has an amd and nvidia version. That’s just one of the few things that system76 could develop further on. Instead of an nvidia and amd version, they could provide an LTS version and a latest kernel release version.

A lot of ‘problems’ on Mint only stem from the usage of an older kernel on newer hardware. Mint even has a kernel manager for it as well, with a gui. Drag 'n drop kernel. So pop doesn’t have to develop their own. Instead they can make it easier to manage and use.

Those two things alone u could elaborate on and send forth to system 76. Including your own ideas and to further the idea perhaps even making it a monthly thing to do. Could be kinda awesome

The reply below is addressed to PLL (his neighbor had loads of toilet paper), but it’s worth putting it out here. There are also references to the video PLL posted that I was replying to, which is this one:

If it weren’t for even more artificial scarcity by government intervention (lockdowns) I doubt the economy would be in such a dire state. Sure, we had other supply issues regarding silicon wafers, but it’s not like we didn’t have those before. Prices went up like 2-3% and that was it.

I agree with that guy though, if people stop buying the scalpers’ inventories, they won’t be able to afford to buy more stuff or to sell for a premium. I can’t wait to see the day when scalpers will cry out online that they paid $500 for software, $500 for 5 months of subscription and $6000 in GPUs and they cannot sell them for a profit, because newer and better stuff came out. But GPU scalpers are techy people, they probably follow the trends and if there are rumors of new GPUs coming out that will outperform their stock, they can always lower the price closer to MSRP and people will flock to buy them and still earn them a profit.

To be honest, I don’t see hoarding as a bad practice (like your neighbor having mountains of toilet paper in the garage), but scalping is an awful practice. Once we see a few planets align, we should see scalpers starting to stop scalping:

- Increased supply of silicon wafers (always a good thing)

- New GPU architectures on the market that outperform the old ones

- Increased supply of GPUs. Chip manufacturers don’t have unlimited capacity and they operated at full capacity for a while now. I really hope it won’t last until 2023 when more manufacturing plants are opened in the US and Europe, or even worse, 2024, because if they open in late 2023, it’s going to take 2-3 months for products to come out of the production line.

- Less artificial GPU market segmentation. Both nVidia and AMD are at fault here, because instead of unlocking the full potential of a chip for everyone and just allowing people to use a different driver for their needs, they make the same chips, lock them up and create different products. There is no reason why a precision driver should not work on a performance card and vice versa. Artificial scarcity really is a lucrative business model. But this isn’t going to change any time soon, until there is more competition than 2-3 players in the GPU market. And I mean, things like Imagination Technologies, Qualcomm’s Adreno division and ARM’s Mali getting into serious graphics architecture designing.

I don’t believe mining to be such a big issue as it was a few years ago. Today we see way worse shortages. Scalping is a worse issue. Miners, once done with their GPUs don’t flip them for double the price, they flip them for about half the price. And miners didn’t use bots to buy out all the GPUs.

To be honest, I really don’t like being limited to buy 1 product per shopping cart, what if I want to build a PC for more than 1 person? I think there should be a better way to sell GPUs than queue up people in a lottery. I don’t have the answer, but I would want to try a bidding system on products in short supply, like GPUs. You can buy more than 1 GPU, but you have to bid on them individually and the highest payer wins.

Scalpers can afford to buy up all the stock because the price of products is usually fixed, but imagine that AMD, nVidia and retailers would collude, so that both AMD and nVidia would have a bidding system on their websites, but they would sell stuff to retailers for their normal retailer deal prices, but retailers also implement a bidding system. Bots can’t just outbid everyone to infinity, because scalpers don’t have infinite supply of cash. And what makes this even worse of a nightmare for scalpers is that they don’t know if they’ll be able to sell the cards for a profit, because they paid more on some and less on some. That way, retailers and manufacturers earn the revenue they deserve and scalpers get the short end of the stick.
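To make the bidding idea a bit more concrete, here is a rough Python sketch of how a per-card auction could pick winners. Everything in it is an assumption for illustration: the `Bid` class, the `run_auction` function, the reserve price and the example numbers are made up, and no retailer works like this today as far as I know.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bid:
    bidder: str
    amount: float  # offer for ONE card; bid several times to get several cards


def run_auction(bids: list[Bid], stock: int, reserve: float) -> list[Bid]:
    """Return the winning bids: the highest offers at or above the reserve price."""
    eligible = [b for b in bids if b.amount >= reserve]
    # sorted() is stable, so among equal offers the earlier bid wins.
    ranked = sorted(eligible, key=lambda b: b.amount, reverse=True)
    return ranked[:stock]


if __name__ == "__main__":
    # Hypothetical bids for 3 cards with a 700 reserve (think MSRP).
    bids = [
        Bid("gamer_a", 720.0),
        Bid("scalper_bot", 900.0),
        Bid("scalper_bot", 900.0),
        Bid("gamer_b", 760.0),
        Bid("gamer_c", 650.0),
    ]
    winners = run_auction(bids, stock=3, reserve=700.0)
    for w in winners:
        print(f"{w.bidder} pays {w.amount:.2f}")
    print("revenue:", sum(w.amount for w in winners))
```

In this toy run the bot still wins 2 cards, but it pays well above the reserve for each of them, so the markup goes to the seller and the bot’s resale margin shrinks.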

Of course, I don’t know if this could be the answer, but it’s worth trying. We won’t see the GPU shortages go away if the retailers and manufacturers pretend to be the good guys and allow themselves to be trampled all over by scalpers. If there’s a high demand, prices have to be increased. It’s the only way a healthy market can function, otherwise scalpers get born. Again, I doubt scalpers would buy everything up when prices go higher on retailers’ and manufacturers’ websites too. You know what the best part of “just increasing the price” is? You don’t even have to worry about how to redistribute products and prevent botting. Just reap the benefits of higher prices, which is more revenue for yourself. Again, AMD, nVidia and retailers should stop pretending like they’re good guys and show their fangs of the greedy corporations that they are. At the very least, we will only have to deal with greedy corporations and not both greedy corporations and greedy scalpers.

I like the idea of the Turing Pi. And the motherboard is pretty decently thought out, as in, in correlation to the ATX / ITX standard (like the inclusion of an ATX power header, that some ITX Pi CM4 carrier boards lack).

But the Turing Pi 2 is kinda… idk… lame? I mean, it’s an amazing board to get into baby cluster computing, but it seems a bit weird. 2 SATA ports are attached to 1 Pi, the USB 3 and display outputs to another Pi, the M.2 to another one and idk what else to the last. And all communicate via the on-board ethernet switch and have their own ethernet controllers.

But I was expecting something more… idk… resilient may be the right word? The first Turing Pi had 7 Pi CM3s attached to it and didn’t have much in terms of expansion, but made up for it with its clear purpose: be a cluster made of more or less equal parts. What that meant is that you could use each CM3 for anything interchangeably. This worked wonders for a kubernetes cluster or LXD cluster for example. It was somewhat analogous to a blade server chassis. You insert compute modules with their own CPU, RAM and storage, but they all get their power and networking from the chassis. Brilliant design. Sure, a single point of failure, but no problem, just get 2 of them.

I understand that the first Turing Pi was expensive, because it had to account for 7 Pis which may not have been all populated, not taking into account the cost of 7 Pi CM3s. The Turing Pi 2 has somewhat less of a clear goal. TP2 seems like a good choice for combining with other TP2 to make up better redundancy. The choice of only 4 CM4 modules is weird though. I guess they thought of it like 1 k8s master node and 3 k8s worker nodes. The problem I see with it is that with the expandability, you create a very big dependence on each CM4 board to work, with maybe the exception of the one connected to display and USB depending on whether you make use of the board in headless mode or not.

While not recommended, it would have probably been better to have 3 boards and either run the master and worker on the same node (even though it is not a recommended k8s configuration, but I believe it is a default in Canonical’s microk8s) and get another 2x TP2 to combine with it. Using 2 switches (1 in standby mode after you switch the TP2 ports from bridges to active-standby) would have given quite a lot of redundancy, but again, if you use the SATA ports on 1 Pi and the nvme on another, you create a dependence on certain nodes to be active. It’s not the end of the world if you have other TP2s populated to take care of the work in case one of them goes out, but it might be an issue if one of them malfunctions only slightly. I guess an edge infrastructure would have to account for that somehow, maybe if the Pi storage on the board dies, send a shutdown signal to all the Pis on the cluster, so that the other TP2s can take care of the work? I don’t know.

The Turing Pi 2 seems like an interesting experiment platform, but I feel that what you give up in terms of reliability (compared to multiple standalone SBC) is not worth it in terms of space saving. I feel like people that are serious about edge cluster computing on SBCs (now define what serious means, when you are using SBCs…) would use multiple NanoPi R4S’es to achieve better reliability / get rid of SPOFs.

What I find kind of a pity is that stacked switches are expensive and for the price of those, you can easily buy unstacked ones and make an active-standby setup, but you are not able to take advantage of LAgg, since you can’t combine 2 cheap switches for traffic. I should read more on this, maybe there is a way to send traffic from some vlans on one port and have the other port do traffic for other vlans, then when one port dies, move all the vlans to the active port at the cost of more congestion. That way, at least you should be making use of the standby ports without just letting them sit there doing nothing, just waiting for the active ports to fail, which can realistically be expected to never happen, so that would be a wasted resource just for the sake of reliability.

If there’s no solution for that, I bet that it can be automated quite easily with a few connection tricks, but it won’t be instantaneous and may require to kill traffic for a few seconds while the interface is getting restarted. It’s probably not needed to reconfigure the switch ports too, just have all the vlans available on both of them and let the computers handle their own port trunk switching.
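The vlan-splitting idea can be sketched as a small planning function. This is only a Python toy to show the logic, assuming a hypothetical helper `assign_vlans` and made-up port names and vlan IDs. It does not touch any real interface and is not how any particular bonding driver works.

```python
def assign_vlans(
    vlans: list[int], ports: list[str], link_up: dict[str, bool]
) -> dict[str, list[int]]:
    """Spread vlans round-robin over the ports; move them to a live port on failure."""
    live = [p for p in ports if link_up.get(p, False)]
    if not live:
        raise RuntimeError("no uplink available")
    plan: dict[str, list[int]] = {p: [] for p in ports}
    for i, vlan in enumerate(vlans):
        preferred = ports[i % len(ports)]
        # Keep the vlan on its preferred port while the link is up,
        # otherwise fall back to one of the remaining live ports.
        target = preferred if preferred in live else live[i % len(live)]
        plan[target].append(vlan)
    return plan


if __name__ == "__main__":
    vlans = [10, 20, 30, 40]      # hypothetical vlan IDs
    ports = ["eth0", "eth1"]      # hypothetical uplinks to 2 cheap switches
    print(assign_vlans(vlans, ports, {"eth0": True, "eth1": True}))
    print(assign_vlans(vlans, ports, {"eth0": True, "eth1": False}))
```

With both links up, each port carries half of the vlans. When one link drops, everything lands on the surviving port, which is the congestion trade-off mentioned above.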

Now the question would be, how well do SBCs deal with vlans…

I called it before! Compressed hydrogen is a better energy storage than batteries. You basically just need a pressurized container and you have your energy storage. Making a container capable of withstanding 10,000 PSI is easier than creating batteries and is easier on the environment, for the concerned tree huggers out there. In the video it was even shown that because there was more production than demand for electricity, some eolian farms had their wind turbines’ brakes engaged. Literally wasting power!

That additional juice could have been used to convert water into hydrogen and oxygen. And this serves a double purpose! Hydrogen for energy storage, either for H2 cars or even future hydrogen combustion electric generators (well, the technology is there, it’s only a matter of someone producing those in high enough quantities or big enough sizes for industrial applications) and the oxygen for whatever applications it is needed, like oxygen tanks for hospitals and medical purposes, or really any other environment that requires oxygen.

And it gets even better! Your car, if it would run using just the inefficient burning of hydrogen (K.I.S.S.), instead of what Toyota did with powering an electric motor using hydrogen to create electricity (“hydrogen fuel cells”), could realistically run millions of miles. Try doing that with an electric! Your batteries will lose capacity before you reach even a quarter of that. I admit I pulled that number out of my ass, but I highly doubt batteries in electric cars can withstand so many charge / discharge cycles.

But the thing is that brushless DC and AC electric motors have their own benefits: they basically last you a lifetime. An engine that is burning (technically exploding) hydrogen will at some point need to be replaced or rebuilt just like petroleum (gasoline / diesel) engines, but with normal care, I suspect most hydrogen engines would reach a million miles driven before they fail.

The bad part about that kind of hydrogen engines that I am thinking about is that they will likely need oil. I believe electric cars don’t. But this shouldn’t add too much to the long-run costs compared to replacing lithium batteries, these things are crazy expensive!

Still, the Mirai II is a really ingenious car and an engineering marvel. You get the power of an electric motor, but without the really heavy weight of the lithium batteries. Too bad the electric motor is small, I suspect that’s just to keep the plebs off of a future Lexus model with a better one.

I am very disappointed with this video though. Indeed, we have a lot of inefficiencies with making hydrogen and transporting it to pumps, unless it is done locally at the pump station. But neither the video nor basically anybody on the internet talks about the inefficiency of electric cars having to carry 2T of batteries. Tesla cars and other electric vehicles that aren’t the size of a golf cart or a matchbox are insanely heavy.

Besides that, people don’t even take into account the pressure that is being put on the roads themselves. Roads also need energy to get created and repaired. And if you introduce a lot of way heavier cars on the road, the roads will have to be repaired even more often. And that’s exactly what we need! (/s) How much energy is being wasted there? And again, that is besides the electricity needed to transport double the weight of a hydrogen car (again, number pulled out of my ass, but it’s a reasonable estimation given my limited knowledge on the subject).

I am highly suspicious of the claim that electric vehicles are 70-90% efficient. In the bigger picture, that number is way lower. Add the cost of producing the batteries themselves and replacing them and you add even more inefficiency to the mix, besides the pollution that comes with that industry. In addition to that, creating electricity for electric cars is just as polluting as creating hydrogen for fuel cells. Which can be a lot, or none at all. We need better nuclear power plants, but that’s another discussion.

One thing that the video noted is that the Mirai II is slow going from a full stop. Just as Alex said, it’s an electric motor, it doesn’t have to be slow. If the engine had a capacitor behind it for burstier loads, like going from 0 mph, the car would probably accelerate faster, even without a big maximum speed (you don’t really need that though). This is likely a safety feature on the batteries. Or maybe that’s just how much power the battery can provide the motor, but the motor itself should be capable of going faster, even if it is small. But again, I have my hypothesis on the Lexus premium models that may come out, but I can’t prove that, Toyota has to prove it themselves.

Apologies if I overlooked a refutation here, but IIRC, the main problem with hydrogen-powered transport is that you’re essentially driving a bomb. A collision or leak could result in a much larger explosion than a gasoline vehicle, especially if it’s highly compressed. Even a tank of highly compressed inert gas could become a deadly projectile if punctured.

Correct me if I’m wrong here…

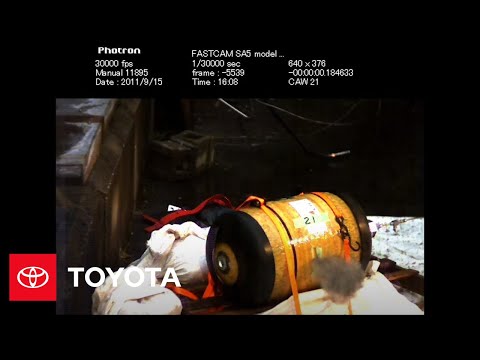

If you watch the video, or if you search for “toyota shooting car hydrogen tank on fire” on your search engine of choice, you will see a video of Toyota tests where the hydrogen tank, being pressurized at 10,000 PSI, gets shot by a bullet and the hydrogen expands so quickly that it doesn’t even have time to combust.

Actually here’s the video

Unlike containing hydrogen at normal or close to normal atmospheric pressure, under high pressure any gas acts differently.

I would want to see a larger variety of safety tests, for instance, where 2 tanks collide with each other head on and are completely obliterated, but that is interesting. A similarly neglected energy storage technology that I’ve always been curious about is a trompe. They can be built relatively easily on a residential property. Since it mainly involves burying a tank and some plumbing, I imagine it’s similar to installing a septic system, granted the tank would have to handle high PSI, so probably somewhat more complicated/expensive, but still achievable.

Yeah, basically what I want to try to build in the very distant future (probably 5-7 years +). Wendell mentioned hydrogen leakage because of its small atoms that could happen like 10% overnight, but even still, I would have a big tank where I could store excess energy from solar panels and when needed, automatically tap into it with a diesel generator with a mixture of probably 10% diesel and 90% hydrogen (a ratio which I’ve seen before in diesel cars). Especially useful if I am going to have harsh winters, but I’ll likely have to also find ways to create hydrogen through other means.
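A quick back-of-the-envelope sketch in Python of what that leakage would mean for a storage tank. The 10% per night figure is only what I remember being mentioned, not a measured value, and the model assumes the same fraction leaks every day with no refilling. The function names are made up for this example.

```python
def remaining_fraction(daily_loss: float, days: int) -> float:
    """Fraction of the stored hydrogen left after `days` of constant leakage."""
    if not 0.0 <= daily_loss < 1.0:
        raise ValueError("daily_loss must be in [0, 1)")
    return (1.0 - daily_loss) ** days


def days_until_below(daily_loss: float, threshold: float) -> int:
    """Number of days until the tank drops below `threshold` of its initial fill."""
    if not 0.0 < daily_loss < 1.0 or not 0.0 < threshold <= 1.0:
        raise ValueError("daily_loss must be in (0, 1) and threshold in (0, 1]")
    days = 0
    fraction = 1.0
    while fraction >= threshold:
        fraction *= 1.0 - daily_loss
        days += 1
    return days


if __name__ == "__main__":
    loss = 0.10  # assumed leakage per day, not a measured figure
    for d in (1, 7, 30):
        print(f"after {d} days: {remaining_fraction(loss, d):.1%} left")
    print("days until less than half is left:", days_until_below(loss, 0.5))
```

If the real leakage is anywhere near that assumed number, the tank only works as short-term storage, so the actual figure for a given tank matters a lot before planning for harsh winters.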

The nice thing about diesel engines is that they can run on almost anything you feed them, even cooking oil, and as mentioned, hydrogen. I’d like to be able to harvest the heat created by combustion to heat up my future house.

The problem is hydrogen these days mostly comes from steam reforming[1] which is an extremely strong CO2 emitting process. What would be good, and in theory could be CO2 neutral, would be methane pyrolysis [2][3]. Converting methane into hydrogen has an efficiency of about 80%. Almost nobody is doing this today, though. This however we can only do until we run out of methane. Then we could only do electrolysis from water[4], which has an efficiency of about 20-30%.

EVs on the other hand are also only in the beginning of an evolution. Since cobalt and other elements used in battery production become increasingly expensive, research and development is looking into batteries based on more common elements like sodium. There are already alternative products on the market and in development, only that those don’t reach the same energy density for the same price yet. But you can be optimistic that in 10-20 years we will have far more unproblematic batteries on the market.

To put it in a nutshell, I think both technologies are suited for the future and should be developed simultaneously.

I am a fan of simplicity. Electric motors are simple. Unfortunately the car industry does all kinds of complicated stuff with sensors and software.

As for hydrogen, I really like electrolysis. It is highly inefficient, but the inefficiencies pale in comparison to having a good and simple energy storage medium. Again, make some high-pressure containers, fill them up with hydrogen and you got energy reserves. As shown in that video, we can produce way more energy if we really need to. The same thing happens with solar, when energy production is too high, the panels get moved to a slightly worse angle from the Sun, so they don’t work at their peak efficiency because of overproduction.

That additional energy we could tap into and store it for usage when demand is high. Some people thought of getting big and heavy electric trains on top of hills using excess energy and dropping them when there is not enough supply of electricity. But the space utilization and potential deforestation of such a device is more waste than storing lots of big, high pressure containers.

That additional energy we could tap into and store it for usage when demand is high.

Ya but then the utility companies would lose money, so no.

I plan on being off-grid, but even then, the utility companies can MAKE money by having energy reserves and buying cheaper electricity. Of course it won’t translate into cheaper prices for the consumers, but as civilization evolves, we will make more and more use of electricity, not less.

We will be discovering better ways to make and store energy and more efficient ways to use it. Maybe in the distant future we’ll even discover other technologies. 300 years ago, nobody would have thought that petroleum engines would revolutionize the world. 200 years ago, nobody would have thought that electricity would be used for communication. 100 years ago, the internet did not exist. Who knows what will come in 100 years if no other cataclysmic event like the world wars happens?