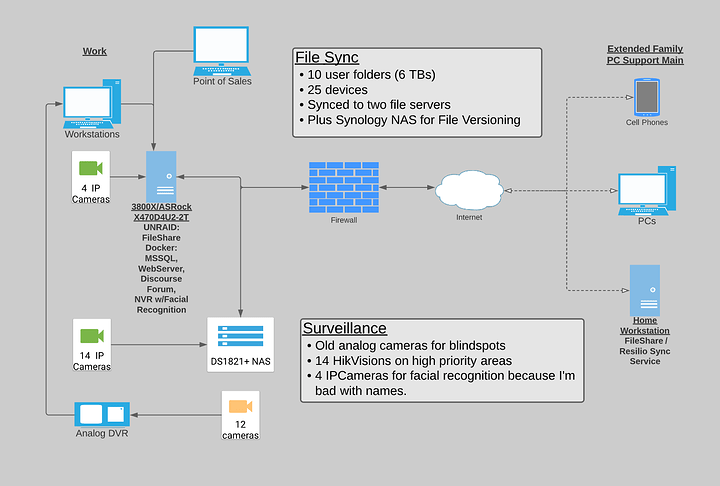

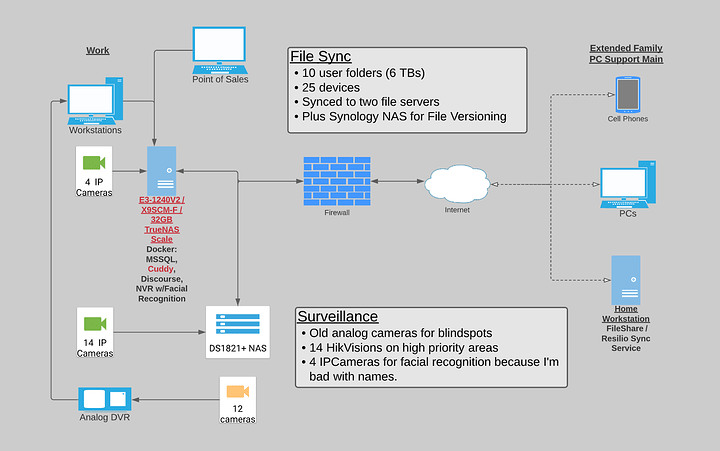

I’m vulnerable to ransomware attacks via my co-workers (aka elderly parents) and it’s past time for an upgrade. I have an idea of what I need, just not sure of the how.

Currently, I’m on Resilio Sync (formerly BitTorrent Sync). I have the work PCs plus a number of personal machines (I’m the fam’s designated PC support main) syncing to two locations.

My goals for the upgrade:

- File versioning to protect against ransomware.

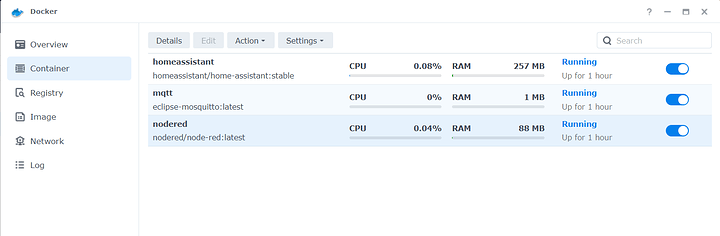

- Containerized server

- MSSQL for POS (3 concurrent users)

- static business website (max 5 concurrent users)

- Discourse forum for a niche CAD community (20 concurrent users)

- Facial recognition to assist me with remembering names

- 14ish IP cameras that always record footage in a useful resolution.

So far I’ve purchased a Synology DS1821+ after seeing @wendell’s Surveillance Station video. The low TDP with 5400 rpm drives is perfect to put in an unventilated, walk-in bank vault. The biggest 5400 rpm drives I could find were 6TB, so that’s 31.4 TB in RAID 6.
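For anyone checking my math, here’s a rough sketch of the RAID 6 capacity arithmetic. It assumes all eight bays of the DS1821+ are filled with 6 TB drives and that two drives’ worth of space goes to parity; the function names are just made up for illustration. The gap between this figure and the 31.4 TB I quoted is presumably filesystem and system overhead, but that part is my guess.

```python
def raid6_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable capacity in decimal TB: RAID 6 gives up two drives to parity."""
    if drive_count < 4:
        raise ValueError("RAID 6 needs at least 4 drives")
    return (drive_count - 2) * drive_tb


def tb_to_tib(tb: float) -> float:
    """Convert decimal terabytes (10^12 bytes) to binary tebibytes (2^40 bytes)."""
    return tb * 10**12 / 2**40


# Assumption: 8 bays, all populated with 6 TB drives.
usable_tb = raid6_usable_tb(8, 6.0)
usable_tib = tb_to_tib(usable_tb)
print(f"{usable_tb:.1f} TB decimal, about {usable_tib:.2f} TiB before overhead")
```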

I’m shopping for the container server, but am thinking about copying Wendell’s Unraid GN build:

- ASRock Rack X470D4U2-2T

- AMD 3800X

- 64 GB ECC RAM

- Old Quadro K4200 (PCIe x8)

- 8 WD Reds plus this Dell SAS HBA thing (?) (PCIe x8)

- Plus an M.2 (E key) Coral Accelerator for facial recognition (PCIe x2)

Questions:

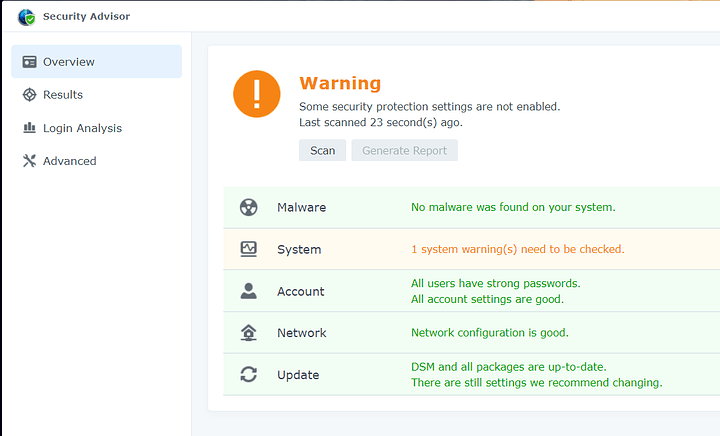

How should I enable file versioning on the Synology NAS?

I tried Active Backup, but it’s a once-a-day thing that stores files in a *.img format. I need to grab changes as they happen (I do a point-in-time restore on SQL at least once a year because co-workers) and archive them for a month.
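To make the "archive them for a month" part concrete, here’s a minimal sketch of the retention rule I have in mind: keep every version newer than 30 days and expire the rest. This is not a Synology feature or API, just the logic; `split_versions` and the 30-day constant are hypothetical names and values for illustration.

```python
from datetime import datetime, timedelta

# Assumption: "a month" is treated as a flat 30 days.
RETENTION = timedelta(days=30)


def split_versions(timestamps, now, retention=RETENTION):
    """Split version timestamps into (keep, expire) lists based on age."""
    cutoff = now - retention
    keep = [t for t in timestamps if t >= cutoff]
    expire = [t for t in timestamps if t < cutoff]
    return keep, expire


# Example: three versions of one file, checked on 1 March 2021.
now = datetime(2021, 3, 1, 12, 0)
versions = [
    datetime(2021, 1, 15, 9, 0),   # older than 30 days
    datetime(2021, 2, 10, 9, 0),   # within 30 days
    datetime(2021, 2, 28, 17, 0),  # within 30 days
]
keep, expire = split_versions(versions, now)
print(f"keep {len(keep)}, expire {len(expire)}")
```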

How safe is it to open port 5001 if I set up SSL and order Yubikeys for the Synology DSM accounts?

Sending big files to outside vendors is a pain. I have to upload to the cloud, wait for the share link, and then email it to them. I’d love to create a read-only, password-less, TTL link on the fly.
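To show what I mean by a TTL link, here’s a rough sketch of the general idea: the link carries an expiry time plus an HMAC signature, so the server can verify it without a password or a database lookup. This is a generic technique, not how DSM does its sharing; the secret, the path, and the function names are all placeholders I made up.

```python
import hashlib
import hmac
import time

# Assumption: a server-side secret that is never shared with vendors.
SECRET = b"change-me"


def _sign(path: str, expires: int) -> str:
    """HMAC-SHA256 over the path and expiry, hex encoded."""
    msg = f"{path}|{expires}".encode()
    return hmac.new(SECRET, msg, hashlib.sha256).hexdigest()


def make_link(path: str, ttl_seconds: int, now=None) -> str:
    """Build a read-only link that stops verifying after ttl_seconds."""
    now = int(time.time()) if now is None else int(now)
    expires = now + ttl_seconds
    return f"{path}?expires={expires}&sig={_sign(path, expires)}"


def verify_link(path: str, expires: int, sig: str, now=None) -> bool:
    """Accept the link only if the signature matches and it has not expired."""
    now = int(time.time()) if now is None else int(now)
    signature_ok = hmac.compare_digest(_sign(path, int(expires)), sig)
    return signature_ok and now < int(expires)


# Example: a one-hour link for a hypothetical file.
link = make_link("/files/drawing.zip", ttl_seconds=3600)
print(link)
```

Changing the path or the expiry in the URL invalidates the signature, so a vendor can’t stretch the TTL or point the link at a different file.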

Is there an updated recommendation for the ASRock Rack X470D4U2-2T? Can it handle an x8/x8/x2 configuration?

Facial recognition is a future wish (LTT’s Coral vid). I’d love to put two cameras on the POS to train faces to accts, then have two on the doors to recognize people as they enter.