Yeah, I think they were in 14.04.

@tatsu, have a look at /etc/initramfs-tools/modules and see if that looks right.

I don't have access to Ubuntu at the moment, so I can't confirm, but all you need to do for this config is add the kernel modules to the list then run update-initramfs -u and you should be good.
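A minimal sketch of that step. The module names in the usage comment (vfio, vfio_iommu_type1, vfio_pci) are an assumption, since this thread doesn't list them, so check them against the guide you're following. The helper name add_module is made up for illustration; it appends a name only if the file doesn't already contain it as a whole line.

```shell
#!/bin/sh
# Hypothetical helper: append a module name to a modules file
# unless that exact line is already present.
add_module() {
    file=$1
    module=$2
    # -q: quiet, -x: match the whole line, -F: treat the name as a literal string
    grep -qxF -- "$module" "$file" 2>/dev/null || printf '%s\n' "$module" >> "$file"
}

# Example usage (run as root). Module names are assumptions, not from this thread:
#   for m in vfio vfio_iommu_type1 vfio_pci; do
#       add_module /etc/initramfs-tools/modules "$m"
#   done
#   update-initramfs -u
```

Because the helper skips names that are already listed, running it twice won't leave duplicate lines in the file.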

yes this thread confirms this is the way (plus lets me know syntax) :

Oh, I almost forgot: I didn't blacklist anything other than THE VGA port. Why did you also blacklist a USB controller and the Nvidia audio? Will the driver install in the VM fail if I don't?

I blacklisted a USB controller so I could pass it through. You didn't have to. The USB controller must be a PCIe device though, can't be on the motherboard itself, from what I understand.

The Nvidia audio is a virtual subdevice of the GPU itself, so if both aren't passed through, you will have major problems.
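One way to see which functions travel together is to list everything that shares the GPU's PCI slot. This is only a sketch: the helper name sibling_functions and the example address 0000:01:00.0 are hypothetical, and your card's address will differ (lspci shows it).

```shell
#!/bin/sh
# Hypothetical helper: list every PCI function that shares a slot with the
# given device. Takes a full address such as 0000:01:00.0 (example only).
sibling_functions() {
    slot=${1%.*}                          # drop the function number, e.g. 0000:01:00
    for dev in /sys/bus/pci/devices/"$slot".*; do
        # If nothing matches, the glob stays literal and this test skips it.
        [ -e "$dev" ] && basename "$dev"
    done
    return 0
}

# Example usage:
#   sibling_functions 0000:01:00.0
```

On a typical card the video function is .0 and the HDMI audio function is .1 of the same slot, so both would show up in the list.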

I've gotten up to this point: "Before we do that, however, I'd like to stop and take a quick look at a few different storage options. I'm currently using pure SSD storage for my VM's, but you may want to choose a mix of SSD and HDD", so I went back to another part of the thread here: https://forum.level1techs.com/t/the-pragmatic-neckbeard-2-zfs-ssd-speeds-at-hdd-capacities/110094 and I find that I DO want ZFS for my Windows emulation.

g̶i̶v̶e̶n̶ ̶u̶b̶u̶n̶t̶u̶ ̶i̶s̶n̶'̶t̶ ̶c̶o̶v̶e̶r̶e̶d̶ ̶i̶n̶ ̶t̶h̶i̶s̶ ̶t̶u̶t̶o̶r̶i̶a̶l̶ ̶s̶h̶o̶u̶l̶d̶ ̶I̶ ̶a̶b̶a̶n̶d̶o̶n̶ ̶t̶h̶e̶ ̶i̶d̶e̶a̶?̶ I found this : https://wiki.ubuntu.com/ZFS and ran sudo apt install zfsutils

is this what you meant by raw format: "for my VM's C:/ Drive, with a raw storage format. raw disks will give us the best performance."

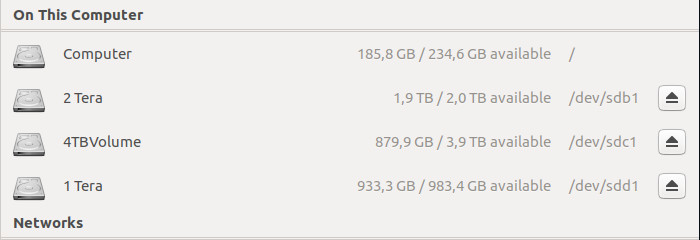

I have an SSD for boot with 186 GB free, but I suspect it won't be enough given I'm planning to pile on A LOT of games and software, most notably Star Citizen, which by itself can take up 100 GB. On the side I have some 2.3 TB free on a 4 TB drive and a whole 1 TB drive sitting around doing nothing.

also i downloaded the virtio-win-0.1.137.iso but I'm kinda clueless as to what to do with it. I mounted it.

Do I copy its files to a folder in /usr/share/?

thanks!

@SgtAwesomesauce Hey! Back at it today. I spent the day sorting my files so that I'd have a setup that's ready for ZFS partitioning.

here's my output for fdisk -l :

Disk /dev/sda: 223,6 GiB, 240057409536 bytes, 468862128 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: gpt

Disk identifier: F536857C-7D12-4852-93D5-8FF582CA348D

Device Start End Sectors Size Type

/dev/sda1 2048 1175551 1173504 573M EFI System

/dev/sda2 1175552 468860927 467685376 223G Linux filesystem

Disk /dev/sdb: 1,8 TiB, 2000398934016 bytes, 3907029168 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: gpt

Disk identifier: 74C34D56-67A9-466D-A8E4-7921DFF86FEA

Device Start End Sectors Size Type

/dev/sdb1 2048 3907028991 3907026944 1,8T Linux filesystem

Disk /dev/sdc: 3,7 TiB, 4000787030016 bytes, 7814037168 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: gpt

Disk identifier: 33D9F960-68B6-464E-8263-4E5029285086

Device Start End Sectors Size Type

/dev/sdc1 2048 7814035455 7814033408 3,7T Linux filesystem

Disk /dev/sdd: 931,5 GiB, 1000204886016 bytes, 1953525168 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: gpt

Disk identifier: D9B014AD-E51B-4EE1-8641-4FDEAE27112C

Device Start End Sectors Size Type

/dev/sdd1 2048 1953523711 1953521664 931,5G Linux filesystem

As you can see, I have a boot SSD of 234 GB with some free space that could hold part of the ZFS partition so that the emulated Windows starts up faster, and I have a 2 TB and a 1 TB hard drive, freshly formatted with a GPT partition table and ext4. The 4 TB drive is where my life is, but I can invert that: I could split its contents in two and store them on the 1 TB and 2 TB drives, leaving the 4 TB for Windows.

My guess is that 1T will be enough for even my 120 games and some 30 office and graphic programs.

How do I set up the ZFS partition with combined space over two hard drives, the 180 free GB of the SSD and the Terabyte hard drive?

I don't wanna get this wrong : / (systemctl is definitely part of ubuntu)

zpool create -f my-pool /dev/sdd # the whole 1Tera drive

zpool create tank -omountpoint=/media/tank -oashift=12 /dev/sdd log /dev/sda2 cache /dev/sda3 # the third partition i'll have created on the SDD to this end

systemctl enable zfs.target

systemctl enable zfs-import-cache.service

mkdir /ZFS

zfs create -o mountpoint=/ZFS -o setuid=off -o sync=disabled -o devices=off tank/ZFS

systemctl mask ZFS.mount

#after having logged out and logged in as root to a busybox

mv /home /home-old

zfs create -omountpoint=/home tank/home

rsync -arv --progress /home-old/* /home

rm -rf /home-old

zfs create -s -V 1T tank/windows-vm

zfs snapshot tank/home@first_Save

I did this so far:

root@ts:/home/t# zpool create -f my-pool /dev/sdd1

root@ts:/home/t# zpool create tank -omountpoint=/media/tank -oashift=12 /dev/sdd1 log /dev/sda2 cache /dev/sda3

property 'mountpoint' is not a valid pool property

I also tried with a space separating the -o and mountpoint:

root@ts:/home/t# zpool create tank -o mountpoint=/media/tank -oashift=12 /dev/sdd1 log /dev/sda2 cache /dev/sda3

property 'mountpoint' is not a valid pool property

You're best off either allocating the whole disk to ZFS or none of it. Trust me on this, it gets nasty when you try to do otherwise.

I would make a ZFS pool out of the 2TiB and 1TiB drives. You'll have more spindles, which equates to more IOPS.

Depends on the games. I fit my 200ish Steam games in 950GB. You can also enable ZFS transparent compression.

Okay, so if you want to use the 180GB of free space on the SSD, you'll have to repartition it. Once that space is used, you'll have an extremely difficult time getting it back, so make absolutely sure you're ready to commit to that.

To set up a ZFS pool making use of the raw space of the two large drives, all you need to do is this:

zpool create -f pool-name /dev/sdd /dev/sdb

So, you're mixing up the commands for zpool creation and dataset creation. Remember, a pool is the raw storage (think of it kind of like a disk) and a dataset is a subset of data that's represented as a snapshot, filesystem or raw volume (think of it similarly to a partition of the disk).

So, if you want to mount the root of your dataset on /media/tank, you use the -m flag, which sets the mount point of the pool. This is different from the mountpoint of a dataset. By default, the mountpoint of a dataset is the mountpoint of the pool, followed by the path to the dataset. EX: for tank/home/tatsu, with pool mountpoint of /media/tank, you would have an effective mountpoint of /media/tank/home/tatsu for that dataset if you didn't specify -O mountpoint on the dataset.
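The inheritance rule in that example can be sketched as a small helper. The function name effective_mountpoint is made up for illustration, and it only models the default case where no dataset along the path sets its own mountpoint. On a live system, zfs get mountpoint on the dataset reports the real value.

```shell
#!/bin/sh
# Hypothetical helper: compute the default (inherited) mountpoint of a dataset
# from the pool's mountpoint and the dataset name "pool/path/to/dataset".
effective_mountpoint() {
    pool_mount=$1
    dataset=$2
    case $dataset in
        # Strip the pool name and append the rest of the path.
        */*) printf '%s/%s\n' "${pool_mount%/}" "${dataset#*/}" ;;
        # No slash: this is the pool's root dataset.
        *)   printf '%s\n' "$pool_mount" ;;
    esac
}

effective_mountpoint /media/tank tank/home/tatsu   # prints /media/tank/home/tatsu
```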

Now, let's build you a command that will do what you want.

# zpool create tank -m /media/tank -oashift=12 /dev/sdd1 /dev/sdb1 log /dev/sda2 cache /dev/sda3

-oashift is not really needed, since ZFS will automatically decide what the best option is. It used to be necessary a few years back. If you're using disks with different alignments, it can be used to align to the largest alignment to improve performance across the board.

-m /media/tank gives you your pool mount point. When creating a pool, you don't use -O mountpoint since that is a dataset parameter. It doesn't really make sense to me why they'd use two different parameters for what is functionally the same thing, but that's just the way it is.

Now, on creating your datasets:

Close! Lower case -o and upper case -O are two different things. Uppercase -O is what you want here. It gives you your dataset parameters. Again, it's silly, but once you get these nuances down, you're going to be good.

That should work just fine.

Not sure what this is for. This isn't necessary for the operation of ZFS. Unless you specifically want this dataset for some reason.

Should be good here.

Always good to have a snapshot!

Yeah in the end I don't want to use the SSD that sounds like a bad idea now. You had an SSD dedicated entirely to the windows ZFS partition correct? that is not my case my SSD is the one i boot ubuntu from.

okay but is the fact that I ran :

zpool create -f my-pool /dev/sdd1

problematic here?

I imagine that for the zpool create -f pool-name command to work I need to have both drives in ext4 or both drives in Solaris ZFS, and that one of each (like I currently have) is not going to work.

Must I revert the 1T back to ext4 first? or make the 2T ZFS first?

Sorry for being so slow with this :S

I had an SSD dedicated to ZFS, not just the windows partition. ZFS also hosted other things (/tmp, /var, /usr) on my system.

Honestly, ZFS absolutely RAVAGES SSDs when it comes to data written. I had an SSD on my NAS and after 2 years, it had written about 300TB of data to the SSD. My NAS is 16TB raw... If you don't absolutely need the speed, it's better to not use a cache/log SSD.

Not at all. Let's teach you how to add a drive!

zpool add my-pool /dev/sdx

ZFS is designed to be able to add drives and expand your storage. That's the magic of it! Be aware, though, that you can't remove devices from the pool. Once they're in, if you want to remove them, you need to destroy and rebuild.

Don't worry at all! I feel bad for ignoring you for a week. (I had an insane week at work... new datacenter deployment is a bit crazy when the contractors half-ass their jobs...)

@SgtAwesomesauce Thanks a million, you're by far the best help any forum user has ever given me, and that's saying a lot!

root@ts:/home/t# zpool add my-pool /dev/sdb

root@ts:/home/t# zfs create -Omountpoint=/home tank/home

invalid option 'O'

usage:

create [-p] [-o property=value] ... <filesystem>

create [-ps] [-b blocksize] [-o property=value] ... -V <size> <volume>

For the property list, run: zfs set|get

For the delegated permission list, run: zfs allow|unallow

root@ts:/home/t#

The adding of the ext4 2T to the pool worked like a charm!

However, even with uppercase O the next command is problematic, although the error message is different. I'm tempted to run its suggested commands.

Damn it, I'm slipping!

Let me look back into the docs real quick. the above post was all from memory. It's possible I'm thinking of a different program that has separate option flags.

Let's try running the lowercase -o and see what happens. I was probably wrong above.

root@ts:/home/t# zfs create -omountpoint=/home tank/home

cannot create 'tank/home': no such pool 'tank'

without mountpoint?

Not quite.

It's complaining that it can't find the zpool.

so, use zpool status to find out the name of your pool. From there, replace tank in the command with whatever the pool name is from the status command.
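A small sketch of that lookup, for anyone who wants to script it. Both helper names are hypothetical. They read zpool status output on stdin and rely on the "pool: NAME" line it prints for each pool. On a live system, zpool list -H -o name prints just the names directly.

```shell
#!/bin/sh
# Hypothetical helper: read `zpool status` output on stdin and print each pool name.
pool_names() {
    awk '$1 == "pool:" { print $2 }'
}

# Hypothetical helper: succeed if the pool part of "pool/dataset" appears
# among the pool names found on stdin.
dataset_pool_exists() {
    pool_names | grep -qxF -- "${1%%/*}"
}

# Example usage:
#   zpool status | pool_names
#   zpool status | dataset_pool_exists my-pool/home && zfs create -o mountpoint=/home my-pool/home
```

Checking first avoids the "no such pool" error above when the pool was created under a different name than the one in the command.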

@SgtAwesomesauce

Doh! ofc!

that worked amazing Thanks! On to the Qemu-KVM bit...

WOOP! WOOP!

Weird :

root@ts:/home/t# libvirtd

2017-05-28 07:48:19.090+0000: 5473: info : libvirt version: 2.5.0, package: 3ubuntu5 (Christian Ehrhardt <[email protected]> Tue, 21 Mar 2017 08:02:37 +0100)

2017-05-28 07:48:19.090+0000: 5473: info : hostname: ts

2017-05-28 07:48:19.090+0000: 5473: error : virPidFileAcquirePath:422 : Failed to acquire pid file '/var/run/libvirtd.pid': Resource temporarily unavailable

root@ts:/home/t# status libvirtd

status: Unable to connect to Upstart: Failed to connect to socket /com/ubuntu/upstart: Connection refused

root@ts:/home/t# ps ax | grep libvirtd

2595 ? Ssl 0:00 /usr/sbin/libvirtd

5562 pts/0 S+ 0:00 grep --color=auto libvirtd

root@ts:/home/t# kill -9 2595

root@ts:/home/t# rm -rf /var/run/libvirtd.pid

root@ts:/home/t# status libvirtd

status: Unable to connect to Upstart: Failed to connect to socket /com/ubuntu/upstart: Connection refused

root@ts:/home/t# libvirtd

2017-05-28 07:50:11.363+0000: 5786: info : libvirt version: 2.5.0, package: 3ubuntu5 (Christian Ehrhardt <[email protected]> Tue, 21 Mar 2017 08:02:37 +0100)

2017-05-28 07:50:11.363+0000: 5786: info : hostname: ts

2017-05-28 07:50:11.363+0000: 5786: error : qemuMonitorJSONCheckError:387 : internal error: unable to execute QEMU command 'query-cpu-definitions': The command query-cpu-definitions has not been found

2017-05-28 07:50:11.363+0000: 5786: warning : virQEMUCapsLogProbeFailure:4441 : Failed to probe capabilities for /usr/bin/qemu-system-alpha: internal error: unable to execute QEMU command 'query-cpu-definitions': The command query-cpu-definitions has not been found

Should I not have deleted /var/run/libvirtd.pid?

or maybe it's because i need to make the line in /etc/libvirt/qemu.conf a little bit different from :

nvram = [

"/usr/share/ovmf/ovmf-x86_64.bin:/usr/share/ovmf/ovmf-x86_64-vars.bin"

]

Found this, but disabling IOMMU wouldn't work for me, right?

another potential solution :

"

I was able to get through this error by doing 'brew unlink qemu' and

then creating a symlink only for $(brew --prefix qemu)/bin/qemu-system-x86_64

"

booooop bop.

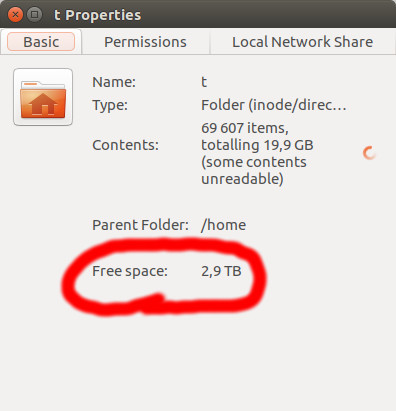

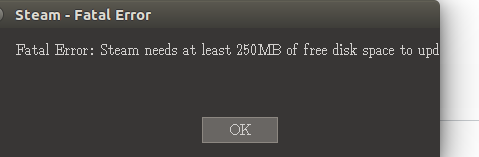

I have a new issue that makes very little sense to me. When I run Steam I get:

t@ts:~$ steam

ILocalize::AddFile() failed to load file "public/steambootstrapper_english.txt".

[2017-05-30 19:09:30] Startup - updater built Nov 23 2016 01:05:42

[2017-05-30 19:09:30] Verifying installation...

[2017-05-30 19:09:30] Unable to read and verify install manifest /home/t/.steam/package/steam_client_ubuntu12.installed

[2017-05-30 19:09:30] Verification complete

[2017-05-30 19:09:30] Downloading Update...

../tier1/fileio.cpp (3186) : Assertion Failed: Failed to determine free disk space for ., error 75

[2017-05-30 19:09:30] Error: Steam needs at least 250MB of free disk space to update.

[2017-05-30 19:09:30] Error: Steam needs to be online to update. Please confirm your network connection and try again.

[2017-05-30 19:09:52] Shutdown

threadtools.cpp (3283) : Assertion Failed: Illegal termination of worker thread 'Thread(0x0x576b3530/0x0xefd7db'

cat: '/home/t/.steam/ubuntu12_32/steam-runtime.tar.xz.part*': No such file or directory

tar: This does not look like a tar archive

xz: (stdin): File format not recognized

tar: Child returned status 1

tar: Error is not recoverable: exiting now

find: ‘/home/t/.steam/ubuntu12_32/steam-runtime’: No such file or directory

t@ts:~$

Sorry, Memorial day weekend turned into track weekend. Took the roadster up to willow springs and destroyed tires for a few hours.

You shouldn't have. First things first: don't manually start libvirtd. Use the systemd service like so:

systemctl status libvirtd and systemctl start libvirtd. To enable start on boot: systemctl enable libvirtd

Ubuntu moved to systemd recently, so the old Upstart status command is no longer the way to check daemon status.
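If you're not sure which init system a box is running, here's a rough sketch of a check. The helper names init_system and service_state are made up. The /run/systemd/system test is the one the sd_booted(3) man page describes, and systemctl is-active is a standard systemctl subcommand.

```shell
#!/bin/sh
# Hypothetical helper: report whether this machine was booted with systemd.
# The /run/systemd/system directory check is the one sd_booted(3) documents.
init_system() {
    if [ -d /run/systemd/system ]; then
        echo systemd
    else
        echo other
    fi
}

# Hypothetical wrapper: only ask systemctl about a service on a systemd host.
service_state() {
    if [ "$(init_system)" = systemd ]; then
        systemctl is-active "$1"
    else
        echo "not a systemd host; use your init system's own tool" >&2
        return 1
    fi
}

# Example usage:
#   service_state libvirtd
```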

This line should be good as is. It's just a config, and it's 100% correct syntax.

First things first, are all your packages up to date? If not, do that and see if that's fixed it.

If not, let's start looking at why we're getting this error:

../tier1/fileio.cpp (3186) : Assertion Failed: Failed to determine free disk space for ., error 75

Most likely, there's something going on with ZFS that steam isn't happy with. Let's try starting steam with the following command:

STEAM_RUNTIME=0 steam
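It's also worth checking what the system itself reports as free space where Steam lives. On Linux, errno 75 is EOVERFLOW ("Value too large for defined data type"), which would fit a program being handed a filesystem size it can't represent. That's an inference, not something confirmed in this thread. A minimal sketch, with a made-up helper name free_mb:

```shell
#!/bin/sh
# Hypothetical helper: print the free space, in whole megabytes, of the
# filesystem that holds the given directory.
free_mb() {
    # -P forces the one-line-per-filesystem POSIX format, -k reports 1024-byte blocks;
    # the fourth column of the second line is the available space.
    df -Pk "$1" | awk 'NR == 2 { print int($4 / 1024) }'
}

# Example usage:
#   free_mb "$HOME/.steam"
```

If this prints a number far above 250, the "needs at least 250MB" message is about Steam failing to read the value rather than the disk being full.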