Conventional wisdom is that AMD intentionally punted on consumer/gaming graphics to focus on EPYC and enterprise GPUs, where the margins are higher. So their showing in gaming was a “brave face” put on by sales guys left swinging in the wind by an intentional and rational, but painful, shift away from the obnoxious and perennially low-margin gaming GPU market.

Area is area though… every square inch of die space is opportunity cost. In a market where Intel is scrambling and chewing up 14nm fab lines all over the place…

Didn’t their Zen 1 core and cache use less space than Intel’s? Not sure, it’s been some time since it came out.

That may be the case, but they are also fighting Nvidia not just for gaming… but for AI learning. If Navi can be great for gaming, or at least give an option at a reasonable price, and slice into some of the AI market, you would have both divisions swinging upwards at the same time, which is a huge boon to the bottom line.

I still would like to see AMD get out of debt, which, judging by everything they have announced, is quite possible in the next couple of years.

Ditto on finally and permanently getting to a point where they can invest broadly in themselves and present a better long-term competitor to Intel.

Found it: the core is smaller, but the SRAM is bigger.

https://www.eteknix.com/amd-zen-smaller-core-size-intel-skylake/

Yep, L3 cache density on the GloFo process is better than what Intel can make (relatively speaking). Curious really, I can’t think of an obvious reason why that is.

Edit: I think the cores are smaller because of the new “from the ground up” architecture.

I think Navi doing well AND being able to meet all supply demands both for gaming and enterprise is critical… they can’t continue to have one division zooming while the other is meh.

They also use a different kind of library and an “automated” layout (more compact), whereas Intel still draws their layouts by hand.

Interesting, I didn’t know that.

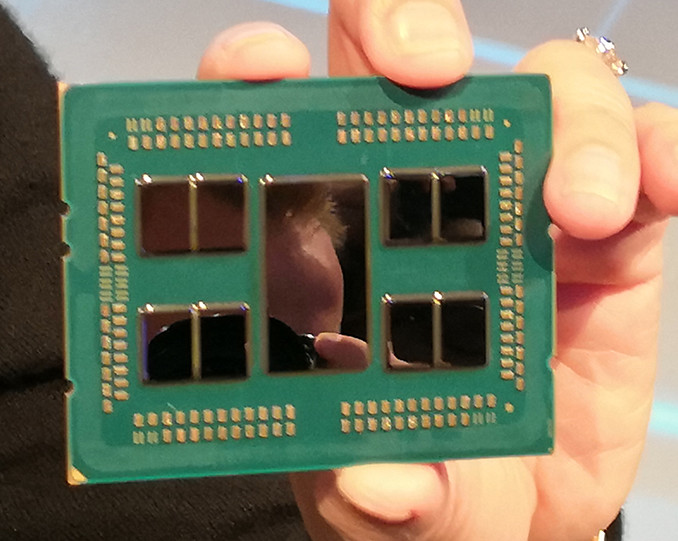

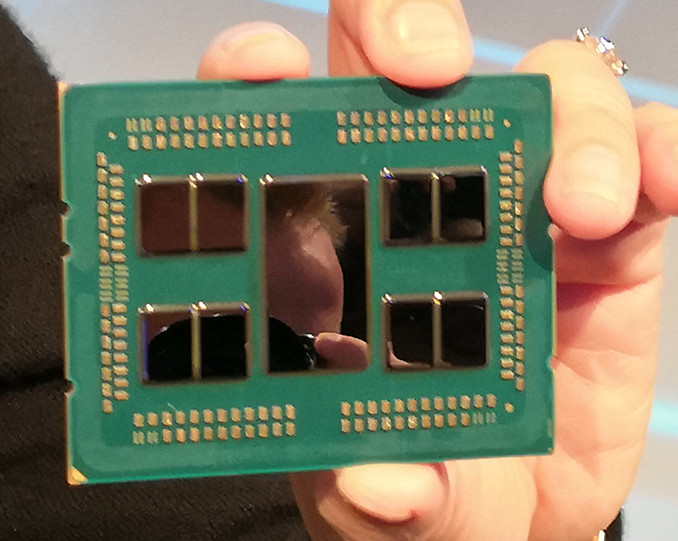

Does anyone know what the cache layout on the Rome processors is supposed to be? Like L1 and L2 on the 7nm dies and L3 on the IO die?

They actually can continue that way, and it’s proven to be a good business model for now. The thing is, AMD is still strapped for cash compared to their competitors in both markets (and they are competing in both the CPU and GPU markets, which is difficult). So they are still building things up in the one that seems like it will have the best gains, while allowing the other to do OK and kinda keep up for certain things. They aren’t really trying for the high-end market; they are fine if they can get server markets (the new GPUs they announced are for server workloads/machine learning and are not meant for consumer markets) and a bit of the mid range to keep things alive.

Navi may actually be designed for some other project (PS5? Apple?), as they already have a big buyer for that stuff, which is what they seem to have decided to focus on from what I can tell (again, because they are short on money otherwise). That said, Navi may be great, particularly at a certain price point. I’m not sure they will care much about beating Nvidia at the top end any time soon though, which is fine since mid-range is where more sales are anyway (and, for me, what I could afford anyway).

That’s what I suspect, plus a couple of different I/O chips for the 3 classes.

I can agree to a point… but it’s tough to swallow when Cray announces a new supercomputer with EPYC CPUs but has to go to Nvidia for GPUs. You have to think that if Vega or Navi had been better products, an entire AMD supercomputer solution would have been a huge money maker.

Do you really think there will be several different IO chips? The tooling for those chips is f-ing expensive!

Also, does that mean the next generation Ryzen CPUs need different dies again, because they need to add L3 cache and IO? I am a bit confused how this is supposed to work from a cost/benefit point of view.

The reality of the ML market is that CUDA still rules the roost. It’s not just a hardware problem. It’s a software ecosystem problem as well.

All the cores will be the same, so 1x tooling on 7nm, and I wonder if the I/O chip will fit in an AM4 package? It’s all pure speculation at the moment.

Just look at the size of the I/O chip, will that fit on AM4?

Not sure that this is cost effective but you are right, we are all speculating right now. Interesting nonetheless.

It will probably fit, but it would be way overkill and therefore not make a lot of sense.

Anyone have an AM4 proc to compare against a Threadripper proc, to size up whether the I/O chip fits in it? @wendell perhaps?

I am trying to find numbers on Rome’s die sizes. The PCB should be the same size as current Epyc, right?

It’s a drop-in replacement game at AMD, so it must fit.