That’s what I get for posting when I haven’t slept in 2 days.

I could have sworn you were trying to achieve 20M IOPs via RAID, which is tough with NVMe. I went back and re-read the thread and see you’re talking about all-out IOPs.

When you mention Jens, I’m assuming you mean Jens Axboe, correct? If so, there are a couple of things to keep in mind. This is not meant to take anything away from his accomplishments.

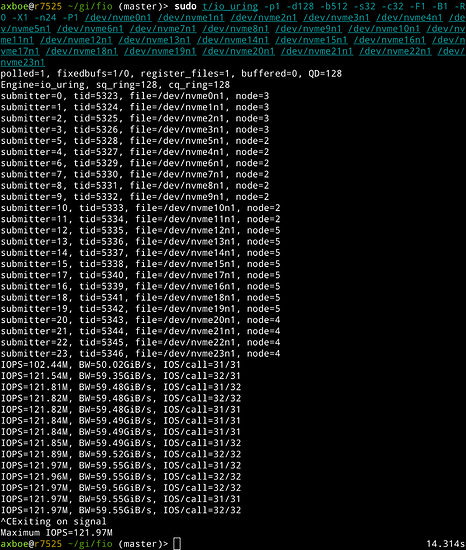

He’s using 24 Optane P5800X drives in a special setup. His version of an IOP isn’t what most of us would test against for real-world use. To me, an IOP is 4K unless otherwise specified, since that’s the standard.

The P5800X is rated at up to 1.55M random 4K read IOPs. It also has a special mode, meant for metadata, that allows 512B I/O and delivers 5M IOPs according to Intel literature.

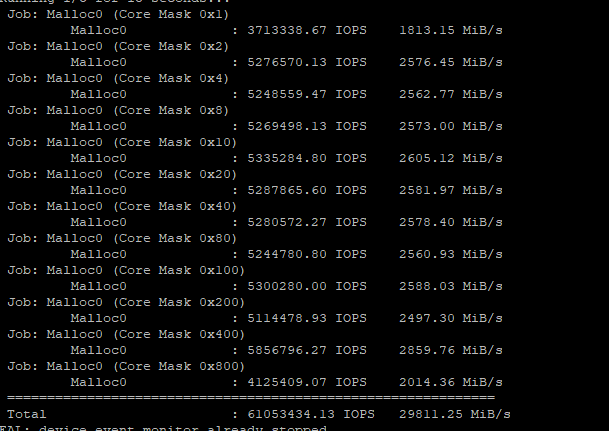

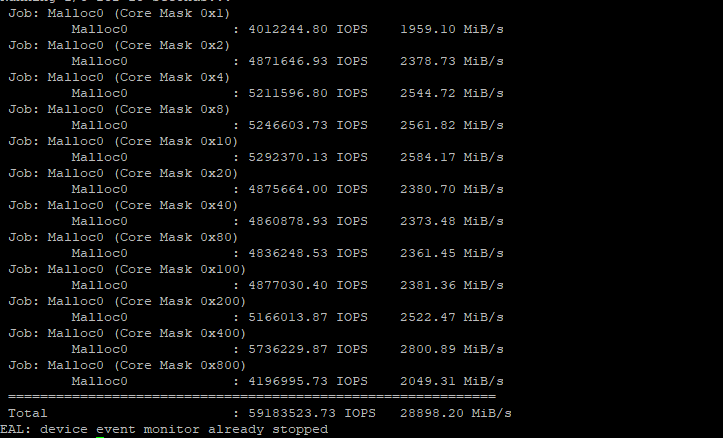

Back on Aug 1st Jens posted that he’s using 24 drives, each doing 5,080K IOPs, and said the 122M IOPs was just linear scaling. https://twitter.com/axboe/status/1554115250588471297

24 x 5,080K = 121,920K, or about 122M, so that’s about as linear as you’ll get. The problem I have with the numbers is that each I/O is 512B, 1/8 the size of the 4K that is typically used, so there is a lot less bandwidth going on.

When he tested the same drives using 4K he got 31.8M IOPs, which is closer to how we’re used to comparing IOPs. Don’t get me wrong, that’s a super number in its own right, but the test is essentially assigning different drives to different cores, using enough cores to be able to read the data using polling. Each read is followed by a No-Op, so it’s only doing a small amount of work compared to real usage, such as loading the data into memory to be used or sending it across the network to a client workstation. It’s not representative of database IOPs, for example.
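To put rough numbers on the bandwidth point, here is a quick back-of-the-envelope sketch in Python. It only uses the figures quoted in this post and treats the per-drive figure as 5,080K IOPs, which is what the 122M total implies. The block sizes (512 and 4096 bytes) and decimal GB are my assumptions, and it counts payload only, with no protocol overhead.

```python
# Figures quoted in this post; block sizes in bytes are an assumption.
DRIVES = 24
PER_DRIVE_512B_IOPS = 5_080_000   # 5,080K per drive, implied by the 122M total
TOTAL_4K_IOPS = 31_800_000        # 4K result on the same drives

def bandwidth_gb_per_s(iops, block_bytes):
    """Payload bandwidth in decimal GB/s for a given IOPS and block size."""
    return iops * block_bytes / 1e9

# Linear scaling check: per-drive IOPS times drive count.
total_512b_iops = DRIVES * PER_DRIVE_512B_IOPS

bw_512b = bandwidth_gb_per_s(total_512b_iops, 512)
bw_4k = bandwidth_gb_per_s(TOTAL_4K_IOPS, 4096)

print(f"512B: {total_512b_iops / 1e6:.2f}M IOPS, {bw_512b:.1f} GB/s")
print(f"4K:   {TOTAL_4K_IOPS / 1e6:.2f}M IOPS, {bw_4k:.1f} GB/s")
```

So the 122M figure works out to roughly 62 GB/s of data, while the 31.8M 4K run moves roughly 130 GB/s. About a quarter of the IOPs, but about twice the data.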

So I think that’s important to keep in mind if you’re trying to compare your own system’s IOPs from actual use to this testing. Again, I’m not trying to downplay the work he’s doing, because it’s good stuff.

I mention this since I think you were doing 4K IOPs testing. If I’m correct on that, and you had tested the same way using 512B sectors, you would likely have passed 20M IOPs pretty easily.
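As a rough sanity check on that, here is a small Python sketch of how many 4K IOPs would map to 20M at 512B. It assumes the 512B-to-4K ratio carries over to your system, which only holds if you’re limited by the drives and not by CPU or PCIe. The two ratios come from numbers already in this post (122M vs 31.8M from Jens’s runs, 5M vs 1.55M from the Intel ratings); the function name is just something I made up for illustration.

```python
TARGET_512B_IOPS = 20_000_000

# Ratios of 512B to 4K IOPS, from figures quoted in this thread.
observed_ratio = 122_000_000 / 31_800_000   # Jens's 24-drive results
rated_ratio = 5_000_000 / 1_550_000         # Intel's per-drive ratings

def needed_4k_iops(target_512b_iops, ratio):
    """4K IOPS that would map to the target, ASSUMING the ratio carries over."""
    return target_512b_iops / ratio

for name, ratio in (("observed", observed_ratio), ("rated", rated_ratio)):
    needed = needed_4k_iops(TARGET_512B_IOPS, ratio)
    print(f"{name}: ratio {ratio:.2f}x, need about {needed / 1e6:.1f}M 4K IOPS")
```

Under those assumptions, somewhere around 5M to 6M IOPs at 4K would already be in 20M territory at 512B.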

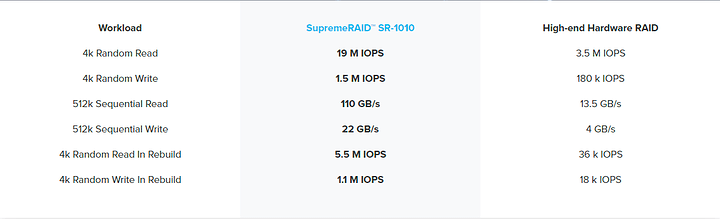

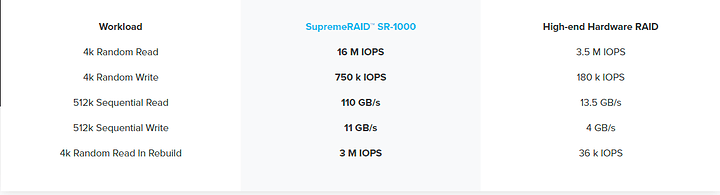

As touched on in my first message, these IOPs, from my understanding, are just individual drives paired with cores. The drives aren’t part of any pool or RAID group. This to me is a more fundamental issue. Right now there aren’t any decent solutions for high-output RAID of NVMe using software or common tri-mode adapters. The SupremeRAID board is the only device I’ve seen that does a decent job of giving us some good bandwidth and IOPs numbers when joining NVMe drives.

The hardware for the SR-1000 and SR-1010 appears to be normal NVIDIA GPUs. The SR-1000 reports itself as a PNY Quadro T1000. The SR-1010 appears to be an NVIDIA RTX A2000. Neither is a very high-end GPU by today’s standards.

It’s interesting that you’re buying a solution for close to $2500 that comes with a $300-$400 GPU and software RAID. Of course, they are offloading RAID calculations and other functions to the GPU. StorageReview mentioned they are also using AI functions of the GPU.

I think it’s just a matter of time before we see something like this done open source. That could benefit mdadm and ZFS equally.

I think they’ve shown GPU use to be worth doing.