Oh, that’s rather interesting. There are a few reasons, mostly bugs and some missing features.

We’re on OpenNebula 5.6.1. When you tick some VMs in the web interface (this applies to any of the checkboxes) and then deselect them, the interface may say that only 1 box is selected, yet the action you pick (shutdown / terminate / undeploy etc.) may or may not also be applied to the VMs that were previously selected. To be sure no other VM is selected, we have to open each VM’s own page in the web interface and run actions only from there. That’s probably the only really risky bug we found, and it was pretty terrible: one DB got corrupted because it wasn’t stopped before its VM was terminated (thank god for backups).

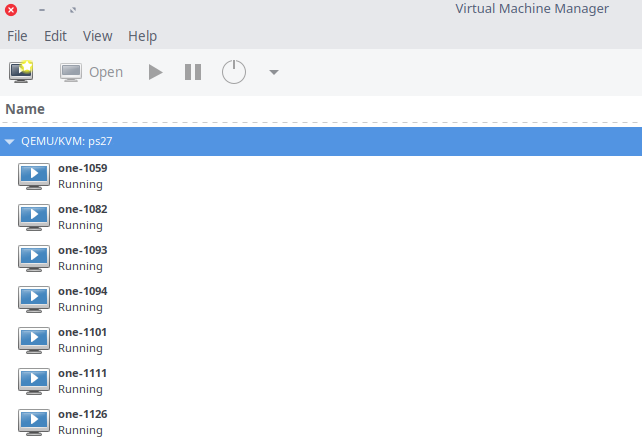

Anyway, both my colleagues have worked with Proxmox, and I went along with them because it’s KVM in the back, so I thought the migration would be pretty easy. (I had only used XenServer and Hyper-V before coming here, so migrating to XS, for example, would obviously have been more of a hassle.)

Another neat thing about Proxmox is that documentation seems to be everywhere, while for OpenNebula the official docs are the only good source of information. Also, OpenNebula is way harder to manage. It requires more fiddling with the terminal, and while none of us are afraid of the terminal, there is quite a lot to read up on, and management is putting a little pressure on us to improve the infrastructure and the workflow without spending too much money (I know, typical management of the 21st century). Given our requirements, Proxmox’s live cloning looked like a tremendously helpful feature: we get requests to clone VMs as they are and reconfigure the clones, but doing that on our current setup requires the VMs to be shut down, and downtime is not really acceptable.

Also, from what I remember (I didn’t study OpenNebula too much), if the main server that OpenNebula is configured on goes down (unexpected crash, corruption or something), the VMs on the other hosts will be fine, but we don’t have a fallback / failover for the master server (the one the web interface runs on). That means we would have to recreate / import the machines on a reinstalled server or restore an earlier backup. I might not be explaining this too well, so let me give an example:

- Main OpenNebula server is Server1

- Other hosts that have been added in OpenNebula are servers 2 to 11

- If Server1 fails, the KVM VMs on servers 2 to 11 will be fine, but we won’t be able to manage them from the web interface until we recreate Server1 or restore it from a previous backup

I hope that does it.

From what I understood, if every host is running Proxmox and Server1 crashes, the cluster can still be managed from one of the remaining servers.

Another pro of Proxmox’s live cloning comes from our poor infrastructure. We have some SANs without hotswap (older HP ProLiant MicroServers), and if a drive in one of their RAID arrays fails, we have to shut down all the VMs running on said SAN, power it off, replace the bad HDD, then power the SAN and the VMs back on. This wouldn’t be a problem if we had hotswap. With Proxmox, when a drive fails, we can just live clone the VMs to another storage and run them from there with a lot less downtime.
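For what it’s worth, here is a rough sketch of how evacuating a failing SAN could be scripted. It assumes the job is done with Proxmox’s `qm move_disk <vmid> <disk> <storage>` command (a disk move to another storage rather than a full VM clone, which is my assumption about what fits this case). The VM IDs, disk names and the storage name `san2-lvm` are made up for illustration. The script only builds and prints the commands, it does not run anything.

```python
def plan_disk_moves(vm_disks, target_storage, delete_source=False):
    """Build one `qm move_disk` command per (vmid, disk) pair.

    vm_disks maps a VM ID to the list of its disk keys (e.g. "scsi0").
    Nothing is executed here; the caller decides what to do with the plan.
    """
    commands = []
    for vmid, disks in sorted(vm_disks.items()):
        for disk in disks:
            cmd = ["qm", "move_disk", str(vmid), disk, target_storage]
            if delete_source:
                # Ask Proxmox to remove the original disk after a successful copy.
                cmd += ["--delete", "1"]
            commands.append(cmd)
    return commands


if __name__ == "__main__":
    # Hypothetical inventory: VM IDs and disks living on the failing SAN.
    vm_disks = {101: ["scsi0"], 102: ["virtio0", "virtio1"]}
    for cmd in plan_disk_moves(vm_disks, "san2-lvm"):
        print(" ".join(cmd))
```

Printing the plan first and running it by hand (or via `subprocess.run`) seems like the safer choice, given how our last unattended bulk action went.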

^This is a part of what I said in the OP with

Anyway, there are more neat things about Proxmox, but I’m a newbie to both OpenNebula and Proxmox, so I can’t remember / don’t know what else there was. Depending on your infrastructure, OpenNebula might make sense. We are mainly migrating for some features and ease of administration.