Hi,

This is my first time using NVMe drives that aren’t directly connected to the CPU’s PCIe lanes, so I hope I’m not making a rookie mistake. This setup is for experimentation only.

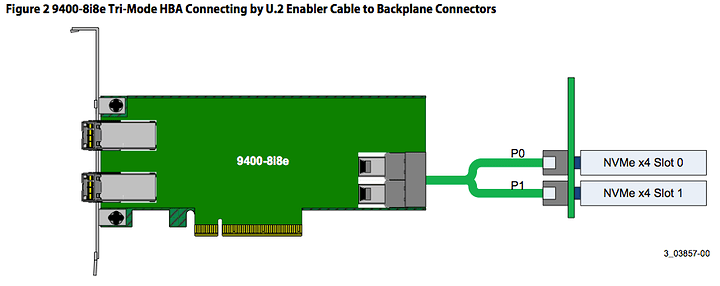

I have a Broadcom HBA 9400-8i8e Tri-Mode controller that supports SAS, SATA and NVMe drives (NVMe on the internal connectors only).

The firmware is up to date. It is the “Phase 11” firmware package, which consists of 3 separate files. The main firmware file is HBA_9400-8i8e_Mixed_Profile.bin, NOT HBA_9400-8i8e_SAS_SATA_Profile.bin, which is for SAS/SATA-only use.

I use two 0.5 m cables (SFF-8639 to U.2) to connect the Intel Optane 905Ps. Unfortunately, I don’t have another system with U.2 ports at hand to cross-check the cables, but they look fine, and two new cables both being defective seems rather unlikely.

The 905Ps work fine when connected directly to the motherboard via the M.2 adapter bundled with the Optanes, using the same SATA power cables as in the SFF-8639/U.2 setup.

The HBA doesn’t detect any devices during boot, so I guess the problem isn’t related to the operating system (Win 10 Pro x64 1903, clean install from the public ISO). The Optanes get warm to the touch as expected, so at least they seem to be getting power.

The storcli64 command-line tool doesn’t detect any devices connected to the controller either.

Connecting SAS or SATA devices to the HBA’s internal connectors works just fine.

Is there any obvious mistake on my end?

I’ve tried:

- Reflashing the firmware

- Power cycling

- Swapping the cables between connectors

Thanks for any advice! FYI, I don’t intend to use the Optanes with the HBA. In the near future I want to get NVMe NAND drives for it, since the intended system has no PCIe lanes left, doesn’t support PCIe bifurcation (X99), and already has the HBA installed for its external connectors.

Regards,

aBavarian Normie-Pleb