One other thing I’d suggest, but probably more applicable to racks with side panels - get a “wide” variant that has space for cable management down the sides. Because if you’re mixing servers and network stuff in the same rack, you’re going to run the cables up and down the edge one way or another. If it isn’t a “wide” rack then there’s nowhere to fit them neatly.

Less important with an open rack of course as you can just route them on the outside but…

I’d also personally go for 48 RU height instead of 42, because the cost difference is minimal and, despite what the business says, you will most likely end up running “build generation 1” equipment alongside “build generation 2” equipment during a cutover phase. i.e., your rack space requirement within 3-5 years is likely to grow by, say, 50% over and above what it is today. At least that much, even if you’re only replacing your kit piecemeal as it goes EOL.

And/or you will need more equipment (for future applications) at some point than you think.
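To put rough numbers on that, here’s a quick Python sketch. The 30 RU starting figure is made up purely for illustration, and the 50% growth is just my rule of thumb rather than anything measured, so plug in your own values.

```python
import math


def projected_ru(current_ru: int, growth: float = 0.5) -> int:
    """Rack units needed after growth, rounded up to whole RU."""
    return math.ceil(current_ru * (1 + growth))


def headroom(rack_ru: int, current_ru: int, growth: float = 0.5) -> int:
    """Spare RU left after growth; negative means the rack is too small."""
    return rack_ru - projected_ru(current_ru, growth)


# Hypothetical example: 30 RU in use today, compared across both rack heights.
for rack in (42, 48):
    print(rack, headroom(rack, 30))
```

With those assumed numbers the 42 RU rack ends up 3 RU short during the cutover, while the 48 RU rack still has 3 RU spare.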

I’ve been dealing with small enterprise/local site deployments for about 20 years and it always happens… sure, this time “the cloud” might help, but the fact that they’re building their own room with NAS boxes today indicates to me they’re either not cloud focused or have a local workload that is unsuitable.

Definitely compare costs on your various options and, if you can bear the difference, go large. Your future self will thank you for it.

No one wants to de-rack and re-rack everything on a weekend because you were a few RU short.

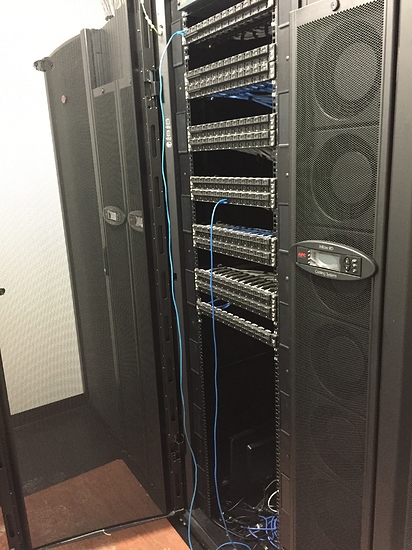

e.g., here’s my current setup (old photo I found) whilst being assembled…

(intended to somewhat show the wide rack with the holes/covers down the side for cable routing/etc. - there are cable guides fitted down the sides after this)

Also pictured are the APC chiller units. Basically I have a cold-aisle/hot-aisle setup and the hot side is sealed… air is pumped out into the room, cooled by the chillers, and sucked back in via the server/switch fans. Sideways-fan stuff (e.g. Cisco 4506/4507 side fans) gets fed via an APC front-to-side routing fan/shroud.

edit:

Oh yeah, that’s only part of the room. There are 9x 48 RU racks in there and 6 chillers…

3 for network distribution, 1 network core, 1 storage, 1 VM host, 2 for UPS and 1 for test/dev…