Oh, Apple is… well, Apple.

Most likely a license issue.

It’s a GPL-compatible license. Should be fairly open…

Apple is allergic to GPL3. It’s why bash is still version 3 and they switched the default shell to zsh. Some other utilities are super old for that reason as well.

I mean, GPL3 is a pretty toxic license.

No better motivation than procrastination. I have a deadline on Thursday, but decided to do this most of the day instead:

So can I ditch Backblaze and switch to you?

How much space you need?

~200 GiB critical ← this is about ~$1.50 / month with BB.

~16 TiB non-critical ← only resides on local server. (media collection)

If my house burnt down I’d lose all that. Thinking about getting a safe deposit box with a couple hard drives in it.

I can definitely do that but ask me next year when I (hopefully) have all of this on solid ground.

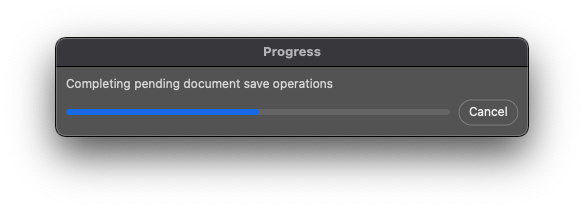

I think I can attribute literally thousands of dollars of income over the past decade to Photoshop’s inability to multitask during open and certain save tasks (when charging hourly).

Becoming good at using widely adopted, yet poorly designed software has been my Faustian path to meagre success.

@SgtAwesomesauce why no worky…

This dumps MAC addresses from dmesg as I would expect it to:

    sudo dmesg | grep -o "\([0-9a-fA-F]\{2\}[:-]\)\{5\}[0-9a-fA-F]\{2\}"

However, this prints only the 5th portion of each MAC address:

    - debug:
        msg: "{{ dmesg_reg['stdout']
          | regex_findall('([0-9a-fA-F]{2}[:-]){5}[0-9a-fA-F]{2}') }}"

    TASK [debug] *********************************************************************************************************
    ok: [localhost] => {
        "msg": [
            "18:",
            ...

What am I not understanding about Python regex? It’s something with the parentheses. If I expand out the (){5} it works. I tried throwing some backslashes in there but no dice.

regex_search works as expected also, but of course, only returns one value.

This fixes it but I don’t know what ?: actually does…

    regex_findall('(?:[0-9a-fA-F]{2}[:-]){5}[0-9a-fA-F]{2}')

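(?:...) is a non-capturing group: it groups multiple tokens together so the {5} applies to all of them, but without creating a capture group, so the whole thing is treated as one match.

It looks like regex_findall is only returning the last repetition of the capture group, for some reason.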

Ansible-ism?
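For what it's worth, this looks like plain Python `re.findall` behaviour rather than anything Ansible-specific (assuming `regex_findall` passes the pattern straight through to `re.findall`, which is my understanding). When a pattern contains exactly one capturing group, `findall` returns that group's text instead of the whole match, and a repeated group only keeps its last repetition. A minimal sketch with made-up MAC addresses standing in for the dmesg output:

```python
import re

# Made-up sample text standing in for dmesg output.
text = "eth0: 18:c0:4d:aa:bb:cc up, wlan0: 00-1A-2B-3C-4D-5E up"

capturing = r"([0-9a-fA-F]{2}[:-]){5}[0-9a-fA-F]{2}"
non_capturing = r"(?:[0-9a-fA-F]{2}[:-]){5}[0-9a-fA-F]{2}"

# One capturing group: findall returns the group, and a repeated
# group only remembers its final (5th) repetition.
last_octets = re.findall(capturing, text)
print(last_octets)  # ['bb:', '4D-']

# No capturing groups: findall returns the full match.
full_macs = re.findall(non_capturing, text)
print(full_macs)  # ['18:c0:4d:aa:bb:cc', '00-1A-2B-3C-4D-5E']

# Alternative: keep the capturing group but ask for the whole match.
via_finditer = [m.group(0) for m in re.finditer(capturing, text)]
print(via_finditer)  # ['18:c0:4d:aa:bb:cc', '00-1A-2B-3C-4D-5E']
```

That would also explain why `regex_search` looked fine: it hands back the whole match, not the group.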

I ran into a funny issue with Ansible where I had an absurd sequence of block/rescue.

    - block:
        - block:
            - block:
                - block:
                    - block:
                        - stuff:
                      rescue:
                        - other stuff
                  rescue:
                    - more stuff
              rescue:
                - even more stuff
          rescue:
            - you get it
    ...

It worked, but the output was wonky. When I ran the role against multiple hosts in parallel, they were reporting under the wrong task.

    TASK [not the task that's actually ran] ****************************
    ok: [host]

I noticed it at first because I was getting assert tasks marked as changed, which is impossible. Then I realized I had hosts allegedly running tasks that the logic shouldn’t have allowed them to (a Rocky client identifying as FreeBSD, for instance). BUT the final results (actual effects on the hosts) indicated that the tasks all executed as they should.

Probably a bug, but I just refactored using when instead of block/rescue and all good now.

Also, fresh commits to my Ansible project, but I haven’t updated the readme yet, so official update on my thread for it is forthcoming.

This will bootstrap a host even if it doesn’t have Python installed. If Ansible is using root, it will create a randomish admin user (the seed is based on hardware specs, so it’s idempotent) and use that instead (you can configure it to keep using root if you want).
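To illustrate the "randomish but idempotent" idea: the project's actual method isn't shown here, so the following is only a hypothetical sketch in Python. The function name `admin_username`, the `adm_` prefix, and the choice of SHA-256 are all assumptions of mine. The point is just that hashing stable hardware facts gives a name that looks random but comes out the same on every run against the same host.

```python
import hashlib


def admin_username(hardware_facts: dict, prefix: str = "adm_", length: int = 8) -> str:
    """Derive a stable, random-looking username from hardware facts.

    Hypothetical helper: the facts used and the hashing scheme are assumptions,
    not the project's real implementation.
    """
    # Sort the keys so the seed does not depend on dict ordering.
    seed = "|".join(f"{key}={hardware_facts[key]}" for key in sorted(hardware_facts))
    digest = hashlib.sha256(seed.encode("utf-8")).hexdigest()
    return prefix + digest[:length]


# Same facts (in any order) give the same name on every run.
first = admin_username({"product_serial": "ABC123", "architecture": "x86_64"})
second = admin_username({"architecture": "x86_64", "product_serial": "ABC123"})
print(first == second)  # True
```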

Compatible with (although not completely tested):

- Arch

- Debian

- Ubuntu

- RHEL Family V7

- RHEL Family V8

- Fedora

- FreeBSD

- OpenBSD

- macOS

so… idk if it would work in your situation…

but i like to use includes to have my block looped until it works…

example…

bkgrnd:

main playbook will get to point where it will install patches…

had some trouble with katello-agent… and epel…

so setup this to get it to retry a few times…

    # cat tasks/task-os-patching.yaml
    - name: Block task OS patching with alt method
      block:
        - name: Set the retry count
          set_fact:
            retry_count: "{{ 0 if retry_count is undefined else retry_count|int + 1 }}"

        - name: Lets check to see if there are updates available
          shell: yum check-update
          args:
            warn: no
          register: yum_result
          # yum check-update exits with 100 when updates are available,
          # so only treat other non-zero exit codes as a failure
          failed_when: yum_result.rc not in [0, 100]

        - name: Just update all the things if exit code is 100
          yum:
            name: '*'
            state: latest
          when: yum_result.rc == 100
          notify: RebootServer

        - pause:
            seconds: 3

        - name: Run script that will generate patch audit files
          shell: /home/oper/scripts/system_was_patched.sh
          args:
          register: patchaudit

        - name: view the patch audit script results
          debug:
            var: patchaudit

        - name: Force all notified handlers to run at this point, not waiting for normal sync points
          meta: flush_handlers

        - pause:
            seconds: 3

      rescue:
        # Give up once the counter reaches 3; otherwise try the alternate method
        - fail:
            msg: Ended after 3 retries
          when: retry_count|int == 3

        - debug:
            msg: "Failed to get updates installed on first try... try alternate method. "

        - pause:
            seconds: 4

        - name: remove known package that has conflict
          yum:
            name:
              - qpid-proton-c
              - katello-agent
            state: absent

        - name: Just update all the things
          yum:
            name: '*'
            state: latest
          notify: RebootServer

        - pause:
            seconds: 3

        - name: reinstall the katello agent package
          yum:
            name: katello-agent
            state: installed

        - name: Run script that will generate patch audit files
          shell: /home/oper/scripts/system_was_patched.sh
          args:
          register: patchaudit

        - name: view the patch audit script results
          debug:
            var: patchaudit

        - name: Force all notified handlers to run at this point, not waiting for normal sync points
          meta: flush_handlers

        - pause:
            seconds: 3

        # Re-include this same file, which bumps retry_count and runs the block again
        - include_tasks: tasks/task-os-patching.yaml

        - debug:
            msg: alternate method completed....

and then i have another one that will retry until a vm reaches the desired state…

while it’s waiting for the vm to power on or off in ESXi/vCenter

    # cat task-wait-until-vm-state-desired.yaml
    - name: Block task loop until vm power state reach desired
      block:
        - name: Set the retry count
          set_fact:
            retry_count: "{{ 0 if retry_count is undefined else retry_count|int + 1 }}"

        - name: Look up the VM state called {{ vm_name_here }} in the inventory
          include_tasks: task-lookup-all-vms.yaml

        - name: Set fact info about vm state
          set_fact:
            my_vm_state: "{{ item.power_state }}"
          with_items:
            - "{{ all_vms.virtual_machines | to_json | from_json | json_query(query) }}"
          vars:
            query: "[?starts_with(guest_name, '{{ vm_name_here }}' )]"
            query_old: "[?guest_name=='{{ vm_name_here }}']"

        - debug:
            var: my_vm_state

        - pause:
            seconds: 5

        - name: see the vm state after attempted change via vmtoolsd
          debug:
            var: my_vm_state
          failed_when: my_vm_state != desired_state

      rescue:
        - fail:
            msg: Ended after 8 retries
          when: retry_count|int == 8

        - debug:
            msg: "Failed to get desired VM state of {{ desired_state }} - Retrying..."

        - pause:
            seconds: 10

        - include_tasks: task-wait-until-vm-state-desired.yaml
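For anyone trying to follow the control flow of these self-including task files, here is a rough Python sketch of the same pattern. It is not Ansible code, and the names `run_with_retries`, `attempt`, and `on_failure` are made up for illustration: `attempt` stands in for the `block`, `on_failure` for the recovery steps in `rescue`, and the loop for the recursive `include_tasks`.

```python
def run_with_retries(attempt, max_retries, on_failure=None):
    """Call attempt() until it succeeds, giving up after max_retries retries.

    Mirrors the block/rescue files: the counter starts at 0, the "rescue"
    branch fails hard once the counter equals max_retries, and otherwise
    runs the recovery step and goes around again with the counter bumped.
    """
    retry_count = 0
    while True:
        try:
            return attempt()  # the "block" section
        except Exception as exc:  # the "rescue" section
            if retry_count == max_retries:
                raise RuntimeError(f"Ended after {max_retries} retries") from exc
            if on_failure is not None:
                on_failure()  # e.g. pause, or apply the alternate method
            retry_count += 1  # what re-including the file does via set_fact


# Usage: a stand-in task that fails twice, then succeeds.
calls = {"n": 0}


def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ValueError("not ready yet")
    return "ok"


result = run_with_retries(flaky, max_retries=3)
print(result, calls["n"])  # ok 3
```

One thing the sketch makes visible: the first run is attempt 0, so a limit of 3 means up to four attempts in total. Also, in the playbook version `retry_count` is a host fact that sticks around, so it may need resetting if the same file is used more than once in a play.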

I haven’t run into any issues like this yet, but I’m sure I will. I’ll keep this in my back pocket for when I do.

There is an until/retries loop in Ansible for individual tasks, but I’m not sure you can use it on a block or on an include_tasks, so not sure how useful it would be in your case?