I recently purchased two BD790i motherboards from Minisforum. Neither will hold its CMOS settings for more than 5 minutes without AC power connected. Has anyone else seen this issue? I’ve tried multiple different PSUs, and I’ve taken voltage readings from the battery, the battery connection pins and the CMOS clear switch, which all read the expected 3.3 V, so the battery isn’t bad. Any ideas? I contacted support, but they just jumped to RMA, thinking the CMOS battery is dead, based on some Chinese I translated from their email. I don’t want to RMA these if this is a design flaw; I’d rather just return them outright.

Just joined to say I have the same issue with the board I got yesterday. I’m worried about the stability of the board too. It’s a shame, as it is a unique piece of tech: low power and high performance. I’m still testing it out, but it would help if anyone else with the same board could chime in on how it is working for them.

This is interesting. I have a BD790i too, but my problem is different: mine doesn’t boot with an m.2 fitted - and once it’s removed the CMOS is reset. I have emailed them but cpnxp has just prompted me to translate the Chinese in the email…

Oh, I just got a ‘Thanks, sincerely Minisforum support’. So I guess they just forgot to switch to an English signature.

Interesting, what kind of M.2? I’ve had two in mine and never had any issues: a Samsung PM9B1 256GB as an OS drive and an SK hynix Platinum P41 2TB. Both work as normal and both are PCIe 4.0 x4. I also had a ConnectX-4 Lx 40Gb NIC in the x16 slot. As for the email I translated, they replied to the response from ‘engineering’ but changed the To field to my email, which they probably weren’t supposed to do, given they refused to elaborate on the issue when I pressed them.

I am using 3 different NVMe drives with mine: a 512GB Samsung 950 Pro and a 2TB Sabrent (both for OS testing), and a 4TB Crucial as the second drive. All worked in a variety of combos, but I am finding inconsistent load times on the secondary (non-OS, Crucial) drive. Something seems very ‘beta’ to me with this board. I am seeing some odd behaviours between Win 10 and Win 11. For example, when using UXTU for on-the-fly APU changes, it sometimes just shuts down within a few seconds of a settings adjustment (just small changes like max temp), so I now use the BIOS to set max power draw etc.

So I’ve had a chance to try a few different things. It looks like the CMOS storage is a separate issue for me - with no PCIe or NVMe devices I get the behaviour of not saving settings over AC power removal - the CR2032 voltage is a healthy 3.3V.

I’ve successfully had a quad-gigabit card in the PCIe x16 slot so that seems fine for me. I don’t have anything that boots successfully in the NVMe slots. I’ve tried a Samsung 990 Pro and a Crucial P3 Plus - both individually (in each slot) and together.

I’m wondering if this is some kind of power configuration issue - one thing I tried was installing the PCIe card while the system was shut down, but still AC connected - this resulted in the PSU hiccupping and refusing to start.

Feels like I’ve reached the point of no more easy debugging.

Hi there,

Please forgive me for latching onto this posting, but I have a similar problem and thought it would fit nicely under the topic.

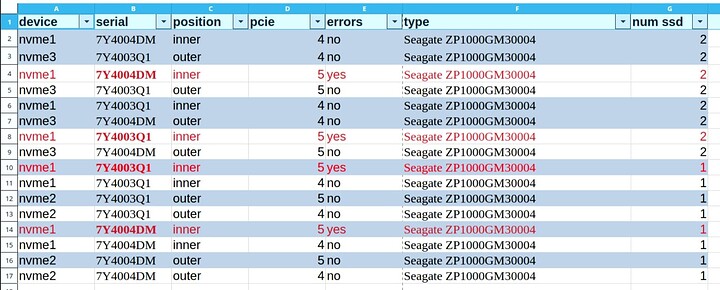

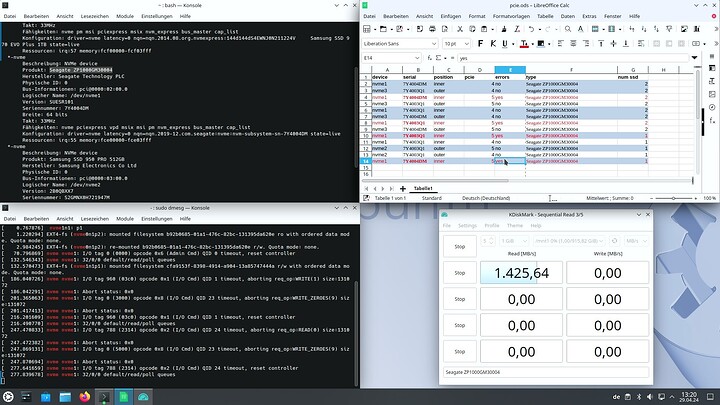

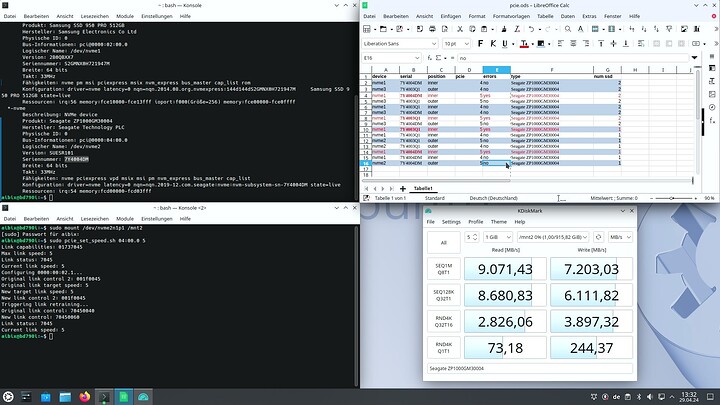

I also have a BD790i and was eager to try it with PCIe 5.0 SSDs. In my case these are two Seagate Firecuda 540 (1TB).

I have installed Proxmox with a ZFS RAID1 and started to notice weird slowdowns (unpacking packages from apt took ages, for example). This was not at all what I was expecting from blazing fast PCIe 5 SSDs … so I took a deeper look.

After some testing it was clear that the condition would always manifest at the “inner slot” (the one near the CPU, for lack of a better explanation):

As you can see from the spreadsheet, I’ve tested each of the two SSDs in each of the two slots, either with both slots populated or with just a single slot. Every test was conducted at both PCIe 4.0 and PCIe 5.0 LnkSpeed.

This led me to the conclusion that the fault must be with the NVMe slot, not the SSDs.
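For anyone wanting to repeat this kind of slot-by-slot comparison on Linux, here is a minimal sketch that records the negotiated PCIe link speed and width for each NVMe controller, together with its serial number, so each test run can be tied to a drive and a slot. It assumes the usual sysfs layout (`/sys/class/nvme/<ctrl>/device` pointing at the PCI device, which exposes `current_link_speed` and friends); the function name `read_nvme_links` is just an illustrative choice and not part of any tool mentioned in this thread.

```python
from pathlib import Path

# PCI link attributes normally exposed by the kernel for a PCIe device.
LINK_ATTRS = (
    "current_link_speed",
    "max_link_speed",
    "current_link_width",
    "max_link_width",
)


def read_nvme_links(sysfs_root="/sys/class/nvme"):
    """Return {controller_name: {attribute: value}} for each NVMe controller.

    Attributes that are missing on a given system are simply skipped.
    """
    result = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return result
    for ctrl in sorted(root.iterdir()):
        info = {}
        pci_dev = ctrl / "device"  # assumed symlink to the PCI device directory
        for name in LINK_ATTRS:
            attr = pci_dev / name
            if attr.is_file():
                info[name] = attr.read_text().strip()
        serial = ctrl / "serial"  # drive serial, useful for matching test rows
        if serial.is_file():
            info["serial"] = serial.read_text().strip()
        result[ctrl.name] = info
    return result


if __name__ == "__main__":
    for name, info in read_nvme_links().items():
        print(name, info)
```

On a typical kernel the speed values are strings along the lines of `16.0 GT/s PCIe` (Gen4) or `32.0 GT/s PCIe` (Gen5), but it is worth checking what your own system reports before relying on that.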

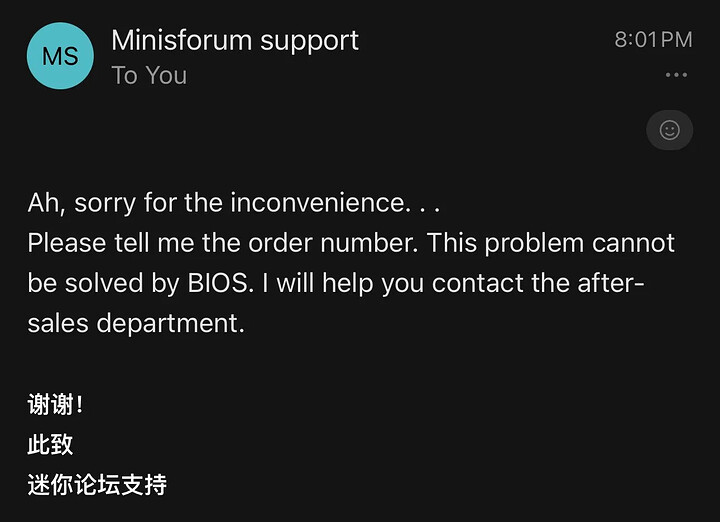

I have contacted Minisforum about it, so far they just suggested a BIOS update. The system already came with the suggested BIOS.

Here is an example of my testing and the errors I saw:

Same SSD (see serial column) at PCIe 5.0 LnkSpeed in the “outer” slot:

This is 100% reproducible at any time.

Did anyone else see similar effects? Maybe someone tested different SSDs and had better luck? Any input would be appreciated.

Support went quiet for about a week for me, so I emailed them again. They didn’t suggest any troubleshooting steps (for either the lack of BIOS settings persistence, or my NVMe problems). I’ve now been passed to, I guess, the returns team to process something - they haven’t said if they expect it to be a repair or a refund.

I don’t suppose anyone on this thread also has the BD770i and has noticed any differences between the two boards?

I had the BD770i for a short period of time. It didn’t have the BIOS persistence issues I’m having with the BD790i.

I only had the BD790i and that one had the BIOS persistence issue, although I was more concerned with the NVMe errors.

I was able to convince them that the board is faulty, as I clearly showed that it was always the inner slot that produced the errors at the advertised PCIe5 speeds.

The board went on its way today and I am not sure if they will replace/repair or refund it.

Repair does not seem possible to my layman’s understanding, as it would seem that the silicon of the CPU is faulty.

If not stipulated, they will just replace it. I had to be very clear and specific several times when I wanted a refund after my second defective UM790 Pro. And they take their sweet time to reply too.

Just registered to confirm the same thing - the CMOS battery on mine seems to be good itself (3.26 V), the connector also seems fine… but the BIOS settings reset themselves after a minute or two without mains power. At this point I’m pretty sure it’s probably a first-batch problem. Not a lot of people are going to notice it if they’re running it stock… My main problem with it is that it resets the fan curve…

It also came with a horrible paste job but PTM seems to have fixed the temps quite a bit.

No problems with the M.2 slots with gen4 drives though.

Any luck with support? Are they offering just straight-up replacements? Has anyone received a board that doesn’t reset the settings without power? I’m wondering if it’s worth it to try and exchange it (I already bought a license for it)…

edit: Yeah, also, upon a PSU hiccup/reset it refuses to start and you have to disconnect mains for about 10-20 secs for it to start up again.

They just got back to me. It is in fact a bad batch. They advised returning it and not ordering again until July.

I got mine delivered same day in Japan a month ago and, apart from the CMOS battery issue, it performs stellarly. I re-pasted the die and it runs superfast for a small power cost (Cyberpunk at 1440p Ray Tracing Ultra is around 115 fps and sips just 50-55 W max from the CPU; Cinebench R23 multicore ~32k). Together with a 4070ti in low power mode, the system uses ~170 W from the wall. I could have returned it, but it would be months before I could get it again, and likely at a higher price. Hope it goes the distance!

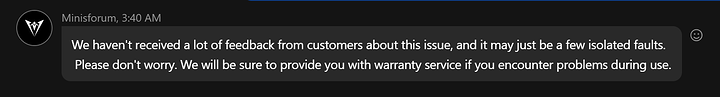

Minisforum claims the BIOS issue has been fixed.

I repeated #2, linking reddit 1b9qsiu (I am not allowed to link here, but that’s enough to find it with Kagi/Google). Here’s the answer.

I built a system on the first of May 2024 with the BD790i mainboard and will double-check to see if I have the BIOS retention problem; I had not noticed it. I do have an open issue with support regarding my two Crucial Gen5 T705 1TB SSDs reporting errors when installed in slot 0, closest to the CPU. Here is a sample of the I/O errors from “dmesg”.

```
May 19 16:18:06 lab kernel: nvme nvme0: I/O tag 64 (7040) opcode 0x2 (I/O Cmd) QID 11 timeout, aborting req_op:READ(0) size:131072
May 19 16:18:06 lab kernel: nvme nvme0: Abort status: 0x0
May 19 16:18:36 lab kernel: nvme nvme0: I/O tag 64 (7040) opcode 0x2 (I/O Cmd) QID 11 timeout, reset controller
May 19 16:18:36 lab kernel: nvme nvme0: 32/0/0 default/read/poll queues
May 19 16:18:36 lab pvestatd[1978]: status update time (60.580 seconds)
May 19 16:19:26 lab kernel: nvme nvme0: I/O tag 896 (c380) opcode 0x2 (I/O Cmd) QID 20 timeout, aborting req_op:READ(0) size:131072
May 19 16:19:26 lab kernel: nvme nvme0: Abort status: 0x0
May 19 16:19:57 lab kernel: nvme nvme0: I/O tag 896 (c380) opcode 0x2 (I/O Cmd) QID 20 timeout, reset controller
May 19 16:19:57 lab kernel: nvme nvme0: 32/0/0 default/read/poll queues
May 19 16:19:57 lab pvestatd[1978]: status update time (60.849 seconds)
May 19 16:23:37 lab kernel: nvme nvme0: I/O tag 832 (e340) opcode 0x2 (I/O Cmd) QID 23 timeout, aborting req_op:READ(0) size:131072
May 19 16:23:37 lab kernel: nvme nvme0: Abort status: 0x0
May 19 16:24:07 lab kernel: nvme nvme0: I/O tag 832 (e340) opcode 0x2 (I/O Cmd) QID 23 timeout, reset controller
May 19 16:24:07 lab kernel: nvme nvme0: 32/0/0 default/read/poll queues
```
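If you want to compare slots or drives by how often these timeouts occur, a rough way is to count them per controller. The sketch below is only an illustration: the regular expression is written against the exact message format in the sample above (other kernel versions may word these lines differently), and `summarize_nvme_timeouts` is a made-up helper name, not an existing tool.

```python
import re
import sys
from collections import Counter

# Matches the two timeout messages seen in the sample log:
#   "... QID 11 timeout, aborting ..." and "... QID 11 timeout, reset controller"
TIMEOUT_RE = re.compile(
    r"nvme (?P<ctrl>nvme\d+): I/O tag \d+ \([0-9a-f]+\) opcode 0x[0-9a-f]+ "
    r".*?QID (?P<qid>\d+) timeout, (?P<action>aborting|reset controller)"
)


def summarize_nvme_timeouts(lines):
    """Count timeout events per (controller, action) pair."""
    counts = Counter()
    for line in lines:
        match = TIMEOUT_RE.search(line)
        if match:
            counts[(match["ctrl"], match["action"])] += 1
    return counts


if __name__ == "__main__":
    # Example: dmesg | python3 this_script.py
    for (ctrl, action), n in sorted(summarize_nvme_timeouts(sys.stdin).items()):
        print(f"{ctrl}: {n} x {action}")
```

Running it once with the drive in slot 0 and once in slot 1 under the same workload gives a simple number to put in front of support.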

My build consisted of:

Proxmox v8.2.2

- ZFS over (6) 6TB SATA drives (Mirror, Mirror, Mirror)

- 64GB (2x32GB) Crucial DDR5 5200 MT/s

- UGreen USB-C to Ethernet Adapter 2.5G

- (2) Crucial T705 1TB PCIe Gen 5 NVMe M.2 SSD, up to 13600 MB/s

- (1) 9220-8i 6Gbps SAS/SATA HBA FW: P20 9211-8i IT Mode : PCIe x8 Gen3

- Arctic P12 Slim PWM PST - 120 mm Case Fan

Aside from temperatures being higher than I would like and the persistent NVMe I/O errors, I’ve had no other problems with this build. I think NVMe Gen5 might have been a bad idea, although the problem seems to be with slot 0 and not slot 1. The saga continues…

Overall, I am happy with this build.

James,

I have the BIOS not-saving issue, and I also tried the always-on setting for auto power-on, but it is not working.

Would anyone please try this and report back?

I have BIOS 1.05, which looks to be the latest one on their website. If anyone has a newer version, would you share it?

Did you find a solution?

I’m having similar problems: it won’t even power on if there is an NVMe drive installed when using a 550 W PSU. If I use an 850 W PSU then it will boot, but it either doesn’t recognise the drive or loses connection to it after a few minutes.

I’ve had the BIOS settings thing from the start with my BD790i. In the last week, it has stopped even getting to POST.

Does anyone have any details about the “bad batch” of boards?