Hi Everyone,

I have two Mellanox MHGH29-XTC InfiniBand cards that I would like to use to connect my ZFS file server, running Debian, to my workstation, running Windows 10, using IPoIB.

I have had the cards and the cable working at full speed (tested with iperf) when my workstation was booted from a spare disk running Debian, so I know the hardware works.

The issue is that on Windows I cannot get the cards to show as anything other than "Network cable unplugged". The cable is plugged in, the static IP address has been set up, and opensm is running on my server. This is the same setup as before; the only thing that has changed is the OS.

I have tried everything I can think of to get this to work, but for whatever reason I can't get Windows to show the link as up. I also can't seem to find a good way to troubleshoot what is going on, as I can't simply look at the kernel log like I could under Linux.

Any ideas would be greatly appreciated as at this point I feel like I am going mad.

Kind Regards

LainShot

Okay, I fixed the issue, and I am going to answer explaining how, so that anyone else who has this issue can fix it.

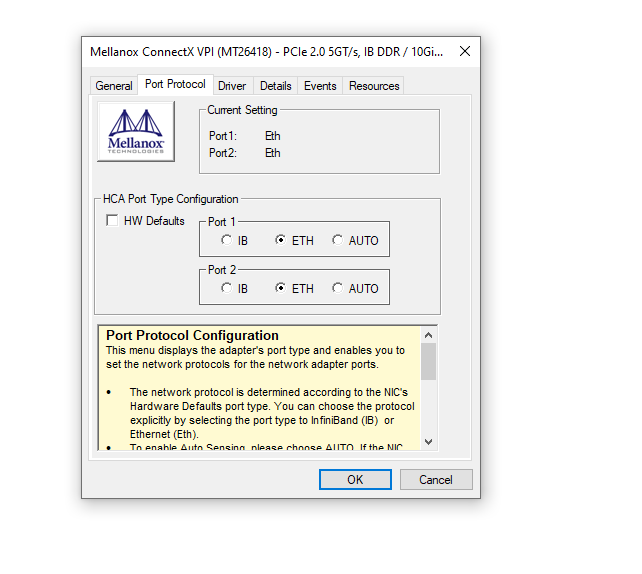

InfiniBand mode seems to be broken in the Windows driver; I don't know why. I also could never see the option to set the card to Ethernet mode.

It turns out that if you find the device under System devices in Device Manager, you then have the option to set the card to Ethernet mode. You don't have this option under the network adapter entry in Device Manager.

Once this is set to Ethernet, you need to do the same under Linux.

You do this by running the connectx_port_config command and setting the port to Ethernet. You will then see two new adapters under ip addr, and you need to set up the static IP.

Once a static IP has been set up on both sides, you need to bring up the interface using ifup, and then it should connect and start working.
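A quick way to confirm that the Linux side actually came up after ifup is to read the kernel's view of the link from sysfs. This is only a minimal sketch and was not part of the original fix: the interface name `eth2` is a placeholder (use whatever name shows up in ip addr on your machine), and `link_state` is just an illustrative helper.

```python
from pathlib import Path


def link_state(iface, sysfs_root="/sys/class/net"):
    """Return the kernel's operstate for iface ("up", "down", ...).

    Returns None if the interface does not exist.
    """
    state_file = Path(sysfs_root) / iface / "operstate"
    try:
        return state_file.read_text().strip()
    except (FileNotFoundError, NotADirectoryError):
        return None


if __name__ == "__main__":
    # "eth2" is a placeholder; substitute the name of your ConnectX port.
    iface = "eth2"
    state = link_state(iface)
    if state is None:
        print(f"{iface}: no such interface")
    else:
        print(f"{iface}: {state}")
```

If this reports "down" with the cable connected, the port type or the link itself is the likely suspect rather than the IP configuration.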

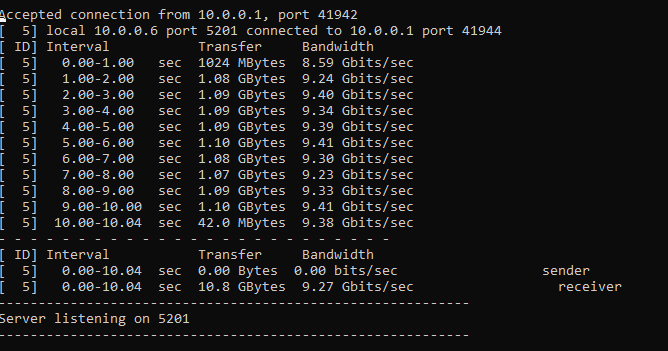

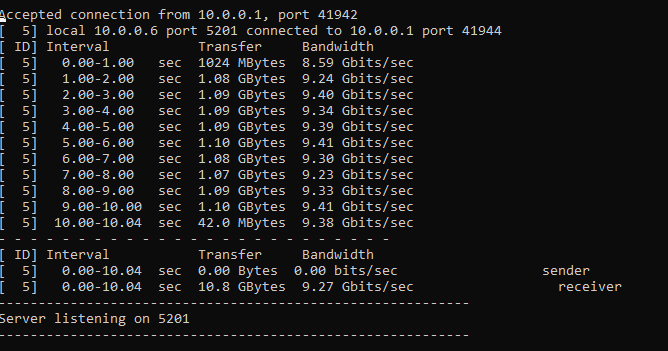

Using this mode I do not get speeds as high as I do using IPoIB, but it is working, and that is the main thing. You can see the iperf results in the screenshots.
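The lower speed in Ethernet mode is roughly what the nominal link rates would predict. Here is a back-of-the-envelope sketch, assuming these cards run a 4x DDR InfiniBand link with 8b/10b encoding and fall back to 10 Gb Ethernet in Ethernet mode. The figures are nominal rates, not measurements from this setup, and they ignore protocol overhead.

```python
# Nominal link rates, not measurements.
DDR_LANE_SIGNALING_GBPS = 5.0   # InfiniBand DDR signaling rate per lane
LANES = 4                       # assumed 4x link width
ENCODING_EFFICIENCY = 8 / 10    # 8b/10b line encoding used by DDR
ETHERNET_MODE_GBPS = 10.0       # assumed: the port runs as 10 GbE in Ethernet mode


def ib_data_rate_gbps(lane_gbps, lanes, efficiency):
    """Usable data rate of an InfiniBand link after line encoding."""
    return lane_gbps * lanes * efficiency


ib_rate = ib_data_rate_gbps(DDR_LANE_SIGNALING_GBPS, LANES, ENCODING_EFFICIENCY)
print(f"4x DDR InfiniBand data rate: {ib_rate:.1f} Gb/s")
print(f"Ethernet mode link rate:     {ETHERNET_MODE_GBPS:.1f} Gb/s")
print(f"Ethernet ceiling relative to InfiniBand: {ETHERNET_MODE_GBPS / ib_rate:.1%}")
```

So even a perfect run in Ethernet mode tops out below what the same hardware can carry in InfiniBand mode.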

If you need a version of the Linux drivers that works with the latest version of Debian, I put one on my GitHub (LainShot) under MLNX_OFED_LINUX-for-debian10.4.

This works out very well now as the cards were only £8 each and the cable £10.

It always seems that as soon as I make a post I fix the issue, but I thought I would leave the fix as a reply to hopefully help someone else.

I think InfiniBand is based on RDMA (remote direct memory access), and RDMA is only available in Windows 10 Pro for Workstations or higher, including Windows Server of course.

SMB Direct also has to be enabled in Windows Features to get RDMA working.

Ah okay, if that is the case then that makes sense.

Hi all,

If someone is still interested in figuring this out, DM me.

To be very brief: InfiniBand is a software-defined network, and you need to run a subnet manager in order for it to operate. It has nothing to do with RDMA.

This is an older post, but he was running a subnet manager (OpenSM).

You’re absolutely correct that RDMA is not required. IPoIB should just work even without any RDMA support; you just leave quite a bit of performance on the table (more than most home users would know what to do with, most likely, which doesn’t mean it’s not fun to play around with, of course).

Judging by what the documentation for this model turns up, these are DDR adapters, which dates them to around 2005-2007. Driver support might not be in the greatest shape for those.

New here, and created an account so I could reply to your post.

I love these cards and cables.

I am running a setup with them too, not getting the speeds you are, but close…

I have these cards in Windows 10, Server 2019 and ESXi 6.7 (ESXi 7 EOL'd the card, so I'm sad).

So I have these cards connected to an HP ProCurve 6400cl switch. That switch then connects to a Brocade FCX648S, which has two CX4-style ports on the back. The rest of my 1 Gb network is on the Brocade too, as it is a 48-port switch.

Totally digging it… all 10 Gb and cheap…

I still have about 8 cards and 15 cables. If I need more, I could get another ProCurve switch and plug machines into it, as the Brocade still has one port left to bring 10 Gb in.

My next test is to get Windows 10 Pro for Workstations to see if that fixes my speed. I'm getting about 8.5 Gb, and Pro for Workstations has RDMA like the server editions do, so I want to see if that helps.

Peace out!

Edit:

So iperf3 keeps going all over the place… weird…

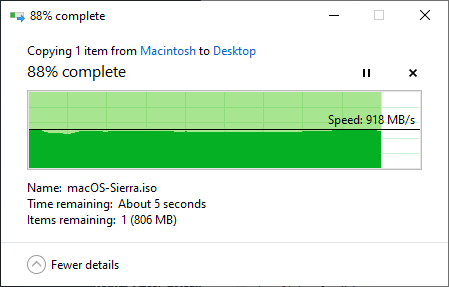

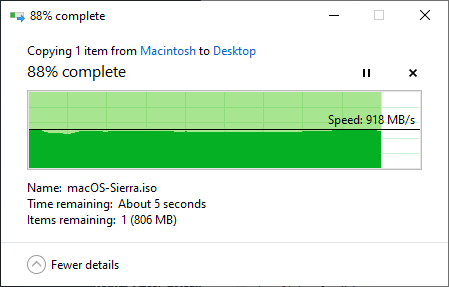

It says 4 Gb, then 5 Gb, etc., but the file copy numbers are what really matter.
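When iperf3 readings jump around like this, it can help to look at the per-interval numbers as a whole instead of eyeballing the console. Here is a small sketch, assuming the test was run with iperf3's JSON output (`iperf3 -c <server> -J > result.json`). The file name and the `summarize` and `load_report` helpers are placeholders of mine, not anything from the posts above.

```python
import json
import statistics


def summarize(report):
    """Return min/mean/max/stdev throughput in Gb/s across iperf3 intervals."""
    rates = [iv["sum"]["bits_per_second"] / 1e9 for iv in report["intervals"]]
    return {
        "min": min(rates),
        "mean": statistics.mean(rates),
        "max": max(rates),
        # stdev needs at least two samples
        "stdev": statistics.stdev(rates) if len(rates) > 1 else 0.0,
    }


def load_report(path):
    """Load a report written by `iperf3 -J`."""
    with open(path) as fh:
        return json.load(fh)
```

Calling `summarize(load_report("result.json"))` gives a quick picture of how wide the swings really are; a large spread relative to the mean would back up the impression that the runs are unstable rather than just slow.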