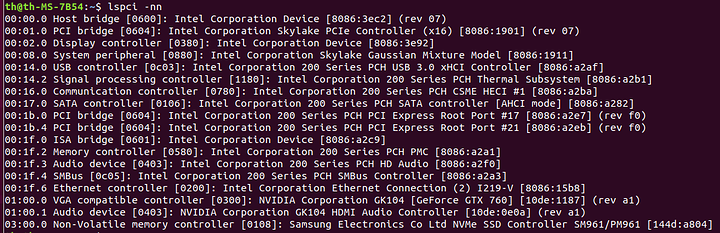

So I can see my GPU on the PCIE slot and I am going to use the built-in GPU for my host.

Next I need to check groups right? And isolate the GPU if it is not isolated.

lspci -nn only shows the addresses and PCI IDs; it does not show anything about IOMMU. The following quote is from this page on the Arch wiki, and the script in it will show you your IOMMU groups (if they exist).

Ensuring that the groups are valid

The following script should allow you to see how your various PCI devices are mapped to IOMMU groups. If it does not return anything, you either have not enabled IOMMU support properly or your hardware does not support it.

```bash
#!/bin/bash
shopt -s nullglob
for d in /sys/kernel/iommu_groups/*/devices/*; do
    n=${d#*/iommu_groups/*}; n=${n%%/*}
    printf 'IOMMU Group %s ' "$n"
    lspci -nns "${d##*/}"
done;
```

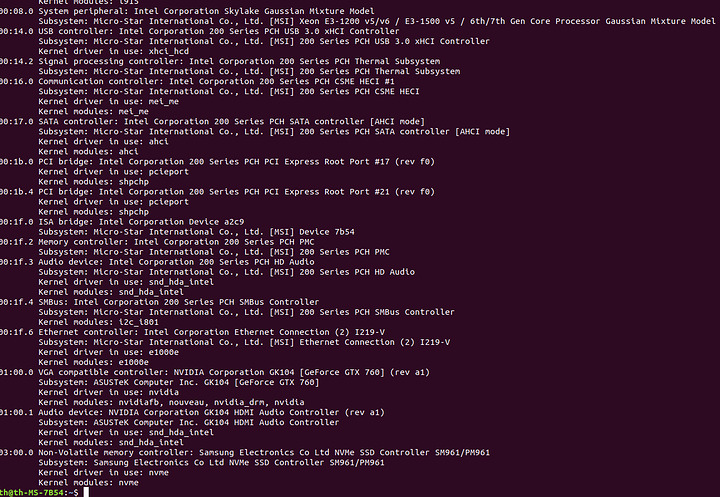

You can check whether the GPU is isolated with lspci -k. If the "Kernel driver in use" line for the card says vfio-pci, it is isolated; if it says nvidia or nouveau, it is not.
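If you don't want to scroll through the whole lspci -k output, something like this pulls out just the driver for one device. It's only a sketch: driver_in_use is a made-up helper name, and the 01:00.0 address in the usage comment is an assumption, so swap in whatever address your card shows.

```shell
# Print the "Kernel driver in use" value for one PCI address.
# Reads `lspci -k` style output on stdin.
# Usage (the address is an assumption, use your own):
#   lspci -k | driver_in_use 01:00.0
driver_in_use() {
    addr="$1"
    awk -v addr="$addr" '
        # A line starting with a hex digit is a device header and opens a new block
        /^[0-9a-f]/ { current = ($1 == addr) }
        current && /Kernel driver in use:/ {
            sub(/.*Kernel driver in use: */, "")
            print
        }
    '
}
```

If it prints vfio-pci the card is held by VFIO. If it prints nothing, no driver is bound to that device at all.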

Thanks, I got this from lspci -k

So I need to specify the VFIO in the boot system (I read somewhere it has to bind the GPU before the drivers load).

If I do this will my screen turn black?

I have two GPUs, my GTX760 (which I want to use for the passthrough) and the Intel CPU/GPU.

Just a heads up: I edited the IOMMU script formatting in your post.

You can embed code inside a code block like so:

Some of this may be distro-dependent; this example is for Debian.

Add vfio-pci.ids= followed by the IDs of your Nvidia GPU and its Nvidia audio device to the boot parameters in /etc/default/grub. Your IDs should be 10de:1187 and 10de:0e0a. Then run update-grub.

Non-Debian-based distros use a different command to update GRUB, and some distros may have you put the IDs for vfio in a different place.
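For reference, the resulting line in /etc/default/grub might look something like this. The two IDs are the ones quoted above; quiet and intel_iommu=on are only my assumptions about what is already on your line (intel_iommu=on being the usual switch on Intel CPUs), so keep whatever you already have there and just append the vfio part.

```shell
# /etc/default/grub (Debian). This file uses shell variable syntax.
# "quiet" and "intel_iommu=on" are assumed to be present already;
# only the vfio-pci.ids=... part comes from the advice above.
GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on vfio-pci.ids=10de:1187,10de:0e0a"

# Afterwards, regenerate the GRUB config and reboot:
#   sudo update-grub
```

After the reboot, cat /proc/cmdline should show the vfio-pci.ids= parameter if GRUB picked it up.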

Also, thanks @catsay

Yeah, I already added those to the GRUB config, and so far only the GPU audio is on VFIO, while the GPU itself is still bound to the Nvidia driver.

When I check the groups I have:

GPU group 1 — 01:00.0

GPU audio group 1 — 01:00.1

PCIE slot group 1 — 00:00.0

So I guess I have to remove the Nvidia driver somehow, or make the vfio-pci driver bind the GPU before the Nvidia driver.

Do I have to isolate the GPU/GPU audio as well? (isolate from PCIE slot)

Are you using the proprietary Nvidia driver?

If you are not planning to use the card on the main system, I would remove the proprietary driver. If you are not using any Nvidia graphics card or device on your host, you can also simply blacklist the nouveau driver if you cannot get vfio to bind the device first (it’s what I ended up doing).

To do this: sudo nano /etc/modprobe.d/blacklist.conf and then add blacklist nouveau. This can also be done by adding modprobe.blacklist=nouveau to the GRUB_CMDLINE_LINUX_DEFAULT line in /etc/default/grub.
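If you would rather keep nouveau installed and just make vfio-pci load first, a modprobe.d softdep is one alternative to blacklisting. This is a sketch and not what was done in this thread: write_vfio_modprobe_conf and the vfio.conf file name are made up for illustration, and the IDs are the two quoted earlier.

```shell
# Hypothetical helper: write a modprobe.d snippet into the given directory.
# "options" hands the device IDs to vfio-pci, and "softdep" asks modprobe to
# load vfio-pci before nouveau (use "nvidia" instead for the proprietary driver).
write_vfio_modprobe_conf() {
    dir="$1"
    cat > "$dir/vfio.conf" <<'EOF'
options vfio-pci ids=10de:1187,10de:0e0a
softdep nouveau pre: vfio-pci
EOF
}

# Usage, as root (the target directory is the standard one on Debian):
#   write_vfio_modprobe_conf /etc/modprobe.d
#   update-initramfs -u
```

On Debian the update-initramfs -u step matters because the graphics driver can be loaded from the initramfs, before the files under /etc are read from disk.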

The only devices in the IOMMU group are the GPU and the GPU audio, so you don’t have to do any more isolation. The 00:00.0 device is not an active PCIe device, so libvirt shouldn’t have any problems with it.
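To double-check what shares a group with the card, you can read it straight from sysfs. A rough sketch: iommu_group_members is a made-up helper name, the 0000:01:00.0 address in the usage comment is an assumption based on the group listing above, and the second argument only exists so the function can be pointed at a test directory instead of /sys.

```shell
# List the PCI addresses that share an IOMMU group with the given device.
# $1: full PCI address including the domain, e.g. 0000:01:00.0
# $2: sysfs root, defaults to /sys
iommu_group_members() {
    addr="$1"
    sysfs="${2:-/sys}"
    group_dir="$sysfs/bus/pci/devices/$addr/iommu_group/devices"
    if [ ! -d "$group_dir" ]; then
        echo "no IOMMU group found for $addr" >&2
        return 1
    fi
    for dev in "$group_dir"/*; do
        [ -e "$dev" ] || continue   # skip the unexpanded glob if the dir is empty
        printf '%s\n' "${dev##*/}"
    done
}

# Usage (the address is an assumption, use your own):
#   iommu_group_members 0000:01:00.0
```

Everything it prints has to go to the VM together (or be something harmless like a bridge), since the group is the smallest unit VFIO can hand over.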

Thanks, I removed the Nvidia proprietary drivers and blocked the nouveau drivers, so now I have a black screen when I boot. I guess that could be a sign that the vfio_pci has got a hold of the GPU (or not).

Now, I just need to get my host screen to the Intel onboard GPU, any suggestions?

Yes, you need to boot from the iGPU on your processor.

Make sure that your UEFI/BIOS is set to boot with the iGPU (internal graphics instead of discrete graphics) and then plug your monitor into the HDMI/DVI/VGA output of the motherboard.

Perfect!

Now we are getting somewhere…

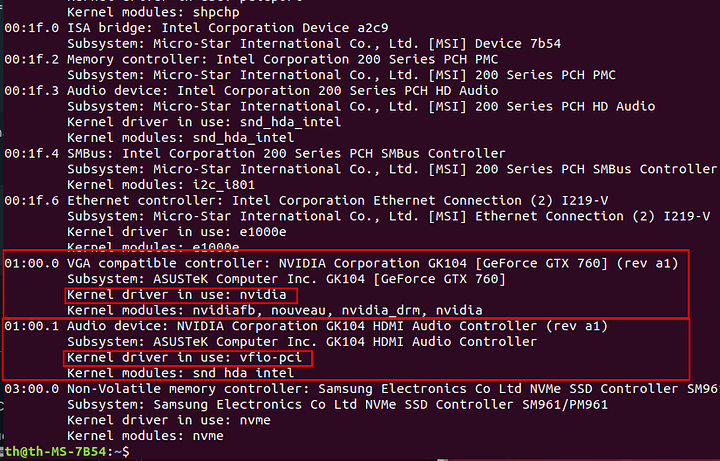

Yup, both the GPU and the GPU audio are now on vfio-pci when I do “lspci -k”

Awesome, things are pretty easy from here on (except audio). Make sure to hide from Windows that you are running in a VM, since you are running Nvidia graphics (Nvidia blocks their driver from running in VMs on non-Quadro cards).

Yeah I got a VM up and running, switched to DVI (I only have one monitor, but with several inputs) and there was my boot menu.

Selected the CD-drive and Windows 7 started to boot, but it hangs after a few minutes. I think the SATA drive is not set up correctly.

I am going to try and download the Windows 10 ISO, I read somewhere that Windows 10 is more “compatible” with QEMU (or VMs in general I dunno).

Yeah I want as much stealth as possible in my VM for sure.

I am also going to use full VPN etc. I just want a windows shell running on Linux really, so that I can control everything that goes in and out and still be able to play games.

Are there options in Virt-manager that I can enable for the “hiding” you mention?

Are you using VirtIO for the drives and NIC? Emulating SATA is very inefficient in comparison.

You need to edit the vm xml for hiding from windows that you are in a VM. Check the following link and scroll down a bit, it’s what I did and it works like a charm.

Run sudo virsh edit YOURVM and do the following (excerpt from the above link):

Delete the first line and replace it with

<domain type='kvm' xmlns:qemu='http://libvirt.org/schemas/domain/qemu/1.0'>

Now go all the way to the bottom, right before the closing domain tag, and add:

```xml
<qemu:commandline>
  <qemu:arg value='-cpu'/>
  <qemu:arg value='host,hv_time,kvm=off,hv_vendor_id=null'/>
</qemu:commandline>
```
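Once you have saved the edit, it is worth checking that both pieces actually ended up in the domain XML. As far as I know libvirt drops the qemu:commandline block on save if the xmlns:qemu attribute is missing from the first line. A small sketch, where check_vm_hiding is a made-up helper name and YOURVM is the same placeholder as above:

```shell
# Read a libvirt domain XML on stdin and check that the QEMU namespace
# and the kvm=off CPU argument are both present.
check_vm_hiding() {
    xml=$(cat)
    case "$xml" in
        *"xmlns:qemu="*) ;;
        *) echo "missing xmlns:qemu namespace on the domain tag"; return 1 ;;
    esac
    case "$xml" in
        *"kvm=off"*) ;;
        *) echo "missing kvm=off in qemu:commandline"; return 1 ;;
    esac
    echo "ok"
}

# Usage:
#   sudo virsh dumpxml YOURVM | check_vm_hiding
```

This only looks for the two strings, so it won't catch a block that is present but in the wrong place.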

If you are using OVMF (it shows the TianoCore boot splash screen), then only Windows 8 or higher is compatible. Windows 7 does have some EFI booting capability, but it does not work with OVMF. If you are using SeaBIOS, then Windows 7 is fine, but graphics passthrough is sometimes harder to get working.