I tried your configuration, keeping the first core of each CCX for Linux and giving the rest to the VM with ‘fifo’ specified. I also changed the boot parameters to isolate the cores.

isolcpus=2-7,10-15 nohz_full=2-7,10-15 rcu_nocbs=2-7,10-15
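For anyone adapting this to a different core count, here is a rough sketch of how that CPU list can be derived. It assumes the host numbers threads the way mine appears to (8 threads per CCX, SMT siblings adjacent, so CPUs 0-1 and 8-9 are the first core of each CCX). The function names and parameters are made up for illustration, so check your own layout with lscpu before trusting the output.

```python
def cpu_ranges(cpus):
    """Collapse CPU ids into kernel list syntax, e.g. [2,3,4,10] -> '2-4,10'."""
    parts = []
    start = prev = None
    for cpu in sorted(set(cpus)):
        if start is None:
            start = prev = cpu
        elif cpu == prev + 1:
            prev = cpu
        else:
            parts.append(str(start) if start == prev else f"{start}-{prev}")
            start = prev = cpu
    if start is not None:
        parts.append(str(start) if start == prev else f"{start}-{prev}")
    return ",".join(parts)


def isolation_params(threads_per_ccx=8, ccx_count=2, host_threads_per_ccx=2):
    """Build the boot parameters, leaving the first core of each CCX to the host.

    Assumption: CPU ids are contiguous per CCX and the first
    `host_threads_per_ccx` ids of each CCX belong to its first physical core.
    """
    isolated = []
    for ccx in range(ccx_count):
        base = ccx * threads_per_ccx
        isolated.extend(range(base + host_threads_per_ccx, base + threads_per_ccx))
    cpu_list = cpu_ranges(isolated)
    return f"isolcpus={cpu_list} nohz_full={cpu_list} rcu_nocbs={cpu_list}"


if __name__ == "__main__":
    print(isolation_params())
```

With the defaults this reproduces the line above; a different CCX size or thread count only needs different arguments.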

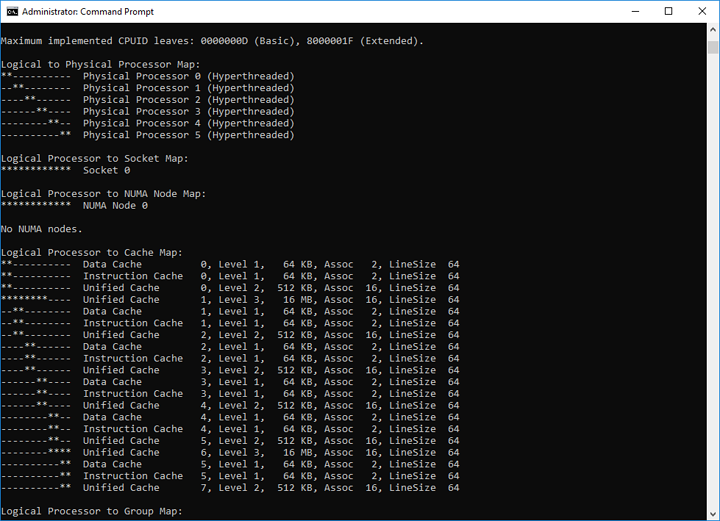

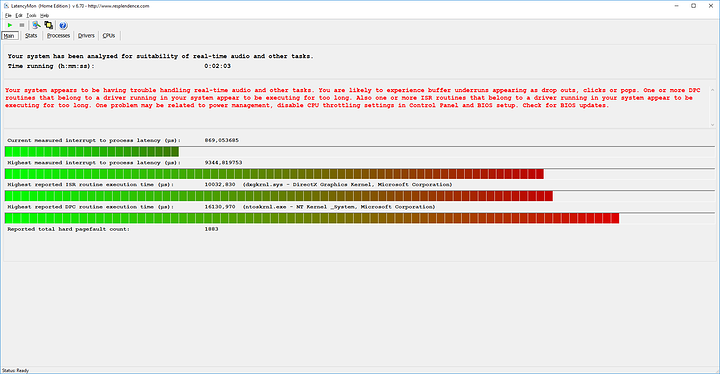

No changes. Stutter still happens under load. This configuration also causes problems with L3 cache as Windows doesn’t adjust what it sees. Screenshot to follow.


Windows hasn’t adjusted which cores share an L3 cache, only that x number of cores share the same one. This means you’re forced to use the 2nd CCX as your first set of cores, with the others to follow, as you can’t isolate core0 in Linux. I’ve tried to correct the wrongly reported L2 and L3 cache, but this is a bug. If you don’t have feature policy='require' name='topoext' in your libvirt config, then Ryzen correctly reports the right amounts of L2/L3 cache.

Unfortunately you do want this, as it enables SMT. What’s important is that Windows knows which cores are paired as SMT siblings and which ones share a cache, for optimal performance. Specifying topoext also seems to make topology changes irrelevant, as they had no effect within the VM.
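To compare what Windows shows against the real hardware, it helps to list which host CPUs actually share an L3. A minimal sketch, assuming a Linux host where the cache `index3` entry in sysfs is the L3 (the usual case, but check the `level` file next to it if unsure). The helper names are my own.

```python
from pathlib import Path


def parse_cpu_list(text):
    """Expand a sysfs CPU list such as '0-3,8-11' into a list of ints."""
    cpus = []
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            cpus.extend(range(int(low), int(high) + 1))
        else:
            cpus.append(int(part))
    return cpus


def l3_groups(sysfs_root="/sys/devices/system/cpu"):
    """Return the distinct sets of host CPUs that share an L3 cache."""
    groups = set()
    pattern = "cpu[0-9]*/cache/index3/shared_cpu_list"
    for path in Path(sysfs_root).glob(pattern):
        groups.add(tuple(parse_cpu_list(path.read_text())))
    return sorted(groups)


if __name__ == "__main__":
    for group in l3_groups():
        print("shared L3:", group)
```

Each printed group is one CCX on the host; the vCPUs you pin into a group are the ones Windows should report as sharing a cache.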

Something to note: vcpusched can safely be used on all cores if you aren’t using hyperthreading within the VM. There are no stutters and things work great. It was the configuration I used until I learned how to compile QEMU 3.0 for SMT support. These stutter problems with fifo have only arisen since I enabled SMT.

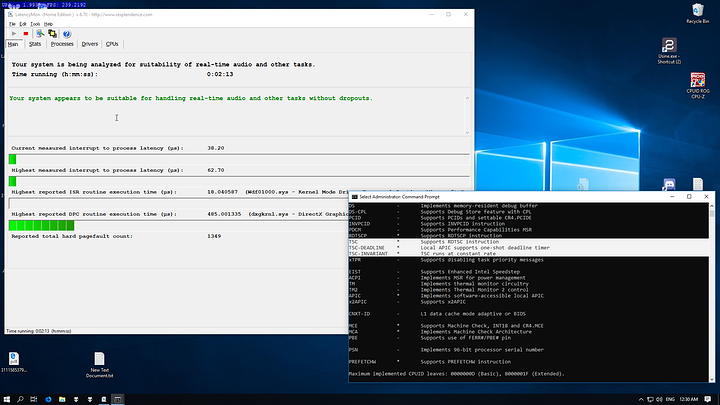

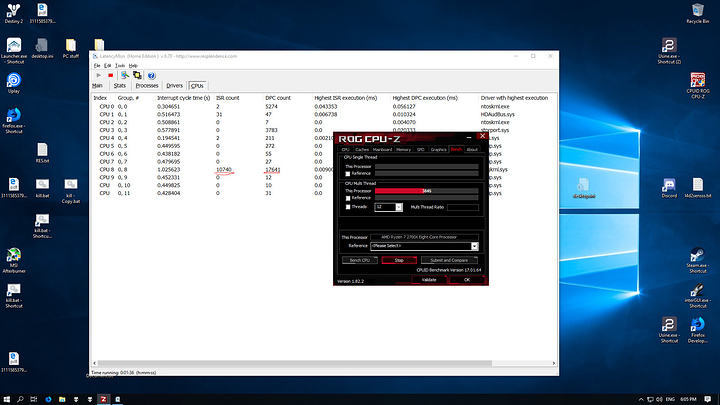

I also tested a few other things and managed to pin down the source of the stutters. It was core 8, the first core that Windows sees in the ‘2nd’ CCX, which shares the ‘2nd’ set of L3 cache. Windows seems to have pinned the driver for my mouse to that core, and having two ‘fifo’ assignments on the same physical core causes the stutters under load. Screenshot to follow.


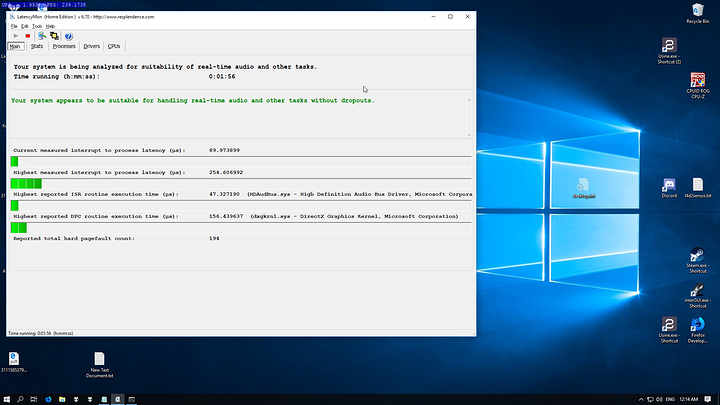

The ISR and DPC counts would only rise if I moved my mouse; otherwise they stayed the same. Removing vcpusched fifo on the hyperthreaded core has fixed the issue. This does raise the question of whether these stutters will happen on the other cores under high load, but Windows seems to place most of the interrupts on core0 and the rest on the first core of the 2nd L3 cache. Everything seems to run great, so I’ll leave it at that for now.
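As a rough illustration of that change, the sketch below generates libvirt `vcpusched` lines for the cputune section with fifo on only one thread of each vCPU pair. This is one reading of the fix, not my exact config: it assumes guest vCPUs are numbered so that siblings are adjacent (0/1, 2/3, ...), and the function name, vCPU count, and priority value are placeholders.

```python
def fifo_vcpusched(vcpu_count, threads_per_core=2, priority=1):
    """Emit vcpusched elements with fifo on the first thread of each core only.

    Assumption: vCPUs n and n+1 are SMT siblings, so stepping by
    threads_per_core skips the hyperthreaded sibling of every core.
    """
    first_threads = range(0, vcpu_count, threads_per_core)
    return [
        f"<vcpusched vcpus='{vcpu}' scheduler='fifo' priority='{priority}'/>"
        for vcpu in first_threads
    ]


if __name__ == "__main__":
    # Hypothetical 8c/16t guest; adjust to your own vCPU count.
    for line in fifo_vcpusched(16):
        print(line)
```

The sibling vCPUs simply get no `vcpusched` entry, so they stay on the default scheduler.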

This also means that for other configurations (and I speak only for Ryzen), you have to make sure Windows has correctly identified which core is the first one on the 2nd set of L3 cache. While testing urmamasllama’s suggested config, LatencyMon still reported that the majority of interrupts were split between core0 and core 8. So do make sure, or performance seems to suffer. I did test a 4c/8t setup just to be sure, and sure enough, Windows just dumped it all on core0.

Overall I’m satisfied with the results: process interrupt latency is down to 9us at best, with an average low of 13us under no load. Under the heaviest load it mostly jumps between 13us and 32us, so I can’t complain.