After we went through the guide on PCI passthrough, one question came up quite a bit: what exactly is the performance difference between running games in a VM vs running them natively on the host?

This is really part of a broader question: how well can KVM actually run a guest OS? What is the performance difference between the host and a virtual machine?

I ran a slew of games and benchmarks to be able to accurately represent a wide variety of performance measurements. The system used for testing is my own PC:

- Intel i7 4790k overclocked to 4.2 GHz

- 32 GB of G.Skill DDR3 RAM running on an XMP profile for a total speed of 2133 MHz

- EVGA GTX 980 Superclocked

- Gigabyte Z97MX Gaming 5 motherboard

- 256 GB Samsung 850 Pro M.2 SATA SSD connected directly to the motherboard’s onboard M.2 slot

I used a clean install of Windows 8.1 Pro with the pre-installed Metro apps removed and no internet connection. The virtual machine used the same setup.

The host for the virtual machine was the same PC running Debian Stretch, with the VM configured as follows:

- Kernel 4.8

- OVMF for UEFI support

- Chipset i440FX

- 10 GB of RAM

- 4 cores with 2 threads each for a total of 8 threads (to mirror the host CPU)

- disk format was qcow2 using writeback caching

- disk file itself was running off of the same M.2 SSD

- GTX 980 passed through to the VM

- Virtualized with KVM, with all Intel virtualization technologies enabled
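For anyone who wants to see roughly what that configuration looks like on a QEMU command line, here is a minimal sketch that builds the argument list in Python. It is an approximation under stated assumptions, not the exact setup used for these tests: the disk path, the GPU's PCI address, and the OVMF firmware path are all placeholders, and `build_qemu_args` is a hypothetical helper name.

```python
from typing import List


def build_qemu_args(disk_path: str, gpu_pci_addr: str, ovmf_code: str) -> List[str]:
    """Return a QEMU argv list approximating the VM described above."""
    return [
        "qemu-system-x86_64",
        "-machine", "pc,accel=kvm",   # "pc" is QEMU's i440FX machine type
        "-cpu", "host",               # expose the host CPU model to the guest
        "-smp", "8,sockets=1,cores=4,threads=2",
        "-m", "10G",
        # OVMF firmware image for UEFI boot (path is an assumption)
        "-drive", f"if=pflash,format=raw,readonly=on,file={ovmf_code}",
        # qcow2 disk image with writeback caching
        "-drive", f"file={disk_path},format=qcow2,cache=writeback",
        # hand the GPU to the guest through VFIO
        "-device", f"vfio-pci,host={gpu_pci_addr}",
    ]


if __name__ == "__main__":
    args = build_qemu_args(
        disk_path="/var/lib/libvirt/images/win81.qcow2",  # hypothetical path
        gpu_pci_addr="01:00.0",                           # hypothetical PCI address
        ovmf_code="/usr/share/OVMF/OVMF_CODE.fd",         # may differ on your system
    )
    print(" ".join(args))
```

A real passthrough setup usually needs more than this (binding the GPU to `vfio-pci` on the host, for example), so treat the output as a starting point rather than something to paste and run.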

All benchmarks and games were run with identical settings.

Here is the Google Docs spreadsheet with all my data and charts.

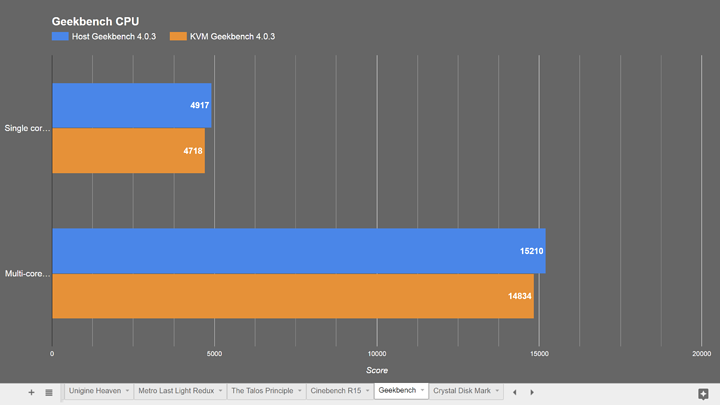

Let’s start with CPU performance.

CPU performance is, amazingly, almost the same between the host and the VM. In Geekbench the host scored 4,987 on single core and the VM scored 4,718, which is not bad. Across the single and multi core tests the VM came in roughly 5% behind the host.

Cinebench yielded roughly the same result: the host scored 817 and the VM scored 775, a performance difference of 5.14%. When you think about it, that’s insane. An OS virtualized under KVM with Intel’s virtualization acceleration can achieve about 95% of the host’s CPU performance. This right here is why corporations are running everything in virtual machines.
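The percentages in this post are all measured relative to the host score. As a small sketch of that arithmetic (the function name `percent_slower` is just something I made up for illustration):

```python
def percent_slower(host_score: float, vm_score: float) -> float:
    """How far the VM trails the host, as a percentage of the host score."""
    return (host_score - vm_score) / host_score * 100.0


if __name__ == "__main__":
    # Cinebench scores quoted above: host 817, VM 775
    print(f"Cinebench: VM is {percent_slower(817, 775):.2f}% slower")  # prints 5.14%
```

A negative return value would mean the VM actually beat the host.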

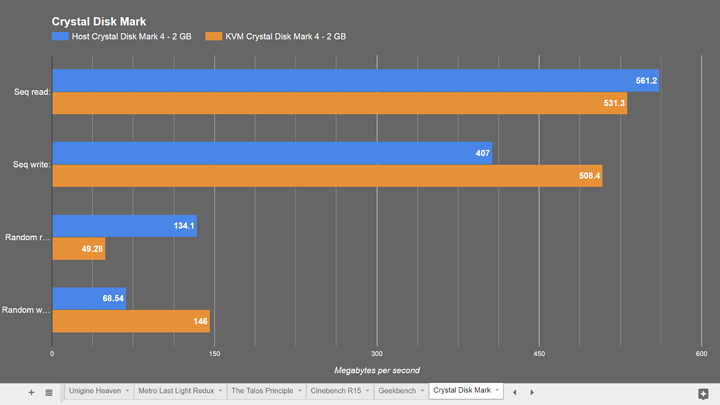

Next on to disk performance.

qcow2 is generally agreed to offer the best balance between performance and features such as compression. I ran CrystalDiskMark with a test size of 2 GB, covering both sequential read/write and random 4 KB read/write. The results are a bit skewed because of writeback caching on the host.

The sequential read speed was 561 MB/s on the host and 531 MB/s in the VM, making the VM about 5% slower than the host.

The opposite is true for write speeds, however: the host managed 407 MB/s and the VM 508 MB/s, making the VM about 25% faster on writes. Again, this is probably an effect of writeback caching.
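With writeback caching, a guest write is reported as complete once it lands in the host's page cache, before it necessarily reaches the SSD. The sketch below is only an analogy run on a single machine, not a reproduction of what QEMU does: it times the same write with and without forcing the data out to the device, to show how a cache can flatter write throughput. The helper name `timed_write` is hypothetical.

```python
import os
import tempfile
import time


def timed_write(size_bytes: int, sync: bool) -> float:
    """Write roughly size_bytes to a temp file and return throughput in MB/s.

    With sync=False the timer stops once the data has been handed to the OS,
    which may still be holding it in RAM. With sync=True, os.fsync() asks the
    OS to flush it to the storage device before the timer stops.
    """
    payload = os.urandom(1024 * 1024)  # 1 MiB of incompressible data
    chunks = max(1, size_bytes // len(payload))
    fd, path = tempfile.mkstemp()
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            for _ in range(chunks):
                f.write(payload)
            f.flush()
            if sync:
                os.fsync(f.fileno())
        elapsed = max(time.perf_counter() - start, 1e-9)
    finally:
        os.remove(path)
    return chunks * len(payload) / elapsed / 1e6


if __name__ == "__main__":
    size = 64 * 1024 * 1024  # 64 MiB keeps the demo quick
    print(f"cached write: {timed_write(size, sync=False):.0f} MB/s")
    print(f"synced write: {timed_write(size, sync=True):.0f} MB/s")
```

On most systems the cached number comes out higher, though the gap depends on the filesystem and on whether the temp directory lives in RAM.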

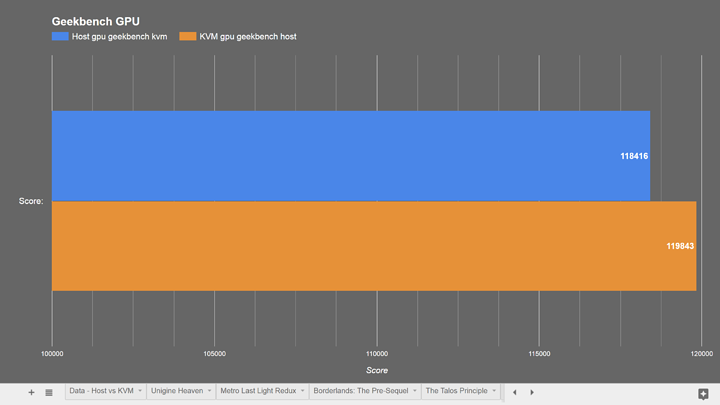

Now for the fun part, GPU performance.

These results reflect not only real gaming performance but also, roughly, the overhead on the PCI bus itself. The difference in CPU performance shouldn’t have much impact here, since none of these games are CPU intensive. The performance difference here is a result of PCI passthrough via KVM, where the host hands off a PCIe device to a virtual machine.

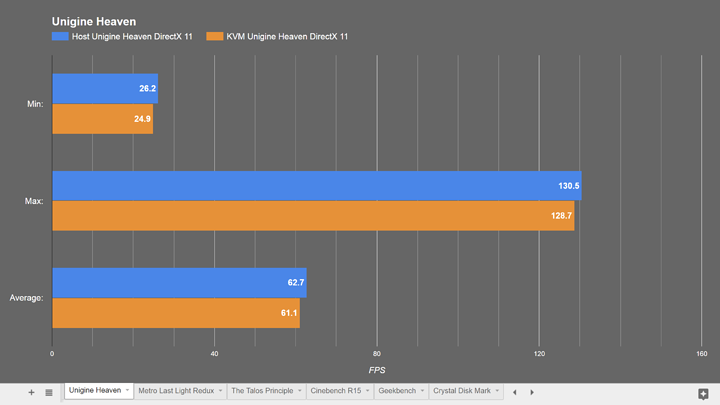

I’ll start with Unigine Heaven, which should be the most accurate representation of all the GPU tests. The benchmark runs exactly the same every time and reports specific data with each run.

In Unigine Heaven the host averaged 62.7 FPS and the VM averaged 61.1 FPS, a performance difference of 2.5%. Just like the CPU results, this is astonishing. KVM can run a guest OS at 95-98% of the host’s native performance.

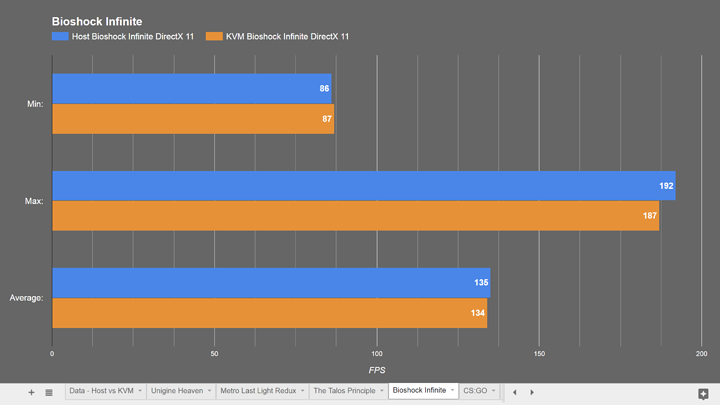

Bioshock Infinite only saw a 0.74% difference in performance,

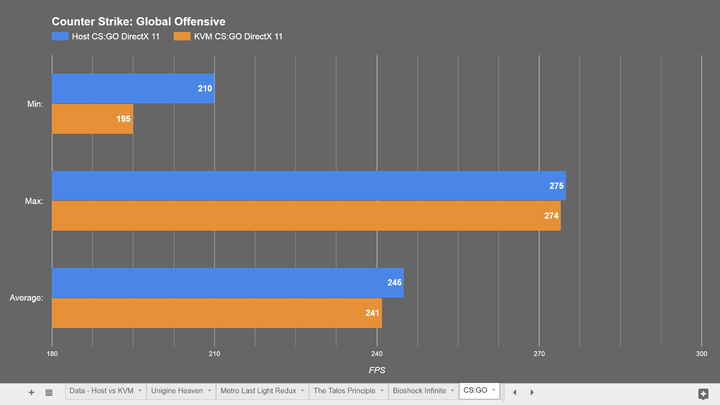

CS:GO only saw a 1.63% difference,

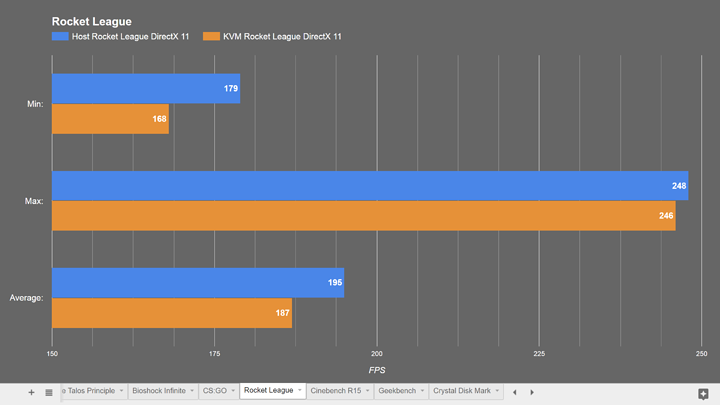

and Rocket League was only a 4% performance delta.

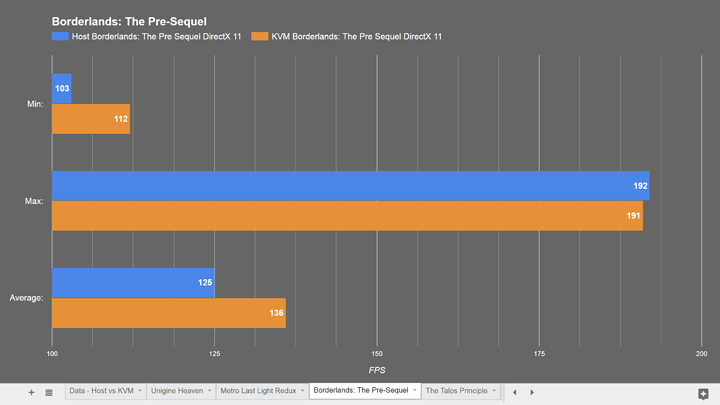

Some games actually ran better in the virtual machine. Whether that was due to something related to disk caching in the VM or some other anomaly, I can’t say.

Borderlands: The Pre-Sequel ran 8.7% faster in the virtual machine

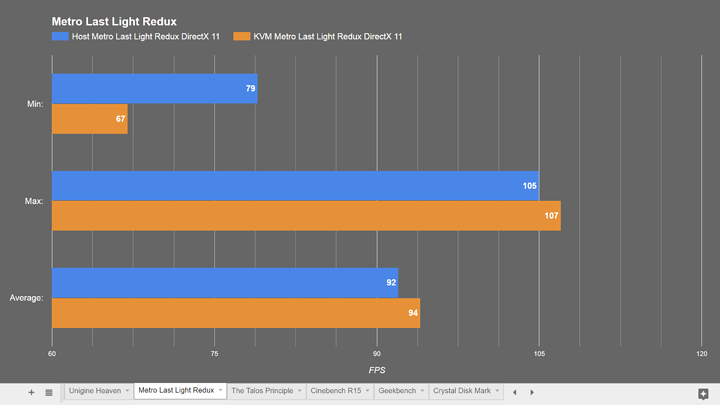

and Metro Last Light ran 2.2% faster.

For kicks I also ran Geekbench’s GPU test and found the VM to be only 1.19% slower than the host, yet again an amazing result.
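To put the GPU numbers side by side, here is a quick sketch that collects the deltas quoted in this post and picks out the extremes. One assumption to flag: where the post only says "difference" (Bioshock Infinite, CS:GO, Rocket League), I'm treating the VM as the slower side.

```python
# VM result relative to the host, in percent. Negative means the VM was slower.
# Signs for the "difference" entries are an assumption, as noted above.
vm_delta_percent = {
    "Unigine Heaven": -2.5,
    "Bioshock Infinite": -0.74,
    "CS:GO": -1.63,
    "Rocket League": -4.0,
    "Borderlands: The Pre-Sequel": 8.7,
    "Metro Last Light": 2.2,
    "Geekbench GPU": -1.19,
}


def extremes(deltas):
    """Return the names of the worst and best VM results."""
    worst = min(deltas, key=deltas.get)
    best = max(deltas, key=deltas.get)
    return worst, best


if __name__ == "__main__":
    worst, best = extremes(vm_delta_percent)
    print(f"worst for the VM: {worst} ({vm_delta_percent[worst]:+.2f}%)")
    print(f"best for the VM:  {best} ({vm_delta_percent[best]:+.2f}%)")
```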

KVM, alongside Intel’s virtualization acceleration technologies, has come a hell of a long way to achieve 95-98% of the performance of the host.

So, TL;DR: don’t worry about performance in a virtual machine. If you have virtualization acceleration enabled, the real-world performance difference with QEMU/KVM will be negligible.
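If you aren't sure whether virtualization acceleration is available on your Linux host, a rough check is to look for the `vmx` (Intel VT-x) or `svm` (AMD-V) CPU flag and for the `/dev/kvm` device node. This is a minimal sketch with a helper name of my own choosing (`cpu_virt_flag`), and it doesn't cover every way acceleration can be disabled (firmware settings, for instance).

```python
import os


def cpu_virt_flag(cpuinfo_text: str) -> str:
    """Return 'vmx' (Intel VT-x), 'svm' (AMD-V), or '' if neither flag is listed."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, rest = line.partition(":")
            flags = rest.split()
            for name in ("vmx", "svm"):
                if name in flags:
                    return name
    return ""


if __name__ == "__main__":
    if os.path.exists("/proc/cpuinfo"):
        with open("/proc/cpuinfo") as f:
            flag = cpu_virt_flag(f.read())
        print("CPU virtualization flag:", flag or "none found")
    # /dev/kvm only appears when the KVM kernel module is loaded and usable
    print("/dev/kvm present:", os.path.exists("/dev/kvm"))
```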

Thanks for reading/watching! If you have any ideas for future videos let me know.