It’s not true, it’s just a joke

I keep getting the urge to convert to rackmount and put it on wheels… idk why.

Since all this is the same brand… it should be compatible.

Supposed to be only one day left… until I get something I ordered 10-11 days ago… fucking newegg.

Once you go rack…

So last night I got to work.

- background

My low power storage system (think ASRock J3455-ITX) had 2x 500GB (2.5") drives configured as LVM. The intended file system was on an LV that was an LVM mirror.

So I replaced the 2x 500G drives with 8TB Toshibas… one at a time, allowing the LVM mirror time to resync.
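For anyone wanting to try the same kind of one-disk-at-a-time swap, here is a rough dry-run sketch of the steps. It only prints commands and runs nothing. None of it comes from the post above: it assumes the LV uses the `raid1` segment type rather than the older `mirror` type, and `vg0`, `data` and `/dev/sdb` are made-up names. Check lvmraid(7) against your own layout before running any of it for real.

```shell
#!/bin/sh
# Dry run: print the LVM commands for rebuilding one leg of a mirrored LV
# after the old disk has been physically swapped for a new one.
# Assumes a raid1-type LV; all names passed in are hypothetical.
plan_mirror_swap() {
    vg=$1; lv=$2; newdev=$3
    echo "pvcreate $newdev"                 # label the new disk as a PV
    echo "vgextend $vg $newdev"             # add it to the volume group
    echo "lvconvert --repair $vg/$lv"       # rebuild the missing mirror leg
    echo "vgreduce --removemissing $vg"     # drop the record of the old disk
    echo "lvs -a -o name,sync_percent $vg"  # watch the resync progress
}
```

Calling `plan_mirror_swap vg0 data /dev/sdb` prints the five commands in order. Repeat for the second disk only once `sync_percent` reports the first resync as complete.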

The backup of my ZFS (zol) system has been running since about 10pm last night… (tiny files and 1Gbps LAN = slow)

Still about 1-2TB left to copy before I dive into the degraded pool issue.

So, I was trying to install Kubernetes on my new shiny Gentoo server, but I cannot get it to work… I think I’m just going to install two Fedora Server VMs and use the VMs for the cluster. I’ll keep using the Gentoo server as a VM host and distcc build server, but I’m losing some serious bragging rights here.

So the fedora systems are going to run as VMs inside your gentoo system??

did I read that correctly?

Yeah. I don’t want to reinstall the base system (Gentoo) but I want something easily supported by Kubeadm for the VMs (Fedora)

if you’re only using one machine, why not use plain old docker?

I just want to learn kubernetes. And I plan on having more machines once I get the moneys

You should probably start with minikube and get comfortable with kubernetes as a user of it, before you go and run your own cluster.

In a typical on-prem kubernetes setup, you’ll usually have an admin host or two to help bootstrap a cluster, or you’ll be bootstrapping one cluster off of another. It’s also unlikely you’ll actually experience what it takes to set one up until you have to deal with remote storage, overlay networks and gateways. Try minikube, get machines (could actually be 5-10 debian VMs if you have the ram and patience), and then look at the setting-it-up-from-scratch docs (start with an etcd cluster and so on).

I don’t believe you. I am going to try this and return to either shame you as a liar or thank you endlessly.

I have been wanting to try this for a while.

x2 on kubeadm, though. I love it.

I hate the samba/ldap integration way… it gives crazy uids, and AD group & OU naming schemes weren’t designed (by most orgs at the start) with linux integration in mind.

Here’s a sample auth line I use in my kickstart scripts:

auth --enableshadow --passalgo=sha512 --krb5realm=EXAMPLE.ORG --krb5kdc=*,EXAMPLE.ORG --krb5adminserver=EXAMPLE.ORG --enablekrb5

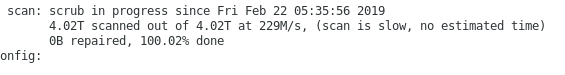

Assuming this scrub finishes without errors, my plan is to upgrade the box as is… then I’m not sure if I will try to upgrade directly to Fedora 28 or 29…

edit:

made it…

Of course zfs is broken after a distro upgrade…

modprobe zfs

modprobe: FATAL: Module zfs not found in directory /lib/modules/4.20.8-100.fc28.x86_64

dkms status

spl, 0.7.12, 4.20.8-100.fc28.x86_64, x86_64: installed

zfs, 0.7.12: added
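In that output, `added` means dkms only has the zfs source registered; it was never built or installed for this kernel, which is why `modprobe` can't find it. A small sketch for spotting that state at a glance, assuming the `name, version, ...: state` line format shown above (newer dkms releases print `name/version`, which the split also tolerates). The function name is mine, not part of dkms.

```shell
#!/bin/sh
# Read `dkms status` output on stdin and print the name of every module
# that is not in the "installed" state (e.g. only "added" or "built").
dkms_not_installed() {
    awk -F': ' 'NF >= 2 && $2 !~ /^installed/ {
        split($1, parts, "[,/]")   # module name comes before the first "," or "/"
        print parts[1]
    }'
}
```

Typical use would be `dkms status | dkms_not_installed`, which should list just `zfs` for the status shown above.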

Just found this in the install log…

Building initial module for 4.20.8-100.fc28.x86_64

Error! Bad return status for module build on kernel: 4.20.8-100.fc28.x86_64 (x86_64)

Consult /var/lib/dkms/zfs/0.7.12/build/make.log for more information.

warning: %post(zfs-dkms-0.7.12-1.fc28.noarch) scriptlet failed, exit status 10

Non-fatal POSTIN scriptlet failure in rpm package zfs-dkms

Non-fatal POSTIN scriptlet failure in rpm package zfs-dkms

Installing : zfs-0.7.12-1.fc28.x86_64

more errors in that make.log file…

r/lib/dkms/zfs/0.7.12/build/module/zfs/vdev_raidz_math_ssse3.o

CC [M] /var/lib/dkms/zfs/0.7.12/build/module/zfs/vdev_raidz_math_avx2.o

CC [M] /var/lib/dkms/zfs/0.7.12/build/module/zfs/vdev_raidz_math_avx512f.o

CC [M] /var/lib/dkms/zfs/0.7.12/build/module/zfs/vdev_raidz_math_avx512bw.o

LD [M] /var/lib/dkms/zfs/0.7.12/build/module/zfs/zfs.o

make[3]: *** [Makefile:1566: module/var/lib/dkms/zfs/0.7.12/build/module] Error 2

make[3]: Leaving directory ‘/usr/src/kernels/4.20.8-100.fc28.x86_64’

make[2]: *** [Makefile:27: modules] Error 2

make[2]: Leaving directory ‘/var/lib/dkms/zfs/0.7.12/build/module’

make[1]: *** [Makefile:739: all-recursive] Error 1

make[1]: Leaving directory ‘/var/lib/dkms/zfs/0.7.12/build’

make: *** [Makefile:608: all] Error 2
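The tail of the log above only shows the `Error 2` cascade as make unwinds; the line that actually broke the build is usually further up. A minimal sketch for pulling out the first real errors, assuming gcc-style `error:` lines or modpost `undefined!` lines (the function name and the pattern list are my own guesses, not anything dkms provides). One thing worth checking once you find it is whether this zfs release supports the 4.20 kernel at all.

```shell
#!/bin/sh
# Print the first few compiler/linker errors (with line numbers) from a
# build log, skipping the trailing "make: *** ... Error N" noise.
# Usage: first_build_errors LOGFILE [MAX_LINES]
first_build_errors() {
    log=$1; max=${2:-5}
    grep -n -E '(error:|fatal error|undefined!)' "$log" | head -n "$max"
}
```

For the build above that would be `first_build_errors /var/lib/dkms/zfs/0.7.12/build/make.log`.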

========

not going to mess with it too much. just going to download Fedora 29 directly and then install zfs again… then import my pool… then restore a few conf files

Any reason for not going with Ubuntu?

See my red hat?

I also have a lot of Fedora at home.

I can confirm those rails do fit on the L4500 case. I’ve got two of them. They’re pretty fiddly getting them mounted both on the case and in a rack, but they are nice high quality rails.

So after a fresh install… still errors…

[root@Storage ~]# zpool list

The ZFS modules are not loaded.

Try running ‘/sbin/modprobe zfs’ as root to load them.

[root@Storage ~]# modprobe zfs

modprobe: FATAL: Module zfs not found in directory /lib/modules/4.20.10-200.fc29.x86_64
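Same symptom as before the reinstall: there is no zfs module file under the running kernel's module directory. A quick sketch for confirming that before digging further (the function name is made up for illustration). If it reports nothing built, `dkms status` and the dkms `make.log` are the next places to look, along with whether the `kernel-devel` package matches the running kernel.

```shell
#!/bin/sh
# Succeed if a kernel module file (plain or compressed) exists for a kernel.
# Usage: module_built NAME [MODULES_DIR]
# MODULES_DIR defaults to the directory for the running kernel.
module_built() {
    name=$1
    dir=${2:-/lib/modules/$(uname -r)}
    [ -n "$(find "$dir" -name "$name.ko*" 2>/dev/null | head -n 1)" ]
}
```

For example: `module_built zfs || echo "no zfs module for $(uname -r)"`.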

I’m going to bed… not fighting this mess anymore today.