I was using FIO to test locally for that exact purpose. I don’t think it’s NFS getting in the way. I can write a test case using sync with FIO that trashes it as well.

Other symptopms, “iowait” on the proxmox summary graph spikes to 50%, then lowers to 10% for several minutes after.

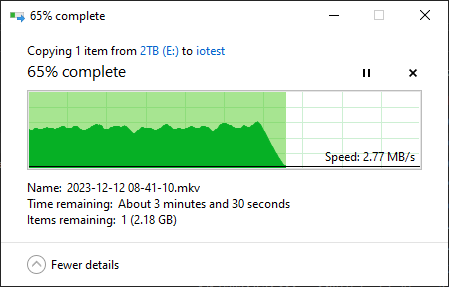

Here is some data if you want to squint at it. I appreciate the time. Really at my wits end.

FIO Testing

# fio --filename=/star/iostat --size=6g --runtime=5m --name=SEQ1MQ8T1 --sync=1 --bs=128k --iodepth=8 --numjobs=1 --rw=readwrite --name=SEQ1MQ1T1 --sync=1 --bs=1m --iodepth=1 --rw=readwrite --numjobs=1

SEQ1MQ8T1: (g=0): rw=rw, bs=(R) 128KiB-128KiB, (W) 128KiB-128KiB, (T) 128KiB-128KiB, ioengine=psync, iodepth=8

SEQ1MQ1T1: (g=0): rw=rw, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=psync, iodepth=1

fio-3.25

Starting 2 processes

SEQ1MQ8T1: (groupid=0, jobs=1): err= 0: pid=2617438: Sun Feb 18 22:16:27 2024

read: IOPS=32, BW=4189KiB/s (4290kB/s)(1227MiB/300004msec)

clat (nsec): min=8015, max=53330, avg=16833.47, stdev=5631.01

lat (nsec): min=8065, max=54092, avg=16999.47, stdev=5757.35

clat percentiles (nsec):

| 1.00th=[ 9536], 5.00th=[10048], 10.00th=[10560], 20.00th=[11456],

| 30.00th=[12224], 40.00th=[12992], 50.00th=[16512], 60.00th=[19072],

| 70.00th=[20608], 80.00th=[21888], 90.00th=[23424], 95.00th=[25728],

| 99.00th=[31616], 99.50th=[33536], 99.90th=[43264], 99.95th=[48896],

| 99.99th=[53504]

bw ( KiB/s): min= 256, max=11008, per=29.63%, avg=4267.59, stdev=1709.76, samples=588

iops : min= 2, max= 86, avg=33.34, stdev=13.35, samples=588

write: IOPS=32, BW=4183KiB/s (4283kB/s)(1225MiB/300004msec); 0 zone resets

clat (msec): min=11, max=5441, avg=30.58, stdev=57.17

lat (msec): min=11, max=5441, avg=30.58, stdev=57.17

clat percentiles (msec):

| 1.00th=[ 15], 5.00th=[ 18], 10.00th=[ 19], 20.00th=[ 21],

| 30.00th=[ 24], 40.00th=[ 26], 50.00th=[ 27], 60.00th=[ 29],

| 70.00th=[ 32], 80.00th=[ 36], 90.00th=[ 43], 95.00th=[ 51],

| 99.00th=[ 80], 99.50th=[ 106], 99.90th=[ 215], 99.95th=[ 321],

| 99.99th=[ 5470]

bw ( KiB/s): min= 256, max= 5632, per=28.45%, avg=4252.76, stdev=873.66, samples=589

iops : min= 2, max= 44, avg=33.22, stdev= 6.82, samples=589

lat (usec) : 10=2.18%, 20=30.54%, 50=17.31%, 100=0.02%

lat (msec) : 20=8.08%, 50=39.22%, 100=2.39%, 250=0.22%, 500=0.04%

lat (msec) : >=2000=0.01%

cpu : usr=0.02%, sys=0.36%, ctx=30307, majf=0, minf=14

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=9819,9803,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=8

SEQ1MQ1T1: (groupid=0, jobs=1): err= 0: pid=2617439: Sun Feb 18 22:16:27 2024

read: IOPS=20, BW=20.5MiB/s (21.5MB/s)(2991MiB/145578msec)

clat (usec): min=68, max=234, avg=107.80, stdev=22.81

lat (usec): min=68, max=235, avg=107.98, stdev=22.95

clat percentiles (usec):

| 1.00th=[ 74], 5.00th=[ 77], 10.00th=[ 80], 20.00th=[ 85],

| 30.00th=[ 88], 40.00th=[ 93], 50.00th=[ 115], 60.00th=[ 122],

| 70.00th=[ 126], 80.00th=[ 130], 90.00th=[ 135], 95.00th=[ 141],

| 99.00th=[ 155], 99.50th=[ 161], 99.90th=[ 194], 99.95th=[ 198],

| 99.99th=[ 235]

bw ( KiB/s): min= 2048, max=55296, per=100.00%, avg=22034.42, stdev=9818.67, samples=278

iops : min= 2, max= 54, avg=21.52, stdev= 9.59, samples=278

write: IOPS=21, BW=21.7MiB/s (22.7MB/s)(3153MiB/145578msec); 0 zone resets

clat (msec): min=22, max=5441, avg=46.05, stdev=98.78

lat (msec): min=22, max=5441, avg=46.07, stdev=98.78

clat percentiles (msec):

| 1.00th=[ 26], 5.00th=[ 28], 10.00th=[ 30], 20.00th=[ 33],

| 30.00th=[ 36], 40.00th=[ 39], 50.00th=[ 41], 60.00th=[ 43],

| 70.00th=[ 46], 80.00th=[ 52], 90.00th=[ 60], 95.00th=[ 71],

| 99.00th=[ 111], 99.50th=[ 199], 99.90th=[ 359], 99.95th=[ 481],

| 99.99th=[ 5470]

bw ( KiB/s): min= 2048, max=30720, per=100.00%, avg=22972.58, stdev=4569.25, samples=281

iops : min= 2, max= 30, avg=22.43, stdev= 4.46, samples=281

lat (usec) : 100=23.01%, 250=25.67%

lat (msec) : 50=39.93%, 100=10.71%, 250=0.55%, 500=0.11%, >=2000=0.02%

cpu : usr=0.04%, sys=0.86%, ctx=32281, majf=0, minf=15

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=2991,3153,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

Run status group 0 (all jobs):

READ: bw=14.1MiB/s (14.7MB/s), 4189KiB/s-20.5MiB/s (4290kB/s-21.5MB/s), io=4218MiB (4423MB), run=145578-300004msec

WRITE: bw=14.6MiB/s (15.3MB/s), 4183KiB/s-21.7MiB/s (4283kB/s-22.7MB/s), io=4378MiB (4591MB), run=145578-300004msec

# zpool iostat -qly 10

capacity operations bandwidth total_wait disk_wait syncq_wait asyncq_wait scrub trim syncq_read syncq_write asyncq_read asyncq_write scrubq_read trimq_write

pool alloc free read write read write read write read write read write read write wait wait pend activ pend activ pend activ pend activ pend activ pend activ

---------- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- ----- -----

star 73.5T 145T 169 1.90K 2.29M 38.9M 17ms 2ms 11ms 2ms 149us 642ns 17ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 149 1.90K 2.71M 38.4M 18ms 2ms 13ms 2ms 256us 660ns 10ms 144us - - 0 1 0 3 0 0 0 0 0 0 0 0

star 73.5T 145T 111 1.88K 894K 38.5M 11ms 2ms 11ms 2ms 837ns 634ns 463ns - - - 0 1 0 1 0 0 0 0 0 0 0 0

star 73.5T 145T 148 1.88K 1.81M 38.2M 14ms 2ms 12ms 2ms 807ns 635ns 4ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 144 1.90K 2.86M 38.8M 19ms 2ms 13ms 2ms 516us 648ns 15ms - - - 0 1 0 1 0 0 0 0 0 0 0 0

star 73.5T 145T 150 1.92K 2.70M 39.3M 26ms 2ms 12ms 2ms 815us 644ns 39ms - - - 0 1 0 0 0 3 0 0 0 0 0 0

star 73.5T 145T 122 1.91K 1.72M 39.2M 16ms 2ms 13ms 2ms 807ns 633ns 13ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 134 1.82K 1.84M 37.3M 17ms 2ms 12ms 2ms 769ns 643ns 15ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 113 1.85K 1014K 37.9M 12ms 2ms 12ms 2ms 793ns 628ns 485us - - - 0 1 0 3 0 0 0 0 0 0 0 0

star 73.5T 145T 147 1.79K 2.52M 36.6M 19ms 2ms 12ms 2ms 822ns 630ns 16ms - - - 0 1 0 10 0 0 0 0 0 0 0 0

star 73.5T 145T 167 14.7K 3.67M 119M 21ms 5ms 12ms 2ms 126us 8us 16ms 2ms - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 148 1.81K 2.03M 37.0M 17ms 2ms 12ms 2ms 13us 642ns 18ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 152 1.67K 1.71M 34.2M 13ms 2ms 11ms 2ms 824ns 643ns 4ms - - - 0 4 0 11 0 0 0 0 0 0 0 0

star 73.5T 145T 122 1.27K 1.12M 26.0M 12ms 1ms 11ms 1ms 805ns 647ns 6ms 661ns - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 149 413 2.42M 6.85M 17ms 463us 11ms 460us 25us 2us 17ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 141 416 1.83M 6.79M 19ms 552us 12ms 551us 92us 531ns 30ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 205 423 3.73M 6.99M 14ms 498us 10ms 497us 65us 521ns 10ms 490ns - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 157 430 2.92M 7.12M 17ms 434us 12ms 433us 868ns 476ns 16ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 178 415 3.44M 6.88M 16ms 429us 11ms 427us 54us 578ns 11ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 129 433 1.00M 7.15M 10ms 422us 10ms 421us 832ns 497ns 158us 394ns - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 165 425 2.23M 6.63M 16ms 540us 11ms 539us 96us 505ns 13ms - - - 0 6 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 293 435 3.34M 6.60M 11ms 617us 10ms 616us 695ns 559ns 4ms - - - 0 1 0 0 0 0 0 0 0 0 0 0

star 73.5T 145T 191 455 4.28M 6.83M 18ms 585us 11ms 584us 77us 492ns 14ms 554ns - - 0 1 0 0 0 0 0 0 0 0 0 0

Settings

# zpool get all

NAME PROPERTY VALUE SOURCE

star size 218T -

star capacity 33% -

star altroot - default

star health ONLINE -

star guid 14090505498423290915 -

star version - default

star bootfs - default

star delegation on default

star autoreplace off default

star cachefile - default

star failmode wait default

star listsnapshots off default

star autoexpand off default

star dedupratio 1.54x -

star free 145T -

star allocated 73.5T -

star readonly off -

star ashift 12 local

star comment - default

star expandsize - -

star freeing 0 -

star fragmentation 24% -

star leaked 0 -

star multihost off default

star checkpoint - -

star load_guid 7069352757609303911 -

star autotrim off default

star compatibility off default

star feature@async_destroy enabled local

star feature@empty_bpobj active local

star feature@lz4_compress active local

star feature@multi_vdev_crash_dump enabled local

star feature@spacemap_histogram active local

star feature@enabled_txg active local

star feature@hole_birth active local

star feature@extensible_dataset active local

star feature@embedded_data active local

star feature@bookmarks enabled local

star feature@filesystem_limits enabled local

star feature@large_blocks active local

star feature@large_dnode enabled local

star feature@sha512 enabled local

star feature@skein enabled local

star feature@edonr enabled local

star feature@userobj_accounting active local

star feature@encryption enabled local

star feature@project_quota active local

star feature@device_removal enabled local

star feature@obsolete_counts enabled local

star feature@zpool_checkpoint enabled local

star feature@spacemap_v2 active local

star feature@allocation_classes enabled local

star feature@resilver_defer enabled local

star feature@bookmark_v2 enabled local

star feature@redaction_bookmarks enabled local

star feature@redacted_datasets enabled local

star feature@bookmark_written enabled local

star feature@log_spacemap active local

star feature@livelist enabled local

star feature@device_rebuild enabled local

star feature@zstd_compress enabled local

star feature@draid enabled local

# arc_summary | grep active

zfs_vdev_async_read_max_active 3

zfs_vdev_async_read_min_active 1

zfs_vdev_async_write_active_max_dirty_percent 60

zfs_vdev_async_write_active_min_dirty_percent 30

zfs_vdev_async_write_max_active 10

zfs_vdev_async_write_min_active 2

zfs_vdev_initializing_max_active 1

zfs_vdev_initializing_min_active 1

zfs_vdev_max_active 1000

zfs_vdev_rebuild_max_active 3

zfs_vdev_rebuild_min_active 1

zfs_vdev_removal_max_active 2

zfs_vdev_removal_min_active 1

zfs_vdev_scrub_max_active 3

zfs_vdev_scrub_min_active 1

zfs_vdev_sync_read_max_active 10

zfs_vdev_sync_read_min_active 10

zfs_vdev_sync_write_max_active 10

zfs_vdev_sync_write_min_active 10

zfs_vdev_trim_max_active 2

zfs_vdev_trim_min_active 1