I’m working on a machine learning project and I’m having trouble scaling training to multiple GPUs. Even with heroic efforts, I’m seeing less than a 40% throughput increase when moving from 1 GPU to 2.

I ran Lambda’s benchmark and saw a 34% throughput increase with 2 GPUs versus 1. Their blog post claims an 80% speedup for 2 GPUs.
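To put the two numbers on the same scale, it can help to express them as scaling efficiency (speedup divided by GPU count, where 1.0 is perfectly linear). This is a minimal sketch assuming that "80% speedup" means 1.8× single-GPU throughput; `scaling_efficiency` is a hypothetical helper, not part of Lambda's benchmark.

```python
def scaling_efficiency(throughput_gain: float, num_gpus: int) -> float:
    """Fraction of ideal linear scaling achieved (hypothetical helper).

    throughput_gain: fractional increase over one GPU, e.g. 0.34 for +34%.
    Assumes the gain is measured against a single-GPU baseline.
    """
    speedup = 1.0 + throughput_gain  # throughput relative to one GPU
    return speedup / num_gpus        # 1.0 would be perfectly linear scaling


measured = scaling_efficiency(0.34, 2)  # my run of Lambda's benchmark
claimed = scaling_efficiency(0.80, 2)   # figure quoted in Lambda's blog post
print(f"measured: {measured:.0%}, claimed: {claimed:.0%}")
```

Under that reading, my run reaches roughly two thirds of linear scaling, while the blog post's figure corresponds to about 90%.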

A few years ago, I was able to get near-linear scaling with multiple GPUs. Since then, I’ve changed GPUs (2080 Tis → 3070 Tis) and my motherboard (X399M Taichi → X399 Phantom Gaming 6).

I’m concerned that part of what made the replacement motherboard so cheap is something in its PCIe layout that limits slot-to-slot bandwidth.

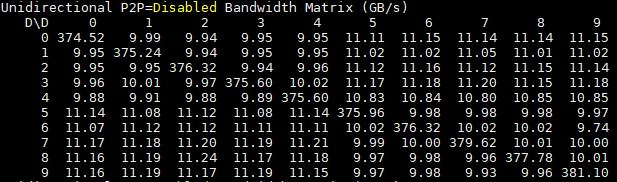

I ran the CUDA BusGrind tool, and I’m seeing card-to-card bandwidth of only 3.32 GB/s:

P2P Bandwidth Matrix (GB/s) - Unidirectional, P2P=Enabled

| D\D | 0 | 1 |
|---|---|---|
| 0 | 257.84 | 3.32 |
| 1 | 3.32 | 263.90 |

P2P Bandwidth Matrix (GB/s) - Bidirectional, P2P=Enabled

| D\D | 0 | 1 |
|---|---|---|
| 0 | 270.60 | 4.88 |
| 1 | 4.98 | 270.33 |
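For a rough sense of scale, the theoretical ceiling of a PCIe 3.0 x16 link follows from 8 GT/s per lane and 128b/130b line encoding. This sketch ignores packet and protocol overhead, so real transfers always land somewhat below it; `pcie_gen3_bandwidth_gbps` is a hypothetical helper, and the measured values are copied from the BusGrind matrices above (row 0, column 1).

```python
def pcie_gen3_bandwidth_gbps(lanes: int = 16) -> float:
    """Theoretical per-direction PCIe 3.0 bandwidth in GB/s (10**9 bytes/s).

    Hypothetical helper: accounts only for line encoding, not for
    packet headers, flow control, or other protocol overhead.
    """
    transfers_per_lane = 8e9          # PCIe 3.0 signals at 8 GT/s per lane
    encoding_efficiency = 128 / 130   # 128b/130b line encoding
    bits_per_s = transfers_per_lane * lanes * encoding_efficiency
    return bits_per_s / 8 / 1e9       # bits -> bytes -> GB


ceiling = pcie_gen3_bandwidth_gbps(16)  # about 15.75 GB/s per direction
uni_measured = 3.32   # BusGrind unidirectional, GPU 0 -> GPU 1
bi_measured = 4.88    # BusGrind bidirectional, GPU 0 <-> GPU 1
print(f"x16 ceiling: {ceiling:.2f} GB/s per direction")
print(f"unidirectional: {uni_measured / ceiling:.0%} of ceiling")
print(f"bidirectional: {bi_measured / (2 * ceiling):.0%} of ceiling")
```

By this back-of-the-envelope measure, the measured P2P numbers sit at roughly a fifth of the x16 theoretical ceiling or less.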

What slot-to-slot bandwidth should I expect between two PCIe Gen 3 x16 slots?