Memory timings on the ROMED8U-2T

I finally got around to fiddling with the memory speed settings on my ASRock Rack ROMED8U-2T. I did not try an actual overclock, as the default 3200MT/s is matched to the IF of my 7443P, and I don’t foresee wanting to run the sticks at higher clocks in any realistic scenario (unless convinced otherwise). But I did play around with timings, mostly to find out whether it would work at all. I’m a total noob at memory tweaking, so I only tried some basic stuff.

Caveat - no way to verify running timings

First, my ROMED8U-2T has a problem that I didn’t see on my H12SSL: it seems impossible to read the SPD info from the memory modules. I could not get it with decode-dimms in Linux, nor with MemTest86; the latter only reports the numbers as missing.

Therefore the only way for me to verify that a timing change took effect is to run MLC and look for a performance difference.
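To make that comparison less of an eyeball exercise, the idle latency figure can be pulled out of saved MLC text output. This is just a minimal sketch: the function name `idle_latency_ns` and the `SAMPLE` text are my own illustration, and it assumes a single-NUMA-node layout like the one in the results further down.

```python
import re

# Hypothetical sample mimicking the idle-latency section of MLC's text output.
SAMPLE = """\
Intel(R) Memory Latency Checker - v3.9
Measuring idle latencies (in ns)...
                Numa node
Numa node            0
       0          94.8

Measuring Peak Injection Memory Bandwidths for the system
"""

def idle_latency_ns(mlc_output: str) -> float:
    """Return the first idle-latency value (ns) found in MLC text output."""
    in_section = False
    for raw in mlc_output.splitlines():
        line = raw.strip()
        if line.startswith("Measuring idle latencies"):
            in_section = True
            continue
        if in_section:
            if line.startswith("Measuring"):
                break  # next section reached without finding a data row
            # A data row is "<node index> <latency>", e.g. "0 94.8"
            m = re.match(r"^\d+\s+(\d+(?:\.\d+)?)$", line)
            if m:
                return float(m.group(1))
    raise ValueError("no idle latency row found")

if __name__ == "__main__":
    print(idle_latency_ns(SAMPLE))
```

Saving one MLC run per BIOS setting to a text file and feeding each file through this gives a single number to compare per setting.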

Anyway, I have essentially the same sticks as @s-m-e , M393A2K43DB3-CWE, 16GB 3200MT/s Dual Rank. My recollection from the other board, where I could read the SPD, is that they run at CL22-22-22-??, where ?? might have been 52 (I did not manage to google it).

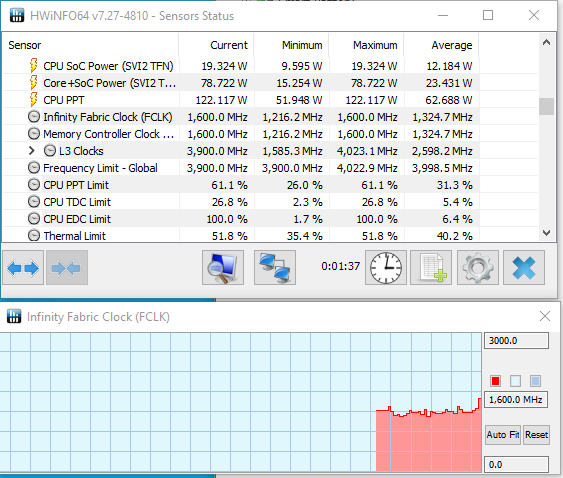

Edit: HWiNFO64 in Windows could read the timings despite the SPD information being unavailable: they are reported as 24-22-22-52.

Test procedure

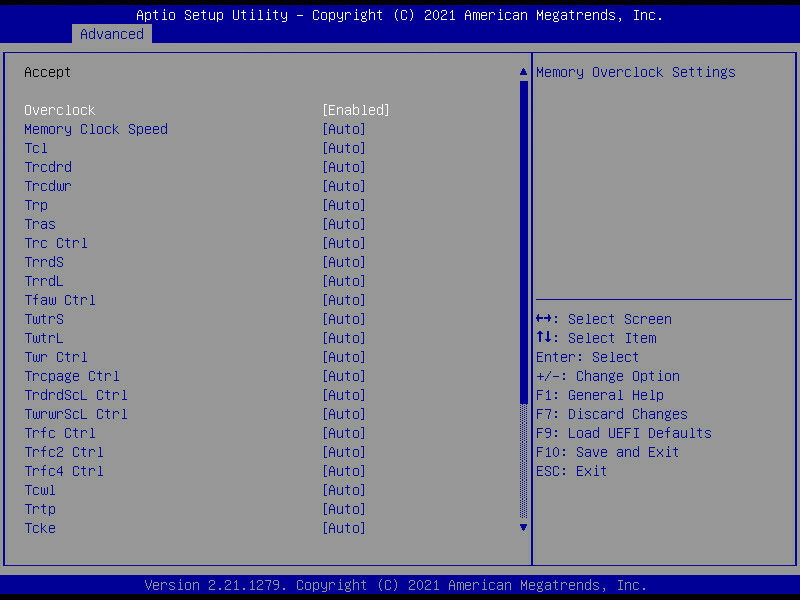

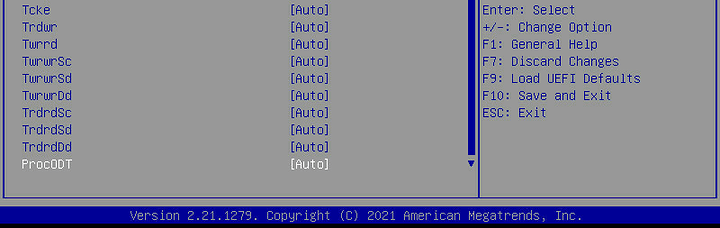

Here are my previous screenshots of the memory timing options:

BIOS menu

I first lowered the CAS Latency (Tcl) in steps of two, down to CL16. At CL16 I got a message at POST that two of the DIMMs did not pass the self-test. That means the other six did, suggesting CL16 is not impossible with the right set of DIMMs.

Then I went up to CL18 and started to change the other primary timings. I settled on 18-18-18-36, which I’m running now. Every change I made produced a consistent difference in MLC results, so I assume the settings were actually applied.
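For a sense of scale, timings given in clock cycles can be converted to nanoseconds. The sketch below assumes the usual DDR relationship (memory clock in MHz is half the MT/s figure); the helper name `cycles_to_ns` is just for illustration. CAS latency is only one component of total access latency, so this is a rough guide, not a prediction of what MLC reports.

```python
def cycles_to_ns(cycles: int, data_rate_mts: float) -> float:
    """Convert a timing in clock cycles to nanoseconds for a DDR data rate."""
    # DDR transfers twice per clock, so the clock in MHz is half the MT/s figure.
    clock_mhz = data_rate_mts / 2
    return cycles * 1000.0 / clock_mhz

if __name__ == "__main__":
    for cl in (24, 22, 18, 16):
        print(f"CL{cl} @ 3200 MT/s = {cycles_to_ns(cl, 3200):.2f} ns")
```

At 3200MT/s one cycle is 0.625 ns, so going from CL24 to CL18 shaves 3.75 ns off the CAS portion alone.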

Stability

Passed:

- 4 rounds of MemTest86 (freeware version)

- One full round of MemTest86+ (FOSS)

- About 1hr of mprime (prime95), mixed FFT sizes

- No ECC errors reported after 2 days
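For anyone wanting to check ECC counters on Linux, one option is the kernel's EDAC sysfs interface. The sketch below is not necessarily how the check above was done; it assumes an EDAC driver is loaded so that `/sys/devices/system/edac/mc/mc*/ce_count` and `ue_count` exist, and the function name `edac_error_counts` is made up for the example.

```python
from pathlib import Path

def edac_error_counts(root: str = "/sys/devices/system/edac/mc") -> dict:
    """Read corrected (ce) and uncorrected (ue) error counts per memory controller."""
    counts = {}
    for mc in sorted(Path(root).glob("mc[0-9]*")):
        entry = {}
        for name in ("ce_count", "ue_count"):
            f = mc / name
            if f.is_file():
                entry[name] = int(f.read_text().strip())
        counts[mc.name] = entry
    return counts

if __name__ == "__main__":
    # An empty dict means no EDAC memory controllers were found (driver not loaded?).
    print(edac_error_counts())
```

Non-zero `ce_count` values after tightening timings would be a hint to back off, even if the stress tests pass.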

Performance

MLC results only.

Default (3200MT/s, 24-22-22-52)

    Intel(R) Memory Latency Checker - v3.9
    Measuring idle latencies (in ns)...
                    Numa node
    Numa node            0
           0          94.8

    Measuring Peak Injection Memory Bandwidths for the system
    Bandwidths are in MB/sec (1 MB/sec = 1,000,000 Bytes/sec)
    Using all the threads from each core if Hyper-threading is enabled
    Using traffic with the following read-write ratios
    ALL Reads        :      144877.1
    3:1 Reads-Writes :      134949.7
    2:1 Reads-Writes :      136703.5
    1:1 Reads-Writes :      137479.7
    Stream-triad like:      140258.9

    Measuring Memory Bandwidths between nodes within system
    Bandwidths are in MB/sec (1 MB/sec = 1,000,000 Bytes/sec)
    Using all the threads from each core if Hyper-threading is enabled
    Using Read-only traffic type
                    Numa node
    Numa node            0
           0        144865.2

    Measuring Loaded Latencies for the system
    Using all the threads from each core if Hyper-threading is enabled
    Using Read-only traffic type
    Inject  Latency Bandwidth
    Delay   (ns)    MB/sec
    ==========================
     00000  555.29   144735.1
     00002  552.16   144774.0
     00008  551.47   145408.6
     00015  545.08   145517.4
     00050  504.37   145663.3
     00100  387.87   146326.6
     00200  133.94   100345.0
     00300  124.09    69892.2
     00400  119.85    53663.7
     00500  117.53    43612.7
     00700  115.41    31760.8
     01000  113.95    22645.1
     01300  112.08    17668.3
     01700  106.92    13725.5
     02500  106.73     9578.5
     03500  105.94     7037.3
     05000  105.78     5121.1
     09000  105.23     3125.4
     20000  104.99     1745.6

    Measuring cache-to-cache transfer latency (in ns)...
    Local Socket L2->L2 HIT  latency        22.6
    Local Socket L2->L2 HITM latency        23.7

Tweaked (3200MT/s, 18-18-18-34)

    Intel(R) Memory Latency Checker - v3.9
    Measuring idle latencies (in ns)...
                    Numa node
    Numa node            0
           0          89.9

    Measuring Peak Injection Memory Bandwidths for the system
    Bandwidths are in MB/sec (1 MB/sec = 1,000,000 Bytes/sec)
    Using all the threads from each core if Hyper-threading is enabled
    Using traffic with the following read-write ratios
    ALL Reads        :      147305.1
    3:1 Reads-Writes :      138777.5
    2:1 Reads-Writes :      140462.3
    1:1 Reads-Writes :      141285.1
    Stream-triad like:      143622.2

    Measuring Memory Bandwidths between nodes within system
    Bandwidths are in MB/sec (1 MB/sec = 1,000,000 Bytes/sec)
    Using all the threads from each core if Hyper-threading is enabled
    Using Read-only traffic type
                    Numa node
    Numa node            0
           0        147259.7

    Measuring Loaded Latencies for the system
    Using all the threads from each core if Hyper-threading is enabled
    Using Read-only traffic type
    Inject  Latency Bandwidth
    Delay   (ns)    MB/sec
    ==========================
     00000  523.11   147208.4
     00002  521.15   147108.9
     00008  532.44   146583.0
     00015  530.16   146652.3
     00050  492.74   147099.2
     00100  347.49   149592.6
     00200  128.43   100264.8
     00300  118.34    69817.3
     00400  114.41    53619.9
     00500  112.13    43582.8
     00700  110.17    31723.3
     01000  108.72    22626.7
     01300  107.31    17668.1
     01700  101.82    13739.7
     02500  102.47     9599.2
     03500  101.95     7059.3
     05000  101.69     5144.6
     09000  103.32     3134.0
     20000  101.01     1768.0

    Measuring cache-to-cache transfer latency (in ns)...
    Local Socket L2->L2 HIT  latency        22.7
    Local Socket L2->L2 HITM latency        23.8

About a 5% decrease in idle latency (94.8 ns down to 89.9 ns), with peak read bandwidth up by roughly 1.7%.
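The arithmetic behind that summary, as a quick sketch using three numbers copied from the two MLC runs above (the helper name `pct_change` is just for illustration):

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100.0

# (default, tweaked) pairs taken from the MLC output above
results = {
    "idle latency (ns)": (94.8, 89.9),
    "ALL Reads (MB/s)": (144877.1, 147305.1),
    "loaded latency, 0 inject delay (ns)": (555.29, 523.11),
}

if __name__ == "__main__":
    for name, (before, after) in results.items():
        print(f"{name}: {pct_change(before, after):+.1f}%")
```

Negative is better for the latency rows and positive is better for the bandwidth row.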

Conclusions

It’s possible to lower the timings on the ASRock Rack ROMED8U-2T without the system complaining just for the sake of it. What I haven’t tried are any subtimings, as my understanding of memory timing tweaking is quite shallow. But I’m happy to take suggestions!

I don’t know of any concrete workloads of mine where these settings would make a difference. It’s fun to optimize for the OCD of it, and I would probably run lower-than-spec timings if I keep this board for my main work machine, “because I can”. However, there are still some aspects of it that are less than optimal (e.g. the lack of a VRM temp sensor) and might lead me to put it in my server instead.

Btw:

If I were to check this, how could I verify the resulting FCLK on Linux?