TL;DR: Running Windows 10’s “Optimize Drives” on a thinly provisioned drive (a VM backed by a qcow2 file) makes the size on disk grow. I would expect it to shrink due to trim; however, trim seems to happen independently of optimization, as soon as data is deleted. The growth concerns me because it looks like the result of something fishy, and it would be Windows’ default behaviour unless action is taken to prevent it.

I’m benchmarking different storage models for hosting VMs under Linux/KVM (Ubuntu 20.04.2). This revealed something unexpected (to me) in how Windows 10 handles sparse files. While Linux guests behave as expected, Win10 guests seem to do something weird when trying to “optimize” the drive.

I have two qcow2 files that store VMs, one with Ubuntu 20.04 and one with Win10 (installed recently from the 21H1 ISO). Both have libvirt XML entries equivalent to this:

<disk type="file" device="disk">
  <driver name="qemu" type="qcow2" cache="none" io="native" discard="unmap" detect_zeroes="unmap"/>
  <source file="/flash/vmbacking/win_root.qcow2" index="2"/>
  <backingStore/>
  <target dev="sda" bus="scsi"/>
  <boot order="1"/>
  <alias name="scsi0-0-0-0"/>
  <address type="drive" controller="0" bus="0" target="0" unit="0"/>
</disk>
...
<controller type="scsi" index="0" model="virtio-scsi">
  <alias name="scsi0"/>
  <address type="pci" domain="0x0000" bus="0x04" slot="0x01" function="0x0"/>
</controller>

They both live on ZFS on top of a single NVMe drive. Here’s the output of ls -lsh:

1,5G -rw-r--r-- 1 libvirt-qemu kvm 3,8G maj 31 22:45 buntu.qcow2
11G -rw-r--r-- 1 libvirt-qemu kvm 23G maj 31 23:06 win_root.qcow2

The leftmost number is the size on disk, which I take to be what matters. Now, when I create a 1 GB file on each drive, the numbers to the left grow by 1 GB, as expected. When I remove the file on Linux, the number stays the same until I run “fstrim /”, then it shrinks by the same amount. This is also expected (there is no “discard” option in fstab).
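For anyone who wants to track the same two numbers programmatically, here is a minimal sketch of how the size on disk and the apparent size can be read for a single file. The helper name `disk_usage` and the throwaway temp file in the demo are my own illustrations, not part of the setup above. It relies on `st_blocks` being counted in 512-byte units, which is the case on Linux.

```python
import os
import tempfile


def disk_usage(path):
    """Return (apparent_bytes, allocated_bytes) for a file.

    apparent_bytes  = logical length of the file (the right-hand size in ls -ls)
    allocated_bytes = space actually occupied (the leftmost column in ls -ls)
    """
    st = os.stat(path)
    # On Linux, st_blocks is counted in 512-byte units,
    # independent of the filesystem's own block size.
    return st.st_size, st.st_blocks * 512


if __name__ == "__main__":
    # Demo on a throwaway sparse file: 64 MiB long, but nothing written to it.
    with tempfile.NamedTemporaryFile() as f:
        f.truncate(64 * 1024 * 1024)
        apparent, allocated = disk_usage(f.name)
        print(f"apparent:  {apparent} bytes")
        print(f"allocated: {allocated} bytes")
```

Pointing `disk_usage` at the qcow2 file before and after a delete, an fstrim, or an “optimize” run gives the same information as the two size columns of `ls -ls`.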

However, when I remove the file on Win10, it shrinks automatically after a couple of seconds. OK fine, maybe Win10 implements some kind of autotrim? No problem.

The weird thing is the “Optimize Drives” dialogue in Win10. If I nevertheless try to analyze or optimize the drive, it takes a huge amount of time (sometimes more than an hour for optimize), and the size on disk grows again:

# before removing the 1 GB file

> ls -ls

11741509 -rw-r--r-- 1 libvirt-qemu kvm 24267128832 maj 30 09:47 win_root.qcow2

# before "optimize":

> ls -ls

10723573 -rw-r--r-- 1 libvirt-qemu kvm 24267259904 maj 30 18:08 win_root.qcow2

# after "optimize":

> ls -ls

11446869 -rw-r--r-- 1 libvirt-qemu kvm 24267456512 maj 30 20:19 win_root.qcow2
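The differences between these three listings can be worked out from the leftmost column. The sketch below does the arithmetic, assuming that `ls -s` reports 1 KiB blocks (the GNU ls default when no block-size options or environment variables are set). The dictionary keys and the helper `delta_mib` are names I made up for illustration.

```python
# Assumption: ls -s counts in 1 KiB blocks (GNU ls default).
KIB = 1024
MIB = 1024 * 1024

# Leftmost column of the three "ls -ls" listings above.
blocks = {
    "before_delete": 11741509,
    "before_optimize": 10723573,
    "after_optimize": 11446869,
}


def delta_mib(start, end):
    """Change in allocated size between two listings, in MiB."""
    return (blocks[end] - blocks[start]) * KIB / MIB


freed_by_trim = -delta_mib("before_delete", "before_optimize")
regrown_by_optimize = delta_mib("before_optimize", "after_optimize")
net_change = delta_mib("before_delete", "after_optimize")

print(f"freed after deleting the file: {freed_by_trim:.1f} MiB")
print(f"regrown after optimize:        {regrown_by_optimize:.1f} MiB")
print(f"net change overall:            {net_change:.1f} MiB")
```

Under that block-size assumption, the delete frees close to the full 1 GB and the optimize run puts roughly 70% of it back.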

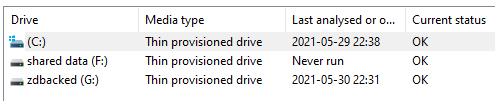

I.e. the file gains 700Mb (after having lost 1Gb) when trying to “optimize” from within Windows. Windows seems to detect it correctly as a “thin provisioned drive”:

C: is the drive in question. I get the same behaviour for G: which is backed by a sparse ZVOL (created with -s), however here the growth after “optimize” is much smaller, about 15Mb (though G is empty while C is also the boot drive, so that probably explains the difference). However, what I don’t get are the following:

- Since Windows clearly trims immediately when a file is deleted, why does it also try to optimize?

- Why is the fs growing due to “optimizing”?

- Does “Optimize” actually try to defrag the drive?

I assume that I can turn off the automatic optimization of drives, but since Windows should understand sparse storage by now, I don’t get why it even tries. In my past experience Windows has handled sparse drives correctly, trimming rather than trying to defrag. It seems like a source of trouble down the road if Windows’ default behaviour implies doing something weird and unneeded. Or am I totally mistaken about how Windows should handle sparse drives?