that’s awesome!! what kinda perf you seeing? tokens/sec on mistral 70b for example

Would be good to get TFLOPS numbers for flash-attention

I've had almost exactly the same setup as in the video sitting here for the last few months, waiting for me to replace my home server hardware (A2000/5700/B450D4U) with it:

RTX 4000 Ada

Ryzen 7900

ASrockRack B650D4U-2L2T motherboard

An 80+ Titanium power supply (specifically one with high idle efficiency, as that is not really covered by the 80+ rating)

A Coral Dual Edge TPU for running models for Frigate

A Crucial T705 4TB PCIe 5 SSD (only one m.2 slot on the board)

And a 1.5TB Optane drive for the write-heavy stuff

Poor thing is gathering dust waiting for me to find time

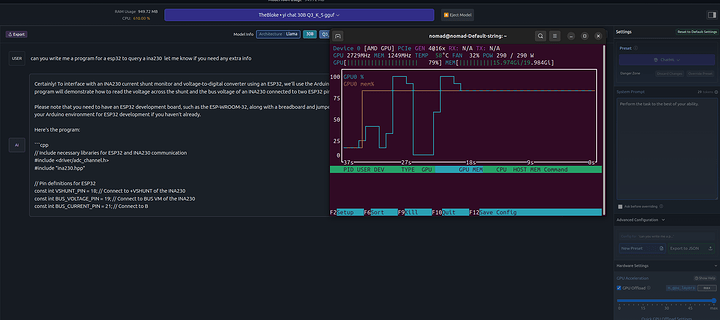

I get an eval rate of around 6.21 tokens/s on my RTX A4500 with the wizard-vicuna-uncensored:30b model running on Unraid. Is that good or not? I mean, it feels runnable.

> i mean it feels runnable.

That's around the speed you get CPU-only on a 7900, give or take.

Hm, OK, I tested with llama2:13b-chat-q6_K --verbose, which fit fully into VRAM, and I get about 34.38 tokens/s. So the bigger the model, the more of it spills over into my DDR4 and the more it uses the CPU, I see.
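For comparing numbers like these across setups: as far as I know, the eval rate that `--verbose` prints is just generated tokens divided by generation time, and Ollama's HTTP API reports the same two quantities as `eval_count` and `eval_duration` (in nanoseconds). A minimal sketch of that arithmetic, using made-up sample values rather than anyone's real measurements:

```python
def tokens_per_second(eval_count: int, eval_duration_ns: int) -> float:
    """Generation speed from a token count and a duration in nanoseconds."""
    if eval_duration_ns <= 0:
        raise ValueError("eval_duration_ns must be positive")
    # Convert nanoseconds to seconds before dividing.
    return eval_count / (eval_duration_ns / 1e9)


if __name__ == "__main__":
    # Hypothetical response fields, for illustration only.
    sample = {"eval_count": 256, "eval_duration": 8_000_000_000}
    rate = tokens_per_second(sample["eval_count"], sample["eval_duration"])
    print(f"eval rate: {rate:.2f} tokens/s")
```

Prompt processing is reported separately, so this only covers the generation side.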

I'm downloading some 30b models in the sub-20GB range and will test them.

It looks like my RTX A2000 6 GB, which is all I have to play with, is nowhere CLOSE to having enough VRAM or being powerful enough to play with the 70b model.

Bummer.
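A rough way to sanity-check this before downloading anything is to estimate the size of the weights from the parameter count and the quantization width. This is a back-of-the-envelope sketch only: the bits-per-weight figures are approximate, and the `overhead_gb` allowance for KV cache and runtime buffers is a guess of mine, not a measured value.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """True if the weights plus an assumed overhead fit in the given VRAM."""
    needed = weight_size_gb(params_billion, bits_per_weight) + overhead_gb
    return needed <= vram_gb


if __name__ == "__main__":
    # (label, parameters in billions, approximate bits per weight) -- assumptions
    models = [("13b @ ~6.6 bpw", 13, 6.5625),
              ("30b @ ~4.5 bpw", 30, 4.5),
              ("70b @ ~4.5 bpw", 70, 4.5)]
    for vram in (6, 20):
        for label, params, bpw in models:
            size = weight_size_gb(params, bpw)
            verdict = "fits" if fits_in_vram(params, bpw, vram) else "spills to RAM"
            print(f"{vram:>2} GB VRAM | {label}: ~{size:.1f} GB -> {verdict}")
```

Anything that does not fit ends up partly in system RAM, which is where the tokens/s drop mentioned earlier in the thread comes from.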

edit

In the latest video, @wendell mentioned using a WebUI for AUTOMATIC1111 (GitHub - AbdBarho/stable-diffusion-webui-docker: Easy Docker setup for Stable Diffusion with user-friendly UI)

but the error message that I am getting is:

ubuntu@nvidia-ai:~/stable-diffusion-webui-docker$ sudo docker compose --profile download up --build

[sudo] password for ubuntu:

WARN[0000] /home/ubuntu/stable-diffusion-webui-docker/docker-compose.yml: `version` is obsolete

[+] Building 0.8s (6/8) docker:default

=> [download internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 185B 0.0s

=> [download internal] load metadata for docker.io/library/bash:alpine3.19 0.4s

=> [download internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> CACHED [download 1/4] FROM docker.io/library/bash:alpine3.19@sha256:5353512b79d2963e92a2b97d9cb52df72d32f94661aa825fcfa 0.0s

=> [download internal] load build context 0.0s

=> => transferring context: 128B 0.0s

=> ERROR [download 2/4] RUN apk update && apk add parallel aria2 0.4s

------

> [download 2/4] RUN apk update && apk add parallel aria2:

0.248 runc run failed: unable to start container process: error during container init: unable to apply apparmor profile: apparmor failed to apply profile: write /proc/self/attr/apparmor/exec: no such file or directory

------

failed to solve: process "/bin/sh -c apk update && apk add parallel aria2" did not complete successfully: exit code: 1

AppArmor in my privileged Ubuntu 22.04 LTS LXC container is already set to unconfined in my <<CTID>>.conf in Proxmox 7.4-17.

My RTX A2000 6 GB has been passed through to the LXC container successfully. I've also got the Nvidia Container Toolkit installed, and the sample workload of running nvidia-smi ran successfully as well.
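The runc error above complains that `/proc/self/attr/apparmor/exec` does not exist inside the container. One way to see what the container actually exposes is to probe for that path alongside the usual AppArmor kernel interfaces. This is only a diagnostic sketch, not a fix; the first two paths are the locations I would expect on a typical Linux host and are my assumption about what is worth checking here.

```python
import os

# Paths to probe. Only the last one is taken from the error message;
# the other two are assumed standard AppArmor locations.
APPARMOR_PATHS = [
    "/sys/module/apparmor/parameters/enabled",
    "/sys/kernel/security/apparmor",
    "/proc/self/attr/apparmor/exec",
]


def probe_paths(paths):
    """Map each path to True/False depending on whether it exists."""
    return {path: os.path.exists(path) for path in paths}


if __name__ == "__main__":
    for path, present in probe_paths(APPARMOR_PATHS).items():
        status = "present" if present else "MISSING"
        print(f"{status:8} {path}")
```

Running it both on the Proxmox host and inside the LXC container should show whether the container sees a different set of interfaces than the host does.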

I used LM Studio and just picked the model that had the most parameters that my GPU was capable of running.

I have a 7900 XT 20GB; other than sometimes hanging at the start, it's quick.

It glitches out pretty badly sometimes and just starts putting out trash, but otherwise it's for sure chatting away.