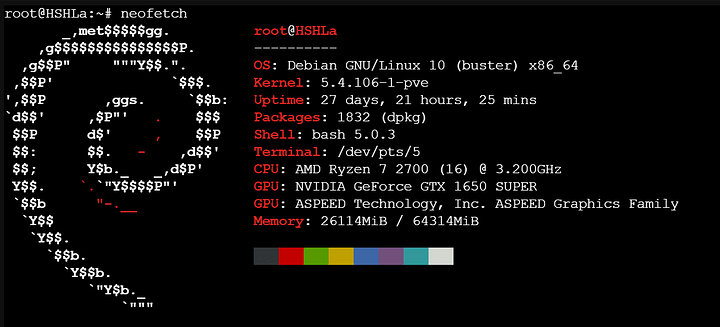

WOW, sweet…can’t see you needing to upgrade soon! Nice choices. Mine is similar but way less powerful.

I ran my storage on Proxmox via ZFS with a guide from here.

I also then ran Plex in a Debian LXC container with GPU passthrough, which has been working out well for me so far. I may move to TrueNAS to make it a little easier to monitor my ZFS pools. It may be the easy way…but I can mess around more once I have some more knowledge.

I run Pi-hole with recursive DNS…I’m not sure exactly what that is, but I made it work. I’m learning though.

Not nearly as powerful as your rig (which is awesome btw), but the CPU is up for an upgrade… I just have to decide if I want to put the 3700x or the cheap 3900XT in it. I may do the 3700x and use the 3900x in my big rig with a new GPU.

I do have the same goals as you. I’m exploring the posts by @PhaseLockedLoop in this series he did.