Hello,

I am a Linux beginner (I only have an Ubuntu NAS running universalmediaplayer and a network share) and I want to build a new NAS in the future.

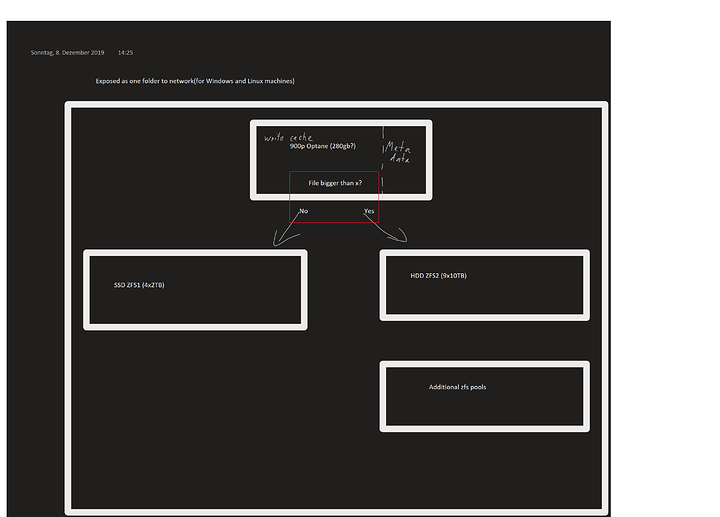

The plan is to run 4x 2TB Intel 600p SSDs (or 605p when they come out), 9x 10TB Seagate enterprise HDDs, and probably a 900p as a write cache.

All of them should appear as one network drive (so far so good).

But I want to sort the files by size automatically and store them on either the SSDs or the HDDs, because the NAS will hold a LOT (1 million+) of small files (5 KB to 5 MB) and a good number (fewer than 50k) of large files (100 MB+).

I made a picture to illustrate this idea.

Is there a solution for this? Can a network folder write only to the cache drive, have the files moved to the SSD or HDD pool according to their size, and still show everything in the same folder structure?

I know a bit of Python and could write a script to move files from one folder to another (it would probably be a 10-liner), but I have no idea if or how I could do this at the disk/logical volume level.
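To make the folder-level part concrete, here is a rough sketch of what such a script might look like. It only covers the "move by size" step, not the single merged view at the volume level, which is the part I am still missing. The 5 MiB cutoff and the names `SIZE_THRESHOLD`, `pick_pool` and `sort_cache` are my own placeholders, not something from an existing tool.

```python
import shutil
from pathlib import Path

# Assumed cutoff: files up to 5 MiB go to the SSD pool, larger ones to the HDD pool.
SIZE_THRESHOLD = 5 * 1024 * 1024


def pick_pool(size, ssd_root, hdd_root, threshold=SIZE_THRESHOLD):
    """Return the pool root a file of `size` bytes should live on."""
    return ssd_root if size <= threshold else hdd_root


def sort_cache(cache_root, ssd_root, hdd_root, threshold=SIZE_THRESHOLD):
    """Move every regular file out of the cache into the SSD or HDD pool.

    The path relative to the cache root is kept, so both pools end up
    mirroring the same folder structure. Returns the new file paths.
    """
    cache_root = Path(cache_root)
    ssd_root = Path(ssd_root)
    hdd_root = Path(hdd_root)
    moved = []
    # sorted() builds the full list first, so moving files does not disturb the walk.
    for src in sorted(cache_root.rglob("*")):
        if src.is_symlink() or not src.is_file():
            continue  # skip directories, symlinks and special files
        pool = pick_pool(src.stat().st_size, ssd_root, hdd_root, threshold)
        dest = pool / src.relative_to(cache_root)
        dest.parent.mkdir(parents=True, exist_ok=True)
        shutil.move(str(src), str(dest))
        moved.append(dest)
    return moved
```

It would be called with something like `sort_cache("/mnt/cache", "/mnt/ssd", "/mnt/hdd")` (made-up mount points) from a cron job. Note that it overwrites nothing on purpose but also does not check for files that are still being written, so a real version would need some care there.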

Edit1:

I don't care which operating system is used if you have an idea, as long as it is free or a one-time purchase (unRAID would be OK).