What I suspect happened to you is that pipewire got installed and screwed over your defaults. Pipewire will be the standard going forward and I see no reason not to make the jump today if possible, but yes, the more exotic your setup the greater the chance you will need to tweak some things.

These are the latest configuration docs: Config PipeWire · Wiki · PipeWire / pipewire · GitLab

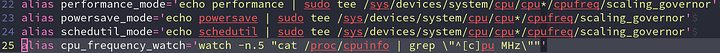

The TLDR: Copy /usr/share/pipewire/pipewire.conf to either /etc/pipewire/pipewire.conf (for system-wide config) or ~/.config/pipewire/pipewire.conf (for user-specific configuration). Then set these options in the context.properties section as follows, and make sure they are uncommented. This should take care of the first part of your problem.

default.clock.rate = 192000

default.clock.allowed-rates = [ 192000 ]

default.clock.min-quantum = 32

default.clock.max-quantum = 8192

default.clock.quantum = 1024

Make sure to restart pipewire to make the changes take effect. On most setups it runs as a user service, so that is systemctl --user restart pipewire.
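If you want to double check the values before restarting, here is a minimal Python sketch that reads the five settings and looks for obvious contradictions. The helper names (`parse_clock_settings`, `check_clock_settings`) are made up for this post, the parser only understands simple `key = value` lines, and the two rules it checks (the rate is listed in allowed-rates, the quantum sits between min and max) are my own sanity checks, not something PipeWire itself is documented to enforce this way.

```python
import re

# The five settings from above, as they would appear uncommented in pipewire.conf.
CONFIG_TEXT = """
default.clock.rate = 192000
default.clock.allowed-rates = [ 192000 ]
default.clock.min-quantum = 32
default.clock.max-quantum = 8192
default.clock.quantum = 1024
"""

REQUIRED = ("rate", "allowed-rates", "min-quantum", "max-quantum", "quantum")


def parse_clock_settings(text):
    """Collect uncommented default.clock.* lines into {key: [ints]}."""
    settings = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # ignore anything after '#'
        match = re.match(r"default\.clock\.([\w-]+)\s*=\s*(.+)", line)
        if match:
            key, value = match.groups()
            numbers = [int(n) for n in re.findall(r"\d+", value)]
            if numbers:
                settings[key] = numbers
    return settings


def check_clock_settings(settings):
    """Return a list of human-readable problems (empty list means it looks fine)."""
    missing = [key for key in REQUIRED if key not in settings]
    if missing:
        return ["missing or commented out: " + ", ".join(missing)]
    problems = []
    rate = settings["rate"][0]
    if rate not in settings["allowed-rates"]:
        problems.append(f"rate {rate} is not in allowed-rates")
    low = settings["min-quantum"][0]
    high = settings["max-quantum"][0]
    quantum = settings["quantum"][0]
    if not low <= quantum <= high:
        problems.append(f"quantum {quantum} is outside {low}..{high}")
    return problems


print(check_clock_settings(parse_clock_settings(CONFIG_TEXT)))
```

To run it against your real file, replace `CONFIG_TEXT` with the contents of whichever pipewire.conf you edited.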

For the 24 bit part, and from my understanding, pipewire should handle this natively: if a sink gets a 24 bit sample but can only handle 16 bit, the sample gets downcast to 16 bit. You can’t ever get better quality than your source provides you, even if your sink is capable of higher bit depths. It’s just like trying to enlarge a 1080p picture to a 1440p resolution: it will always be a bit janky no matter the amount of FSR and DLSS you throw at it.

Ah, let me try to explain these terms briefly. It will do you good in the long term regardless; if you are going to do any kind of live audio editing, you need to know these things. There are five terms that are used when discussing sound: Bit depth, Frequency, Latency, Sample and Buffer.

A sound from the computer is simply a number of different samples played at a specific frequency. If your frequency (aka sample rate) is 44.1 kHz, then each sample is 1/44100 of a second long. For 192 kHz, it’s 1/192000 of a second long.

Latency is the time it takes the computer to process a sound, so the time from when it is received to when it actually plays. This obviously varies with how busy the PC is, OS scheduling and so on. Suffice to say sound latency is usually 10-25 milliseconds, often longer.

If we were to play a sound and have no buffer, any stutter in the computer would be noticeable - and the computer has tons of microstutters. Every 0.01s or so, for instance (the exact interval depends on how the kernel is configured), the Linux kernel scheduler steps in and decides who gets to use the available CPUs for the next slice of time. Add to that button presses, mouse clicks and network packet interrupts, and you can see our stream would be interrupted rather rudely quite often. That’s why we have a sample buffer, which in this instance is a plain old FIFO pipe (aka queue).

The sample buffer is usually big enough to carry one full round trip of latency (e.g. 10-25 ms). But since the buffer is a FIFO, every sample has to wait for the ones queued ahead of it, so the queue itself guarantees a certain number of ms of latency. This guaranteed latency needs to be bigger than the processing latency, but not so big as to completely displace the sound.
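To make the FIFO idea a bit more concrete, here is a toy Python sketch. It is not how pipewire is implemented, just a simulation under made-up assumptions: the sound card plays exactly one sample per tick, and a hypothetical producer stalls for three ticks and then delivers four samples at once. `count_underruns` is a name I invented for the example.

```python
from collections import deque


def count_underruns(prefill, arrivals):
    """Play one sample per tick from a FIFO and count ticks with nothing to play.

    prefill  -- samples of silence queued before playback starts
    arrivals -- how many new samples the producer delivers on each tick
    """
    fifo = deque([0] * prefill)
    underruns = 0
    for tick, count in enumerate(arrivals):
        fifo.extend([tick] * count)  # producer appends at the back
        if fifo:
            fifo.popleft()           # playback consumes from the front
        else:
            underruns += 1           # queue ran dry: this is the audible glitch
    return underruns


# Hypothetical producer: stalls for three ticks, then catches up with four samples.
stalling_producer = [0, 0, 0, 4] * 3

for prefill in (0, 2, 3):
    print(prefill, count_underruns(prefill, stalling_producer))
```

The point is the trade-off: a prefill at least as long as the worst stall removes the glitches, but every sample queued ahead is also latency you are guaranteed to pay.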

Now to the million dollar question: if the buffer queue has 100 samples, at a frequency of 192 kHz, how much guaranteed latency will that be? Why, that would be 100/192000 seconds of latency, or roughly half a millisecond. What is the best buffer size? Well, certainly not 64 000, as that would be 1/3 of a second of guaranteed latency - but not 1 either, as that would mean sound comes out completely chopped up and garbled. So, the buffer size is merely how many samples to store ahead. Usually we want a few tens of ms of buffer at most, so 8 000 (about 42 ms) might be a sensible upper limit on a 192 kHz system.
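The arithmetic is just samples divided by sample rate, and it is easy to play with in Python. A small sketch, where both function names are mine and the 10-25 ms targets are simply the latency range mentioned earlier:

```python
def buffer_latency_ms(samples, rate_hz):
    """Guaranteed latency of a full buffer, in milliseconds."""
    return samples / rate_hz * 1000


def samples_for_latency(latency_ms, rate_hz):
    """Buffer size (in samples) that holds the given number of milliseconds."""
    return round(latency_ms / 1000 * rate_hz)


RATE = 192_000

# Buffer sizes discussed above, plus the quantum values from the config.
for samples in (1, 100, 1024, 8000, 8192, 64_000):
    print(f"{samples:>6} samples -> {buffer_latency_ms(samples, RATE):8.3f} ms")

# Going the other way: how many samples cover 10 ms and 25 ms at 192 kHz?
for target_ms in (10, 25):
    print(f"{target_ms} ms -> {samples_for_latency(target_ms, RATE)} samples")
```

The same buffer size means a different latency at a different rate, so if you ever drop back to 48 kHz the numbers need to be redone.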

That just about concludes the quick crash course about PC sound design. “Wait”, I hear you say, “What about Bit depth?” Well, that’s just how large each sample is. There are four common variants: 8, 16, 24 and 32. 8 is NES grade sound, 16 is CD music sound, 24 is what you usually use in professional editing and 32 is, well, past the diminishing returns, although it could be interesting for some scientific use cases. As far as the PC goes, higher bit depth → slightly higher latency and a much larger buffer size in bytes.
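To see how bit depth feeds into buffer size, here is a rough Python sketch. It assumes stereo and tightly packed samples (so a 24 bit sample takes exactly 3 bytes); real hardware often stores 24 bit audio in 4 byte containers, in which case the 24 bit row would match the 32 bit one. `buffer_size_bytes` is a name I made up for the example.

```python
def buffer_size_bytes(samples, bit_depth, channels=2):
    """Memory needed for a buffer, assuming packed samples (an assumption)."""
    return samples * channels * bit_depth // 8


SAMPLES = 8000  # the buffer size used as an example above

for bit_depth in (8, 16, 24, 32):
    size = buffer_size_bytes(SAMPLES, bit_depth)
    print(f"{bit_depth:>2} bit: {size} bytes")
```

The number of samples in the buffer (and so the guaranteed latency from the queue) stays the same; what grows with bit depth is how many bytes have to be moved around for each of them.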

I understand if you want to wait for now, however I still strongly recommend you read up on it as soon as possible, if you do anything even remotely close to audio processing on Linux. Pipewire will kill off PulseAudio completely by 2024, and will probably come as default as soon as Ubuntu 22.10 - maybe even 22.04, though that looks less likely right now.

The fact that it can handle everything required for remote input/output streams and screen sharing makes it the perfect companion to Wayland and libinput.