As someone who’s interested in computer graphics, I have been asking for hardware-accelerated raytracing for years. As y’all know, Nvidia recently announced that their overpriced, uhm, I meant new cards will be able to do just that.

IMO Nvidia has done a really bad job of showing what the technology can do. A certain shill article wasn’t particularly helpful either. In this post I’ll show the limits of current game engines and how raytracing can remove them.

Reflections

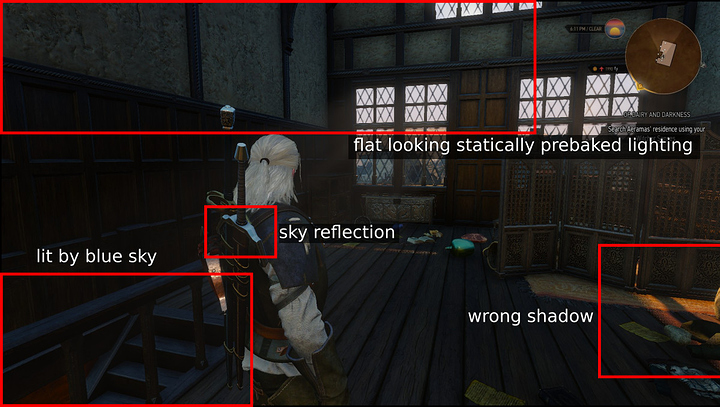

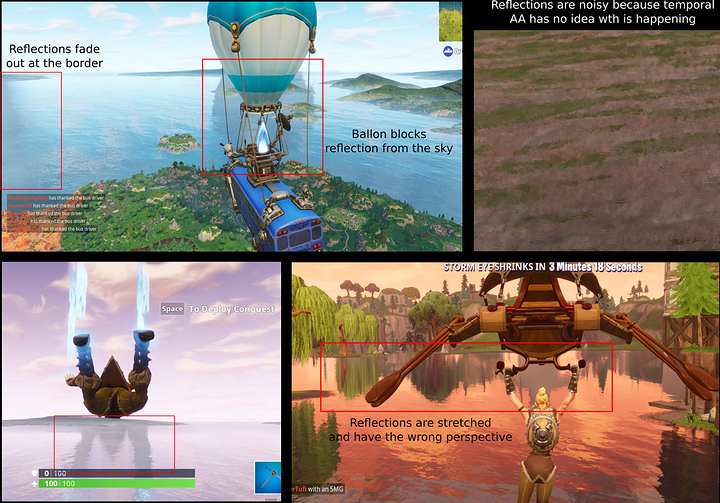

Reflections are the most obvious example. Current technology can only reflect objects that are on screen or captured in light probes. This causes obvious artifacting:

Raytracers don’t care where an object is. If it’s in the scene it can be reflected. Future games can use the current technology where possible and raytracing only for those pixels where conventional methods fail. This way the performance impact would be less than tracing everything while still delivering good results.
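Here is a rough sketch of that hybrid idea in Python. Everything in it is made up for illustration: `screen_space_hit` stands in for a conventional screen-space reflection lookup that returns `None` when the reflected object is off screen, and `trace_ray` stands in for the expensive raytraced path. A real engine would do this on the GPU, not in a Python loop.

```python
from typing import Callable, Optional, Tuple

Color = Tuple[float, float, float]
Pixel = Tuple[int, int]

def resolve_reflection(
    pixel: Pixel,
    screen_space_hit: Callable[[Pixel], Optional[Color]],  # hypothetical cheap lookup
    trace_ray: Callable[[Pixel], Color],                   # hypothetical expensive ray
    stats: dict,
) -> Color:
    """Try the cheap screen-space lookup first, trace a ray only on a miss."""
    color = screen_space_hit(pixel)
    if color is not None:
        stats["screen_space"] = stats.get("screen_space", 0) + 1
        return color
    stats["traced"] = stats.get("traced", 0) + 1
    return trace_ray(pixel)

# Toy stand-ins: pretend the left half of a 4x4 image finds its
# reflection on screen and the right half needs a real ray.
def fake_screen_space(pixel: Pixel) -> Optional[Color]:
    x, _ = pixel
    return (0.2, 0.2, 0.2) if x < 2 else None

def fake_trace(pixel: Pixel) -> Color:
    return (0.9, 0.1, 0.1)

stats: dict = {}
image = [
    [resolve_reflection((x, y), fake_screen_space, fake_trace, stats) for x in range(4)]
    for y in range(4)
]
```

In this toy setup only half the pixels pay for a ray, which is the whole point: the fewer pixels the conventional method misses, the cheaper the frame.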

Dynamic lighting

Because calculating the influence of every light source at runtime is expensive, many games use light probes. Light probes essentially store the light arriving at a point in the map. The GPU then blends between nearby probes to approximate the light at any position in between.
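To make the blending step concrete, here is a small Python sketch. It assumes inverse-distance weighting, which is just one simple way to blend; real engines typically interpolate on a grid or tetrahedral mesh instead. The function name `blend_probes` and the probe layout are invented for this example.

```python
import math

def blend_probes(position, probes, eps=1e-6):
    """Blend probe colors with inverse-distance weights (an assumed scheme).

    `probes` is a list of (probe_position, rgb_color) pairs.
    """
    weights = []
    for probe_pos, _ in probes:
        d = math.dist(position, probe_pos)
        weights.append(1.0 / (d + eps))  # closer probes count more
    total = sum(weights)
    return tuple(
        sum(w * color[i] for w, (_, color) in zip(weights, probes)) / total
        for i in range(3)
    )

probes = [
    ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0)),   # probe that captured red light
    ((10.0, 0.0, 0.0), (0.0, 0.0, 1.0)),  # probe that captured blue light
]
midpoint = blend_probes((5.0, 0.0, 0.0), probes)  # equal mix of both probes
near_red = blend_probes((1.0, 0.0, 0.0), probes)  # dominated by the red probe
```

Note that nothing here knows about walls or ceilings. A point indoors will happily pick up light from a probe outside if that probe is the closest one.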

Thing is, light probes are often spaced far apart because it is not feasible to pack the entire map with them. Because of this, the resulting light is only a rough approximation. In The Witcher 3, for example, you can often see a tree in reflections even in indoor scenes.

With ray tracing the light probes can be calculated while the game is running. Instead of having a couple of them spread throughout the map the game can create and update them as needed. Thus there is always a high density of probes around the player, even if the environment changes.

Ambient Occlusion

Ever noticed how promotional screenshots for games often contain a strong light source? That’s partly because engines have trouble rendering indoor scenes, which are dominated by indirect light and ambient occlusion. Both of these effects are hard and expensive to get right with a rasterizer. A raytracer can estimate them directly by casting rays from each surface point into the surrounding scene.
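For the curious, this is roughly what ray-based ambient occlusion looks like, sketched in Python. It is a toy under stated assumptions: occluders are spheres, directions are sampled uniformly over the hemisphere (production renderers usually use cosine-weighted sampling), and all function names are invented for this example.

```python
import math
import random

def ray_hits_sphere(origin, direction, center, radius, max_dist):
    """Return True if a unit-length ray hits the sphere within max_dist."""
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return False
    t = -b - math.sqrt(disc)  # nearest intersection along the ray
    return 1e-4 < t < max_dist

def sample_hemisphere(normal, rng):
    """Pick a random unit direction on the hemisphere around `normal`."""
    while True:  # rejection-sample a point inside the unit ball
        v = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        length_sq = sum(x * x for x in v)
        if 1e-8 < length_sq <= 1.0:
            break
    length = math.sqrt(length_sq)
    v = [x / length for x in v]
    if sum(a * b for a, b in zip(v, normal)) < 0.0:
        v = [-x for x in v]  # flip into the hemisphere facing the normal
    return v

def ambient_occlusion(point, normal, spheres, samples=256, max_dist=5.0, seed=0):
    """Fraction of hemisphere rays that escape: 1.0 is fully open, 0.0 fully blocked."""
    rng = random.Random(seed)
    blocked = 0
    for _ in range(samples):
        direction = sample_hemisphere(normal, rng)
        if any(ray_hits_sphere(point, direction, c, r, max_dist) for c, r in spheres):
            blocked += 1
    return 1.0 - blocked / samples

point, normal = (0.0, 0.0, 0.0), (0.0, 1.0, 0.0)
open_sky = ambient_occlusion(point, normal, spheres=[])
under_ball = ambient_occlusion(point, normal, spheres=[((0.0, 2.0, 0.0), 1.5)])
```

The point under the ball comes out darker than the one under open sky, with no screen-space tricks involved. The cost is the number of rays per point, which is exactly the part dedicated hardware is supposed to speed up.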

Level of Detail transitions

Ever seen a distant object visibly pop into a more detailed version as you get closer?

Games have to switch to lower-resolution models for faraway objects to keep the framerate up. That’s because the cost of rasterization grows roughly linearly with the triangle count: rasterizing n triangles takes time proportional to n, plus some overhead.

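Raytracing scales roughly logarithmically with the number of triangles: with an acceleration structure such as a bounding volume hierarchy, finding what a ray hits among n triangles takes on the order of log(n) steps. This makes rendering more triangles cheap.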
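You can see where the logarithm comes from with a toy tree. The Python sketch below is a 1D stand-in for a bounding volume hierarchy, not a real one: the "triangles" are non-overlapping intervals and the "ray" is a single point, so it only illustrates the scaling. In a real 3D scene a ray can overlap several boxes, so log(n) is the typical case rather than a guarantee. All names here are invented.

```python
class Node:
    def __init__(self, lo, hi, left=None, right=None, prim=None):
        self.lo, self.hi = lo, hi          # bounds enclosing everything below
        self.left, self.right = left, right
        self.prim = prim                   # set only on leaves

def build(prims):
    """Build a balanced tree over (lo, hi) intervals that are sorted by lo."""
    if len(prims) == 1:
        lo, hi = prims[0]
        return Node(lo, hi, prim=prims[0])
    mid = len(prims) // 2
    left, right = build(prims[:mid]), build(prims[mid:])
    return Node(min(left.lo, right.lo), max(left.hi, right.hi), left, right)

def query(node, x, counter):
    """Return the interval containing x, counting every node visited."""
    counter[0] += 1
    if not (node.lo <= x < node.hi):
        return None                        # whole subtree skipped in one test
    if node.prim is not None:
        return node.prim
    hit = query(node.left, x, counter)
    return hit if hit is not None else query(node.right, x, counter)

visits = {}
for n in (1024, 65536):
    tree = build([(i, i + 1) for i in range(n)])
    counter = [0]
    found = query(tree, n - 0.5, counter)  # query the very last interval
    visits[n] = counter[0]
```

Going from 1,024 to 65,536 intervals multiplies the scene size by 64, but the number of nodes visited only grows by a couple per doubling. A rasterizer would have had to touch all of them.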

Moreover the LOD can be varied per ray. Instead of changing the entire model at once different pixels can switch as needed. This makes the transition less noticeable.
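A minimal sketch of per-ray LOD selection in Python, under assumptions I am making up for the example: each LOD level doubles the triangle edge length of the previous one, and a ray's footprint is approximated as hit distance times the angle one pixel covers. The function `lod_for_ray` and its parameters are hypothetical.

```python
import math

def lod_for_ray(hit_distance, pixel_angle, finest_edge, max_level):
    """Pick the coarsest LOD whose triangles are still no larger than the ray's footprint.

    Assumes level k has triangle edges of length finest_edge * 2**k.
    """
    footprint = hit_distance * pixel_angle  # approx. width one pixel covers at the hit
    if footprint <= finest_edge:
        return 0                            # close up: use the full-detail model
    level = int(math.log2(footprint / finest_edge))
    return min(level, max_level)

pixel_angle = 1.0 / 1024.0   # radians per pixel (illustrative value)
finest_edge = 1.0 / 64.0     # edge length of LOD 0 triangles (illustrative value)

near = lod_for_ray(8.0, pixel_angle, finest_edge, max_level=4)
mid = lod_for_ray(64.0, pixel_angle, finest_edge, max_level=4)
far = lod_for_ray(4096.0, pixel_angle, finest_edge, max_level=4)
```

Because the decision is made per ray, two neighbouring pixels on the same object can land on different levels, so there is no single frame where the whole model swaps.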

Most excitingly: The unknown

If there’s one thing computer graphics has taught me, it’s that programmers are creative and always find ways to use technology for things it was never intended to do.

For example transform feedback was meant to cache animated meshes. In reality it was instantly abused to perform general purpose compute, animate particle systems and add post processing filters even before compute shaders were a thing.

Some things, like signed distance fields, cannot be rasterized at all. Unreal Engine tried to support them but ultimately decided they are too slow on rasterizing hardware. If future GPUs add raymarching support, this would open a whole new world for computer graphics.
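In case raymarching is new to you, here is the basic loop (often called sphere tracing) sketched in Python. The scene is a single sphere I picked for illustration, and the step limit, distance limit and epsilon are arbitrary defaults, not values from any engine.

```python
import math

def sphere_sdf(p, center=(0.0, 0.0, 5.0), radius=1.0):
    """Signed distance from point p to a sphere: negative inside, positive outside."""
    return math.dist(p, center) - radius

def raymarch(origin, direction, sdf, max_steps=128, max_dist=100.0, eps=1e-4):
    """Sphere tracing: advance along the ray by the distance the SDF says is empty.

    `direction` must be unit length. Returns the hit distance, or None on a miss.
    """
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        dist = sdf(p)
        if dist < eps:
            return t          # close enough to the surface: report a hit
        t += dist             # safe step: nothing can be closer than `dist`
        if t > max_dist:
            break
    return None

hit = raymarch((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), sphere_sdf)   # ray aimed at the sphere
miss = raymarch((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), sphere_sdf)  # ray aimed past it
```

There are no triangles anywhere in this, which is why a rasterizer has nothing to work with. Every pixel runs its own little loop instead.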

Not all of these uses have been demonstrated in practice. The point is that raytracing gives game engines a new tool to work with and that is a good thing.

In my opinion raytracing is the biggest advancement in realtime computer graphics since physically based rendering. Let’s not kill this awesome technology because of one greedy vendor.