Welcome to the semiconductor manufacturing thread.

Here we will discuss foundries and manufacturing technology, and post news and info.

With the recent news of GloFo dropping 7nm, we have three foundries left battling it out in the nanometer race: TSMC, Intel and Samsung.

The state of the foundries:

Intel

Intel is currently producing the bulk of their chips on their 14nm node. The third iteration of this node, 14nm++, is considered to be the highest performing node in current high volume production.

They are also manufacturing a small volume on their first-gen 10nm node. These chips are, however, considered to have lower performance than those made on their 14nm++ node.

High volume production of their 10nm node is scheduled for Q4’19. According to some reports, this is expected to be the 10nm+ process.

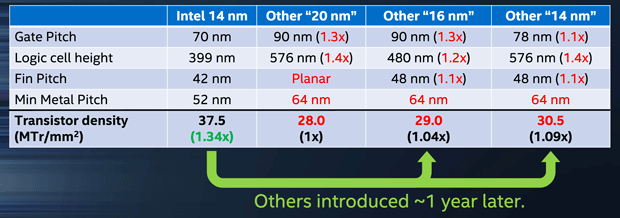

Here is a chart Intel themselves released comparing their 14nm node to those of competing foundries.

Further reading on Intel's 14nm process:

https://www.anandtech.com/show/8367/intels-14nm-technology-in-detail

TSMC

TSMC currently has two main high end nodes: 10nm and 16nm (including 12nm FFN).

Their 10nm node is mostly used for smaller chips like smartphone SoCs. It is believed to be similar to or denser than Intel's 14nm, but not suited for high volume production of larger chips.

Their 16nm process is what is used for producing high performance GPUs and CPUs. It has been reported that this process is similar to their previous 20nm node, but with FinFETs added.

TSMC is expected to be the first foundry to hit high volume production of a 7nm node. This would end Intel's long reign as the leading semiconductor foundry, and it is speculated that this node is denser than Intel's 10nm node.

Further reading on TSMC roadmaps:

https://www.anandtech.com/show/11337/samsung-and-tsmc-roadmaps-12-nm-8-nm-and-6-nm-added

Samsung

Samsung Semiconductor currently has two high end nodes in production: 10nm and 14nm.

As with TSMC, their 10nm node is mostly used for smaller chips, and it is very similar to TSMC's 10nm in density and performance.

Their 14nm node is what is used for higher performing chips like CPUs and GPUs. Like TSMC's 16nm, it is said to be based on the previous 20nm node but with FinFETs added.

Next on the roadmap for Samsung is their 8nm node, which is an improvement on their 10nm technology.

Their 7nm node is set for 2H’19.

Further reading on Samsung nodes:

https://www.anandtech.com/show/11337/samsung-and-tsmc-roadmaps-12-nm-8-nm-and-6-nm-added/3