Many comments, a lot to unpack…

Single superbuild

Pros:

- potential to learn about virtualization, resource splitting and a few skills related to this type of job;

- in theory, less waste, both in power and other idle resources, as you’d have one system in use at a steady average load, rather than many systems sitting idle;

- scaling up means that potentially all tasks will finish faster when they demand resources;

- everything is at your fingertips, no need to connect stuff to other boxes for setup or troubleshooting;

Cons:

- more expensive upfront and potentially devastating for cash flow;

- if you go with old enterprise hardware, it’s going to be a power hog even at idle;

- if something breaks, everything breaks;

Multiple PCs

Pros:

- if something breaks, only that thing breaks;

- potential for sipping power, both at idle and under load;

- big advantage when it comes to actually powering off those things that you don’t need and starting them on demand;

- good for cash flow: buy one now, another one later; you don’t need a big pile of savings on hand;
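For the “starting them on demand” part, Wake-on-LAN is one common option, assuming the NIC and BIOS/UEFI support it and it’s enabled. Below is a minimal Python sketch of sending the standard magic packet (6 bytes of 0xFF followed by the target MAC repeated 16 times) as a UDP broadcast. The function names, the MAC address, the broadcast address and port 9 are placeholder assumptions, not anything from a specific tool:

```python
import socket


def build_magic_packet(mac: str) -> bytes:
    """Build a Wake-on-LAN magic packet: 6 bytes of 0xFF, then the MAC 16 times."""
    # Accept "aa:bb:cc:dd:ee:ff" or "aa-bb-cc-dd-ee-ff"
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"invalid MAC address: {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)  # also raises ValueError on non-hex input
    return b"\xff" * 6 + mac_bytes * 16


def wake(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Send the magic packet as a UDP broadcast on the local network."""
    packet = build_magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        # Broadcasting requires SO_BROADCAST to be set on the socket
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast, port))
```

Something like `wake("00:11:22:33:44:55")` (hypothetical MAC), run from an always-on box such as the router, would wake a powered-off machine on the same LAN segment. Plain broadcasts generally don’t cross subnets without extra configuration.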

Cons:

- tasks may take longer to complete;

- since resources aren’t pooled, one PC could be working hard while the others idle;

- scaling out is not linear and your workloads need to support it;

- a bit more of a hassle to troubleshoot, but not a deal breaker;
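One standard way to see why scaling out isn’t linear is Amdahl’s law. If a fraction p of a workload can run in parallel across N machines and the rest is serial, the best-case speedup is:

$$
S(N) = \frac{1}{(1 - p) + \frac{p}{N}}
$$

With a made-up p = 0.9 and N = 3 boxes: S = 1 / (0.1 + 0.3) = 1 / 0.4 = 2.5, not 3. This also ignores the network overhead between boxes, so real-world numbers tend to be worse.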

I always found it cheaper to go with multiple low-end boxes than one big box, especially given the advantage of buying one now and another one later. I had two ASRock J3455M PCs and a Pentium G4560 PC, all for probably less than a single 6-core Ryzen box, or maybe around the same price, but I couldn’t afford a single beefy build at the time. And my 3 PCs together were using less power than the one Xeon X3450 I got for free. I used to keep all of them powered on all the time, but I started powering off the chungus because its idle power draw was ridiculous. It only had a 500W PSU, yet my power bill doubled at the time, and this was around when the coof started.
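The idle-power argument is easy to put into numbers. Below is a small Python sketch that converts a 24/7 wattage into yearly energy and cost. The wattages and the 0.20 per kWh price are hypothetical placeholders, not measurements of the machines above. Plug in what a wall meter reads and your own tariff:

```python
def yearly_kwh(watts: float, hours_per_day: float = 24.0) -> float:
    """Energy (kWh) used in a year by a load drawing `watts` for `hours_per_day`."""
    return watts * hours_per_day * 365 / 1000


def compare(boxes: dict[str, float], price_per_kwh: float) -> None:
    """Print yearly energy and cost for each box, assuming it runs 24/7 at that wattage."""
    for name, watts in boxes.items():
        kwh = yearly_kwh(watts)
        print(f"{name}: {kwh:.1f} kWh/year, {kwh * price_per_kwh:.2f}/year")


# Hypothetical idle figures, NOT measurements of any specific hardware.
compare({"one big old server": 100.0, "three small PCs (15 W each)": 45.0}, price_per_kwh=0.20)
```

With these placeholder numbers, it prints 876.0 kWh/year (175.20) for the single box versus 394.2 kWh/year (78.84) for the three small ones. Every watt of 24/7 idle draw works out to about 8.76 kWh per year.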

I sometimes go for a middle ground, but one thing I despise is superbuilds that contain the router. The forbidden router that Wendell does (the router virtualized on the same box as everything else) is a horrifying thought for me. Knowing that if my system broke I’d lose internet access, and with it the ability to research how to fix the problem, is something I cannot live with.

I usually recommend people do a router build with small boxes like a MintBox 2 or a Protectli, or just keep a standalone router around, then do one build for everything else.

I used to have a VFIO build on the aforementioned Pentium and it worked fine, but Windows was choking a bit and I had other issues with it, mostly because Manjaro was such a thorn in my side.

I am biased towards multiple builds if you have the space for them, but even if you don’t, a rack or stacking them somehow is still better than a chungus build. I’m not against superbuilds, I’m just against using one for everything.

One example I give people is that their home router isn’t their PC. Imagine having to keep your PC on just so your phone can access the internet. It’s wasteful! And I am arguing the same for a NAS. I believe people should have a NAS in their homes. Nobody should be storing their most important files on their PCs. Set up a small NAS, store your files there, then encrypt them and send them to a cloud or somewhere else. Bonus points: you can access your files from any device, and migrating to a new PC is easier.

Now here comes the even worse part of my ramble. I believe one should have a PC build for their own use, even a VFIO build good enough for just two OSes, and a second build that stays on 24/7. The second one should be specced to your needs. It can serve as a NAS, a small hypervisor for services like Jellyfin or whatnot, and even a forbidden router, if you have a backup all-in-one router to connect to the internet in case that one goes poof.

I somehow managed to derail the thread from “one superbuild vs. many small builds” to “how much power does a build need and how many builds should there be?” It’s related, but a bit off-topic.

My current infrastructure consists of:

- an RPi 3 router (WiFi WAN);

- an RPi 4 8GB, my daily-driver PC;

- a powered-off RPi 2 that I may use as a temporary TFTP server for netboot;

- a powered-off Pine64 RockPro64 that will be a router once I figure out how to run OpenBSD on it, and if that fails, FreeBSD;

- an Odroid N2+, currently used as a makeshift NAS, that will become an LXD container host;

- an Odroid HC4 that I haven’t figured out yet; it will be my NAS;

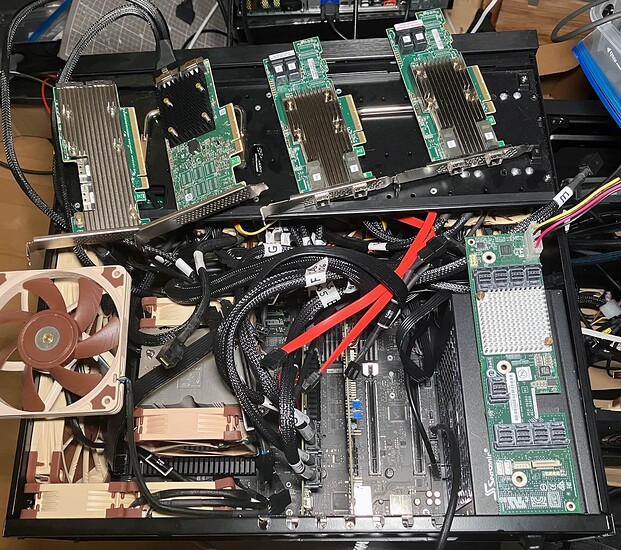

- an unfinished Threadripper 1950X build (I still need to order ECC RAM). This will be a VFIO build for Windows and Linux gaming (in case stuff doesn’t work on Linux, I will power on Windows) and a testing ground for other stuff that I don’t plan to run in LXD, like, say, a virtual Ceph test cluster for learning, a virtual cloud infrastructure (not for sale or rent) and an automation zone for spinning up different VMs, testing stuff, updating, customizing and purging them, and so on;

The plan for the SBC infrastructure is to make it redundant by adding another switch and doubling the NAS, router and LXD host. I plan on automating some tasks, like updating, but I don’t know yet what alerting system I’m going to use. I’ve used Centreon in the past, but I don’t like its hacky Nagios roots; if it came to that, I’d rather use Nagios itself. I’m torn between Zabbix and Grafana + Prometheus + Alertmanager. I need something that can serve as an automatic response: updates needed → do an update; restart needed → send me a notification.
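Whichever tool wins, the “automatic response” part boils down to a small policy table, and it can help to pin that down before picking the tool. Below is a tool-agnostic Python sketch of that policy. `HostState`, `Action`, `decide` and the host names are hypothetical. The reboot check assumes the `/var/run/reboot-required` marker file used on Ubuntu and some Debian setups, which may not exist on other distros. Wiring the actions into Zabbix actions or an Alertmanager webhook receiver is not shown:

```python
from dataclasses import dataclass
from enum import Enum
from pathlib import Path


class Action(Enum):
    NOTHING = "nothing"
    RUN_UPDATE = "run_update"          # safe to do automatically
    NOTIFY_RESTART = "notify_restart"  # a human decides when to reboot


@dataclass
class HostState:
    name: str
    updates_pending: int
    reboot_required: bool


def reboot_required(marker: Path = Path("/var/run/reboot-required")) -> bool:
    """Assumed Ubuntu/Debian-style convention: the marker file exists when a reboot is needed."""
    return marker.exists()


def decide(state: HostState) -> list[Action]:
    """Map observed host state to responses: updates run on their own, restarts only notify."""
    actions: list[Action] = []
    if state.updates_pending > 0:
        actions.append(Action.RUN_UPDATE)
    if state.reboot_required:
        actions.append(Action.NOTIFY_RESTART)
    return actions or [Action.NOTHING]
```

For example, `decide(HostState("nas-1", 3, False))` returns `[Action.RUN_UPDATE]`. Keeping the policy as plain data and a pure function makes it easy to test, whatever ends up collecting the state.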

The reason I went with SBCs is that I want to eventually power them with solar panels, have them run 24/7, and have redundancy. I could have gone with a 3-node cluster of Intel NUCs, which would probably have been easier, but likely at the cost of more power use, and it would have been more expensive. It would open the door to full x86 compatibility, but I probably don’t need that, as everything I run is open source, so I can just compile it myself.
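For the solar plan, a back-of-the-envelope sizing helps tell whether a given panel and battery can carry 24/7 loads. Below is a rough Python sketch. Every number in it is an assumption: the wattages are made-up placeholders, not measurements of these boards, and the 2 days of autonomy, 80% usable battery capacity, 4 peak sun hours and 75% system efficiency are illustrative guesses. Substitute your own measurements and local solar data:

```python
HOURS_PER_DAY = 24


def daily_wh(loads_w: dict[str, float]) -> float:
    """Energy per day (Wh) for loads that run 24/7."""
    return sum(loads_w.values()) * HOURS_PER_DAY


def battery_wh(daily: float, autonomy_days: float, usable_fraction: float) -> float:
    """Rated battery capacity needed to ride through `autonomy_days` without sun,
    when only `usable_fraction` of the rated capacity should be drawn."""
    return daily * autonomy_days / usable_fraction


def panel_w(daily: float, peak_sun_hours: float, system_efficiency: float) -> float:
    """Panel rating needed to replace one day's energy within the given peak sun hours."""
    return daily / (peak_sun_hours * system_efficiency)


# Placeholder wattages, NOT measured values for any specific board.
loads = {"router": 3.0, "desktop": 6.0, "lxd-host": 5.0, "nas": 10.0}
daily = daily_wh(loads)
print(f"daily energy: {daily:.0f} Wh")
print(f"battery for 2 days without sun: {battery_wh(daily, 2, 0.8):.0f} Wh")
print(f"panel for 4 peak sun hours at 75% efficiency: {panel_w(daily, 4, 0.75):.0f} W")
```

With these placeholders, it prints 576 Wh per day, a 1440 Wh battery and a 192 W panel. Doubling the boxes for redundancy roughly doubles all three, which is where low-power SBCs pay off.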