Solution:

In case you are running into the same problems as me, here is what helped me out in the end.

The goal: Configure a Linux KVM host to allow NFS traffic over an isolated, virtual network.

1. Creating the network


- Start Virt-Manager and open the network settings
- Create a new virtual network:
  - Name: `isonet0`
  - Autostart on boot
  - Network 192.168.100.0/24
  - DHCP range: 192.168.100.2 - 192.168.100.254
  - IPv6 disabled
- Check `ip link`, it should look like this:
  - Ignore `virbr0-nic`

    3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN mode DEFAULT group default qlen 1000
        link/ether 52:54:00:b0:52:0e brd ff:ff:ff:ff:ff:ff
    4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc fq_codel master virbr0 state DOWN mode DEFAULT group default qlen 1000
        link/ether 52:54:00:b0:52:0e brd ff:ff:ff:ff:ff:ff

2. Firewall settings


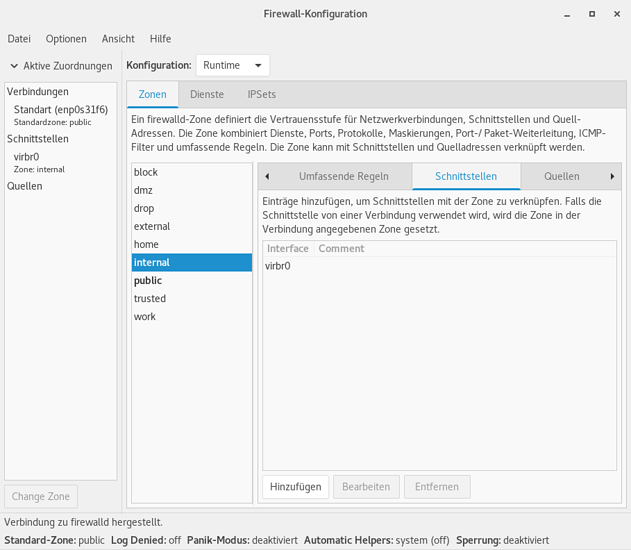

I am using firewalld for configuration. Please refer to your firewall's documentation if you are using a different one.

- Open the GUI of firewalld (e.g. type Firewall in GNOME)
- Add a new interface and give it the name of the bridge that belongs to the network we previously created
  - In this example: `virbr0`
- Apply an existing zone to it or create a new one
  - Make sure it's different from the zone of your physical network (public)

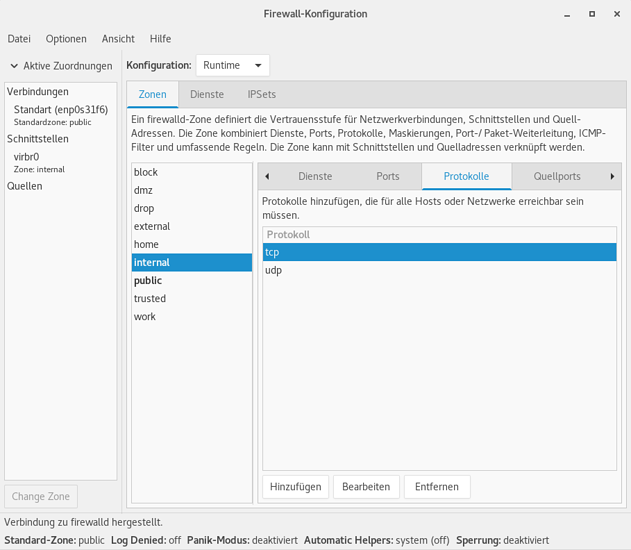

- In that zone, allow all TCP and UDP traffic

- Save your settings permanently so they don't reset on boot
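
For anyone without the GUI, the same steps can probably be done with `firewall-cmd`. This is only a sketch of how I would expect it to look, not something taken from the setup above: the zone name `isolated` is a made-up example, and the script only prints the commands unless you run it with `DRY_RUN=0`.

```shell
#!/bin/sh
# Sketch: firewalld CLI equivalent of the GUI steps above.
# ZONE is a hypothetical zone name; IFACE is the bridge from this example.
ZONE="${ZONE:-isolated}"
IFACE="${IFACE:-virbr0}"

# With DRY_RUN=1 (the default here) commands are only printed, not executed.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        sudo "$@"
    fi
}

setup_zone() {
    # Create a dedicated zone and bind the bridge interface to it.
    run firewall-cmd --permanent --new-zone="$ZONE"
    run firewall-cmd --permanent --zone="$ZONE" --add-interface="$IFACE"
    # Allow all TCP and UDP ports inside that zone only.
    run firewall-cmd --permanent --zone="$ZONE" --add-port=1-65535/tcp
    run firewall-cmd --permanent --zone="$ZONE" --add-port=1-65535/udp
    # Load the permanent configuration into the running firewall.
    run firewall-cmd --reload
}

setup_zone
```

Review the printed commands first; `--permanent` is what keeps the settings across reboots.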

3. Enable Jumbo Frames (Optional)


This reduces per-packet overhead and can improve NFS throughput. Only activate this if you know that all attached guests will operate with an MTU of 9000! This is the case as long as you are using the VirtIO driver and have no physical device bridged to this network (or if you know for certain that your NIC supports jumbo frames).
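
To see which interfaces attached to the bridge do not have the expected MTU (before or after the change), something like the following helper might be useful. It is a rough sketch: `mtu_mismatches` is a name I made up, and it assumes the one-line output format of `ip -o link`.

```shell
#!/bin/sh
# Print "<interface> <mtu>" for every interface in `ip -o link` output
# (read from stdin) whose MTU differs from the expected value in $1.
mtu_mismatches() {
    awk -v want="$1" '{
        name = $2; sub(/:$/, "", name)      # second field looks like "virbr0:"
        for (i = 3; i < NF; i++)
            if ($i == "mtu" && $(i + 1) != want)
                print name, $(i + 1)
    }'
}

# Usage on the KVM host (bridge name taken from this example):
#   ip -o link show master virbr0 | mtu_mismatches 9000
```

No output means every interface enslaved to the bridge already reports the expected MTU.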

- In Virt-Manager, stop `isonet0`
- In a terminal, type `sudo EDITOR=nano virsh net-edit isonet0`
  - Add `<mtu size='9000'/>`
  - Save and close
- Restart `isonet0`
- (Windows guest only!) Open the network settings
  - Open the Device settings of the NIC attached to the isolated network
  - Adjust the MTU value to `9000`
  - Save and close all windows
- To verify, open `cmd.exe`
  - Run `ping 192.168.100.1 -f -l 8972` (9000 minus 28 bytes for the IPv4 and ICMP headers)
  - If ping fails with packet loss, try restarting your Windows guest
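
The payload size for the ping test follows from the MTU, so it can be worked out for other values too. A small sketch, assuming plain IPv4 without header options; `icmp_payload` is just an illustrative name. The commented lines show what I believe is the `virsh` equivalent of stopping and restarting the network in Virt-Manager.

```shell
#!/bin/sh
# Largest ICMP payload that fits into one unfragmented IPv4 packet:
# MTU minus 20 bytes IPv4 header (no options) minus 8 bytes ICMP header.
icmp_payload() {
    echo $(( $1 - 20 - 8 ))
}

icmp_payload 9000   # prints 8972
icmp_payload 1500   # prints 1472

# CLI alternative to the stop/restart steps in Virt-Manager:
#   sudo virsh net-destroy isonet0   # stops the network, does not delete it
#   sudo EDITOR=nano virsh net-edit isonet0
#   sudo virsh net-start isonet0
```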

Example

    <network>
      <name>isonet0</name>
      <uuid>0ce6e7bf-f8a5-461c-aacb-beaa9bbbe80b</uuid>
      <bridge name='virbr0' stp='on' delay='0'/>
      <mtu size='9000'/>
      <mac address='52:54:00:b0:52:0e'/>
      <domain name='isonet0'/>
      <ip address='192.168.100.1' netmask='255.255.255.0'>
        <dhcp>
          <range start='192.168.100.100' end='192.168.100.254'/>
        </dhcp>
      </ip>
    </network>

- Terminal, `ip link`:

    3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 9000 qdisc noqueue state DOWN mode DEFAULT group default qlen 1000
        link/ether 52:54:00:b0:52:0e brd ff:ff:ff:ff:ff:ff
    4: virbr0-nic: <BROADCAST,MULTICAST> mtu 9000 qdisc fq_codel master virbr0 state DOWN mode DEFAULT group default qlen 1000
        link/ether 52:54:00:b0:52:0e brd ff:ff:ff:ff:ff:ff

Original post:

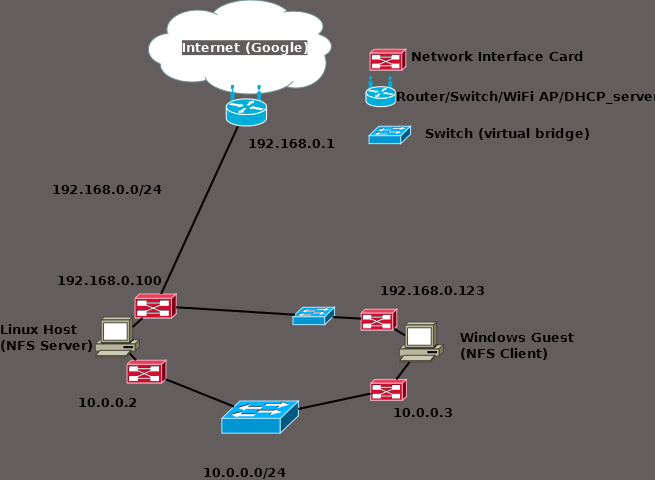

I am stuck getting NFS to work for KVM guests. They are provided with two NICs: one is a macvtap directly attached to my Intel Ethernet port, the other is a virtual bridge for isolated communication, over which I want to share files via NFS.

My networking expertise in Linux is rather fresh and I can't get a working connection between my host OS and the Windows 10 guest I would like to share data with.

The virtual bridge is on the network 10.0.0.0/24, where I can ping 10.0.0.1 (gateway). However, I cannot get a response when pinging 10.0.0.2, which is my Windows guest. There is no DHCP running; the guest recognizes the network and indicates "no internet access" (obviously).

NFS is working from what I can tell: the output of `showmount -e` shows `/home *`, which seems correct. Connecting on the host using nfs://localhost/home works fine.

So, the isolated network virbr0 I created with Virt-Manager shows up when typing `ip link` in a terminal. I can ping the gateway but none of the guests connected to it. Am I missing anything in my configuration, maybe firewall settings? I need your help.

The Libvirt documentation states that an isolated network allows communication between the guests and the host.
