I forgot how much I hate this thing.

Success!

Back in NY. I’ll post a proper ending later after I’ve slept. For now, please enjoy this ball mouse.

Was that on the DC’s crash cart?

Yessir

Ah, that sounds like the life. One time I spent a month away with work; I took an HDMI cable and an Xbox controller and played Fallout 4 on the TV in the hotel/apartment during some of the downtime. Hard life.

Are you some kind of traveling server repair person?

I do contract IT for some small businesses as a side job. This equipment is all mine. It’s been sort of my lab but after this refresh I’m more confident in providing production services with it.

It’s an hour’s drive from where I live. The main obstacle is that I don’t own a car, so I have to rent one to go work on it. I managed to do everything remotely for 1.5 years, so this trip was basically a backlog of things I had to do in person.

Well, I managed to crash the Edgerouter Pro for the first time in its 4 years of life.

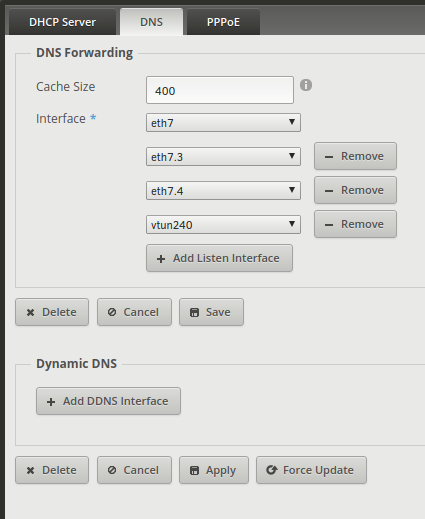

In this window, I added the vtun240 interface to DNS Forwarding and accidentally clicked apply under Dynamic DNS. I instantly realized my mistake and clicked Save instead (while it was still processing the apply) and I immediately lost all connectivity.

The tech at the DC graciously rebooted it for me and it came back up 100% ok, even with the vtun240 DNS config saved.

It’s worth noting that reboot-on-panic is set to true but was not triggered.

Let this be a reminder to all of us to always schedule a future reboot when remotely configuring network equipment.
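One way to act on that reminder on a generic Linux box is a dead-man's-switch pair of helpers around shutdown. This is only a minimal sketch, not EdgeOS-specific: the function names and the DRY_RUN guard are hypothetical, and it assumes the risky change is left unsaved so that a reboot discards it. On EdgeOS/Vyatta, I believe commit-confirm in configure mode serves a similar purpose.

```shell
#!/bin/sh
# Dead-man's-switch sketch: schedule a reboot before a risky remote change,
# then cancel it once you have confirmed you still have access.
# DRY_RUN=1 (the default here) only prints the commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

# schedule_safety_reboot MINUTES: reboot in MINUTES (default 10) unless cancelled.
schedule_safety_reboot() {
    run sudo shutdown -r "+${1:-10}"
}

# cancel_safety_reboot: call this after the change is verified and saved.
cancel_safety_reboot() {
    run sudo shutdown -c
}
```

Typical use would be schedule_safety_reboot 10, make the change, confirm the session still works, save, then cancel_safety_reboot.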

Cleaning up the media tv situation.

IR sensor and keyboard usb dongle hidden behind a picture frame.

I’m trying out zfs dedupe on a dataset for OS ISOs. First time ever attempting dedupe. I’m guessing that there will be a lot of identical blocks between different Linux ISOs (curious to see how many).

This is relatively low risk since I can always destroy the dataset and go redownload the ISOs if dedupe crushes the system.

Spent a good portion of yesterday playing with Samba AD configurations to use with macOS, Windows clients. The setup is still an experiment, but it’s working well so far. I’m going to jot down some things here that took me a while to figure out. If it all goes well, maybe I’ll put together a step-by-step later.

I decided to use Zentyal because afaik, it is the only Linux/Samba based appliance geared primarily at being a Samba4 AD. The commercial version charges per user which is a nonstarter for me, so I am running 2 instances of the dev version and snapshotting before any upgrades. Time will tell if that will be tenable or not. Could go either way. I am not using the exchange mail server or anything else besides AD rn. At most, I will try out RADIUS if things go well.

Anyway, back when FreeNAS Corral was a thing, FreeNAS was going to pivot its AD DC solution to a Zentyal VM, so I figured I’d give that a shot for the initial config and then add a secondary DC in a VM on another machine so I could reboot the NAS. As you can imagine, having a machine bound to an AD DC that was in a VM running on said machine would create a chicken/egg problem at boot (I tried it, and it will eventually get through errors and boot, but not ideal).

Anyway, onto the minutiae…

I wanted to use a keytab. It had been a while since I had messed with the whole Kerberos/Samba/keytab situation, but what I ended up doing is:

1. Created a new domain admin user for the NAS to use for binding.
2. On the DC, ran: samba-tool domain exportkeytab nasadmin.keytab --principal=nasadmin
3. Uploaded the keytab to the NAS via the GUI.
4. Set the AD config as follows in the FreeNAS GUI (leaving out obvious fields):
   - recovery attempts: 0
   - check everything except trusted domains
   - pick anything from Kerberos principal (comes from the keytab)
   - idmap backend: ad
   - nss info: rfc2307
   - sasl: seal
   - Under Edit Idmap:
     - range low: 2500 (this is the part that threw me off for quite a while)
     - rfc2307

Took hours before I realized that the DC was giving domain groups relatively low IDs starting at 2500, and if the idmap range didn’t include those IDs, using ad for the idmap backend wouldn’t work (wbinfo worked but getent/id did not). From my past experience, I thought Samba just ignored the idmap range if it was using rfc2307, but this doesn’t appear to be the case.
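The range pitfall comes down to a simple bounds check: any uid/gid the DC hands out has to fall inside the configured idmap range, or it won't resolve. A minimal sketch of that check follows; the function name and the upper bound in the usage note are hypothetical, and only the 2500 starting ID comes from my setup.

```shell
#!/bin/sh
# in_idmap_range ID LOW HIGH
# Succeeds (exit 0) when ID lies inside the inclusive range LOW..HIGH,
# which is the condition the idmap ad backend needs for an ID to resolve.
in_idmap_range() {
    id=$1
    low=$2
    high=$3
    [ "$id" -ge "$low" ] && [ "$id" -le "$high" ]
}
```

For example, in_idmap_range 2500 10000 90000000 fails, which matches what I saw with a range that started above the IDs the DC was assigning, while in_idmap_range 2500 2500 90000000 succeeds.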

So that works and I have persistent uids and gids which was the goal. I can apply permissions with the domain accounts and log into smb shares. All the stuff you’d expect.

I set up a secondary Zentyal DC on an ESXi host and joined it. As always, it is very picky about dns and domain names, but if all of that is in place it will join and changes made on either DC propagate everywhere pretty quickly.

Couple things to note. I added some SPNs and added additional keytabs to FreeNAS, but I’m not sure if anything is happening with them. klist was only showing the binding tickets, and after rebooting, klist shows nothing, yet I am still connected to the domain, so that’s confusing (even tested adding a user and everything). I wonder if it will survive a ticket renewal in that state.

If that is all hunky dory in a week or so, I’ll try to kerberize smb/afp/nfs, and maybe add a certificate (can you use letsencrypt for domain controller certificates?).

I hope it all works out. I’ve maintained Samba DCs that I compiled and configured manually and I’m really not a fan of doing that. Zentyal also lets me offload some basic user management admin stuff onto someone else with virtually no learning curve.

Looking at Zentyal closer, I found the quotaon service was failing. It appears that it would rather not use systemd at all, so:

sudo systemctl stop quotaon

sudo systemctl disable quotaon

sudo quotaoff /

sudo quotacheck -cogm /

sudo quotaon /

Also, sudo zs restart dns says it failed, but I can’t find any evidence of why. All the domain stuff is resolving and the forwards are working and I’m not seeing any related error messages in the logs, so maybe false positive there. I did make some manual changes to the config file in /etc/zentyal/ but it was all in spec.

Also discovered the all-servers option in dnsmasq which allowed me to define the ad domain there without creating conflicts with the dc dns. Really hate how fragile ad dns is. I would really rather forward to it instead of the other way around, but joins fail that way for reasons I’m not entirely sure of.

Quick update.

Nope.

I also discovered through this that on Ubiquiti Edgerouter, if you have expand-hosts set in dnsmasq and leave the domain field blank in a dhcp server instance, it will assign clients the router’s domain as the search domain. It refused to provide no search domain.

In this case, I just send static addresses for the Zentyal DCs (don’t forget to set the default gateway, which is on a separate config screen), but it was an interesting quirk in EdgeOS/Vyatta.

Don’t check unix extensions. From what I understood, in AD unix extensions implied rfc2307, but for whatever reason, in this case, it implies sssd… Idk tbh, but when it’s checked, sssd tries to init during boot and fails, and everything works fine in idmap ad without it, so keep it off I guess.

I would actually prefer to use sssd but the documentation is scarce and from quickly looking through the FreeNAS sssd tickets, I can’t really figure out if it’s something they’re actively trying to support or not…

Anyway, Zentyal automatically fills in the uid/gids, eliminating the admin overhead usually associated with rfc2307, and ad backend is working great, so why mess with success?

Another compelling reason for not pursuing sssd is that using a keytab for binding has mysteriously broken for me. I get a generic gssapi error now even though it worked before… when keytabs did work before, nfs was broken and now it isn’t. Very mysterious. I did try regenerating the keytab to no avail.

Had a few large transfers going on the FreeNAS box last night and managed to crash it. According to the litany of netdata emails I woke up to, it thinks it ran out of space… but it didn’t actually run out of space. If anyone understands what’s going on here, I’d love to know. I rebooted it via IPMI and restarted the transfers.

I was restructuring the dataset hierarchy after experimenting a bit with dedupe. I was simply copying folders from old datasets/snapshots to new datasets in some tmux sessions.

As you can see. No shortage on space after reboot:

If anyone is wondering if it’s possible to eke out any additional compression with gzip on media files, the answer is no. They don’t call it incompressible for nothing.
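If you want to see the effect for yourself, here is a small sketch that reports gzip output size as a percentage of the input. The helper name is made up, and the random bytes are only a stand-in for already-compressed media, not my actual files.

```shell
#!/bin/sh
# compressed_pct FILE: print the gzip -9 output size as a percentage of
# the original file size (100 means no savings at all).
compressed_pct() {
    raw=$(( $(wc -c < "$1") ))
    gz=$(( $(gzip -9 -c "$1" | wc -c) ))
    echo $(( gz * 100 / raw ))
}

# Stand-in for incompressible media: 1 MiB of random bytes (hypothetical sample).
sample=$(mktemp)
head -c 1048576 /dev/urandom > "$sample"
compressed_pct "$sample"   # expect roughly 100, i.e. nothing gained
rm -f "$sample"
```

Point compressed_pct at a real video or audio file to compare; highly compressible input such as a file of zeros comes out near 0 instead.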

Something is fishy…

I am running the previously mentioned cp -av from a folder on one dataset into another and it is killing this system. If I run it with my one bhyve VM turned on, everything hangs. When I shut down the VM, it doesn’t hang, but netdata is in and out (the process is paused every few seconds for a few seconds), and the system appears to be under a lot of load (see below, 2x Xeon X5670s, so 12/24 at 3.06/3.46 GHz).

Memory is full, but of course that’s all ARC. Nothing is swapping. Checked dedupe:

![]()

Check my math, but I figure the dedupe table at about 5GB. System has 192GB of RAM.
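For anyone who wants to check the math the same way: a commonly cited rule of thumb is roughly 320 bytes of RAM per unique block in the dedup table. The sketch below just multiplies that out. The 320-byte figure, the function names, and the example entry count are assumptions for illustration; zpool status -D reports the real table.

```shell
#!/bin/sh
# Rule-of-thumb estimate only: assumed in-core bytes per dedup table entry.
DDT_BYTES_PER_ENTRY=320

# ddt_ram_bytes ENTRIES: estimated RAM for the dedup table, in bytes.
ddt_ram_bytes() {
    echo $(( $1 * DDT_BYTES_PER_ENTRY ))
}

# ddt_ram_mib ENTRIES: same estimate in MiB (integer division, rounds down).
ddt_ram_mib() {
    echo $(( $1 * DDT_BYTES_PER_ENTRY / 1048576 ))
}

# Hypothetical example: 16 million unique blocks.
ddt_ram_mib 16000000   # prints 4882 (about 4.8 GiB)
```

By that estimate, a table of about 5GB corresponds to something on the order of 16 million unique blocks, which is tiny next to 192GB of RAM.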

What the hell?

The only slightly weird thing is that I’m copying from a case sensitive datastore to a case insensitive one… dedupe is not on for either dataset. Compression on both is lz4.

Setting up a Fat Twin tonight. Stay tuned.

Spoke too soon. It didn’t show. Maybe tomorrow.

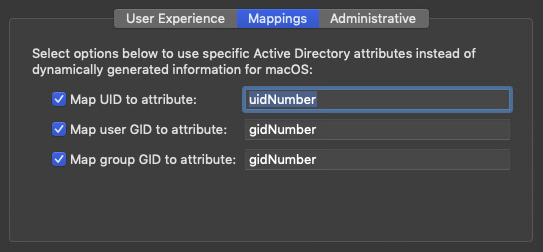

If anyone ever wondered what config to use to bind a macOS client to a Zentyal Samba AD using rfc2307, this is the mapping:

I thought fan stacking was bullshit, but I guess not? I know static pressure can be increased with counter-rotating fans, but these all look identical… I’ll find out tonight. Going to try to replace this fan array with NF-A14 industrialPPCs. Afaik, 45drives uses some 140mm Noctuas for intake, which gives me hope.

The fans in these boxes are meant to cool high RPM enterprise drives under load 24/7, so there’s definitely room to tone down the cooling. I’m also open to not filling all the bays. The box was just very cheap.

It’s not going to be close to anyone, but it is in a ventilated rack where people walk. My threshold is about 70 dB.

Worst case, it’s intended for an archive and daily backup, so it could be on a power schedule where it’s only on after hours. Decent argument for doing that anyway.

If I disable cores on the CPU, will I lose DIMM slots? Not sure how that works…

It’s somewhat borderline. 2x E5-2630Ls got up to non-critical (but high) temps after being under heavy but not synthetic load for about 20 minutes.

I have now turned off turbo, hyperthreading, enabled energy efficient power management and would like to disable some cores. The 6 8TB drives in the front never broke 41 degrees.

Disabling 2 cores (each) made a noticeable difference in temps. Going to put it in the rack now and see if that changes anything. It’s pretty cool in here, so want to take that into account.

I actually have some E5-2630L v2s that were intended for this system. Anyone have any idea if those would run hotter or cooler? With the faster base clock, I wouldn’t mind running that at 2 cores each (and maybe turn hyperthreading back on).