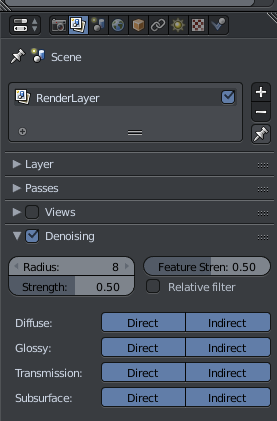

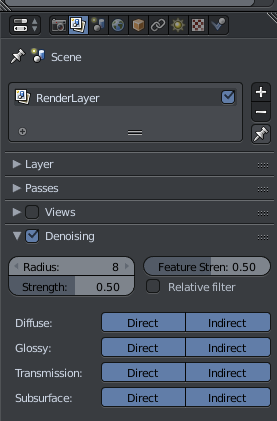

Turn on denoising, it might help. It might also make the render blurry and washed out; play with the radius if that happens.

denoising doesn’t play well with emitters. Tends to make the entire light source look super aliased.

Once you get used to it, it’s actually pretty efficient. It’s just not something most users are used to. I find Blender’s UX to be actually good. Well I do use vim, so…

you might want to flatten the shading some on the rest of the scene to match the background, just my .02 dollars

i think it’s great for modelling and procedural animation

not for NLE

Going to be adding a sky and fog in the back in post, so it isn’t going to be nearly as flat in the background as it is now.

fair enough

keep us posted

maybe, I didn’t do that much editing on it, so yeah. Speaking more for modelling.

even ignoring the lack of OFX support on blender and kdenlive, there’s no way I could perform my editing work as fast or as consistently on these options. blender because it isn’t actually an NLE, kdenlive because it can’t handle 1080p or 4k log 4:4:4 lossless because MLT is very badly optimized. (that and the interface breaks if you interact with it wrong)

you keep saying things I don’t know. I think it’s safe to assume I know pretty much nothing about video editing and tech that goes into that. Slow down, pal.

MLT is the framework most modern OSS video editors use (shotcut, kdenlive, etc)

it can’t do gpu acceleration for plugins (or if it can, no one’s bothered to actually implement it in their editor), the realtime processing seems to be single threaded, and whatever db structure it uses for the timeline is incredibly fragile.

the only upshot of kdenlive is that it just renders by piping frames to ffmpeg, so your storage and single thread performance are the only bottlenecks (for rendering) if you configure it right.
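For anyone who hasn't seen the "pipe frames to ffmpeg" pattern before, here's a rough sketch of the general idea in Python. To be clear, this is not Kdenlive's or MLT's actual code, just an illustration: `build_ffmpeg_pipe_cmd` and `encode_frames` are made-up names, and the frame size, rate, and codec are placeholder assumptions.

```python
import subprocess


def build_ffmpeg_pipe_cmd(width, height, fps, out_path, codec="libx264"):
    """Build an ffmpeg command that reads raw RGB24 frames from stdin.

    Hypothetical helper for illustration; the codec default is an assumption.
    """
    return [
        "ffmpeg",
        "-y",                      # overwrite the output file if it exists
        "-f", "rawvideo",          # input is headerless raw frames
        "-pix_fmt", "rgb24",       # 3 bytes per pixel
        "-s", f"{width}x{height}", # frame size must be given for raw input
        "-r", str(fps),            # input frame rate
        "-i", "-",                 # read the input from stdin
        "-c:v", codec,
        out_path,
    ]


def encode_frames(frames, cmd):
    """Write each frame (a bytes object) to ffmpeg's stdin, then wait.

    Requires ffmpeg on PATH; not called in this sketch.
    """
    proc = subprocess.Popen(cmd, stdin=subprocess.PIPE)
    for frame in frames:
        proc.stdin.write(frame)
    proc.stdin.close()
    return proc.wait()


cmd = build_ffmpeg_pipe_cmd(1920, 1080, 24, "out.mkv")
```

The editor only has to produce frames fast enough; the encoding itself is ffmpeg's problem, which is why storage and frame production end up as the bottlenecks.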

Yeah, I use a pre-made script that I pulled from GitHub and edited for my own use in Blender. I’ve heard there were issues with the multi-threaded rendering in Kdenlive, but that was quite a while ago. Maybe I should check that out too. Video editors on Linux have their own unique flaws and quirks in one way or another. I do realize that the VSE in Blender is meant for compositing small clips together. But it does work for larger projects, and I do like how customizable the interface is. It is just one of those things that I got used to because there were no better alternatives at the time that I started using it as a video editor.

I don’t want to get too deep into the video editor talk, because this is a modeling thread. But I have been getting into traditional animation in my own free time, and Blender may still be useful for additional effects and creating models for rotoscoping.

it works 100%, if you do literally nothing to the source footage before hitting render.

the rendering is ffmpeg, so most codecs are heavily multithreaded, but MLT processing is cpu bound and single threaded. this means any effects shots, color grading, masking, etc decrease the upper bound of rendering speed for each pass.
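A back-of-the-envelope way to picture that bound, as a toy model only: assume the single-threaded processing stage and the encoder run as a pipeline, so the slower of the two sets the frame rate. The function name and all the numbers here are made up for illustration, not measurements of MLT or ffmpeg.

```python
def render_fps_upper_bound(encode_fps, effect_costs_ms):
    """Toy model: per-frame effect costs add up on one thread,
    and the slower of (processing, encoding) caps the render speed.

    encode_fps      -- how fast the encoder could go on its own (assumed)
    effect_costs_ms -- per-frame cost of each effect in milliseconds (assumed)
    """
    per_frame_ms = sum(effect_costs_ms)
    if per_frame_ms == 0:
        # Untouched footage: only the encoder limits the speed.
        return encode_fps
    processing_fps = 1000.0 / per_frame_ms
    return min(encode_fps, processing_fps)


# No effects: the encoder is the only limit.
untouched = render_fps_upper_bound(120, [])
# A 10 ms grade plus a 15 ms mask costs 25 ms per frame, so 40 fps at best.
graded = render_fps_upper_bound(120, [10, 15])
```

Every effect you stack adds to the per-frame cost, so the ceiling keeps dropping no matter how many cores the encoder can use.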

the way I see it, blender doesn’t exist in a vacuum. 3d tools are meant to be used with compositors, video and image editors, production equipment and the like. Part of learning blender is how it stands in the ecosystem and interacts with other parts of it.

For example, if you only use blender, you may not know about OCIO/ACES, colorspaces, referencing your workflow to the screen vs the source for grading and matching, etc.

all of these are valuable skills for VFX and 3d
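As a small taste of why colorspaces matter: sRGB pixel values are not linear light, so doing math on them directly gives different results than working in linear space. A minimal sketch using the standard sRGB decoding curve (the function name is just for illustration, and this is only the transfer function, not a full OCIO/ACES pipeline):

```python
def srgb_to_linear(c):
    """Decode one sRGB-encoded channel value in [0, 1] to linear light,
    using the standard piecewise sRGB transfer function."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


# sRGB "half" is well below half in linear light.
mid_srgb_as_linear = srgb_to_linear(0.5)
```

An sRGB value of 0.5 is only about 21% linear intensity, which is why blending, blurring, or lighting done on undecoded values tends to look off.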

Great point.

No, I generally don’t worry about colorspace, linear space, and grading when video editing, as I don’t do it enough, nor is it my profession. I get what you are saying on a professional level. Also, colour management is important for creating a realistic looking render. It can be the one thing that takes a render from convincing to blah if not used correctly. Same with image composition.

yep. Speaking of which, everyone here should be using filmic blender.

Good thing I’ve got two different monitors with completely different white balances and color spaces to make sure my work is accurate and flawless…

/s

Filmic is love, Filmic is life.

just grab a colorimeter, get the icc profiles worked out, then return it

free standardization and profile matching

I borrowed one, my AOC monitor has some sort of LED degradation and genuinely can’t reproduce what my Acer monitor can.

that’s fun

usually you can get them pretty close tho