Ah, idk about a server apu. I wouldn’t know what the use case for it is. Usually servers don’t need that sort of processing balance.

Semi Accurate does not really like the announcement:

That’s Charlie. Always the skeptic.

There is a lot missing from this, so the more I read about it, the more skeptical I get that these will be usable units. We struggle with power density in modern datacenters as it is, and I can’t imagine these CPUs will draw any less than 300 W, meaning we will have to rethink rack design in order to make efficient use of DC space.

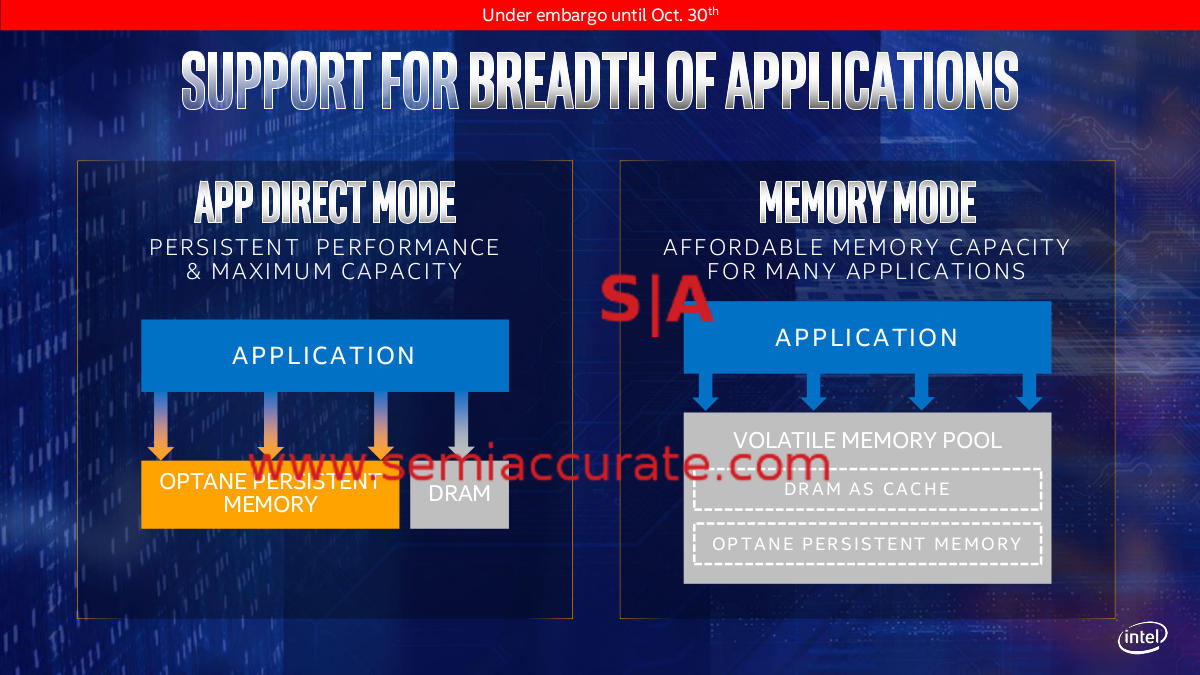

yep, you have not heard of that? It’s actually a very good idea for certain applications. Not to mention that the possible memory capacity is a lot higher than what you can get with DRAM. (also it’s non volatile, which can be very useful for some high availability applications)

I really hope the implementation is good. (Ideally there would be an open standard for this kind of technology)

It is a terrible idea!

You would have to redirect memory accesses in order to balance wear on the cells (latency goes bye bye) or half the stick would wear out in a year.

As I said …

It won’t replace DRAM, but there are applications where huge amounts of reasonably fast memory can have huge benefits/cost savings. I am thinking about certain database scenarios and veeery big datasets in scientific compute or engineering applications.

You probably can mitigate that with a reasonably smart controller and not exposing all the memory (or not filling it all the way).

Nah. The CPU is redirecting memory addresses all the time without killing latency. Every single access to RAM has to be translated from a logical address to a physical one, after all. Wear leveling should be much simpler (and faster) than that.
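To make the "simpler than address translation" point concrete, here is a rough sketch of a flat remap table of the kind a wear-leveling controller could use. Everything in it (the `RemapTable` name, the `swap_threshold` parameter, the swap-with-coldest policy) is made up for illustration and is not how Optane or any real controller is known to work. The idea is only that the per-access cost is one table lookup, while the rebalancing happens rarely.

```python
class RemapTable:
    """Hypothetical logical-to-physical block map with simple wear leveling."""

    def __init__(self, num_blocks, swap_threshold=1000):
        self.phys = list(range(num_blocks))  # logical block -> physical block
        self.wear = [0] * num_blocks         # write count per physical block
        self.swap_threshold = swap_threshold  # assumed tuning knob

    def translate(self, logical):
        # The hot path: a single array lookup per access.
        return self.phys[logical]

    def write(self, logical):
        p = self.phys[logical]
        self.wear[p] += 1
        # Rebalance only once every swap_threshold writes to a block.
        if self.wear[p] % self.swap_threshold == 0:
            self._swap_with_coldest(logical)
        return p  # physical block that received this write

    def _swap_with_coldest(self, logical):
        hot = self.phys[logical]
        # Physical block with the fewest writes so far.
        cold = min(range(len(self.wear)), key=self.wear.__getitem__)
        # Logical block currently mapped to the cold physical block.
        other = self.phys.index(cold)
        # Exchange the mappings (a real device would also move the data).
        self.phys[logical], self.phys[other] = cold, hot
```

The slow part (finding the coldest block) runs off the access path, which is the whole argument: reads and most writes only ever pay for `translate`.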

Whilst I agree, Intel have done a massive amount of bad things that they have not been held accountable for to any significant degree. It’s actually amazing AMD survived the 90s and 00s.

So, the pitchfork treatment is pretty much deserved, IMHO.

And this just reeks of hypocrisy after their hit piece on EPYC from last year.

That said, better intel CPUs = win win. AMD will need to keep the pace up to remain competitive and we all get better stuff.

edit:

that said, i don’t expect intel to be competitive with EPYC 7nm for some time. Their previous fab advantage is no longer a thing, and AMD have access to the best fabs available now. Plus i think their multi-chip architecture is ahead of where intel are with that.

Yes, however this “processing” step before Xpoint would be another layer after turning logical to physical address.

Guess why Intel has so many memory chips on their Optane drives vs how many chips Samsung has.

I’m just saying there are legitimate use cases for it, of course ideally there would be non volatile DRAM with the speed of L1 cache and without wear, in the capacity of hard drives but I guess we can’t have everything.

Both can be done simultaneously. When a program requests memory the OS has to decide which physical memory region to allocate. In the simplest case all the OS has to do is to assign the least frequently used page. This incurs no overhead at all when memory is accessed.
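A minimal sketch of that allocation-time idea, assuming the system keeps a per-page write counter (the `WearAwareAllocator` class and its methods are hypothetical, and write count stands in here for "least frequently used"):

```python
import heapq


class WearAwareAllocator:
    """Hypothetical allocator that hands out the least-worn free page."""

    def __init__(self, num_pages):
        # Min-heap of (write_count, page_number); all pages start unworn.
        self.free = [(0, page) for page in range(num_pages)]
        heapq.heapify(self.free)
        self.writes = {}  # page_number -> write count while allocated

    def allocate(self):
        # The wear decision is made once, here, not on every access.
        count, page = heapq.heappop(self.free)
        self.writes[page] = count
        return page

    def write(self, page):
        # An access only bumps a counter; no address redirection happens.
        self.writes[page] += 1

    def release(self, page):
        # Return the page to the pool along with its accumulated wear.
        heapq.heappush(self.free, (self.writes.pop(page), page))
```

With two pages, allocating page 0, writing to it a few times and releasing it means the next `allocate()` returns page 1, since it has seen fewer writes. This only evens out wear across allocations; a long-lived hot page would still need something extra.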

If Jim’s speculation is correct, Intel is going to get some shit for releasing a 48 core 48 thread chip. Ever since the new i7 was non-HT, I figured more flaws were coming.

As with every flaw the new flaw is “AMD is suspected to have this” and we have to wait for AMD to release a statement.

Time will tell.

next up…

intel to switch off ht at the compiler level to even the playing ground on a thread basis

…cause ‘security’

joke

If any of these people would actually offer tangible proof when they point fingers at AMD I would gladly accept it.

What I can’t stand is the ‘suspicion’ with no proof given, not even months later.

Most of them claim they will do future testing on AMD processors, but I never see anything materialize and it’s starting to annoy me.

But if it’s for security purposes, wouldn’t they need to force-disable HT on every CPU sold in the past 7 years? or am I missing something, and has this SKU only been produced for the single specific use case in which HT’s insecurity matters? /s

Sure and I am sure some server equipment has it turned off as an option.

If you watch the AdoredTV video, Jim is basically saying that at 14nm the parts are very power hungry. An answer to this would be for Intel to sell a “secure” server chip that saves power because it has no HT, and that clocks higher too. That’s just the speculation he made.

Thing is, not every workload will be exposed. So if Intel does do 48c48t, it will be up against AMD’s parts, which may be 64c128t and most likely draw less power because of 7nm. At the very minimum there will be 48c96t AMD chips.

This all stinks of the 28 core 5GHz workstation I am still waiting on. A nothing burger to distract from AMD’s launch.

To clarify, the HT security bug is only realistically a problem for servers. But you are talking about a near 40% loss of performance at the flip of a switch. So they are not going to do it unless they absolutely have to.

Desperation driven marketing.

or maybe worse: “Desperation driven engineering.”