So we know a bit about how Wi-Fi and radio work from my previous post, Intro to Radio and Wi-Fi Theory (Prelude to more advance topics).

So let us now talk about the advanced settings within your router that seem a bit esoteric for the common person.

Beacon Interval

Let's start with the most commonly tweaked setting on Wi-Fi routers: the beacon interval. My god, I could go on a massive rant about how much useless misinformation there is out there on this setting. Beacons, aka beacon frames, contain all the information about the network needed to identify it. They are transmitted periodically and are affected by a couple of different settings in the access point. They announce the presence of a Wi-Fi network (or any other wireless LAN, regardless of frequency) and synchronize the members of the network, or service set. Beacon frames are transmitted by the access point (AP), and they take up part of the usable bandwidth in the airspace. There is also a small time lag before each client sees the beacon, which depends on its distance from the transmitter. Unexpectedly, Wikipedia has a rather crude demonstration of this:

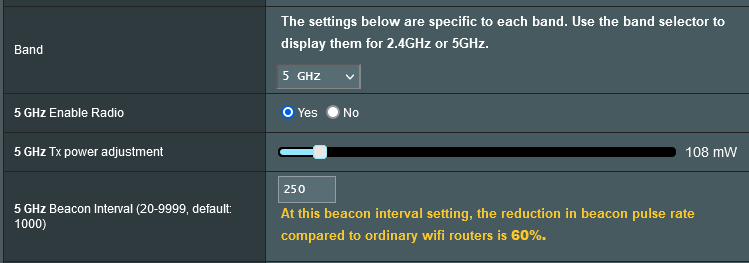

Now that you know about beacons: the beacon interval is the time between beacon transmissions. The time at which a node must send a beacon is called the Target Beacon Transmission Time (TBTT). I use the term node here because it applies to any kind of wireless network infrastructure. The beacon interval is simply the spacing between successive TBTTs. It is expressed in Time Units (TU), is a configurable parameter in the AP, and is typically set to 100. Most routers will say this is 100 ms, or 100 milliseconds. That is not quite correct: one TU is 1024 microseconds, so 100 TU is actually 102.4 ms. It's a minor detail, so I can see why engineers kept it simple for admins, and I have no problem with that.
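To make the unit conversion concrete, here is a minimal Python sketch. It only assumes the 802.11 definition of one Time Unit as 1024 microseconds; the function name is made up for illustration.

```python
# One 802.11 Time Unit (TU) is defined as 1024 microseconds.
TU_MICROSECONDS = 1024


def beacon_interval_ms(interval_tu: int) -> float:
    """Convert a beacon interval given in TUs to milliseconds."""
    return interval_tu * TU_MICROSECONDS / 1000


if __name__ == "__main__":
    # Show what a few router UI values really mean in milliseconds.
    for tu in (100, 500, 1000):
        print(f"{tu} TU = {beacon_interval_ms(tu):.1f} ms")
```

So the "100 ms" default in a router UI is really 100 TU, which works out to 102.4 ms.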

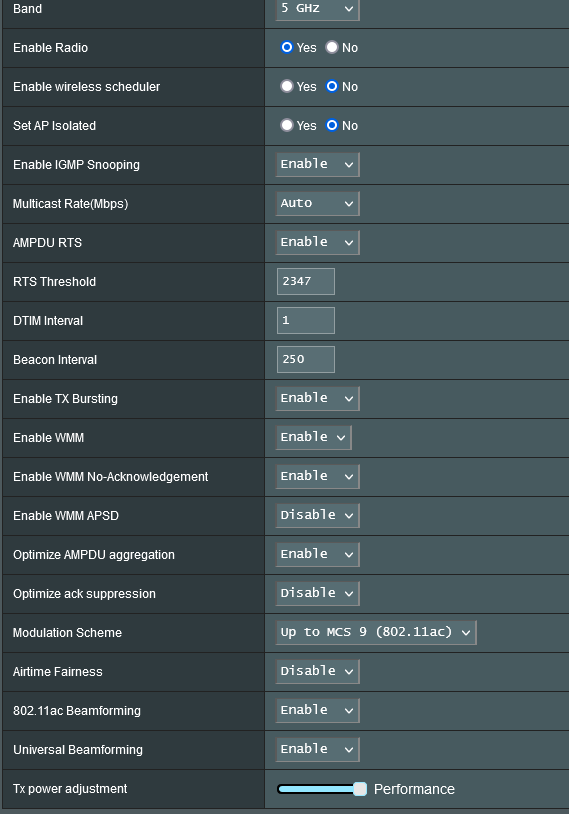

That being said, what happens when we change the setting? It varies. Believe it or not, sending beacons at the shortest possible interval DOES NOT magically improve Wi-Fi reception. If you are too far from the access point, it just is not going to happen. Refer back to my previous post for why. That said, if you have spotty connectivity relatively close to the access point, or at least in an area where you expect reception, and you notice this setting is very high, then lowering the number slightly may help. Generally speaking, IGNORE almost every guide that says to lower the value. Those guides offer no evidence for the claim. The fact of the matter is that the more time your access point spends clearing the airwaves to send a beacon, the less time is left for useful transmission. I recommend a value anywhere between 1000 ms and 10,000 ms, though 1000 is a safe bet and best for general-purpose use 99 percent of the time. Lowering it to 100 or 500 would help extremely time-sensitive applications, but since most of us do not use those (NO, I am not speaking of games), we do not need it that low. Manufacturers default to the low value to maximize compatibility across use cases, which is very understandable. I suggest raising the value as far as you can within 1000-10000, testing thoroughly that everything still works. It will aid throughput and gaming performance.
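For a rough feel of how much airtime beacons eat at different intervals, here is a back-of-the-envelope Python sketch. The beacon size (250 bytes), the 1 Mbps beacon rate, and the 192 microsecond long preamble are my assumptions for illustration, not measured values from any particular router, and the model ignores contention and multiple SSIDs.

```python
TU_US = 1024  # one 802.11 Time Unit in microseconds


def beacon_airtime_fraction(
    interval_tu: int,
    beacon_bytes: int = 250,     # assumed beacon size
    rate_mbps: float = 1.0,      # assumed beacon data rate
    preamble_us: float = 192.0,  # assumed long-preamble duration
) -> float:
    """Fraction of total airtime one SSID spends sending beacons."""
    # bits divided by Mbps gives microseconds
    beacon_us = preamble_us + beacon_bytes * 8 / rate_mbps
    return beacon_us / (interval_tu * TU_US)


if __name__ == "__main__":
    for tu in (100, 500, 1000):
        print(f"BI={tu}: {beacon_airtime_fraction(tu):.2%} of airtime")
```

Under these assumptions the beacon cost shrinks in direct proportion to the interval, which is the trade-off this section is describing.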

DTIM Interval

Another setting routers have is the DTIM. The delivery traffic indication map, or DTIM, interval goes hand in hand with the beacon interval setting. The DTIM period is a number that determines how often a beacon frame includes a Delivery Traffic Indication Message, and this number is included in each beacon frame. This is the synchronization part of the beacon frame itself. Remember how I said beacons allow you to see the network? They also sync the network. That's why you do not want to exceed 10000 ms between synchronizations.

Depending on the timing set on your router, the router will buffer broadcast and multicast data and let your devices, or clients, know when to come out of power save mode to receive it. The more often the DTIM is transmitted, the more often your radio devices wake up, and the more battery they use because they spend less time in power save mode. By setting a low DTIM and beacon interval you could keep your devices awake indefinitely so they never go into PS mode when idling, though this would be rather useless, don't you think? Depending on the use case, such a setup can add up to 10~20% power consumption, which is not good for battery-powered devices. The setting can be set from 1-255, as it's an 8-bit value in the firmware. Router settings typically let you set the "DTIM Interval", from which you can calculate the DTIM period. Some Wi-Fi routers also let you set the period directly, but that is rare because of the complication involved, and it is typically only found on professional radios. So what does this value do to your network? Ever wonder why routers keep shipping with ever larger buffer memory? Well, guess what: 99 percent of Wi-Fi devices automatically use the power save feature, so the router has to buffer data, sometimes for long periods, if the data does not require an ACK, or acknowledgement. The DTIM period comes into play whenever one of the devices on your network uses power save mode. When one device enables power save mode, the AP will use DTIM for all the devices connected to that particular SSID and buffer the data messages until it's necessary to send them. This is usually determined by a timeout bit in the data headers. In most home use cases, such as gaming or general internet use, the default value set by your router manufacturer is sufficient. Merlin firmware for ASUS routers typically has a DTIM of 3 and a beacon interval of 1000.

Why these values? Well, RMerlin, like many other people who have worked with Wi-Fi enough, knows how to optimize performance with little sacrifice for both general and niche needs. These values are actually best. The DTIM is low enough that VoIP and SIP applications do not stutter, and the beacon interval is long enough to open up the airwaves for good throughput. For connected devices, the synchronization time comes from multiplying the DTIM by the beacon interval. In RMerlin's firmware that is 3 × 1000 = 3000, or roughly 3072 ms between beacons that resynchronize devices. You can see how 3 seconds of useful transmission time is better than 0.1 seconds (0.1 comes from DTIM=1, BI=100 ms, which is what most guides tell you to set). Not to mention the benefit of longer sleep times for devices. Some poorly coded or small-buffer routers may not be able to buffer for a long holding period, which can potentially crash the router or cause data to be lost. You may also want to lower the value if you notice frequent connection drops, which can start to occur at higher DTIM and BI values. Some devices drop the connection when they do not receive beacons or miss several data transmissions. Hence why both too high and too low are bad settings. So my recommendation is DTIM=3, BI=1000, or you can experiment with the alternative DTIM=1, BI=3000, which will have the same functionality. So now you know what these settings do. Do not blindly follow guides. Test them for yourself.
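The DTIM times beacon interval arithmetic is easy to check for yourself. This is a small Python sketch, assuming the beacon interval is entered in Time Units of 1024 microseconds; the function name is only illustrative.

```python
TU_US = 1024  # one 802.11 Time Unit in microseconds


def dtim_wake_period_ms(dtim_period: int, beacon_interval_tu: int) -> float:
    """Time between DTIM beacons, i.e. how often sleeping clients must wake."""
    if not 1 <= dtim_period <= 255:
        raise ValueError("DTIM period is an 8-bit value in the range 1-255")
    return dtim_period * beacon_interval_tu * TU_US / 1000


if __name__ == "__main__":
    # Compare the combinations discussed in the text.
    for dtim, bi in ((1, 100), (3, 1000), (1, 3000)):
        ms = dtim_wake_period_ms(dtim, bi)
        print(f"DTIM={dtim} BI={bi}: wake every {ms:.1f} ms")
```

DTIM=3 with BI=1000 and DTIM=1 with BI=3000 give the same wake period (3072 ms); what differs is how often a plain beacon goes out in between.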

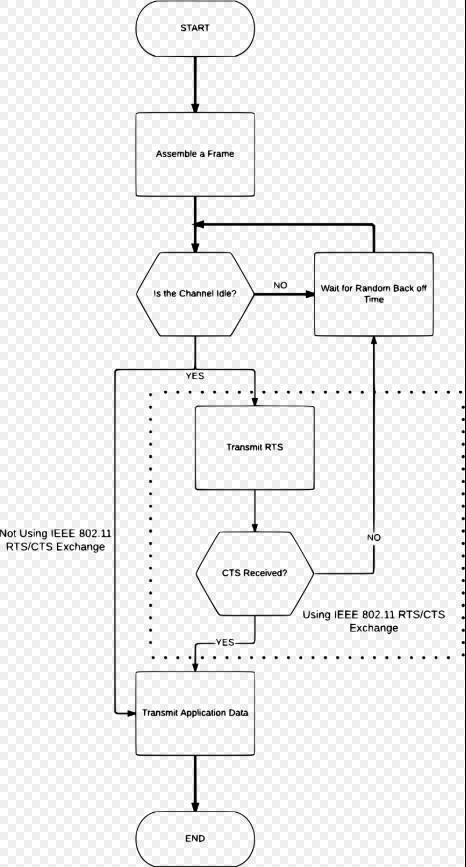

For the technically oriented, both settings are part of carrier-sense multiple access with collision avoidance (CSMA/CA), which is used in many different IEEE communication systems. This flow chart summarizes it nicely.

I won't get into it here, as it's a whole new topic that would also have to cover the hidden node dilemma.

Moving on, because diving deeper into that requires talking about modulation, which is actually really fun, but that's for another time.

WMM Support

WMM stands for Wi-Fi Multimedia, also known as Wireless Multimedia Extensions. I think it is pretty obvious what they do. Keep them on, simple as that. Now let us talk about them. After some time, the IEEE needed a method for a STA or AP (station or access point) to provide a certain quality of service to clients. Multimedia and streaming were a booming market during this time, and wireless needed to keep up. So among the billion other triggers that make a router send out data and a synchronizing beacon, this is one of them. Given the myriad of power-saving mechanisms and the different ways an incorrect setup could give a not-so-nice wireless experience, the 802.11 group had to standardize this.

WMM provides a basic set of QoS features to IEEE 802.11 networks. WMM prioritizes traffic according to four access categories: voice, video, best effort, and background. However, it does not provide guaranteed throughput, which allows it to work alongside other QoS services. It is suitable for well-defined applications that require QoS, such as Voice over IP on Wi-Fi phones or certain streaming applications. WMM replaces the Wi-Fi distributed coordination function (DCF) for CSMA/CA wireless frame transmission with the Enhanced Distributed Coordination Function (EDCF, called EDCA in later versions of the standard). Wow, did you get through all that? They really need to stop engineering fancy names for data control procedures. So EDCF, per the WMM specification set forth by the well-known Wi-Fi Alliance and based on IEEE 802.11e, defines the labels AC_VO, AC_VI, AC_BE, and AC_BK for the channel access parameters that a WMM-enabled station or network uses to control how long its transmission opportunity (TXOP) lasts, according to the header data. It's a method to prioritize stuff that needs prioritizing. This is where the DTIM and BI also come into play. With those two settings we were talking about the entire network's useful transmission time. It would be inefficient to use that blindly. So WMM is used to allocate that useful transmission time by deciding which devices are receiving the kinds of packets that need more airtime, or sometimes immediate airtime. However, this setting cannot override the DTIM and BI. This same set of Wi-Fi extensions leads to APSD. More on that later.
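Here is a small Python sketch of how frames end up in the four access categories. The mapping from 802.1D user priority (0-7) to access category follows the standard WMM table. The strict highest-first ordering in `order_for_transmit` is a deliberate simplification on my part: real EDCA gives higher categories a statistical edge through shorter waits and contention windows, not an absolute one.

```python
# 802.1D user priority (0-7) to WMM access category.
UP_TO_AC = {
    1: "AC_BK", 2: "AC_BK",  # background
    0: "AC_BE", 3: "AC_BE",  # best effort
    4: "AC_VI", 5: "AC_VI",  # video
    6: "AC_VO", 7: "AC_VO",  # voice
}

# Highest priority first.
AC_ORDER = ["AC_VO", "AC_VI", "AC_BE", "AC_BK"]


def access_category(user_priority: int) -> str:
    """Return the WMM access category for an 802.1D user priority."""
    return UP_TO_AC[user_priority]


def order_for_transmit(frames):
    """Sort (label, user_priority) pairs so higher categories go first.

    Simplified model: real EDCA is probabilistic, not strict priority.
    """
    return sorted(frames, key=lambda f: AC_ORDER.index(access_category(f[1])))


if __name__ == "__main__":
    queue = [("backup", 1), ("voip", 6), ("web", 0), ("video", 5)]
    for label, up in order_for_transmit(queue):
        print(label, access_category(up))
```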

Subset – Ack Suppression or No Acknowledgement

In summary, an acknowledgement (ACK) is a verification signal that your wireless client transmits back to the router or access point to let it know that the data arrived correctly and whether or not the device will enter power save again. The ACK here is an 802.11 MAC-layer error-checking mechanism (not to be confused with TCP ACKs), and it costs bandwidth and airtime since the exchange is a three-step process. Under normal Wi-Fi conditions, your wireless router sends data to your devices after the DTIM is flagged and the network is synchronized, the devices confirm to the router that the data was received (ACK), and then the router either proceeds to send more data or resends the data if there were any problems. It may also send a power save delivery packet to put the client back into power save mode via APSD.

Generally, keep this setting disabled. The option does have its purposes in the right setting. You may consider turning it on if your wireless router is serving multiple devices that do not handle sensitive data. The No-ACK setting can reduce the airtime each device needs, since no airtime is spent waiting for acknowledgements. However, in most cases this potential benefit does not outweigh the losses from data errors.

Optimize AMPDU Aggregation

AMPDU, or Aggregated MAC (Media Access Control) Protocol Data Unit, is used to improve data transmission by grouping together several MPDU chunks. You may have also come across the term AMSDU while googling and finding not so much information. The only thing you need to know is that they are completely different settings that aggregate different parts of the transmission, and AMSDU is often not available to modify, so I am ignoring it. PM me for an explanation of it.

Because of how the mechanism works, the AMPDU option offers a few advantages and, of course, some disadvantages. AMPDU is less efficient in terms of processing and speed if used standalone. AMPDU does improve overall network performance by reducing overhead during transmission. AMPDU improves error checking because of its additional Block ACK feature. It has very specific use cases. One such case is a very crowded wireless airspace, where it aids the error detection and correction part of transmissions in a noisy environment. If you have a dense network with many connected devices, it will increase the capacity of the network by freeing up overhead. However, if you game or use VoIP, it's best to leave this off. Also turn it off if you have a strong signal or fewer than about 7-10 devices. Some guides say 3, but with the spectrum efficiency of 802.11ac, routers can handle many devices. Ever wonder why your Skype calls or games lag on a public network? BINGO, this setting affects it, and that's actually a good thing, because to a certain degree it is what lets everyone use the Wi-Fi network with minimal congestion issues.
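To see why aggregation frees up overhead, here is a toy Python model. The 100 microseconds of payload time per frame and 150 microseconds of fixed per-transmission overhead (preamble, contention, acknowledgement) are made-up numbers for illustration only.

```python
def airtime_efficiency(
    n_frames: int,
    payload_us: float = 100.0,   # assumed airtime of one frame's payload
    overhead_us: float = 150.0,  # assumed fixed cost per transmission
) -> float:
    """Share of airtime carrying payload when n_frames share one overhead."""
    if n_frames < 1:
        raise ValueError("need at least one frame")
    payload_total = n_frames * payload_us
    return payload_total / (overhead_us + payload_total)


if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        print(f"{n:2d} frames per aggregate: {airtime_efficiency(n):.1%} payload")
```

The flip side, which this toy model does not capture, is that frames may sit waiting to be bundled, and that is one plausible reason latency-sensitive traffic like games and VoIP can feel worse with aggregation on.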

AP Isolation

Isolates all devices from crosstalking with one another. Simple as that. Do not turn it on in a home environment. It's best used on a public network as a security mechanism. Enough said.

IP Flood Detection

An IP flood is a type of denial-of-service attack in which the system is flooded with information, using up all available bandwidth and preventing legitimate users from getting access. Basically, one device could say "all the bandwidth is mine", and usually this is done to isolate the network and the few computers the attacker is targeting. When IP Flood Detection is enabled, your router can block malicious devices that are attempting to flood other devices. At home it isn't needed; in a public setup it is a useful security feature. It's generally standard, so you will find it on almost all routers, and if you don't, it's probably embedded and already enabled.

Multicast Rate – (DON'T misconfigure this)

Let's clear up all the misinformation on this setting. First off, the multicast rate option by itself WILL NOT AFFECT the actual range of your wireless network. For example, if you have one device that is outside the range of your router, a lower multicast rate WILL NOT extend the wireless range of your router. The reverse is also true: a higher multicast rate WILL NOT shrink the range of your wireless network. It can, however, affect the useful range in subtle ways. Second, the multicast rate WILL NOT affect the noise floor or how the router deals with interference, your wireless signal strength, quality, maximum speed, minimum speed, or pretty much anything else you see out there on homemade wikis and forums. Changing the multicast setting will not affect any of those wireless network characteristics. I think I have beaten the dead horse long enough.

This is what the multicast rate does: IT CAN improve the overall performance of your network when used in the right manner. A high multicast rate will "lower" the effective coverage area of your wireless network when the router is serving multiple devices simultaneously. The same is true in reverse. These are, however, edge-of-the-network cases. At its core, this setting is about IP multicast datagrams. Multicast groups together really big amounts of transmissions and codes in which part of the data goes to which device. It's almost like multiplexing. It's a point-to-multipoint transmission type that allows one-to-many or many-to-many real-time communication over a Wi-Fi network, and it's used to improve overall efficiency. This transmission type falls between unicast and broadcast: unicast goes from one place to one device, broadcast is one to all, and multicast is one to some or many. Hence, rather than sending thousands of copies of a stream, the server streams a single flow that is then directed by routers on the network to the hosts that have said they want the stream. This removes the need to send redundant traffic over the network and is also likely to reduce CPU load on systems that are not using the multicast stream, yielding an important efficiency gain for both server and network.

Where is the relation? Well, the rate at which this is done is significantly lower than the rest of the network's unicast rate. You can find your unicast rate written as "link speed" in most applications. This can be upwards of gigabits. Multicast, however, requires processing and modulation and certain headers and simultaneous communication, so the network has to slow down to a synchronized rate on the Wi-Fi network (this differs from internet and wired multicast). Typically the rate is set to the highest rate supported by the farthest device in the group being sent to.

To optimize this setting, you should disable IGMP Snooping and fix the multicast rate at the lowest possible value. Some routers have a lowest value of 1, 2, or 5.5 Mbps, with the appropriate modulation being selected automatically. Here are two setups. Say your router is located in your living room and a) you have a single laptop in your bedroom, or b) you have multiple tablets and mobile devices streaming music, plus an Apple TV or Chromecast streaming Netflix, in your living room. Scenario b will benefit from a higher multicast setting, which requires higher modulation, LOWERING the overall effective range of your network. However, too high a multicast rate can hinder the performance of the laptop in the bedroom, as the multicast will take away available bandwidth, measured in Mbps. The only time you should optimize and test different multicast rate settings is when you run multiple streaming devices simultaneously in your home.
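If you do end up testing different multicast rates, a quick way to reason about the airtime cost is to divide the stream bitrate by the multicast rate. The Python sketch below does just that. It ignores preambles, headers, and retries, and the 0.5 Mbps stream is a made-up example value.

```python
def multicast_airtime_share(stream_mbps: float, multicast_rate_mbps: float) -> float:
    """Rough fraction of airtime a multicast stream occupies at a given rate.

    Ignores per-frame overhead; a result above 1.0 means the stream
    cannot fit at that multicast rate at all.
    """
    if multicast_rate_mbps <= 0:
        raise ValueError("multicast rate must be positive")
    return stream_mbps / multicast_rate_mbps


if __name__ == "__main__":
    stream = 0.5  # hypothetical multicast stream bitrate in Mbps
    for rate in (1, 2, 5.5, 11):
        share = multicast_airtime_share(stream, rate)
        print(f"{rate:>4} Mbps multicast rate: {share:.1%} of airtime")
```

The lower the rate, the more airtime the same stream takes away from everything else, while a higher rate needs a cleaner signal to be decoded at all. That is the trade-off between the two scenarios described here.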

Preamble Type

Long or short? No, Texans, bigger is not automatically better, and shorter is not automatically better either. It depends heavily on your network setup. So what is the preamble? Once again, it's another error-checking setting; the preamble has to do with channel aging and pilot tones. Long has 8 and short has 4. It gives us the characteristics of the channel we are transmitting on. There are a lot of these, and understanding why so many are needed would require expanding on the theory side of this stuff. It adds some additional data headers to check for Wi-Fi transmission errors. A short preamble uses shorter data strings for error checking, which means it can be, and usually is, much faster than a long preamble, with less overhead. A long preamble uses longer data headers, which allow for better error checking. This takes away from the useful data area, but it should be used where errors are occurring or in noisy environments. It should also be used on large networks. Even though it slows down the network, errors also slow things down and take up extra bandwidth to resend packets, so you can see the trade-off. At home, go short. In public, on large networks, or for long-distance transmissions, keep it long. It's really that simple.
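To put rough numbers on the overhead difference, here is a Python sketch using the 802.11b DSSS figures, where the long preamble plus PLCP header lasts 192 microseconds and the short one 96 microseconds. Applying those durations here is my assumption for illustration; other PHY modes use different preamble formats.

```python
# 802.11b DSSS preamble + PLCP header durations (assumed for this example).
LONG_PREAMBLE_US = 192.0
SHORT_PREAMBLE_US = 96.0


def frame_airtime_us(payload_bytes: int, rate_mbps: float, preamble_us: float) -> float:
    """Airtime of one frame: preamble plus payload bits at the data rate."""
    # bits divided by Mbps gives microseconds
    return preamble_us + payload_bytes * 8 / rate_mbps


def preamble_share(payload_bytes: int, rate_mbps: float, preamble_us: float) -> float:
    """Fraction of the frame's airtime spent on the preamble."""
    return preamble_us / frame_airtime_us(payload_bytes, rate_mbps, preamble_us)


if __name__ == "__main__":
    for size in (100, 1500):
        long_share = preamble_share(size, 11, LONG_PREAMBLE_US)
        short_share = preamble_share(size, 11, SHORT_PREAMBLE_US)
        print(f"{size} bytes at 11 Mbps: long {long_share:.1%}, short {short_share:.1%}")
```

Small frames suffer the most from a long preamble, since the fixed cost is spread over fewer payload bits.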

Transmission Bursting // Packet Bursting

Sounds awesome in name. It is actually kind of boring in practice. Basically, without getting too far into the programming, it's a method to comb through and aggregate the useful data to be sent, remove what is not needed, and then burst multiple packets in rapid succession from a queue. Once finished, the access point tells the client the burst is over. It can only be used in unicast mode, and it really has been deprecated by faster networks. It allowed 54 Mbps 802.11g networks to seem like they were intermittently running at double the rate, aka 108 Mbps. You may have heard of Afterburner or turbo mode. These are all the same thing, but they are proprietary and need gear from the same manufacturer on both ends to make use of them. It can safely be disabled if you no longer use networking technologies older than Wireless N.

WMM APSD

WMM APSD stands for Wi-Fi Multimedia (WMM) Automatic Power Save Delivery. Basically, it is a feature that lets your mobile devices save battery while connected to your Wi-Fi network by allowing them to enter a standby mode. The APSD setting allows a smooth transition in and out of sleep mode by letting the mobile devices signal their current status to the router and, of course, vice versa. Remember how the beacon interval and DTIM period work together to conserve your devices' power and increase throughput? When a wireless adapter enters a power saving mode or sleep state, your router or access point can buffer the data and hold it for each device that has the WMM APSD function enabled. There are two types of APSD under this specification.

- U-APSD (Unscheduled Automatic Power Save Delivery): your client devices signal the router to transmit any buffered data, and the router sends a sleep signal back to the device when finished. This is controlled by the device, which is the master.

- S-APSD (Scheduled Automatic Power Save Delivery): the access point sends buffered data on a predetermined schedule known to the power-saving device, without any signal from the station other than the DTIM to synchronize the network. This allows devices to ignore beacons that are unrelated to them.

I would keep this option on. Turn it off only if you have connectivity issues, and turn it on if it's disabled by default.

I've decided that from this point on I will take packet type and protocol related explanations (e.g. IGMP snooping) in the comments, as it's just too much to get into here.

Beamforming

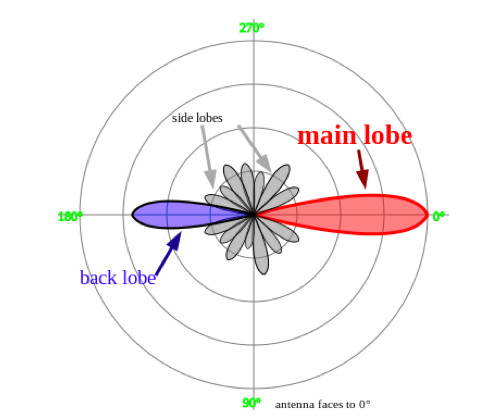

Finally, some radio related stuff. Okay, so you have multiple antennas forming an array. If you configured your antennas like I said in my last post, then beamforming does some interesting stuff. I cannot cover it all, because that would require a post by itself, so let us summarize. You remember the radiation pattern of an antenna? Well, beamforming, at least in the case of IMPLICIT beamforming, steers that pattern based on the signal quality at each antenna, which depends on the phase change of the signal. Here is a visual demonstration. Keep in mind that this will also increase radiation 180 degrees from the direction of the client. That is why implicit beamforming helps networks that are laid out laterally in the same direction.

Implicit beamforming does not require client devices to support beamforming, so it's useful in one direction. It is more of a router-based function: the station estimates the direction of the data sent and received and adjusts its internal or external antennas to boost the downlink speed only. To improve the uplink you need explicit beamforming, which SHOULD only be enabled if all devices support beamforming and the same beamforming technology. Beamforming can be achieved in many different ways, not only by adjusting certain power parameters. It can change the phase and modulation as well. This is a topic too big to be anything other than a summary here. If demand is high enough, I will make a post on it.
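For the curious, here is a very idealized Python sketch of phase steering with a uniform linear array. It assumes identical isotropic antennas spaced half a wavelength apart and angles measured from broadside. Real routers are nowhere near this tidy, and the function names are just for illustration.

```python
import cmath
import math


def steering_phases(n_antennas: int, steer_deg: float, spacing_wl: float = 0.5):
    """Per-antenna phase shifts (radians) that aim the main lobe at steer_deg."""
    k = 2 * math.pi * spacing_wl * math.sin(math.radians(steer_deg))
    return [-k * n for n in range(n_antennas)]


def array_gain(n_antennas: int, steer_deg: float, look_deg: float,
               spacing_wl: float = 0.5) -> float:
    """Array factor magnitude seen from look_deg (maximum is n_antennas)."""
    phases = steering_phases(n_antennas, steer_deg, spacing_wl)
    k = 2 * math.pi * spacing_wl * math.sin(math.radians(look_deg))
    # Sum each antenna's contribution: path phase plus applied steering phase.
    total = sum(cmath.exp(1j * (k * n + phases[n])) for n in range(n_antennas))
    return abs(total)


if __name__ == "__main__":
    for look in (-30, 0, 30, 150):
        print(f"look {look:4d} deg: gain {array_gain(4, 30, look):.2f}")
```

In this toy model, steering a 4-antenna array toward 30 degrees gives full gain there, a null at -30 degrees, and a mirror-image lobe at 150 degrees on the other side of the array. That mirror lobe is in the same spirit as the extra radiation away from the client mentioned above.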

Roaming Assist

Simply put, if the received signal strength (RSSI) is low enough, the router will hand off the device to a closer router. Usually found on enterprise systems, it's best used in Wi-Fi mesh networks and multi-AP systems that can work together to determine the best AP to connect to. Why is the value a negative number? Because RSSI is normally reported in dBm, a logarithmic unit relative to 1 milliwatt, so 0 dBm means 1 mW of received power. And NO, you will never get close enough to the router to see anything near zero, because there is a minimum distance from the antenna you need in order to receive the signal properly, wave propagation wise.
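As a sketch of the kind of decision roaming assist makes, here is some Python. The -70 dBm threshold and the 5 dB hysteresis are hypothetical example values, not defaults from any specific router, and actual vendor logic will differ.

```python
def dbm_to_mw(dbm: float) -> float:
    """Convert a power level in dBm to milliwatts (0 dBm is 1 mW)."""
    return 10 ** (dbm / 10)


def should_roam(current_dbm: float, candidate_dbm: float,
                threshold_dbm: float = -70.0,   # hypothetical roam threshold
                hysteresis_db: float = 5.0) -> bool:
    """Hand off only if the current AP is weak AND the other AP is clearly better."""
    weak = current_dbm < threshold_dbm
    clearly_better = candidate_dbm >= current_dbm + hysteresis_db
    return weak and clearly_better


if __name__ == "__main__":
    print(f"-30 dBm is {dbm_to_mw(-30):.4f} mW")
    print(should_roam(-75, -60))  # weak signal, much better candidate
    print(should_roam(-65, -50))  # still above the threshold, stay put
```

The hysteresis is there so a device sitting halfway between two APs does not bounce back and forth between them.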

RTS Threshold

Meant to mitigate the hidden node issue. Measured in octets (bytes). Leave it alone. Don't touch it. It's for 802.11g networks. The RTS/CTS protocol was designed under the assumption that all nodes have the same transmission range, and it does not fully solve the hidden terminal problem. It can also cause a new issue called the exposed terminal problem, in which a nearby wireless node that is associated with another access point overhears the RTS and is signaled to back off and cease transmitting for the time specified in the RTS. This causes interference between older networks within range of each other. OOPS. RTS and CTS are very much no longer needed, in my personal opinion. If someone knows more about it, feel free to chime in.

If I forgot any, comment below and I'll explain them.
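The threshold itself is a simple size check, sketched here in Python. The 2347 default is a value commonly shown in router UIs and is taken as an assumption; since it is larger than a normal frame, it effectively leaves RTS/CTS off.

```python
def needs_rts(frame_octets: int, rts_threshold: int = 2347) -> bool:
    """RTS/CTS is used only for frames larger than the threshold (in octets)."""
    return frame_octets > rts_threshold


if __name__ == "__main__":
    for size in (200, 1500, 2400):
        print(f"{size} octets: RTS/CTS {'on' if needs_rts(size) else 'off'}")
    # Lowering the threshold makes even ordinary frames trigger the handshake.
    print(needs_rts(1500, rts_threshold=500))
```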