@aBav.Normie-Pleb

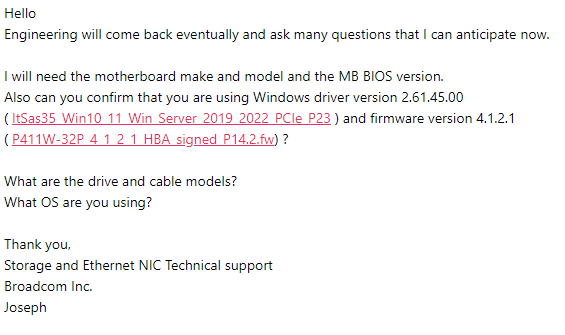

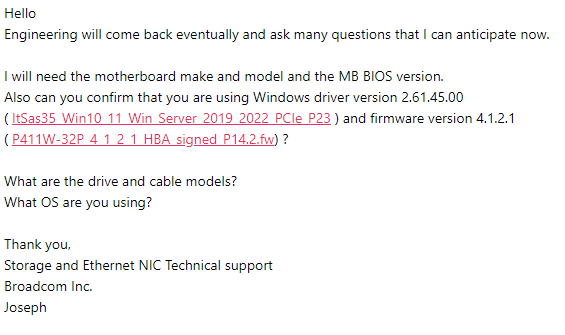

I asked on your behalf:

“When used in workstations & the system is put to sleep and then awoken, a BSOD occurs when writes are directed towards devices attached to the P411W-32P.”

I received this in return:

Anyone bought/tested the Lenovo 4C57A65446 retimer card?

Any reason this wouldn't work in an Asus WRX80 SAGE motherboard? I'm considering that card or the Linkreal LRNV9F24 retimer card:

Shenzhen Lianrui Information Technology Co., Ltd. Has anyone tested this one and had success with Gen4 PCIe U.2 Drives?

I have a really tight build and the Supermicro card is just a touch too long, or I would jump on that.

@Apophis

Welcome to the community!

Unfortunately not myself; the only active regular PCIe slot AICs I have tried are ones with PCIe switches.

If it matters to you, be aware that with simple ReDriver/ReTimer AICs you might not be able to hot-plug drives but have to do a complete power cycle of the whole system.

If you check out that AIC please write a short comment here about your experiences.

Maybe I should start a PayPal/Patreon for PCIe adapter experimentation; then I might be less hesitant to check out stuff I haven’t looked at in this thread yet.

Thanks!

Hot-plug is not much of a concern for me, but I appreciate the heads-up. If no one can confirm whether it works, I will assume the role of guinea pig, try to get hold of the Linkreal adapter, and report back. No need for the Patreon.

This has been a very useful thread to help plan my build and has probably saved me from more than a few expensive mistakes…

From the YouTube comment section:

Reto Gysi

I run my two P5800X drives via an Adaptec HBA Ultra 1200P-32i PCIe card. The drives are then presented as SCSI devices to the OS.

aBavarian Normie Pleb

Can you run benchmarks to differentiate the emulated SCSI protocol compared to a direct connection with native NVMe?

I don’t have the connector that Wendell presented in the video, so I can’t test a direct PCIe connection.

Here’s my try at benchmarking.

I can’t test the raw device, as the drive is in use, so I have to create an LVM volume.

Device #0

Device is a Hard drive

State : Raw (Pass Through)

Drive has stale RIS data : False

Disk Name : /dev/sdi

Block Size : 512 Bytes

Physical Block Size : 512 Bytes

Transfer Speed : PCIe 4.0 (16.0 GT/s)

Reported Channel,Device(T:L) : 0,24(24:0)

Reported Location : Direct Attached, Slot 0(Connector 3:CN3)

Vendor : NVME

Model : INTEL SSDPF21Q400GB

Firmware : L0310200

World-wide name : 0000000000000000

Reserved Size : 32768 KB

Used Size : 0 MB

Unused Size : 381522 MB

Total Size : 381554 MB

Write Cache : Unknown

S.M.A.R.T. : No

S.M.A.R.T. warnings : 0

SSD : Yes

Boot Type : None

Current Temperature : 32 deg C

Maximum Temperature : 36 deg C

Threshold Temperature : 70 deg C

PHY Count : 4

Drive Configuration Type : HBA

Mount Point(s) : Not Mounted

Drive Exposed to OS : True

Sanitize Erase Support : True

Sanitize Lock Freeze Support : False

Sanitize Lock Anti-Freeze Support : False

Sanitize Lock Setting : None

Usage Remaining : 0 percent

Estimated Life Remaining : Not Applicable

SSD Smart Trip Wearout : False

56 Day Warning Present : False

Drive SKU Number : Not Applicable

Drive Part Number : Not Applicable

Last Failure Reason : No Failure

----------------------------------------------------------------

Device Phy Information

----------------------------------------------------------------

Phy #0

Negotiated Physical Link Rate : PCIe 4.0 (16.0 GT/s)

Negotiated Logical Link Rate : PCIe 4.0 (16.0 GT/s)

Maximum Link Rate : PCIe 4.0 (16.0 GT/s)

Phy #1

Negotiated Physical Link Rate : PCIe 4.0 (16.0 GT/s)

Negotiated Logical Link Rate : PCIe 4.0 (16.0 GT/s)

Maximum Link Rate : PCIe 4.0 (16.0 GT/s)

Phy #2

Negotiated Physical Link Rate : PCIe 4.0 (16.0 GT/s)

Negotiated Logical Link Rate : PCIe 4.0 (16.0 GT/s)

Maximum Link Rate : PCIe 4.0 (16.0 GT/s)

Phy #3

Negotiated Physical Link Rate : PCIe 4.0 (16.0 GT/s)

Negotiated Logical Link Rate : PCIe 4.0 (16.0 GT/s)

Maximum Link Rate : PCIe 4.0 (16.0 GT/s)

----------------------------------------------------------------

Device Error Counters

----------------------------------------------------------------

Aborted Commands : 0

Bad Target Errors : 0

Ecc Recovered Read Errors : 0

Failed Read Recovers : 0

Failed Write Recovers : 0

Format Errors : 0

Hardware Errors : 0

Hard Read Errors : 0

Hard Write Errors : 0

Hot Plug Count : 0

Media Failures : 0

Not Ready Errors : 0

Other Time Out Errors : 0

Predictive Failures : 0

Retry Recovered Read Errors : 0

Retry Recovered Write Errors : 0

Scsi Bus Faults : 0

Sectors Reads : 0

Sectors Written : 0

Service Hours : 11

Command completed successfully.
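For context on the link rates reported above: all four PHYs negotiated PCIe 4.0 (16.0 GT/s), i.e. an x4 Gen4 link per drive. The following is a minimal sketch of the theoretical ceiling for such a link, assuming only the 128b/130b line encoding used by PCIe 3.0 and later. The function name is made up for illustration, and real throughput will be lower because of packet and protocol overhead.

```python
# Rough theoretical ceiling of a PCIe link, per direction.
# Only line encoding is accounted for; TLP/DLLP overhead is ignored.

def pcie_link_gbytes_per_s(gt_per_s: float, lanes: int) -> float:
    """Return usable GB/s for a link using 128b/130b encoding (PCIe 3.0+)."""
    encoding_efficiency = 128 / 130
    gbits_per_s = gt_per_s * lanes * encoding_efficiency
    return gbits_per_s / 8  # bits -> bytes

# Four PHYs at 16.0 GT/s, as reported in the arcconf output above.
x4_gen4 = pcie_link_gbytes_per_s(16.0, 4)
print(f"PCIe 4.0 x4 ceiling: {x4_gen4:.2f} GB/s")
```

Any sequential benchmark result above this figure cannot have come from the drive alone and most likely includes caching in host memory.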

root@zephir:~# lvcreate -L 20G -n benchmark optane /dev/sdi

Logical volume "benchmark" created.

With Linux buffering etc.

root@zephir:~# fio --filename /dev/optane/benchmark --ioengine libaio --group_reporting --time_based --runtime 30s --size 20G --stonewall --name read-SEQ1T8 --rw read --iodepth 1 --numjobs 8 --bs 1M --name write-SEQ1T8 --rw write --iodepth 1 --numjobs 8 --bs 1M --name read-SEQ1T1 --rw read --iodepth 1 --numjobs 1 --bs 1M --name write-SEQ1T1 --rw write --iodepth 1 --numjobs 1 --bs 1M --name read-RND4KQ1T8 --rw randread --iodepth 1 --numjobs 8 --bs 4k --name write-RND4KQ1T8 --rw randwrite --iodepth 1 --numjobs 8 --bs 4k --name read-RND4KQ1T1 --rw randread --iodepth 1 --numjobs 1 --bs 4k --name write-RND4KQ1T1 --rw randwrite --iodepth 1 --numjobs 1 --bs 4k

read-SEQ1T8: (g=0): rw=read, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

...

write-SEQ1T8: (g=1): rw=write, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

...

read-SEQ1T1: (g=2): rw=read, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

write-SEQ1T1: (g=3): rw=write, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

read-RND4KQ1T8: (g=4): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

...

write-RND4KQ1T8: (g=5): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

...

read-RND4KQ1T1: (g=6): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

write-RND4KQ1T1: (g=7): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

fio-3.25

Starting 36 processes

Jobs: 1 (f=1): [_(35),f(1)][100.0%][eta 00m:00s]

read-SEQ1T8: (groupid=0, jobs=8): err= 0: pid=981783: Thu Mar 9 22:44:56 2023

read: IOPS=11.1k, BW=10.9GiB/s (11.7GB/s)(326GiB/30001msec)

slat (usec): min=83, max=2434, avg=555.32, stdev=118.67

clat (nsec): min=381, max=26299, avg=644.13, stdev=284.18

lat (usec): min=83, max=2437, avg=556.20, stdev=118.71

clat percentiles (nsec):

| 1.00th=[ 410], 5.00th=[ 430], 10.00th=[ 442], 20.00th=[ 470],

| 30.00th=[ 510], 40.00th=[ 548], 50.00th=[ 588], 60.00th=[ 628],

| 70.00th=[ 692], 80.00th=[ 764], 90.00th=[ 892], 95.00th=[ 1048],

| 99.00th=[ 1416], 99.50th=[ 1640], 99.90th=[ 2704], 99.95th=[ 4384],

| 99.99th=[ 9152]

bw ( MiB/s): min= 656, max=14848, per=100.00%, avg=13628.09, stdev=366.89, samples=384

iops : min= 656, max=14848, avg=13627.50, stdev=366.90, samples=384

lat (nsec) : 500=27.16%, 750=50.94%, 1000=15.72%

lat (usec) : 2=5.98%, 4=0.14%, 10=0.05%, 20=0.01%, 50=0.01%

cpu : usr=0.20%, sys=60.72%, ctx=631911, majf=0, minf=11768

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=333656,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-SEQ1T8: (groupid=1, jobs=8): err= 0: pid=983909: Thu Mar 9 22:44:56 2023

write: IOPS=5494, BW=5495MiB/s (5762MB/s)(161GiB/30001msec); 0 zone resets

slat (usec): min=190, max=8984, avg=1083.18, stdev=134.11

clat (nsec): min=380, max=36479, avg=705.12, stdev=276.24

lat (usec): min=191, max=8987, avg=1084.05, stdev=134.14

clat percentiles (nsec):

| 1.00th=[ 524], 5.00th=[ 564], 10.00th=[ 580], 20.00th=[ 604],

| 30.00th=[ 620], 40.00th=[ 636], 50.00th=[ 652], 60.00th=[ 668],

| 70.00th=[ 700], 80.00th=[ 764], 90.00th=[ 892], 95.00th=[ 1020],

| 99.00th=[ 1400], 99.50th=[ 1592], 99.90th=[ 2864], 99.95th=[ 4384],

| 99.99th=[11456]

bw ( MiB/s): min= 1344, max= 8409, per=100.00%, avg=7229.60, stdev=121.49, samples=360

iops : min= 1344, max= 8408, avg=7228.80, stdev=121.47, samples=360

lat (nsec) : 500=0.46%, 750=77.96%, 1000=15.98%

lat (usec) : 2=5.37%, 4=0.16%, 10=0.05%, 20=0.01%, 50=0.01%

cpu : usr=1.80%, sys=70.55%, ctx=18799569, majf=0, minf=5990

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,164848,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-SEQ1T1: (groupid=2, jobs=1): err= 0: pid=985687: Thu Mar 9 22:44:56 2023

read: IOPS=2265, BW=2266MiB/s (2376MB/s)(66.4GiB/30001msec)

slat (usec): min=210, max=4748, avg=376.93, stdev=43.97

clat (nsec): min=440, max=10710, avg=613.38, stdev=162.16

lat (usec): min=211, max=4750, avg=377.75, stdev=44.03

clat percentiles (nsec):

| 1.00th=[ 490], 5.00th=[ 510], 10.00th=[ 524], 20.00th=[ 540],

| 30.00th=[ 548], 40.00th=[ 564], 50.00th=[ 572], 60.00th=[ 588],

| 70.00th=[ 612], 80.00th=[ 652], 90.00th=[ 748], 95.00th=[ 844],

| 99.00th=[ 1160], 99.50th=[ 1352], 99.90th=[ 1848], 99.95th=[ 2096],

| 99.99th=[ 5600]

bw ( MiB/s): min= 288, max= 2698, per=100.00%, avg=2515.37, stdev=490.03, samples=53

iops : min= 288, max= 2698, avg=2515.32, stdev=490.01, samples=53

lat (nsec) : 500=1.99%, 750=87.64%, 1000=8.16%

lat (usec) : 2=2.16%, 4=0.04%, 10=0.02%, 20=0.01%

cpu : usr=0.38%, sys=77.08%, ctx=101924, majf=0, minf=545

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=67968,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-SEQ1T1: (groupid=3, jobs=1): err= 0: pid=985959: Thu Mar 9 22:44:56 2023

write: IOPS=1642, BW=1642MiB/s (1722MB/s)(48.1GiB/30001msec); 0 zone resets

slat (usec): min=373, max=3373, avg=469.22, stdev=143.78

clat (nsec): min=421, max=14667, avg=582.02, stdev=167.27

lat (usec): min=374, max=3374, avg=469.93, stdev=143.86

clat percentiles (nsec):

| 1.00th=[ 462], 5.00th=[ 482], 10.00th=[ 490], 20.00th=[ 510],

| 30.00th=[ 524], 40.00th=[ 532], 50.00th=[ 540], 60.00th=[ 548],

| 70.00th=[ 572], 80.00th=[ 628], 90.00th=[ 732], 95.00th=[ 812],

| 99.00th=[ 1112], 99.50th=[ 1240], 99.90th=[ 1576], 99.95th=[ 1880],

| 99.99th=[ 7392]

bw ( MiB/s): min= 286, max= 2264, per=100.00%, avg=2050.25, stdev=333.13, samples=47

iops : min= 286, max= 2264, avg=2050.21, stdev=333.18, samples=47

lat (nsec) : 500=12.06%, 750=79.12%, 1000=7.27%

lat (usec) : 2=1.51%, 4=0.02%, 10=0.02%, 20=0.01%

cpu : usr=3.63%, sys=93.98%, ctx=372799, majf=0, minf=285

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,49270,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-RND4KQ1T8: (groupid=4, jobs=8): err= 0: pid=986341: Thu Mar 9 22:44:56 2023

read: IOPS=1452k, BW=5672MiB/s (5948MB/s)(166GiB/30001msec)

slat (nsec): min=791, max=1817.7k, avg=4300.97, stdev=6545.25

clat (nsec): min=230, max=236615, avg=283.11, stdev=115.22

lat (nsec): min=1102, max=1820.1k, avg=4622.57, stdev=6598.98

clat percentiles (nsec):

| 1.00th=[ 251], 5.00th=[ 251], 10.00th=[ 251], 20.00th=[ 262],

| 30.00th=[ 262], 40.00th=[ 262], 50.00th=[ 262], 60.00th=[ 270],

| 70.00th=[ 270], 80.00th=[ 310], 90.00th=[ 330], 95.00th=[ 362],

| 99.00th=[ 548], 99.50th=[ 604], 99.90th=[ 684], 99.95th=[ 732],

| 99.99th=[ 1464]

bw ( MiB/s): min= 485, max=15714, per=100.00%, avg=6357.36, stdev=667.16, samples=426

iops : min=124308, max=4022922, avg=1627484.93, stdev=170792.61, samples=426

lat (nsec) : 250=0.57%, 500=97.95%, 750=1.44%, 1000=0.02%

lat (usec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=0.01%, 50=0.01%

lat (usec) : 100=0.01%, 250=0.01%

cpu : usr=10.44%, sys=58.60%, ctx=6672678, majf=0, minf=4006

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=43565049,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-RND4KQ1T8: (groupid=5, jobs=8): err= 0: pid=986699: Thu Mar 9 22:44:56 2023

write: IOPS=1046k, BW=4086MiB/s (4284MB/s)(160GiB/40100msec); 0 zone resets

slat (nsec): min=791, max=40480k, avg=4605.42, stdev=212785.38

clat (nsec): min=231, max=186020, avg=271.79, stdev=93.97

lat (nsec): min=1092, max=40482k, avg=4909.55, stdev=212802.38

clat percentiles (nsec):

| 1.00th=[ 251], 5.00th=[ 251], 10.00th=[ 262], 20.00th=[ 262],

| 30.00th=[ 262], 40.00th=[ 262], 50.00th=[ 270], 60.00th=[ 270],

| 70.00th=[ 270], 80.00th=[ 270], 90.00th=[ 282], 95.00th=[ 302],

| 99.00th=[ 382], 99.50th=[ 410], 99.90th=[ 482], 99.95th=[ 548],

| 99.99th=[ 1720]

bw ( MiB/s): min= 1806, max= 7529, per=100.00%, avg=5937.94, stdev=154.52, samples=440

iops : min=462568, max=1927663, avg=1520112.31, stdev=39556.34, samples=440

lat (nsec) : 250=0.06%, 500=99.86%, 750=0.05%, 1000=0.02%

lat (usec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=0.01%, 50=0.01%

lat (usec) : 100=0.01%, 250=0.01%

cpu : usr=7.48%, sys=60.90%, ctx=5811320, majf=0, minf=3320

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,41943048,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-RND4KQ1T1: (groupid=6, jobs=1): err= 0: pid=987102: Thu Mar 9 22:44:56 2023

read: IOPS=48.8k, BW=191MiB/s (200MB/s)(5720MiB/30001msec)

slat (usec): min=13, max=2223, avg=19.73, stdev= 2.89

clat (nsec): min=290, max=49122, avg=340.18, stdev=86.89

lat (usec): min=14, max=2228, avg=20.17, stdev= 2.91

clat percentiles (nsec):

| 1.00th=[ 310], 5.00th=[ 310], 10.00th=[ 322], 20.00th=[ 322],

| 30.00th=[ 322], 40.00th=[ 330], 50.00th=[ 330], 60.00th=[ 330],

| 70.00th=[ 342], 80.00th=[ 350], 90.00th=[ 362], 95.00th=[ 402],

| 99.00th=[ 532], 99.50th=[ 556], 99.90th=[ 692], 99.95th=[ 1096],

| 99.99th=[ 2256]

bw ( KiB/s): min=190304, max=198152, per=100.00%, avg=195253.64, stdev=1819.73, samples=59

iops : min=47576, max=49538, avg=48813.37, stdev=454.93, samples=59

lat (nsec) : 500=98.21%, 750=1.70%, 1000=0.03%

lat (usec) : 2=0.05%, 4=0.01%, 10=0.01%, 20=0.01%, 50=0.01%

cpu : usr=4.63%, sys=26.85%, ctx=1464245, majf=0, minf=372

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=1464222,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-RND4KQ1T1: (groupid=7, jobs=1): err= 0: pid=987431: Thu Mar 9 22:44:56 2023

write: IOPS=254k, BW=993MiB/s (1041MB/s)(29.1GiB/30001msec); 0 zone resets

slat (nsec): min=1613, max=3241.8k, avg=2531.99, stdev=12494.18

clat (nsec): min=250, max=636787, avg=280.58, stdev=247.13

lat (nsec): min=1924, max=3243.6k, avg=2846.41, stdev=12509.62

clat percentiles (nsec):

| 1.00th=[ 262], 5.00th=[ 270], 10.00th=[ 270], 20.00th=[ 270],

| 30.00th=[ 270], 40.00th=[ 282], 50.00th=[ 282], 60.00th=[ 282],

| 70.00th=[ 282], 80.00th=[ 282], 90.00th=[ 290], 95.00th=[ 302],

| 99.00th=[ 350], 99.50th=[ 370], 99.90th=[ 470], 99.95th=[ 580],

| 99.99th=[ 956]

bw ( MiB/s): min= 80, max= 1293, per=100.00%, avg=1191.11, stdev=226.74, samples=49

iops : min=20542, max=331240, avg=304925.39, stdev=58045.55, samples=49

lat (nsec) : 500=99.92%, 750=0.06%, 1000=0.01%

lat (usec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=0.01%, 50=0.01%

lat (usec) : 100=0.01%, 250=0.01%, 750=0.01%

cpu : usr=14.27%, sys=84.27%, ctx=72548, majf=0, minf=195

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,7627063,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

Run status group 0 (all jobs):

READ: bw=10.9GiB/s (11.7GB/s), 10.9GiB/s-10.9GiB/s (11.7GB/s-11.7GB/s), io=326GiB (350GB), run=30001-30001msec

Run status group 1 (all jobs):

WRITE: bw=5495MiB/s (5762MB/s), 5495MiB/s-5495MiB/s (5762MB/s-5762MB/s), io=161GiB (173GB), run=30001-30001msec

Run status group 2 (all jobs):

READ: bw=2266MiB/s (2376MB/s), 2266MiB/s-2266MiB/s (2376MB/s-2376MB/s), io=66.4GiB (71.3GB), run=30001-30001msec

Run status group 3 (all jobs):

WRITE: bw=1642MiB/s (1722MB/s), 1642MiB/s-1642MiB/s (1722MB/s-1722MB/s), io=48.1GiB (51.7GB), run=30001-30001msec

Run status group 4 (all jobs):

READ: bw=5672MiB/s (5948MB/s), 5672MiB/s-5672MiB/s (5948MB/s-5948MB/s), io=166GiB (178GB), run=30001-30001msec

Run status group 5 (all jobs):

WRITE: bw=4086MiB/s (4284MB/s), 4086MiB/s-4086MiB/s (4284MB/s-4284MB/s), io=160GiB (172GB), run=40100-40100msec

Run status group 6 (all jobs):

READ: bw=191MiB/s (200MB/s), 191MiB/s-191MiB/s (200MB/s-200MB/s), io=5720MiB (5997MB), run=30001-30001msec

Run status group 7 (all jobs):

WRITE: bw=993MiB/s (1041MB/s), 993MiB/s-993MiB/s (1041MB/s-1041MB/s), io=29.1GiB (31.2GB), run=30001-30001msec

Without buffering (direct I/O) and with end_fsync:

root@zephir:~# fio --filename /dev/optane/benchmark --ioengine libaio --group_reporting --time_based --runtime 30s --direct 1 --buffered 0 --end_fsync 1 --size 20G --stonewall --name read-SEQ1T8 --rw read --iodepth 1 --numjobs 8 --bs 1M --name write-SEQ1T8 --rw write --iodepth 1 --numjobs 8 --bs 1M --name read-SEQ1T1 --rw read --iodepth 1 --numjobs 1 --bs 1M --name write-SEQ1T1 --rw write --iodepth 1 --numjobs 1 --bs 1M --name read-RND4KQ1T8 --rw randread --iodepth 1 --numjobs 8 --bs 4k --name write-RND4KQ1T8 --rw randwrite --iodepth 1 --numjobs 8 --bs 4k --name read-RND4KQ1T1 --rw randread --iodepth 1 --numjobs 1 --bs 4k --name write-RND4KQ1T1 --rw randwrite --iodepth 1 --numjobs 1 --bs 4k

read-SEQ1T8: (g=0): rw=read, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

...

write-SEQ1T8: (g=1): rw=write, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

...

read-SEQ1T1: (g=2): rw=read, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

write-SEQ1T1: (g=3): rw=write, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=libaio, iodepth=1

read-RND4KQ1T8: (g=4): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

...

write-RND4KQ1T8: (g=5): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

...

read-RND4KQ1T1: (g=6): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

write-RND4KQ1T1: (g=7): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=1

fio-3.25

Starting 36 processes

Jobs: 1 (f=1): [_(35),w(1)][77.3%][w=205MiB/s][w=52.5k IOPS][eta 01m:11s]

read-SEQ1T8: (groupid=0, jobs=8): err= 0: pid=989388: Thu Mar 9 22:51:26 2023

read: IOPS=6699, BW=6700MiB/s (7025MB/s)(196GiB/30001msec)

slat (usec): min=14, max=414, avg=15.92, stdev= 2.69

clat (usec): min=925, max=4845, avg=1177.52, stdev=65.58

lat (usec): min=940, max=4867, avg=1193.51, stdev=65.66

clat percentiles (usec):

| 1.00th=[ 1045], 5.00th=[ 1074], 10.00th=[ 1106], 20.00th=[ 1123],

| 30.00th=[ 1139], 40.00th=[ 1156], 50.00th=[ 1172], 60.00th=[ 1188],

| 70.00th=[ 1205], 80.00th=[ 1221], 90.00th=[ 1254], 95.00th=[ 1270],

| 99.00th=[ 1319], 99.50th=[ 1352], 99.90th=[ 1401], 99.95th=[ 1434],

| 99.99th=[ 1680]

bw ( MiB/s): min= 6535, max= 6748, per=100.00%, avg=6700.86, stdev= 4.33, samples=472

iops : min= 6531, max= 6748, avg=6700.42, stdev= 4.39, samples=472

lat (usec) : 1000=0.12%

lat (msec) : 2=99.87%, 4=0.01%, 10=0.01%

cpu : usr=0.12%, sys=1.71%, ctx=201027, majf=0, minf=2140

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=200998,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-SEQ1T8: (groupid=1, jobs=8): err= 0: pid=989665: Thu Mar 9 22:51:26 2023

write: IOPS=4475, BW=4476MiB/s (4693MB/s)(131GiB/30001msec); 0 zone resets

slat (usec): min=14, max=148, avg=19.69, stdev= 3.11

clat (usec): min=1230, max=6084, avg=1767.04, stdev=80.72

lat (usec): min=1260, max=6103, avg=1786.80, stdev=80.70

clat percentiles (usec):

| 1.00th=[ 1582], 5.00th=[ 1647], 10.00th=[ 1663], 20.00th=[ 1696],

| 30.00th=[ 1729], 40.00th=[ 1745], 50.00th=[ 1762], 60.00th=[ 1795],

| 70.00th=[ 1811], 80.00th=[ 1827], 90.00th=[ 1860], 95.00th=[ 1893],

| 99.00th=[ 1942], 99.50th=[ 1958], 99.90th=[ 1991], 99.95th=[ 2008],

| 99.99th=[ 2073]

bw ( MiB/s): min= 4406, max= 4512, per=100.00%, avg=4476.60, stdev= 2.56, samples=472

iops : min= 4404, max= 4512, avg=4476.44, stdev= 2.59, samples=472

lat (msec) : 2=99.91%, 4=0.09%, 10=0.01%

cpu : usr=0.48%, sys=0.94%, ctx=134293, majf=0, minf=94

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,134271,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-SEQ1T1: (groupid=2, jobs=1): err= 0: pid=990020: Thu Mar 9 22:51:26 2023

read: IOPS=1317, BW=1317MiB/s (1381MB/s)(38.6GiB/30001msec)

slat (usec): min=13, max=270, avg=15.27, stdev= 2.39

clat (usec): min=676, max=3997, avg=743.43, stdev=28.74

lat (usec): min=691, max=4011, avg=758.76, stdev=28.89

clat percentiles (usec):

| 1.00th=[ 709], 5.00th=[ 709], 10.00th=[ 709], 20.00th=[ 717],

| 30.00th=[ 717], 40.00th=[ 734], 50.00th=[ 750], 60.00th=[ 758],

| 70.00th=[ 766], 80.00th=[ 766], 90.00th=[ 766], 95.00th=[ 775],

| 99.00th=[ 775], 99.50th=[ 775], 99.90th=[ 783], 99.95th=[ 783],

| 99.99th=[ 807]

bw ( MiB/s): min= 1308, max= 1322, per=100.00%, avg=1317.49, stdev= 2.94, samples=59

iops : min= 1308, max= 1322, avg=1317.42, stdev= 2.95, samples=59

lat (usec) : 750=49.78%, 1000=50.22%

lat (msec) : 4=0.01%

cpu : usr=0.13%, sys=2.58%, ctx=39519, majf=0, minf=266

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=39517,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-SEQ1T1: (groupid=3, jobs=1): err= 0: pid=990319: Thu Mar 9 22:51:26 2023

write: IOPS=1292, BW=1293MiB/s (1355MB/s)(37.9GiB/30001msec); 0 zone resets

slat (usec): min=14, max=104, avg=18.09, stdev= 2.33

clat (usec): min=693, max=3445, avg=755.09, stdev=31.96

lat (usec): min=709, max=3462, avg=773.24, stdev=32.12

clat percentiles (usec):

| 1.00th=[ 717], 5.00th=[ 717], 10.00th=[ 717], 20.00th=[ 725],

| 30.00th=[ 734], 40.00th=[ 750], 50.00th=[ 766], 60.00th=[ 775],

| 70.00th=[ 775], 80.00th=[ 775], 90.00th=[ 783], 95.00th=[ 783],

| 99.00th=[ 791], 99.50th=[ 799], 99.90th=[ 807], 99.95th=[ 807],

| 99.99th=[ 1123]

bw ( MiB/s): min= 1284, max= 1300, per=100.00%, avg=1292.81, stdev= 4.01, samples=59

iops : min= 1284, max= 1300, avg=1292.75, stdev= 4.00, samples=59

lat (usec) : 750=41.36%, 1000=58.63%

lat (msec) : 2=0.01%, 4=0.01%

cpu : usr=1.06%, sys=1.86%, ctx=38780, majf=0, minf=12

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,38778,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-RND4KQ1T8: (groupid=4, jobs=8): err= 0: pid=990661: Thu Mar 9 22:51:26 2023

read: IOPS=459k, BW=1794MiB/s (1881MB/s)(52.6GiB/30001msec)

slat (nsec): min=1683, max=3382.7k, avg=2578.99, stdev=1222.76

clat (nsec): min=331, max=6609.7k, avg=14388.62, stdev=5616.78

lat (usec): min=12, max=6673, avg=17.02, stdev= 5.78

clat percentiles (nsec):

| 1.00th=[11584], 5.00th=[12224], 10.00th=[12608], 20.00th=[13248],

| 30.00th=[13632], 40.00th=[14016], 50.00th=[14272], 60.00th=[14656],

| 70.00th=[14912], 80.00th=[15296], 90.00th=[16192], 95.00th=[17024],

| 99.00th=[18816], 99.50th=[19840], 99.90th=[22656], 99.95th=[23680],

| 99.99th=[30848]

bw ( MiB/s): min= 1640, max= 1851, per=100.00%, avg=1794.30, stdev= 5.31, samples=472

iops : min=419938, max=473940, avg=459340.64, stdev=1358.50, samples=472

lat (nsec) : 500=0.01%, 750=0.01%, 1000=0.01%

lat (usec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=99.54%, 50=0.45%

lat (usec) : 100=0.01%, 250=0.01%, 500=0.01%, 750=0.01%, 1000=0.01%

lat (msec) : 2=0.01%, 4=0.01%, 10=0.01%

cpu : usr=6.84%, sys=25.88%, ctx=13778322, majf=0, minf=3301

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=13778241,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-RND4KQ1T8: (groupid=5, jobs=8): err= 0: pid=990951: Thu Mar 9 22:51:26 2023

write: IOPS=467k, BW=1824MiB/s (1913MB/s)(53.4GiB/30001msec); 0 zone resets

slat (nsec): min=1693, max=2776.8k, avg=2535.63, stdev=1107.41

clat (nsec): min=320, max=2412.6k, avg=14121.85, stdev=2404.80

lat (usec): min=13, max=2778, avg=16.70, stdev= 2.78

clat percentiles (nsec):

| 1.00th=[11840], 5.00th=[11968], 10.00th=[12224], 20.00th=[12480],

| 30.00th=[12736], 40.00th=[13248], 50.00th=[13760], 60.00th=[14272],

| 70.00th=[14784], 80.00th=[15552], 90.00th=[16512], 95.00th=[17536],

| 99.00th=[20352], 99.50th=[21632], 99.90th=[24448], 99.95th=[25472],

| 99.99th=[29312]

bw ( MiB/s): min= 1635, max= 1931, per=100.00%, avg=1824.76, stdev= 8.13, samples=472

iops : min=418754, max=494564, avg=467138.64, stdev=2082.20, samples=472

lat (nsec) : 500=0.01%, 750=0.01%, 1000=0.01%

lat (usec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=98.86%, 50=1.13%

lat (usec) : 100=0.01%, 250=0.01%, 500=0.01%, 750=0.01%, 1000=0.01%

lat (msec) : 2=0.01%, 4=0.01%

cpu : usr=7.42%, sys=25.64%, ctx=14010481, majf=0, minf=2567

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,14010376,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

read-RND4KQ1T1: (groupid=6, jobs=1): err= 0: pid=991366: Thu Mar 9 22:51:26 2023

read: IOPS=54.1k, BW=211MiB/s (221MB/s)(6336MiB/30001msec)

slat (nsec): min=1773, max=151845, avg=1881.28, stdev=312.87

clat (nsec): min=381, max=2700.2k, avg=16237.90, stdev=3397.15

lat (usec): min=13, max=2702, avg=18.16, stdev= 3.42

clat percentiles (nsec):

| 1.00th=[15808], 5.00th=[16064], 10.00th=[16064], 20.00th=[16064],

| 30.00th=[16064], 40.00th=[16064], 50.00th=[16192], 60.00th=[16192],

| 70.00th=[16192], 80.00th=[16320], 90.00th=[16512], 95.00th=[16512],

| 99.00th=[17792], 99.50th=[19840], 99.90th=[21632], 99.95th=[22400],

| 99.99th=[28032]

bw ( KiB/s): min=214712, max=217936, per=100.00%, avg=216315.54, stdev=692.64, samples=59

iops : min=53678, max=54484, avg=54078.85, stdev=173.17, samples=59

lat (nsec) : 500=0.01%

lat (usec) : 10=0.01%, 20=99.52%, 50=0.48%, 100=0.01%, 250=0.01%

lat (usec) : 500=0.01%, 750=0.01%

lat (msec) : 2=0.01%, 4=0.01%

cpu : usr=5.80%, sys=18.99%, ctx=1622031, majf=0, minf=18

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=1622024,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

write-RND4KQ1T1: (groupid=7, jobs=1): err= 0: pid=991644: Thu Mar 9 22:51:26 2023

write: IOPS=52.5k, BW=205MiB/s (215MB/s)(6150MiB/30001msec); 0 zone resets

slat (nsec): min=1764, max=328297, avg=1923.79, stdev=443.31

clat (nsec): min=320, max=1777.2k, avg=16725.09, stdev=2220.79

lat (usec): min=13, max=1779, avg=18.69, stdev= 2.28

clat percentiles (nsec):

| 1.00th=[16320], 5.00th=[16512], 10.00th=[16512], 20.00th=[16512],

| 30.00th=[16512], 40.00th=[16512], 50.00th=[16512], 60.00th=[16768],

| 70.00th=[16768], 80.00th=[16768], 90.00th=[17024], 95.00th=[17024],

| 99.00th=[18304], 99.50th=[20096], 99.90th=[22400], 99.95th=[23424],

| 99.99th=[31872]

bw ( KiB/s): min=207696, max=211328, per=100.00%, avg=209943.73, stdev=738.13, samples=59

iops : min=51924, max=52832, avg=52485.93, stdev=184.53, samples=59

lat (nsec) : 500=0.01%

lat (usec) : 4=0.01%, 10=0.01%, 20=99.49%, 50=0.51%, 100=0.01%

lat (usec) : 250=0.01%, 500=0.01%, 750=0.01%, 1000=0.01%

lat (msec) : 2=0.01%

cpu : usr=6.49%, sys=18.02%, ctx=1574421, majf=0, minf=19

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,1574409,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=1

Run status group 0 (all jobs):

READ: bw=6700MiB/s (7025MB/s), 6700MiB/s-6700MiB/s (7025MB/s-7025MB/s), io=196GiB (211GB), run=30001-30001msec

Run status group 1 (all jobs):

WRITE: bw=4476MiB/s (4693MB/s), 4476MiB/s-4476MiB/s (4693MB/s-4693MB/s), io=131GiB (141GB), run=30001-30001msec

Run status group 2 (all jobs):

READ: bw=1317MiB/s (1381MB/s), 1317MiB/s-1317MiB/s (1381MB/s-1381MB/s), io=38.6GiB (41.4GB), run=30001-30001msec

Run status group 3 (all jobs):

WRITE: bw=1293MiB/s (1355MB/s), 1293MiB/s-1293MiB/s (1355MB/s-1355MB/s), io=37.9GiB (40.7GB), run=30001-30001msec

Run status group 4 (all jobs):

READ: bw=1794MiB/s (1881MB/s), 1794MiB/s-1794MiB/s (1881MB/s-1881MB/s), io=52.6GiB (56.4GB), run=30001-30001msec

Run status group 5 (all jobs):

WRITE: bw=1824MiB/s (1913MB/s), 1824MiB/s-1824MiB/s (1913MB/s-1913MB/s), io=53.4GiB (57.4GB), run=30001-30001msec

Run status group 6 (all jobs):

READ: bw=211MiB/s (221MB/s), 211MiB/s-211MiB/s (221MB/s-221MB/s), io=6336MiB (6644MB), run=30001-30001msec

Run status group 7 (all jobs):

WRITE: bw=205MiB/s (215MB/s), 205MiB/s-205MiB/s (215MB/s-215MB/s), io=6150MiB (6449MB), run=30001-30001msec

root@zephir:~#
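As a quick sanity check on the last job in the output above (the queue-depth-1 write run), the reported IOPS should sit just below the ceiling implied by the average latency, since only one I/O is in flight at a time. A minimal sketch using only numbers copied from that output; the 4 KiB block size is inferred from bandwidth divided by IOPS, not read from the job file:

```python
# Values copied from the fio output above (last job, IO depth 1).
avg_lat_usec = 18.69          # "lat (usec): ... avg=18.69"
reported_iops = 52485.93      # "iops: ... avg=52485.93"
reported_bw_kib = 209943.73   # "bw (KiB/s): ... avg=209943.73"

# Assumption: block size is inferred here, not taken from the job definition.
block_kib = reported_bw_kib / reported_iops

# With one I/O in flight, IOPS cannot exceed 1 / average latency.
iops_ceiling = 1_000_000 / avg_lat_usec
ratio = reported_iops / iops_ceiling

print(f"inferred block size: {block_kib:.2f} KiB")
print(f"IOPS ceiling from latency: {iops_ceiling:.0f}")
print(f"reported / ceiling: {ratio:.1%}")
```

The reported figure lands within a few percent of the ceiling, which is what you would expect when the run is latency-bound rather than bandwidth-bound.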

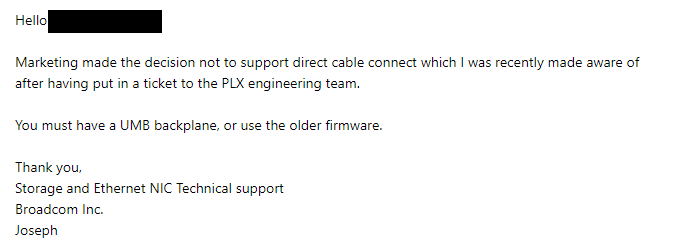

Update time!!

Time for everyone who has one of these to reach out and give them an earful.

It’s almost comical how Broadcom seems to really take every step with the intention of showing how you should not handle such issues.

Do they reimburse you for the expensive Broadcom cables they sold to be able to use directly connected SSDs?

Has this reached the level where a dedicated video about Broadcom is warranted? Maybe other models have been messed up as well, not only the three HBAs I could check (9400-8i8e, 9500-16i and P411W-32P).

Broadcom unfortunately still enjoys the reputation from their HBAs’ LSI days being recommended as the “gold standard”, which needs to change in my opinion since Broadcom obviously doesn’t care about the actual quality of their products.

I have my own “lulwut” support ticket running in parallel and… I can only think that whoever owns Broadcom must be winding them down or something… it’s the only thing that makes sense

or possibly they are going to roll them up to only sell to AMD, Nvidia, Intel, Dell, HP, Lenovo, Quanta and like 3-ish other companies. No unwashed masses of end users.

It’s the only thing that makes sense.

Hi, I wonder if anyone knows anything about some PEX 8717 PCIe Gen 3 switches I found while browsing the Taiwanese website Shopee. There are no markings on the board.

Search for “PEX 8717, PCI Express Gen 3 Switch, 16 Lanes, PCI-E Card”

The title doesn’t match the product. It seems to be a device that splits one PCIe x4 link into two PCIe x4 links.

I’ve never seen anything interesting (internal development e-waste from tech companies) show up on Shopee before, so I don’t recommend making a habit of browsing this site.

Each card has a PCIe x4 connector, which splits to 2x miniHDMI… I assume it’s miniHDMI. I assume each miniHDMI cable carries 4 PCIe lanes + the PCIe clock… but I’m not sure how well that works. I have no idea what sort of daughter board they would connect to; the seller doesn’t have any other hardware. It would take a considerable amount of reverse engineering to figure out the pinout and design an NVMe carrier board. There are 6 pieces available for 400 TWD (~14 USD) each. The whole idea here would be to build a Frankenstein setup, simply to try it; I know it’s silly to take the place where 6x NVMe drives would go and turn it into 12x. I’d use some M.2 to PCIe x4 adapters, install these PCIe switches, and then run cables to the custom daughter boards.

Here’s an example of a MiniHDMI used on a similar device.

Search for “PCIE 1x轉Mini HDMI接口 HP1A”

I must admit, I’m slightly new to this, but what’s a UMB backplane and why can’t I find anything about it on Google?

A summary of working HBA + cable + firmware combinations would be good; I’ve either missed it in my skimming of the past 180 messages or it’s been an evolving conclusion.

Thank you for all the work you’ve done to get this far!

I saw this on wccftech:

21 m.2 cards via gen 4 x16.

Has anyone seen a PCIe Gen4 active Switch U.2/U.3 HBA where the chipset is NOT from Broadcom?

I’ve got a 3258p-32i/e; it’s supposed to do x1, x2, x4 or x8 wide NVMe connections and connects to the host via a Gen4 x16 link.

I was looking for a pure PCIe Switch AIC; I only have V1/V2 Icy Dock U.2 backplanes, which unfortunately don’t work with Tri-Mode HBAs.

Tom’s Hardware quoted the AIC as having “an average read and write access latency of 79us and 52us, respectively.” Definitely not something to put Optanes onto.

How would that compare to a normal M.2 slot?

It would be great for throughput.

I have been thinking about this card with 21 Transcend 220S 2TB drives. This SSD is cheap (PCIe3) and has great durability at 4.4 PBW per SSD. That would be 92.4 PBW (92,400 TBW), making it suitable as swap for virtual machines and for scripts that write a lot of temporary data. I could really use it as a hard drive this way. Total capacity would be 42TB. But I would probably start with a few and expand as I need more storage. ZFS already allows for RAID0 expansion. Add two HDDs as backup that are mostly spun down, and it would be great for power efficiency too.

These SSDs are 119 euro each, making the 42TB array cost 2499 euro. Doable over time, particularly if I can start smaller with just a bunch of them and add more later. Not sure how much the card would cost, though.
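The arithmetic above can be reproduced with a minimal sketch. All figures are the ones quoted in this post (not measured), and summing endurance assumes writes are spread evenly across every drive in the stripe:

```python
# Per-drive figures as quoted in the post; nothing here is measured.
DRIVES = 21
CAPACITY_TB = 2        # capacity per drive
ENDURANCE_PBW = 4.4    # rated endurance per drive
PRICE_EUR = 119        # price per drive

def array_totals(drives=DRIVES):
    """Totals for a stripe of `drives` identical SSDs.

    Assumption: writes are spread evenly, so rated endurance adds up.
    """
    return {
        "capacity_tb": drives * CAPACITY_TB,
        "endurance_pbw": round(drives * ENDURANCE_PBW, 1),
        "cost_eur": drives * PRICE_EUR,
    }

totals = array_totals()
small_start = array_totals(4)  # hypothetical smaller starting point
print(totals)
print(small_start)
```

Calling `array_totals` with a smaller drive count shows what a partial build would look like before expanding the stripe.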

It probably has some PLX chip or similar, since the combined downstream bandwidth exceeds the x16 upstream bandwidth? It looks very interesting to me.

If you want Optane you will probably use it directly in the motherboard M.2 slots. Is Optane that interesting anyway? One of them as a ZFS separate LOG device would be great.

This card with cheap SSDs instead would be awesome for ultra fast bulk storage. 32 GB/s, 42 TB, high durability and limited cost could make it really attractive for enthusiasts like me.
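To put rough numbers on the upstream versus downstream question, here is a small sketch. It assumes every SSD gets a full Gen3 x4 link behind the switch (the actual lane allocation on the card is not confirmed in this thread) and counts only 128b/130b line encoding, not protocol overhead:

```python
# Usable bandwidth per lane in GB/s after 128b/130b encoding.
# PCIe 3.0 runs at 8 GT/s per lane, PCIe 4.0 at 16 GT/s per lane.
LANE_GBPS = {
    3: 8 * 128 / 130 / 8,
    4: 16 * 128 / 130 / 8,
}

def link_gbps(gen, lanes):
    """Approximate one-direction bandwidth of a PCIe link in GB/s."""
    return LANE_GBPS[gen] * lanes

upstream = link_gbps(4, 16)          # card to host: Gen4 x16
downstream = 21 * link_gbps(3, 4)    # assumption: 21 SSDs, each on Gen3 x4
ratio = downstream / upstream

print(f"upstream:   {upstream:.1f} GB/s")
print(f"downstream: {downstream:.1f} GB/s")
print(f"oversubscription: {ratio:.2f}x")
```

Under those assumptions the host link, not the drives, caps aggregate throughput, which is consistent with the roughly 32 GB/s figure mentioned above.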

I don’t think durability works like in your calculation. The total durability will be determined by the min(durability of single device) not sum(durability of single device). Especially if you plan on configuring all 21 M.2s in a RAID0 configuration - otherwise the config will not reach the expected 42TB of storage.

But what makes you think that in a RAID0 configuration the total bytes written would differ significantly between member drives?

Actually, this would apply to striping with ZFS, since ZFS can ‘load balance’ the writes, so that some drives that are faster would get more writes than drives that are slower. But with identical drives this shouldn’t cause too much of an issue even with ZFS.

Scratch that comment. I am thinking of durability as ‘expected time to failure’.

You are correct in that it is possible to write more data (TBW) to an array of disks than to any single drive (although I doubt you’ll get anywhere close to the numbers cited).

However, if you are concerned about durability and intend to use this array of drives as a single logical device, you’ll need to add redundancy in some form (RAID1, RAID5, RAIDZ1, …) which will reduce the usable capacity to less than the total capacity of the array.

Sure, but the MTBF is 2 million hours, which is 228 years (!). So what are the concerns about durability? Perhaps the specs are wrong; that is possible.
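For reference, the conversion above and what it implies for a 21-drive stripe can be sketched as follows. This assumes independent drives with a constant failure rate (a simple exponential model), which is a simplification and not something the spec sheet promises; MTBF is a population statistic, not a predicted lifetime for one drive:

```python
import math

HOURS_PER_YEAR = 24 * 365
MTBF_HOURS = 2_000_000   # per-drive figure quoted in the post
DRIVES = 21

mtbf_years = MTBF_HOURS / HOURS_PER_YEAR

# Assumption: independent drives, constant failure rate.
# A stripe fails when any single member fails, so the rates add up.
array_mtbf_years = mtbf_years / DRIVES

def p_any_failure(years, drives=DRIVES):
    """Probability that at least one drive fails within `years`."""
    return 1 - math.exp(-drives * years / mtbf_years)

five_year_risk = p_any_failure(5)

print(f"single drive MTBF: {mtbf_years:.0f} years")
print(f"21-drive stripe MTBF: {array_mtbf_years:.1f} years")
print(f"chance of at least one failure in 5 years: {five_year_risk:.0%}")
```

Under this model the per-drive number shrinks considerably once 21 drives have to survive together, which is why the backup plan matters for a RAID0 layout.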

Redundancy is not always needed. I intend to use two high capacity hard drives (18-20TB) in a stripe to act as backup. And perhaps some online/offsite backup that I sync monthly. With ZFS you can do incremental backups nicely and efficiently, also remotely.

RAID-Z1/2/3 does not work well with random IOPS. With RAID5, multiple drives can service individual I/Os in parallel; this is not possible with RAID-Z1/2/3. One I/O will touch all drives, and as such the IOPS is the same as that of a single drive, which is not the case for traditional RAID5.

Also, expansion for RAID-Z1/2/3 is still a work-in-progress (see: RAIDZ Expansion feature) and might take a year still. Expansion of striping has been available since ZFS version 1, I believe, and is considered very safe, since it does not shuffle any data around. It also works instantly (<1 sec).

I value any feedback you and others have on my plan. But… it is pretty cool, isn’t it? For not that much money you get ultra-enterprise grade performance, durability and capacity. The only downside is that the SSDs do not have PLP (power-loss protection), so they can corrupt themselves on improper shutdown. But since I would run RAID0, I will protect the data another way, using automated backups. Every morning the hard drives that act as backup would spin up and receive an incremental snapshot using a ZFS script, and then spin down again.

Super low-power, low noise; this seems like the holy grail for enthusiasts?!